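A Long Read: A Case-Study Breakdown of Game Usability Testing

By Maria Sifnioti

Some of you may have heard of Total Eclipse, our small game studio with a core team of five. Small as we are, we still consider usability testing very important.

In the past, three of our largest productions were covered by publishing agreements, and the publisher handled usability and playtesting for our games, including camera recordings, questionnaires, targeted player groups and so on. Our team then reviewed the results. I have to tell you that, especially for the usability sessions, watching those videos was heart-breaking most of the time, and not in a good way. That taught us how critical usability testing is, and how important it is to have people outside our core team do the testing.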

Usability-Part-1(from totaleclipsegames)

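In our studio we also asked friends and family to test our games, but in an informal way: they played while we stood behind them, watching and taking notes. Two years ago, however, we started self-publishing, so we no longer enjoy the usability testing a publisher provides.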

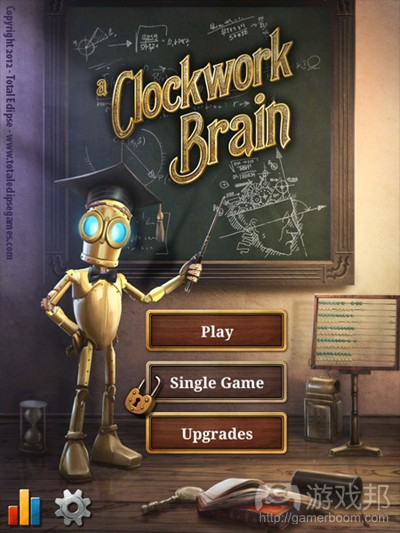

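We decided to design the usability sessions for our latest iOS game, A Clockwork Brain, ourselves.

Before this, none of us had any formal experience with usability testing. I had some experience in questionnaire design and in facilitating experiments, from my earlier work in university research.

The research, design and usability sessions took us a month to complete. We want to share what we learned, and we hope readers benefit from it. There is a lot to cover, so we have split the article into two parts.

This part mainly discusses the hardware and software we used.

The things to cover first are:

The equipment used (such as cameras and device cases).

The software for capturing video and sound.

Setting up the cameras and making the usability sled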

Room-Set-Up(from totaleclipsegames)

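We wanted to monitor both the players’ facial expressions and their actions in the game while they played. That requires two cameras: one facing the player and one facing the device, and the second is the tricky part.

We found several very practical approaches. The first, by Harry Brignull, shows how to build an iPhone usability sled out of acrylic for just £5. The second, by Nick Bowmast, uses a similar approach. The last, by Belen Barros Pena, fits many different mobile devices and is built from Meccano parts.

We decided to follow Brignull’s and Bowmast’s approach. The equipment required:

1. A hard, transparent protective case for the device (which one depends on the device you want to use; we had an iPod and an iPad). It costs about €5 on Amazon. The other approaches do not use a case, but we felt it would feel more natural and comfortable to the player.

2. A strip of acrylic (also called plexiglass), 26cm x 3cm x 3mm, at about €2.

3. Something to heat the acrylic. The safest option is a strip heater. We used a blowtorch instead. Remember that the heat must be even and the flame must not touch the acrylic directly, so take great care with a blowtorch. Nick Bowmast ingeniously uses a toaster!

4. A good-quality, lightweight webcam with a microphone that can be mounted on the acrylic strip. We used a Microsoft LifeCam HD (about €42). Its base comes off easily, after which the camera can be fixed to the strip.

5. A second webcam with a microphone, facing the player. We used a Logitech Orbit AF (€66) that we already had in the office.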

Tools-of-the-Trade(from totaleclipsegames)

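After heating the acrylic strip and bending it to the right angles, we drilled the mounting hole for the camera. Once the camera was securely mounted, the kit was thoroughly tested. Only when we were absolutely sure that the angle was right and the camera did not block the player’s view of the screen did we heat-attach the strip to the clear case.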

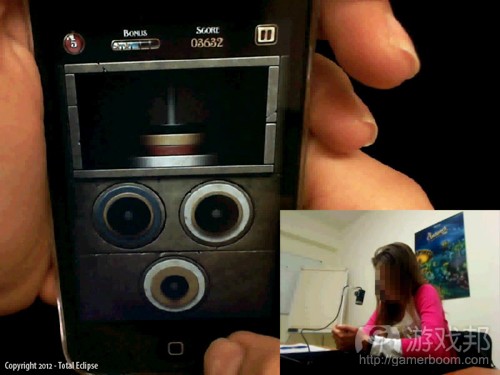

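A sled like this has several benefits. First, the distance between the camera and the device screen is always fixed. Once the camera’s focus is set, the video stays steady however the player holds the device. Second, the camera placement does not get in the player’s way during play, nor does it force the player into a particular posture. Players can hold the device freely, as they normally would, whether they are left- or right-handed. The added hard plastic case makes the device feel more natural; small gadgets like these are expected to have a plastic case. Finally, adding or removing the device is easy, because it only has to be detached from the plastic case.

One downside of this setup is the extra weight (about 80-90 g for the iPod/iPhone and about 100-110 g for the iPad). Over all the sessions, however, not one player complained of being tired or showed signs of fatigue. The weight breaks down as follows:

Case: about 18 g (iPod touch); about 30 g (iPad)

Camera: about 50 g

Acrylic strip: 10 g

Another downside is that each device needs its own sled (the acrylic strip is attached to the device case). You also have to know in advance which device you will test on; otherwise, when you test on a player’s own touchscreen phone, you will not have a case that fits it.

Now let’s look at how we set up the camera recording.

Setting up the camera recording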

Device-Sled-Close-Up(from totaleclipsegames)

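We wanted a formal usability setup in which the player is alone in a room with the game, undisturbed by our presence. Fortunately, our studio has a conference room that was just right for this. We put a PC there and connected it to the two cameras and to speakers.

The next step was to write down our recording requirements. Making such a list is very useful. It makes the whole procedure clearer and forces you to pin down what you do and do not need from the session. These were the ones we considered mandatory:

1. The video stream from the test room to the observation room must be smooth, stable and of high quality.

2. Both cameras are monitored at once (picture in picture).

3. Sound is recorded as well as video.

4. We can talk to the player in real time through microphones. We did not want to barge into the test room.

5. The software is reliable, so that technical problems do not disrupt the session.

We tried a number of free and cheap video and screen recording programs for Windows, such as Camtasia, Amcap and VLC. We should also mention Silverback (for Mac), which we did not test but which is well reviewed.

None of these met our requirements, so we had to turn to Morae by TechSmith. We were quite hesitant, because the software is expensive and completely outside our budget (€1,427). It is very powerful and feature-rich, but we did not need most of those features.

Morae consists of three components: Recorder, Observer and Manager. The Recorder records the feed from two cameras, or from one camera and a computer desktop. If needed, the recording can be enriched by tracking mouse clicks, handing out surveys, breaking the study into tasks and keeping a log. The Observer lets you watch the Recorder feed remotely and start or stop recordings. Recorder files are saved in Morae’s proprietary video format, which can be opened and edited with the Manager.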

Morae-Capture(from totaleclipsegames)

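We found that the Observer can save the live camera feed locally in .wmv format. We also found that turning off the sound in the live feed noticeably improves the streaming quality.

This was our final setup:

1. Install Morae Recorder on the PC in the test room and set it up properly.

2. Use the default template configuration, “Hardware or Mobile Software device study”.

3. Remove everything related to task logging, mouse effects, studies, markers and surveys. We needed none of it.

4. Run a Skype voice call between the PC in the test room and one in the observation room. This let us hear the players’ comments and talk to them when necessary.

5. Install Morae Observer on another PC. Stream without audio and save the video in .wmv format (the saved video does include sound).

This removed the need for Morae Manager. The Recorder always saves in the Morae format, which we could not view, but the quality of the Observer’s .wmv files was quite good and met our needs.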

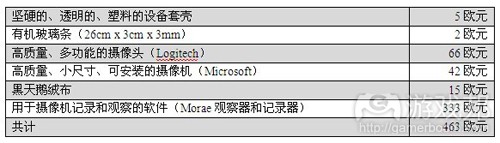

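TechSmith sells the components separately, so we could buy just the Observer and the Recorder, for €333. As you can see, that price is far more reasonable.

Once everything was installed, we ran a series of tests to find the best lighting conditions and camera settings. These will of course differ from room to room and camera to camera, but two things really helped: artificial light and a piece of black velvet. During the sessions we lit the test room with artificial light set to a specific level. The blinds stayed shut, day or night, so that we controlled the brightness of the room.

We also covered part of the recording area with a piece of black velvet. The cloth cost €15 but was well worth it. The dark background absorbs light and makes the device recordings much better. Our velvet measured 50cm x 50cm and was attached to the wooden table with Velcro; participants were asked to keep their movements within that area.

Conclusion

We hope this first part has been useful to readers. So far we have covered our choice of hardware and software. If you are not testing on a portable device as we were, and instead record from a desktop PC and a player-facing camera, things are much simpler. And if streaming the observation is not a big concern, you may find that free or low-cost software is exactly what you need.

Here are our equipment costs:

Equipment and costs

In the second part we will focus on the details of the sessions: the specifics of the game, participant recruitment, questionnaire design, session facilitation and the usability results.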

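Researching, designing and running the usability tests took one month from start to finish. Before this, none of us had any experience designing formal usability tests. I had some experience in questionnaire design and in facilitating experiments, mainly from my earlier university research work.

The first article explained our hardware and software choices and detailed the setup costs. This article looks at the game itself and explains the usability testing procedure.

In this article we discuss the following topics:

* Knowing your game.

* What kinds of players we wanted to invite, and how we recruited them.

* Working out what to test.

* Designing the first usability scenario.

* Using the iGEQ questionnaire and open-ended questions during testing.

Let’s begin!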

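Know your game

At first we had no idea where to start. After several days of reading, we found:

* A great deal of useful information on web usability.

* Many academic articles on game usability. Most struck us as too academic to apply in practice right away. There were exceptions, noted below.

* A number of post-mortems by game studios (or by usability companies they contracted) that explain game usability clearly.

We found that answering the following key questions helped with the later design work.

How far is your game from completion?

We decided to test a couple of months before Beta, by which time most of the artwork and mechanics were finished. There is no reason not to run usability tests at an earlier stage. In fact, we think that would help resolve disputes between competing designs. You need to know the stage of completion because what you have is what you can test.

With careful planning, you can schedule more than one usability session at different stages of production.

Who are your players?

Knowing your prospective players means knowing whom to invite to test your game, right? That is what most of the usability articles I read say, but I do not take it as gospel. I think you should also invite some anti-players if you want your game to have a broader reach.

A Clockwork Brain is a series of puzzle games aimed at almost everyone. For us, the controls must be quick to grasp, and even players who do not read the tutorial (many do not) must quickly understand what is going on. Because it is an iOS game, our target players own such devices and are likely to download our game. But we also brought in people who had never used a touchscreen phone. We also invited people who love hardcore games and dislike playing games on their touchscreen phones.

The feedback you get from people who have never played this kind of game, or any games at all, is very valuable and a real eye-opener.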

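How many players should you invite?

Nielsen says that five users is the best number for a usability session. The more users you add, the less each one teaches you. If you have 10-12 people, it is better to split them across two consecutive sessions: run the first, fix things, then run the second to test what you fixed. Others suggest 8-10 users per session. In any case, keep some extra people on hand, in particular:

* At least one person for each mock usability session

Before you put the usability design in front of your valuable players, test it. A mock session verifies that your time estimates are realistic, that the procedure has no holes and that everything runs smoothly. Obviously, you cannot use anyone who helped design the session. If the mock session goes to plan and your mock user is also a prospective player, you will get valuable feedback before you even start. That is what happened in our mock sessions: most of the feedback we got that day matched what we later got in the official runs.

* Enough people to cover no-shows

Someone may cancel, and that should not affect your session. Keep a list of people you can call on instead. Across our two usability sessions we had five players in the first and six in the second, plus two people for each mock run. We should stress that five players is a reasonable number only if you do not want to distinguish between types of player. If, for example, you want to compare the behaviour of adults and children, you need two groups in each session, one of children and one of adults. For that scenario, Nielsen suggests:

* 3-4 users from each category when testing two groups of users

* 3 users from each category when testing three or more groups (you always want at least three users, to cover the diversity of behaviour within a group).

How do you recruit players?

We recruited players through a call for Beta Testers on Facebook and Twitter, where we asked interested people to fill in a survey.

It is best to plan ahead and include questions that will also help you pick testers for future projects. You need the usual information such as age and gender, but also what technology the player has access to, how often they play games and on which devices. This also widens your pool of potential testers; only 40% of the people who completed our survey were in Greece. Finally, it gives you each player’s details before they arrive, so that they do not spend precious time on a demographic questionnaire and can focus on what matters: the game.

By the way, be sure to reward your players and give them an incentive. Each of our usability participants received two of our games and two shiny game posters.

What do we want to test?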

A clockwork Brain(from forums.toucharcade.com)

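This is a hard question that needs serious thought and planning, because it defines the structure of the usability session itself. For A Clockwork Brain, we found it helpful to describe the game as precisely as possible and start from there.

In summary, the game is:

* A free-to-download brain-training game with a steampunk look and feel.

* Platform: iOS, with in-app purchases.

* A Token system that rewards players.

* Eleven mini-games testing various skills (such as logic and arithmetic): 4 free, 6 bought through in-app purchases and 1 unlocked with Tokens.

* One-tap controls for every mini-game.

* Players must give as many correct answers as possible within one minute. If they do well, the levels get harder. If they answer wrongly, the tasks get easier.

* Two modes of play: Challenge (four mini-games in a row) and Single Game (play any game you like). Both award Tokens and submit scores to local and global leaderboards.

Just by doing this, we realised that we wanted to test two different aspects of the game:

1. The mini-games themselves. Does each mini-game’s mechanic work as planned? Are the individual instructions clear enough? Do players like or dislike the mini-game?

2. How well have we implemented the “just downloaded the app” experience? How does the game flow in those crucial first minutes?

Both aspects depend on first impressions, and they conflict with each other. A first-time player does not have immediate access to all the games or to Single Game mode. On the other hand, someone who has played all the mini-games is not the right person to test the “first experience with the game”. We needed at least two different usability sessions, each with its own fresh set of players.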

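First session: testing the mini-games

This session focuses on the usability and playability of each mini-game. We began by setting some tasks for each player:

1. The player plays each mini-game three times. This ensures that players have understood the mechanics and lets us see how they perform as they go from total novices to mere novices. For example, is there a mechanic that they only seem to grasp on the third play but that we want them to grasp immediately?

2. The player completes a questionnaire for each mini-game. After finishing a mini-game, the player fills in a questionnaire that evaluates their first impressions of it.

3. We hold a closing discussion with the player. Once the session is over, we talk about their overall experience.

Each task or requirement had far-reaching implications for the design of the session.

Order of the mini-games

Because the games are based on cognitive skills, we expected players to get very tired if they had to play each of the 11 mini-games three times. We had to make sure that players would not rate a mini-game negatively out of mental fatigue. To keep things balanced, the order in which the games are played changes from participant to participant. We have:

* Four core games (free, playable by everyone, high priority; a good first impression matters most).

* Three packs of two games each (premium games that must be purchased, medium priority).

* One bonus game that unlocks later (activated with Tokens, low priority).

We should stress that during the session all games are presented to the player as equal. We do not reveal the categories, in case that affects the player’s perception. Players are simply asked to play the mini-games as they appear in Single Game mode.

In practice, each player gets a different play order. To switch the game order in Single Game mode quickly, we built an in-game developer panel that lets us make such changes rapidly between sessions. We also added a quick “save/export” feature for player data, which lets us save each player’s detailed scores to a database file. Finally, to simplify the experience for the player, we hid some menu options that this session does not need (such as the Upgrades section and Challenge mode).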

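Designing the questionnaire

For obvious reasons, the questionnaire for each mini-game had to be short and easy to complete. We wanted to know whether players enjoyed themselves, whether they were challenged enough, whether they were immersed in the game and whether they liked the visuals. Lastly, we wanted some specific usability information from the players: did they understand when the level changed? When they answered correctly, did the game make that clear? Was the feedback sufficient?

The questionnaire has two parts. The first is a version of the iGEQ (the in-Game Experience Questionnaire by FUGA). The iGEQ is a shorter version of the 42-question Game Experience Questionnaire. The questions address different factors: competence, sensory and imaginative immersion, flow, tension, challenge, and negative and positive affect.

Following FUGA’s instructions, we translated the GEQ into Greek and then trimmed the iGEQ down to a mini-iGEQ. Instead of 14 questions, two per factor, we used 7, one per factor. From a research point of view this is less accurate, because more questions per factor make the results stronger. However, in all documented uses of the iGEQ, players rarely completed it more than three times. In our case players had to complete it 11 times, once per mini-game. We therefore chose the most representative question for each factor.

Closing discussion

The most important thing here was to let players talk and express how they felt about the game. It also gave us a chance to ask about issues we had observed during the session. We left this part largely unscripted, apart from a few set questions such as whether players had a favourite or least favourite mini-game. In hindsight, not having a fixed list of questions was not a good approach. Sometimes questions were asked in a leading way. In addition, one of our facilitators, perhaps inadvertently, influenced the discussion by mentioning how previous players had performed. For the second usability session we carefully prepared all the questions we wanted to ask and had a printout at hand during the discussion.

Conclusion

This is the end of the article; I hope you found it interesting. I have described our game, how we decided what to test, how we recruited players and how we designed a usability session around the mini-games.

Further reading: Article 1, Article 2 (compiled by GamerBoom; when reposting, please credit the source, or enquire via WeChat zhengjintiao)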

Game Usability Testing for Indies: It’s Easier than you Might Think! (Part 1)

by Maria Sifnioti

As some of you may know, Total Eclipse is a small studio, with a core team of five. Even though we’re small, we consider usability testing very important.

In the past, for three of our largest productions we had a publishing agreement. The publisher had been in charge of doing usability & beta testing for our games, with camera recordings, questionnaires, targeted player groups, the whole lot. We used to get the videos and watch them as a team afterwards. I’ve got to tell you, especially during the usability sessions, those videos were heart-breaking most of the time, and not in a good way. That taught us how important usability is and how crucial it is to test things outside our core team.

In our studio, we also tested our games with friends and family but in a much more informal setting – them playing, and us, behind their backs watching and keeping notes. However, for the last two years we’ve turned to self-publishing; we no longer have access to a publisher’s usability perks. As a result, for our latest iOS game, A Clockwork Brain, we decided to design the usability session from scratch.

Prior to this, none of us had any working experience with formal usability testing. I, myself, have had some experience in questionnaire design and facilitation of experiments, based on previous work in university research.

The research, design, and deployment of our usability session took one month from start to finish. We wanted to share what we learnt with you, and we hope you find it helpful. As there are a lot of things to talk about, we’ve split it into two articles.

This here, the first article, discusses the hardware and software setup that we used.

Things we’ll talk about in the first part are:

■Equipment used (i.e. camera, device case).

■Software for capturing video and sound.

Setting up cameras and making the usability sled

We wanted to monitor the players while playing: both their facial expressions as well as their actions in the game. This called for a two-camera setup. The first would be a camera that faced the player. The tricky part was to find a suitable second camera that would face the device.

We discovered a number of very useful approaches. The first one is by Harry Brignull (of “90 Percent of Everything”, a treasure trove of material on Usability!), which shows how to make an iPhone usability sled for £5 by using acrylic. The second one, by Nick Bowmast, uses a similar approach. The last, by Belen Barros Pena, has the capability to fit a number of different mobile devices and is constructed of Meccano parts.

We decided to do something similar to Brignull and Bowmast. Equipment required:

1. Hard protective crystal clear case for the device (depends on what you want to use, we had one for iPod and one for iPad). Costs around €5 on Amazon UK. While the other methods don’t use cases, we thought it would feel more natural to the player’s touch.

2. An acrylic (we call it plexiglass) strip (26cm x 3cm x 3mm, at around €2).

3. A way to heat the plexiglass. The safest way is by a strip heater. We, instead, used a blowtorch. Keep in mind that you need uniform heat, not direct flame, on the plexiglass, so use blowtorches with great care. Nick Bowmast ingeniously uses a toaster instead!

4. Good quality, light, webcam with microphone, capable of being mounted on the plexiglass strip. We used Microsoft LifeCam HD and were extremely happy (costs around €42). Its base can easily be removed and the camera attached to the strip.

5. A second webcam with microphone that would point towards the player. We already had a Logitech Orbit AF Webcam (€66) in the office so we used that one.

After heating and bending the strip to the desired angles, we drilled the mounting socket for the camera. Once the LifeCam was securely mounted, the kit was extensively tested. When it became absolutely certain that the angle was correct and the LifeCam did not obstruct the player’s view towards the screen, we heated/attached the plexiglass strip to the clear case.

There are a number of benefits in using such a sled setup. For starters, the distance between the camera and your device’s screen is always fixed. Once you set up your camera’s focus, you will observe that, no matter how the user holds the device, you will have a steady video stream. Secondly, the way the camera is placed does not obstruct the player during the game. It also does not force him to remain in a specified position. The player is free to use the device as he normally would, whether he is left- or right-handed. The added hard plastic case gives an even more natural feel for the device; gadgets like these are expected to have plastic cases. Finally, adding/removing the device from the sled is very easy, since it only requires detaching it from the plastic case.

An obvious downside to this setup is the extra weight (about 80-90 g for the iPod/iPhone and 100-110 g for iPad). Through the sessions, however, none of the players raised an issue or showed any sign of tiredness. Here is the distributed weight:

■Case: ~18 g (iPod touch), ~30 g (iPad)

■Camera: ~50 g

■Plexiglass strip: 10 g

Another downside is that you will need a separate sled setup for each different device that you use (plexiglass strip glued to device case). Also, such a setup will always require you to know what device you will use during usability; testing a game on a user’s touchphone when you don’t have a case for that phone would be a problem.

We will now look at how we set up the recording from the two cameras.

The odyssey of camera recording

We wanted to have a formal usability setup, where the player would be alone with the game in a room and would not be hindered by our presence. Fortunately, we have a conference room in our studio which was perfect for this. We put a high-spec desktop PC there, and connected it to the two cameras and speakers.

The following step was to set down requirements for our recordings. Making such a list of requirements is extremely useful. It makes you think more clearly about the whole procedure and forces you to start setting down fixed rules about what you want and what you don’t want from your usability session. This is what we considered mandatory for our session:

■A good quality of continuous video streaming from the usability room to where we watched.

■Monitor both cameras at once (picture in picture).

■Record voice as well as video.

■Communicate in real time with the player through microphones. We didn’t want to barge in!

■Reliable software – usability flow should not be disturbed due to technical reasons.

We tested a number of different free and cheap webcam and screen recording programs under Windows, such as Camtasia, Amcap, and VLC. Here we should also mention Silverback (for Mac), which we did not test but about which we read a lot of good reviews.

Some very useful articles that helped us:

■Cheap alternatives to Morae

■Record two camera feeds using only free software

None of that software fitted the requirements we had set so we went on to trial Morae by Techsmith. We were quite hesitant as it was a very expensive piece of software and completely outside our budget (€1,427). It is quite powerful, having a wide range of research features, but frankly we did not need most of them.

Morae is made up of 3 distinct components: Recorder, Observer, and Manager. The Recorder records the feed from the two cameras or from a camera and a computer desktop. The recording can be enhanced, if wished, by tracking mouse clicks, handing out surveys, breaking the study into tasks and recording a log. The Observer allows you to observe the Recorder feed remotely and also commence or stop recordings. The Recorder files are saved in Morae’s proprietary video format that can then be opened and edited with the Manager.

We found out that the Observer can save the live feed from the cameras locally in .wmv format. We also discovered that when you turn the sound off in the live feed, the streaming is of much higher quality.

So this was our resulting setup:

■Install Morae Recorder on the PC in the usability room and set it up properly.

■Use the default template configuration for “Hardware or Mobile Software device study”.

■Remove everything related to task logging/mouse effects/studies/markers and surveys. We did not need any of that.

■Have a Skype voice call between the computer in the usability room and one of ours. This allowed us to hear any player comments and to talk to them if needed.

■Install Morae Observer on another PC. Have audio-less streaming, and save the video in .wmv format (the saved video does include sound).

By doing this, we eliminated any direct need of the Morae Manager. The Recorder will always save in the Morae format, which we were not able to view, but the quality of the Observer .wmv was quite good for our needs, as it offered what we wanted.

Techsmith sells an Observer and Recorder bundle separate from the Manager bundle, at €333. You can see how this price range is much more affordable for us!

Once the setup was confirmed, we ran a series of test sessions in order to ascertain the optimal light conditions and camera settings. These of course will be different per room and camera, but two things really helped: artificial light and a piece of black velvet cloth. During usability, we lit the room with artificial light set to a specific level. The room’s blinds were always shut, no matter the time of day. This way, we were always in control of the light level.

We also covered part of the recording area with black velvet. This piece of cloth will set you back about €15 but it is worth it. It ensures that the dark background absorbs the light and will make your device recordings so much better. Our velvet was a 50cm x 50cm area, attached with Velcro strips on the wooden table. The participant was instructed to try and constrain any moves around that area.

Conclusion

We hope you’ve found the first part of our usability design useful. In this article we have explained how we decided on which hardware and software to use for our usability sessions. If you do not have a portable application like we did, and you want to record from a desktop and a user-facing camera, things will probably be a bit easier. If streaming observation is also not a big issue for you, you will find that one of the free/low-cost software options would probably be just what you need.

Until this point, the cost for our usability setup is:

Hard, clear, plastic case for mobile device: €5

Plexiglass strip (26cm x 3cm x 3mm): €2

High quality, versatile webcam (Logitech): €66

Good quality, small-size, mountable camera (Microsoft): €42

Black velvet cloth: €15

Software for camera recording & observation (Morae Observer+Recorder): €333

Total: €463
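The total is easy to re-check if you swap in your own equipment. Here is a minimal Python sketch that adds up the items listed above; the dictionary keys are shorthand labels of our own choosing, and the €1,427 figure is the Morae price quoted earlier.

```python
# Costs in euros, copied from the list above. The keys are shorthand labels.
SETUP_COSTS_EUR = {
    "clear plastic case": 5,
    "plexiglass strip": 2,
    "Logitech webcam (player-facing)": 66,
    "Microsoft LifeCam (device-facing)": 42,
    "black velvet cloth": 15,
    "Morae Observer + Recorder": 333,
}
FULL_MORAE_PRICE_EUR = 1427  # price quoted earlier in the article


def total_cost(costs):
    """Sum every line item of the setup."""
    return sum(costs.values())


software = SETUP_COSTS_EUR["Morae Observer + Recorder"]
total = total_cost(SETUP_COSTS_EUR)
hardware = total - software

print(f"Total: €{total}")
print(f"Hardware only: €{hardware}")
print(f"Saved versus the €{FULL_MORAE_PRICE_EUR} price: €{FULL_MORAE_PRICE_EUR - software}")
```

The software accounts for most of the budget, which is why the free and low-cost recording tools are worth a second look if you do not need live streaming.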

Stay tuned for Part 2, where we will focus on the particulars of usability sessions, such as game specifics, participant recruitment, questionnaire design, session facilitation and usability results.

This is the second in a three-part series of articles detailing how we designed and deployed usability testing for our latest iOS game, A Clockwork Brain.

The research, design, and deployment of usability testing took one month from start to finish. Prior to this, none of us had any experience with designing formal usability testing. I, myself, have had some experience in questionnaire design and facilitation of experiments, based on previous work in university research.

The first article explained our choice of hardware and software and detailed the set-up costs. This article examines the game itself and explains its usability testing procedure.

The following topics will be discussed:

* Knowing your game.

* What kind of players we wanted to invite and how we recruited them.

* Discovering what to test.

* Designing the first (of the two) usability scenarios.

* Using the iGEQ questionnaire and open-ended questions, during testing.

Let’s begin!

Know your game

We had no idea how to proceed in the beginning. After reading articles for days, we discovered:

* A ton of information on web usability that could be helpful.

* A lot of academic articles on games usability. Most of them were found to be too academic-oriented and with no immediate practical application in our case. Some exceptions did exist, which are referenced below.

* A handful of post-mortems with clear how-to’s on games usability by games studios themselves (or contracted usability companies).

We found that answering the following key questions proved very helpful with the subsequent design.

How far is your game from completion?

We decided to test a couple of months before Beta, so most artwork and mechanics were completed by then. There is no reason why one should not do a usability test at a much earlier stage. In fact we think this would be very beneficial in resolving disputes between different designs. You need to know the stage of completion simply because what you have is what you can test.

With careful planning you could organise more than one usability session at different stages of your production.

Who are your players?

Knowing your prospective players means knowing whom you should invite to play-test your game, right? Well, that is what I read in most usability articles but I do not take it as gospel. I think you should also invite some anti-players if you want your game to have a very broad reach.

A Clockwork Brain is a series of puzzle games that target pretty much everyone. It is imperative for us that the controls can be quickly understood and, even if someone does not read tutorials (many people don’t), she should quickly grasp what’s going on. Because it’s an iOS game, our targeted players should be ones that own such devices and thus would be also likely to download our game. However, we also brought in people who had never used a touchphone in their lives. We also invited people who we knew love hardcore games and dislike playing games on their touchphones.

The feedback you get from people who have no experience with your game’s genre, or with games in general, could be extremely helpful and a real eye-opener.

How many players should you invite?

Nielsen suggests that 5 users is the best number for a usability session. As one adds more and more users, he learns less and less. If you have 10-12 users, it is much better to split them in two consecutive usability sessions: do the first, fix stuff, do the second and test what you fixed. Others suggest 8-10 users per session. In any case, consider that you must have some extra users handy and in particular:

* At least one for each mock usability session(s)

Before you unleash the usability design onto your worth-their-weight-in-gold players, you should test it. The mock session will verify that the timings you have estimated are correct, the procedure has no holes, and that everything runs smoothly. Obviously don’t use someone who has worked in designing the usability session. If your mock session goes according to plan and your user is also a prospective user of your game, you will have valuable feedback before you even begin. That’s what happened with our mock sessions – most of the feedback we got on that day we also got on the official runs.

* People to invite in case of no show

Someone might cancel – this should not affect your session. You should have a list of people you can invite in their place. For our two usability sessions we had 5 players in the first, 6 in the second and two more for each mock run. We should stress here that 5 players is a good number if you don’t want to differentiate between the players. For example, if you wanted to test the behaviour of adults vs. the behaviour of children then you would need two groups for each session, one with children and one with adults. For this scenario, Nielsen suggests:

* 3-4 users from each category if testing two groups of users.

* 3 users from each category if testing three or more groups of users (you always want at least 3 users to ensure that you have covered the diversity of behaviour within the group).
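The diminishing returns behind the five-user advice can be sketched with the simple model Nielsen published with Landauer, in which each user independently uncovers a fixed share of the problems. The 31% per-user rate below is the average Nielsen reports for his own studies, not something we measured, so treat it as an assumption; your game may well differ.

```python
def share_of_problems_found(n_users, per_user_rate=0.31):
    """Expected share of usability problems seen by at least one of n_users.

    per_user_rate is the chance that a single user runs into a given problem.
    0.31 is Nielsen's reported average and is only an assumption here.
    """
    return 1 - (1 - per_user_rate) ** n_users


for n in (1, 3, 5, 8, 10, 12):
    print(f"{n:>2} users: {share_of_problems_found(n):.0%}")
```

Under that assumption the curve flattens quickly after five users, which is the argument for spending 10-12 users on two rounds with a fix in between rather than on one large round.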

How will you recruit your players?

We recruited players through a call for Beta Testers through Facebook and Twitter, where we tasked interested persons to complete a survey.

It’s best to plan forward and include questions that will help you select players for future projects, as well. You need to know typical things like age and gender, but also what access to technology a player has, how often he/she plays games and on which devices. This way you can also broaden your reach of possible testers; just 40% of those who completed our survey were located in Greece. Lastly, this allows you to have each player’s demographic info before he/she arrives at the session – so the player won’t spend precious time completing demographic questionnaires, and will instead focus on what matters: the game.

By the way, make sure that you reward the players, give them an incentive. In our case, each usability participant was rewarded with two of our games plus two shiny game posters.
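If the survey answers end up in a spreadsheet, picking a mixed group plus a reserve list for no-shows takes only a few lines. This is a rough sketch and not the process we followed: the `Respondent` fields, the three profile labels and the sample names are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    # Hypothetical fields; use whatever your own survey collects.
    name: str
    owns_ios_device: bool
    plays_games_weekly: bool


def profile(r):
    """Classify a respondent; the categories are illustrative only."""
    if not r.owns_ios_device:
        return "no_touch_device"
    if r.plays_games_weekly:
        return "target"
    return "rarely_plays"


def pick_players(respondents, per_profile, backups=1):
    """Return (invited, reserve), taking people in survey order per profile."""
    by_profile = {}
    for r in respondents:
        by_profile.setdefault(profile(r), []).append(r)
    invited, reserve = [], []
    for group in by_profile.values():
        invited.extend(group[:per_profile])
        reserve.extend(group[per_profile:per_profile + backups])
    return invited, reserve


# Made-up survey rows, for illustration only.
survey = [
    Respondent("A", True, True),
    Respondent("B", True, True),
    Respondent("C", True, True),
    Respondent("D", False, True),
    Respondent("E", True, False),
    Respondent("F", False, False),
]
invited, reserve = pick_players(survey, per_profile=2)
print([r.name for r in invited], [r.name for r in reserve])
```

Keeping the reserve list per profile means a cancellation can be covered by someone of the same kind, so the mix of target players and anti-players stays intact.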

What do you want to test?

This is a very hard question to answer and requires serious thought and planning because it will define the structure of the usability session itself. For A Clockwork Brain, we found it helpful to describe the game as concisely as possible and start from there. You can read the description here. In summary, it identifies:

* Free to download Brain-training game with a Steampunk look and feel.

* Platform: iOS with in-app purchases.

* A Token system that rewards players as they play.

* Eleven mini-games testing various skills (such as logic and arithmetic): 4 free, 6 bought through in-app purchases and one unlocked with Tokens.

* One-tap control for every mini-game.

* The players have to give as many correct answers as possible within a minute. If they do well, the levels become harder. If they answer wrongly the tasks become easier.

* Two modes of play: Challenge (4 mini-games in a row) and Single Game (play any game you want). Both give Tokens as rewards and submit scores to local & global Leaderboards.

Just by doing this we realised that we wanted to test two different aspects of the game:

1. The mini-games themselves. Does each mini-game mechanic work as planned? Are the individual instructions clear? Do players like, or dislike, the mini-game?

2. How well have we implemented the ‘just downloaded the app’ experience? How does the game flow in those first crucial minutes?

These aspects depend on first impressions and are conflicting with each other. A player, playing for the first time, would have no immediate access to all the games, or the Single Game mode. On the other hand, a person who has played all the mini-games and has had experience with the game would not be a good candidate for testing the ‘first experience with the game’. We needed at least two, differently designed, usability sessions with two different, fresh, sets of players.

1st Session: Testing the Mini-Games

This session focuses on the usability and playability of each mini-game. The first thing we did was to set some tasks for each player:

1. The player has to play each mini-game three times: This would ensure that players would have (hopefully) understood the mechanics and would also allow us to see how they perform from total novices to just novices. For example, is there a game mechanic that they seem to understand later, in the third play, that we’d want them to understand immediately?

2. The player has to complete a questionnaire for each mini-game: Immediately after playing the mini-game, the player should complete a questionnaire that evaluates their first impressions of the mini-game.

3. We should have a closing discussion with the player: Once the session is over, have a discussion about their overall experience.

Each task/requirement had further implications on designing the session.

Mini-games order

Because the games are based on cognitive skills, we thought they could be tiring for the player if he’s asked to play each mini-game 3 times and has 11 games to play. We had to ensure that the player will not rate a mini-game negatively because he is somehow mentally tired. In order to be balanced, the order with which games are played changes for each participant. We have:

* Four core games (free games, playable by all, high priority, good first impressions matter the most).

* Three packs of two games each (premium games, playable by those who purchase, medium priority).

* One bonus game that unlocks much later (activated with Tokens, low priority).

It should be stressed that during the session all games are presented as equal to the player. Any categorisation of the games is not revealed, in case it might somehow affect the player’s perception. The player is simply asked to play the mini-games as they appear in Single Game mode.

In reality, each player is assigned a different play order. To quickly switch the game order in Single Game mode we implemented an in-game developer’s panel that allowed us to make changes like this rapidly between sessions. We also implemented a quick ‘save/export’ player data feature, which allowed us to quickly save each player’s detailed scores in a database file. Lastly, to make the experience easier for the player, we hid some menu options that were not required for this session, such as the Upgrades section and Challenge modes.
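One simple way to give every participant a different order is to rotate the game list by a fixed step per participant. The sketch below only illustrates that idea and is not the code behind our developer’s panel; the game names are placeholders and the step of 2 is an arbitrary choice.

```python
MINI_GAMES = [f"game_{i}" for i in range(1, 12)]  # 11 placeholder names


def play_order(games, participant_index, step=2):
    """Rotate the list so each participant starts at a different game."""
    shift = (participant_index * step) % len(games)
    return games[shift:] + games[:shift]


for participant in range(5):
    order = play_order(MINI_GAMES, participant)
    print(participant, order[0], "...", order[-1])
```

Rotation guarantees that no game is always played last, when the player is most tired, but it keeps neighbouring games together. If carry-over between adjacent games is a concern, a seeded shuffle per participant is an alternative.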

Designing the questionnaire

For obvious reasons, the questionnaire for each mini-game had to be short and easy for the player to complete. We wanted to know if the player was having fun, if she had enough of a challenge, whether she was immersed in the game, if she liked the visuals. Lastly, we wanted to get information from the players on specific usability areas: Did they understand when the level changed? When they answered correctly, did the game make it clear enough? Was the feedback sufficient?

The questionnaire consists of two parts. The first part is a version of iGEQ (the in-Game Experience Questionnaire by FUGA). The iGEQ is a shorter version of the 42-question-long Game Experience Questionnaire. Each question gives weight to different factors, such as competence, sensory and imaginative immersion, flow, tension, challenge and negative/ positive affect.

We translated the GEQ into Greek as per FUGA’s instructions and then adapted the iGEQ to a mini-iGEQ. Instead of 14 questions, two per factor, we used 7; one for each factor. Research-wise, this is not extremely accurate as more questions for the same factor will give greater strength to the results. However, in all documented uses of the iGEQ, the players rarely completed it more than 3 times. In our case, the player had to complete 11 of those, as we needed one for each mini-game. As a result, we chose the most representative questions for each factor (in their Greek equivalent) and used those.

Here’s the full GEQ questionnaire translated into Greek along with the questions chosen for iGEQ and mini-iGEQ. Additionally we provide a sample of the resulting questionnaire in English and Greek, for one of the mini-games.
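With one question per factor, comparing mini-games comes down to averaging each factor across players. The following is a minimal sketch of that tabulation, not our actual analysis: the factor keys are shorthand for the seven factors listed above, the 0-4 answer scale is an assumption, and the responses are invented.

```python
from collections import defaultdict
from statistics import mean

# Shorthand keys for the seven factors named above.
FACTORS = (
    "competence", "immersion", "flow", "tension",
    "challenge", "negative_affect", "positive_affect",
)


def mean_scores(responses):
    """responses: iterable of (player, mini_game, {factor: score}) tuples.

    Returns {(mini_game, factor): mean score across players}.
    """
    buckets = defaultdict(list)
    for _player, game, answers in responses:
        for factor in FACTORS:
            buckets[(game, factor)].append(answers[factor])
    return {key: mean(values) for key, values in buckets.items()}


# Invented answers on an assumed 0-4 scale, for illustration only.
sample = [
    ("p1", "game_1", dict.fromkeys(FACTORS, 3)),
    ("p2", "game_1", dict.fromkeys(FACTORS, 4)),
    ("p1", "game_2", dict.fromkeys(FACTORS, 1)),
]
scores = mean_scores(sample)
print(scores[("game_1", "flow")], scores[("game_2", "tension")])
```

With only five or six players per session these averages are a prompt for the closing discussion rather than a statistical result.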

Closing Discussion

A big part of this was letting the players talk and express their feelings regarding the game. It was also an opportunity for us to ask questions on issues we observed during the session. We left that part pretty much unscripted, apart from having a few set questions such as whether they had any favourite/least favourite mini-games. In retrospect, not having a fixed list of questions to ask the player was not a good approach. Sometimes, questions were asked in a leading way. Additionally, one of our facilitators perhaps inadvertently influenced the discussion by referring to previous players’ performances in the game. For the second usability session we carefully created all the general questions we wanted to ask and had a printout during the discussion.

Conclusion

This marks the end of the second usability article; I hope you’ve found it interesting. In this article I have explained what our game is, how we decided which parts of it we wanted to test, how the players were recruited and how we designed the usability session focusing on the mini-games. The final part will examine the second usability session. We’ll also discuss the lessons we took away from the whole experience and, of course, present some results and design choices that we made due to the usability sessions.
