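A 10,000-word feature on how sound design adds value in game production (Part 1)

Article 1: 6 ways audio can enhance the game experience

Author: Michel Henein

“Sound does not simply add to the image; it multiplies its effect.” Akira Kurosawa

Introduction

Over the past few years, film sound has moved from 5.1/7.1 to high-channel-count and object-based sound formats (Dolby ATMOS, DTS Multi-Dimensional Audio and Auro Technologies’ Auro 3D). These formats let today’s filmmakers take “surround sound” to a level that greatly increases audience immersion. They add speakers all around the audience, including overhead speakers that emit “elevation cues”, so sound can pan in all three dimensions.

Games can also use elevation cues to extend audio into all three dimensions (editor's note: for example, over stereo headphones, without extra speakers). Digital filters can create a convincing 3D “effect” from a sound object’s XYZ position in game space, simulating the sound being heard from the corresponding point in a 3D sound field.

It is true that 3D audio technologies and solutions have existed for some time. Even so, most game developers today do not implement true 3D positional audio. Instead, they stick with standard stereo and 5.1 surround as the de facto standard for the vast majority of titles. Not all 3D audio solutions are alike, and genuinely compelling 3D audio usually needs substantial processing resources, which audio does not always get. Several trends now make this easier. Mobile devices increasingly have multi-core processors, and powerful next-generation consoles launch at the end of this year. New GPUs (e.g. AMD’s TrueAudio) also offer dedicated audio DSP resources. The time is therefore ripe for developers to adopt and standardize 3D audio solutions at scale across their cross-platform games.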


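Here is what 3D audio can offer game developers:

Elevation: placement above and below

Azimuth: placement in front, behind, and to the left and right

Distance: elevation and azimuth place a sound in the 3D sound field (the spherical sound field that surrounds you). Distance then pushes the sound outward in space to improve depth perception, for example through distance-cue processing or 3D room simulation.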
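To make the three parameters concrete, here is a minimal Python sketch. It derives azimuth, elevation and distance for a sound source from listener and source XYZ positions. It assumes a hypothetical axis convention: x right, y up, z forward, with the listener facing +z and no head rotation. Real engines and middleware each define their own conventions. A 3D audio renderer would pass values like these to its HRTF filters rather than play them directly.

```python
import math


def to_spherical(listener, source):
    """Return (azimuth_deg, elevation_deg, distance) of source relative to listener.

    Hypothetical convention: x = right, y = up, z = forward; azimuth 0 is
    straight ahead and positive to the right; elevation is positive above
    the listener's horizontal plane.
    """
    dx = source[0] - listener[0]
    dy = source[1] - listener[1]
    dz = source[2] - listener[2]
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    if distance == 0.0:
        # Source at the listener's position: direction is undefined.
        return 0.0, 0.0, 0.0
    azimuth = math.degrees(math.atan2(dx, dz))
    elevation = math.degrees(math.asin(dy / distance))
    return azimuth, elevation, distance
```

For instance, a source 30 m above and 40 m in front of the listener is 50 m away at about 36.9° elevation and 0° azimuth. Stereo or 5.1 panning cannot represent that height.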

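These parameters extend the sound field beyond what stereo, 5.1 and 7.1 (all 2D formats) can produce. For developers pursuing realism and immersion, 3D audio may be the “lowest-hanging fruit”.

Here are 6 ways developers can use 3D audio to enhance the game experience:

1. Stronger immersion in mobile games:

Small-screen games (on phones or tablets) can use 3D audio to create the illusion of a larger game world. A small screen may feel slightly disconnected from a spherical sound field. Even so, 3D audio can heighten the feeling of being “in the game world”.

Ear Games’ iOS title Ear Monsters is forward-thinking: it uses 3D audio rather than visuals to drive gameplay. Driving play with 3D sound cues extends the game world beyond the small screen. For example, the player taps toward where a sound came from in 3D space. If an attack is heard coming from above, the player taps the top of the screen.

2. Sound-centric games:

With 3D audio, sound-centric games can:

1) Help visually impaired people enjoy games. For example, Ear Monsters can be played without looking at what is happening on screen.

2) Tell interactive stories, using voice-over and sound effects placed in a 3D sound field to create an immersive storytelling experience.

3) Make horror games more immersive by using darkness and poor visibility to make players rely on 3D sound cues.

These are just three of the countless possibilities for sound-centric games.

3. Better situational awareness in 3D games (e.g. 3D FPS games)

3D audio can improve players’ situational awareness in FPS games by delivering sound cues from the correct direction. Take a typical modern combat FPS delivered in stereo, 5.1 or 7.1. If a sniper hidden high in a tower shoots a player on the ground, that player cannot hear that the shot came from above. With 3D audio, the sniper’s shot is heard from above.

4. Fewer UI graphics:

Sound can famously replace parts of the UI and declutter the display. Examples include a prompt when ammo runs out or a cue that reflects the player’s health. 3D audio can extend such cues by using space to carry extra information. For example, a cue placed above the player could signal that a rainstorm is coming.

5. Positional 3D audio for multiplayer voice chat:

3D audio makes possible a kind of “3D radio communication”, in which teammates’ voices come from their positions in the game. If a squad member is up on a hill, their radio messages come from above.

6. 3D audio enhances the VR experience:

Conventional stereo, 5.1 or 7.1 playback confines the sound field to a two-dimensional plane, which is disconnected from the visual field that VR offers. 3D audio solves this with a full 3D sound field that complements the stereoscopic 3D visual field. Combining a head-tracked headset (such as the Oculus Rift) with 3D audio lets players turn their heads to locate where sounds are coming from.

How can developers implement 3D audio in their games?

A game’s run-time sound engine usually relies on audio middleware such as FMOD or Wwise. Through it, developers can use 3D audio technologies from Dolby, DTS, GenAudio, Auro 3D and Iosono. Encourage your team to explore the available 3D audio solutions, so that your game’s audio goes beyond what stereo, 5.1 and 7.1 can offer.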

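Article 2: Good sound design can significantly improve a game’s immersion

Author: Caleb Bridge

“Immersion” has become a cliché. It is often just another buzzword for praising a game. Yet people rarely examine what immersion actually involves.

Like every other element of game design, audio must work with graphics and game mechanics to immerse players in gameplay experiences of every kind. It does this largely by conveying a great deal of detail to players, often without their noticing.

J White of Visceral Games, Martin Stig Andersen of Playdead and Thom Kellar of Freshtone Games are sound designers with ample experience in crafting such audio experiences.

It’s Mental

“If you create a sound loop or something that becomes annoying, the medium easily reveals itself. Once the game reveals its mechanics, the medium and the machinery behind them are ruined and the player is thrown out of the experience,” said Martin Stig Andersen, sound designer and composer on Limbo.

Limbo, released in 2010, was a critical success and a fan favorite, and it won many end-of-year awards.

The game was praised for its striking visuals. Part of its success, however, came from the sense of isolation and foreboding it created, largely through top-quality sound design.

Andersen teamed up with Limbo director Arnt Jensen after seeing the game’s first trailer. He felt his own distinctive kind of music could strengthen the experience. That music is electroacoustic, a non-commercial form funded almost entirely through grants and commissions.

“After watching the trailer, I was captivated by the boy’s expressions,” said Andersen. “It reminded me of the aesthetics of light and sound; it is recognizable and realistic, but at the same time abstract.”

“That is what I love about the way we use sound. We have slight references that focus on ambiguity, so it’s more about what the listener imagines than what I want to tell them.”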


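Many players remember moments in Limbo when what the boy was doing stirred a particular feeling in them. Yet Limbo never lets the player fully understand what is happening. Andersen achieved this by deliberately distorting the sounds of objects. This pushes players to think more about what is going on and how they are meant to react. The result is a degree of ambiguity that lets players “be there and make their own interpretation”.

“The stronger a sound’s identity, the more I would distort it,” Andersen said. “So I wouldn’t include sounds with strong associations. If we added something with a strong identity (editor's note: such as a voice or an animal), it would destroy the atmosphere. With that style, Limbo offers an audio and visual atmosphere that gets deep into players’ minds and makes them feel scared, worried or on edge.”

Limbo plays with horror themes throughout, and in that genre precise sound design is essential for eliciting emotion and getting inside the player’s head.

A classic example is Dead Space. J White is a LucasArts veteran and was sound design lead on Visceral Games’ Dead Space 2. He takes great pride in his team’s ability to do exactly that.

Discussing the sound of Dead Space 2, White said: “During production I would have meetings with people who came in and had never seen [the section] before. We’d be playing, and they would literally jump in their chairs. It genuinely frightened people. It may be a bit mean of me, but I took a lot of pleasure in that.”

The team put a lot of effort into working out how the game’s audio could get into the player’s mind, sometimes without the player even noticing.

“A fundamental thing people cannot help but respond to is the sound of the human voice and, more specifically, the sound of human suffering. It is an unavoidable, deep reaction. So one element we use in our soundscapes is the sound of people in misery. It may be deeply buried, but the human ear and mind are so attuned to human vocalizations that they respond even if it is only a sub-audible part of the overall sound design.”

The same approach works in smaller games, even iOS games, as it does in triple-A titles. For example, One Single Life on iOS gives the player’s character exactly one life to jump from one rooftop to another. Freshtone Games sound designer Thom Kellar wanted players to feel as if they were really standing on that roof, preparing to jump.

“I wanted to use lots of sounds that would make you feel on edge, so on the roof you hear wind and all sorts of noises, like birds fluttering and planes flying overhead,” he said. “But it always came back to the question ‘What does this make me feel?’ It might be the best, coolest or most wonderful sound, but if it didn’t contribute to the game’s emotion or atmosphere, unfortunately it had to be thrown out.”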

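Manipulation

“Manipulation” can be a dirty word, usually used to call out developers’ clumsy attempts to force emotional reactions from players. In reality, though, sound designers use their craft to manipulate players in new and different ways as an enhancement.

Many games use a rousing score to heighten the emotion of a moment. Music has its place, but emotionally affecting sound design does not have to lean on it.

“Early on we experimented with putting dramatic music over the top, but we felt it was emotionally far more effective to bring in the big score only toward the end, when players were at the very edge and had already established themselves in the game world,” said Kellar (editor's note: even though one of his initial roles was writing the game’s music).

As a result, One Single Life’s efficient and selective use of music gives it extra tension. Andersen pursued a similar feel in Limbo.

“[Jensen and I] rarely like using music as a tool to manipulate the player’s emotions, or to manipulate anything. We both feel that everything should be open to interpretation, and players should be able to project their own feelings and emotions into the experience,” he said. “When you allow for that space and at the same time create something captivating, it lets players let go of those feelings and emotions. So if they are scared, they will probably be more scared when there is no music to take them by the hand and tell them how to feel.”

This works because of Limbo’s particular aesthetic. Uncharted or Mass Effect, by contrast, would struggle to convey emotional peaks without music, because music is integral to the cinematic style they aim for.

Of course, this does not mean Limbo has no music at all. Its music is simply more abstract, like Andersen’s own work. “It is a personal interpretation of the boy and his journey through limbo. Instead of playing the action and emphasizing what is already there, we try to add another dimension. For me, it is really melancholy that this boy has been subjected to all this violence. He has become habituated to it, and there is a kind of forgiveness in the music,” he said. The music also juxtaposes actions and emotions. For example, “when you first come across the Gatling guns, there is divine music in the background, but if you added something like action music, it would become so one-dimensional.”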

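Dead Space 2 uses a more traditional mix of music and effects. Throughout the game, the apparition of Isaac Clarke’s ex-girlfriend Nicole haunts him. In later chapters the relationship changes, and the two reconcile. The audio around her appearances then shifts. At first it is abrupt and unsettling, because the player does not know what is coming. Later it tells the player that her presence is actually a helpful one.

According to White, the team created this shift by taking sound effects characteristic of her apparitions and using them differently in “ambient one-shots” (editor's note: effects that evoke a character or event). These one-shots began to play as the player got closer to an encounter with her.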
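The article does not describe how Visceral implemented these proximity-driven one-shots. As a hedged illustration only, the sketch below raises the per-update chance of firing a one-shot as the player approaches an encounter. The radii, the linear ramp and the cue names are all hypothetical tuning choices, not values from Dead Space 2.

```python
import random


def one_shot_chance(distance, start_radius=30.0, full_radius=5.0, max_chance=0.5):
    """Per-update chance that an ambient one-shot fires (hypothetical tuning)."""
    if distance >= start_radius:
        return 0.0
    if distance <= full_radius:
        return max_chance
    # Linear ramp: 0 at start_radius, rising to max_chance at full_radius.
    t = (start_radius - distance) / (start_radius - full_radius)
    return t * max_chance


def maybe_play_one_shot(distance, cues, rng):
    """Return the name of a cue to trigger on this update, or None."""
    if rng.random() < one_shot_chance(distance):
        return rng.choice(cues)
    return None


# Usage: call once per update with the distance to the next encounter.
rng = random.Random(42)
cue = maybe_play_one_shot(12.0, ["whisper", "breath", "static_hiss"], rng)
```

Passing the random generator in keeps the behaviour reproducible in tests. Capping the chance below 1.0 prevents the cues from firing on every update even at close range.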

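The mixture of music and effects ends up as an orchestrated experience. White gives an example. In one room the player fights off a phalanx of Necromorphs that had been chasing off government forces. Another apparition of Nicole follows.

“We have these competing things we want to pay off on,” he said. “We set up ambient sounds to give you the sense that you are following this massive battle, but at the same time you have a helping presence, Nicole, coming in. Getting all these elements to play together is really a matter of choreography, where you have the sense of the battle, the desertion, the quietness and the girlfriend.”

Sound design can also give players a sense of place, with game audio laying the foundation of the experience. Care over this base-level environmental audio is part of what makes a game great.

“We used loud footsteps to tell the audience that the environment is really silent,” said Andersen. “Of course you can’t have loud footsteps through the whole game. Their level is very important, so we added different parameters, such as how long the boy has been running; if he has been running for a certain amount of time, it starts to change. It needs constant variation, otherwise you would go crazy hearing those footsteps all the time.”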
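Andersen names running time as one parameter but does not say how the footsteps change. The sketch below therefore assumes two common techniques. First, it never plays the same sample twice in a row. Second, it eases the level down once a run lasts longer than a threshold. The class name, thresholds and dB values are hypothetical.

```python
import random


class FootstepPlayer:
    """Hypothetical footstep controller that varies sample choice and level."""

    def __init__(self, samples, rng, base_gain_db=0.0, fade_after_s=4.0,
                 fade_rate_db_per_s=1.5, min_gain_db=-9.0):
        self.samples = list(samples)
        self.rng = rng
        self.base_gain_db = base_gain_db
        self.fade_after_s = fade_after_s
        self.fade_rate_db_per_s = fade_rate_db_per_s
        self.min_gain_db = min_gain_db
        self.last = None

    def gain_db(self, running_time_s):
        """Full level at first, then ease down once the run exceeds fade_after_s."""
        extra = max(0.0, running_time_s - self.fade_after_s)
        return max(self.min_gain_db, self.base_gain_db - extra * self.fade_rate_db_per_s)

    def next_step(self, running_time_s):
        """Return (sample, gain_db); never picks the same sample twice in a row."""
        choices = [s for s in self.samples if s != self.last] or self.samples
        sample = self.rng.choice(choices)
        self.last = sample
        # Small random level jitter (+/- 1 dB) so repeats of a sample still differ.
        jitter_db = self.rng.uniform(-1.0, 1.0)
        return sample, self.gain_db(running_time_s) + jitter_db


player = FootstepPlayer(["step_01", "step_02", "step_03"], random.Random(3))
sample, gain = player.next_step(running_time_s=6.0)
```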


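Likewise, meticulous ambient sound design can create unique gameplay experiences. According to White, Dead Space 2 used its ambient sound to “foreshadow what is coming next. If the player backtracks to the same place after clearing the room or beating the boss, it sounds different. In the most flowery terms, the ambient sound in Dead Space 2 is almost a character in itself. It is an intrinsic part of the player’s experience and of the psychological experience of playing the game.”

Immersion

“A good game mechanic naturally immerses players. Pong has a great game mechanic with boops and beeps, and people are drawn into it because it is fun,” said White. “Today, as audio designers, we still score that core mechanic in a satisfying way, but we have a lot more subtlety available to us.”

White sees audio as an important element that adds to the overall experience. Part of a sound designer’s job is to enhance game mechanics and environments so that they immerse the player.

Andersen (editor's note: who has worked in film as well as games) offers his view of game audio’s immersive nature.

“Playing games is very different from watching films, because in films you only hear sounds when they matter to the viewer. In games you can often move above, below or around a sound; you approach it and pass it, and after passing it you still hear it as you move on.”

Ultimately, Andersen says, making or breaking immersion is a tightrope that some sound designers find hard to walk. “If you create something engaging that at the same time keeps the illusion alive, you have something very strong. But it is so difficult to do.”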

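Article 3: Planning sound design to make games a pleasure to listen to

Author: Craig Chapple

As developers look for new ways to immerse players, we analyse the latest tools and techniques available to sound designers.

Game audio has come a long way since the days of Super Mario, when much of the same music was condensed to provide extra sound effects.

Today, enormous musical scores are produced on a cinematic scale, and sound has become a core part of nearly every game.

Take the Develop Awards finalists. Simogo’s text adventure Device 6 relies heavily on immersive audio to draw players into the story and ratchet up tension during key parts of the narrative.

Bigger titles such as Battlefield 4 and Total War: Rome II rely on realistic sound effects to make players feel they are actually in a warzone rather than just playing a game.

“To me, sound is as important a feedback element as anything visual,” says Simogo founder Simon Flesser.

“I can’t for the life of me understand people who play games with the sound off. To me, that is just as absurd as turning off the screen and playing with sound only.”

Stafford Bawler is a Develop Award winner, an audio veteran and the man behind Monument Valley’s sound. He sums up the importance of such effects with an example from a large project he worked on earlier.

“I had just brought the first proper audio into the game, replacing all the earlier test content and placeholders with final audio that represented where the game was going. On first hearing it, the game’s lead designer told the audio programmer, ‘This feels like a real game; we’re not just building something any more.’”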

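Turning Up the Sound

New mobile and console hardware is helping studios implement new styles of audio. So are steadily improving audio technologies such as FMOD and Wwise (editor's note: which recently began offering free indie licences).

“In the past people had very limited tools, and technical constraints made it hard to create varied content,” says Matt McCamley, lead audio designer on Total War at Creative Assembly. “Now, as game technology evolves, game audio evolves with it, so we can better convey the game experience to players.”

Creative Assembly’s highly anticipated Alien: Isolation clearly shows how important audio is in games. The team used a range of new and unique techniques to evoke the unforgettable atmosphere of the original film and make players feel they are really there. Without its sound, the game would probably not achieve that effect.

Much of this is thanks to the new consoles and tools.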


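“With the extra processing power and frequently updated middleware, we can now use rich convolution reverb and new types of DSP, such as in-game HRTF/binaural processing, on the new generation of consoles. We are gradually moving beyond the hardware limitations,” says Cooper.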
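Binaural (HRTF) rendering and convolution reverb share the same core operation: convolving a dry signal with an impulse response. The sketch below renders a mono source to two ears by convolving it with a pair of left and right head-related impulse responses (HRIRs). The three-tap HRIRs are toy values. They model only an interaural time and level difference for a source on the listener's right. Measured HRIRs are much longer, and production engines use FFT-based partitioned convolution rather than this direct O(N·M) loop.

```python
def convolve(signal, impulse_response):
    """Direct-form linear convolution; output length is len(signal) + len(ir) - 1."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, x in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += x * h
    return out


def binauralize(mono, hrir_left, hrir_right):
    """Render a mono signal to (left, right) by convolving with an HRIR pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Toy HRIRs for a source on the right: the right ear hears it first and at full
# level; the left ear hears it two samples later at half level.
HRIR_RIGHT = [1.0, 0.0, 0.0]
HRIR_LEFT = [0.0, 0.0, 0.5]
left, right = binauralize([1.0, 0.0, 0.0], HRIR_LEFT, HRIR_RIGHT)
```

A convolution reverb uses the same `convolve` step with a room impulse response in place of the HRIRs.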

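Richard Beddow, another sound designer at CA and audio manager on Total War, says Rome II was the studio’s first project using the Wwise audio engine. Wwise gives sound designers tools for playing back sound assets in response to game events. It also offers shared resources such as asset containers and DSP settings. This means designers can set up new content themselves, and its visual GUI gives them what Beddow calls a more efficient workflow.

Bawler also recommends other tools such as Unity and Fabric. He says they helped him build ordered hierarchies and manage data trees in fine detail, which left more time for the creative side of audio.

Mobile

Creative Assembly experiments with sound on big-budget console and PC games, but these interesting techniques also have a home on mobile.

Simogo’s Flesser and composer Daniel Olsen faced a complex task in integrating audio into Device 6’s interactive story. The audio had to fit the player’s understanding of the story. It also had to avoid playing at the wrong moment and losing the player’s attention.

“We wanted to leave a lot to the player’s imagination, so deciding which parts of the text should be audible was a hard balancing act,” he explained.

“Creating a sense of space was our top priority. So we used footsteps to convey different materials, appropriate reverb to convey the size of rooms, and EQ on every sound to make sure players really feel these things are present in the moment.”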
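Reverb length is one of the main cues for room size. As a worked illustration, Sabine's formula estimates reverberation time as RT60 = 0.161·V / A. Here V is the room volume in cubic metres and A is the total absorption, the sum of each surface area times its absorption coefficient. The article does not say Simogo used this formula. The room dimensions and the uniform coefficient of 0.2 below are hypothetical.

```python
def sabine_rt60(volume_m3, surfaces):
    """Estimate RT60 (seconds) with Sabine's formula: 0.161 * V / A.

    surfaces: iterable of (area_m2, absorption_coefficient) pairs; A is the
    sum of area * coefficient in metric sabins.
    """
    absorption = sum(area * alpha for area, alpha in surfaces)
    if absorption <= 0:
        raise ValueError("total absorption must be positive")
    return 0.161 * volume_m3 / absorption


# Hypothetical rooms, every surface given the same absorption coefficient of 0.2.
small_room = sabine_rt60(4 * 5 * 3, [(2 * (4 * 5 + 4 * 3 + 5 * 3), 0.2)])
large_hall = sabine_rt60(20 * 30 * 10, [(2 * (20 * 30 + 20 * 10 + 30 * 10), 0.2)])
print(f"small room ~{small_room:.2f} s, large hall ~{large_hall:.2f} s")
```

The estimate is most reliable for fairly live rooms with low average absorption. It gives a reasonable starting point when choosing reverb presets for spaces of different sizes.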

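Audio Constraints

Time and budget constraints play a major role in the quality and variety of the audio a designer can deliver. Mobile, though, presents its own particular set of challenges.

Bawler says the main limitation of smartphones is the speaker itself. It usually plays in mono and is designed as an earpiece held against the face, or it ends up covered by the player’s hands.

“The Nintendo 3DS speakers have great HRTF playback. I wish more phones had that,” he says.

“You also have to deal with people playing mobile games anywhere, often in noisy environments, or not wanting to disturb others. When I was riding the London Underground while working on Monument Valley, about ten people around me would be playing on their phones. Part of me was dismayed that they were playing in silence, but I was also grateful they weren’t blasting harsh sound.”

Flesser adds that getting people to play mobile games with the sound on is one of developers’ biggest challenges. If sound is core to the experience, he recommends asking players to turn it on early. He also says designers should make sure the audio still runs on older devices.

“For some reason people tend to play with the sound off, but many puzzles in Device 6 require players to listen for audio cues,” he says.

“We used a low-pass filter, but we turned it off in the version for older devices because it was too demanding for them. We wanted to keep a steady frame rate and performance so the experience stayed smooth.”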
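The article does not say which low-pass filter Device 6 used. The minimal sketch below assumes a simple one-pole filter. It shows the per-sample work involved and a bypass switch for older devices. `make_filter`, the 800 Hz cutoff and the `device_is_legacy` flag are hypothetical.

```python
import math


class OnePoleLowPass:
    """One-pole (6 dB/octave) low-pass filter: y[n] = y[n-1] + a * (x[n] - y[n-1])."""

    def __init__(self, cutoff_hz, sample_rate_hz, enabled=True):
        self.enabled = enabled
        # Standard coefficient for a one-pole smoother with the given cutoff.
        self.coeff = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate_hz)
        self.state = 0.0

    def process(self, samples):
        if not self.enabled:
            # Bypass: pass audio through untouched and skip the per-sample maths.
            return list(samples)
        out = []
        for x in samples:
            self.state += self.coeff * (x - self.state)
            out.append(self.state)
        return out


def make_filter(device_is_legacy, cutoff_hz=800.0, sample_rate_hz=44100.0):
    """Hypothetical policy: disable the filter on older devices to protect frame rate."""
    return OnePoleLowPass(cutoff_hz, sample_rate_hz, enabled=not device_is_legacy)
```

Deciding once, when the filter is created, keeps the per-buffer path free of extra device checks.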

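Field Recording

Many games stay small, but many others are becoming bigger and more ambitious, especially at the top end. As a result, field recording is used more widely. New sound libraries have also appeared because the sounds in older game libraries have dated.

Bawler suggests that today’s sound designers should judge, game by game, what it takes to create the soundscape players expect.

“It is essential to go out and gather the material you need, or to invest in a small library specific to your own game,” he says. “We used older libraries for years, but they mostly came from TV, film or radio. Those sounds were collected for linear media, not for the dynamic, realistic soundscapes we need for games.”

Beddow believes audio work need not become more complex as games grow. He does think, however, that greater scale makes audio harder to manage.

“You have to be smart,” he says. “To a large extent, it is about managing the scale of your assets and deploying the right resources, internally and externally. That is the best way to deliver the immersive, cinematic-standard soundscapes modern players expect.”

Looking ahead, more technologies, both new and old, will become central to game audio, such as binaural audio in VR games.

Bawler is excited about the future of procedural audio, especially given how widely procedural techniques are now used across the industry.

“For a long time I have been interested in combining simple components in interesting ways to create complex sounds and audio systems,” says Bawler.

“Procedural audio seems the best fit for that approach, but you will probably still need a sound designer to make sure it sounds right.”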

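Article 4: Music is a key element in deepening players’ immersion

Author: Sande Chen

Part 1: Lead audio designer Gina Zdanowicz discusses how video game music can enhance the player’s experience.

Music is an important part of entertainment media. As games evolve, game music relies more on its interplay with the visuals to set up scenes and stir players’ emotions. Game music should affect gameplay, and gameplay should affect the music. Players’ actions shape how the music responds and develops, just as the music shapes players’ decisions during play. This two-way relationship draws players deeper into the experience.

One of the biggest challenges in writing music for video games is understanding the limitations of the game’s audio engine while trying to deliver a seamless interactive experience.

Changes in tempo, genre and instrumentation, along with musical cues, can set the right mood for each area of a game. They also tell players what emotions to expect there.

Layered scoring is a technique in which different instruments play separate streams. Each stream is composed to stand on its own and work well with the visuals. Streams can also be mixed with one another, so the music changes as the gameplay changes.

Music that gradually builds can tell players that danger lies ahead. A boss fight calls for intense music with many layers, including full instrumentation and heavy percussion. After the boss is defeated, the music slows and instruments drop out, telling players that the danger has passed.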
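Layered scoring can be sketched as a mapping from a single gameplay intensity value to per-stem gains. In this hypothetical example, each stem fades in over a band just above its own threshold. Rising intensity on the approach to a boss adds strings, then brass, then percussion. Falling intensity after the boss dies strips them back out. The stem names, thresholds and fade width are assumptions, not taken from any particular game.

```python
# Hypothetical stems and the intensity (0..1) at which each starts fading in.
STEMS = [("pads", 0.0), ("strings", 0.25), ("brass", 0.5), ("percussion", 0.75)]


def stem_gains(intensity, stems, fade_width=0.25):
    """Map a 0..1 gameplay intensity to a linear gain (0..1) for each stem.

    A stem is silent below its threshold and reaches full gain
    fade_width above it; in between it ramps linearly.
    """
    gains = {}
    for name, threshold in stems:
        g = (intensity - threshold) / fade_width
        gains[name] = min(1.0, max(0.0, g))
    return gains


exploring = stem_gains(0.3, STEMS)    # pads plus a little strings
boss_fight = stem_gains(1.0, STEMS)   # every layer at full gain
```

All stems play in sync the whole time, and only their gains change. This is what lets the music respond to gameplay without audible jumps.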

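Super Mario Bros. speeds up the tempo to tell players that time is running out. This creates a sense of urgency that pushes them to finish the level before the timer expires. Dead Space 2 used ambient soundscapes and a large orchestra to create a terrifying atmosphere. It also set a string quartet against the full orchestra to portray the protagonist’s vulnerability.

Developers must handle both music and visuals carefully, because only by binding them closely can they create a powerful, believable environment. The game’s pacing matters as much as musical intensity: letting the player feel safe first sets up the next tense moment.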


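Looking at how far music in games has come makes its importance to the industry clear. Music is no longer confined to the background. Rhythm games such as Rock Band and Guitar Hero changed standard gameplay and turned music into the game itself.

Gina Zdanowicz is the founder of Seriallab Studios, lead audio designer at Mini Monster Media and a game audio instructor at Berkleemusic. Seriallab Studios is a full-service audio content provider that supplies custom music and sound effects to the video game industry. It has worked on the audio for more than 60 games.

Part 2: Some examples of diegetic and non-diegetic music in games.

Diegetic music, meaning music that originates within the game world, is an increasingly popular technique in the game industry. An epic score playing inside the game world might sound wonderful. In reality, though, a walk in a park or by the sea comes with no music unless you are wearing headphones. Although diegetic music comes from an object in the game, it can still set the mood of the environment.

Let’s look at some games that use diegetic music to heighten immersion.

Fallout 3 makes effective use of both diegetic and non-diegetic music. The player character wears a wrist-mounted computer called the Pip-Boy 3000. Radios scattered around the world play music and other broadcasts from in-game radio stations. Players who switch on the Pip-Boy 3000’s radio need to be careful, because the sound can attract NPCs. When the radio is switched off, non-diegetic background music plays.

BioShock combines diegetic music, non-diegetic music and the absence of music to set its atmosphere. In the opening, the player escapes a plane wreck to a lighthouse on a small rocky island. The absence of music in this scene conveys a desperate struggle to survive. Once the player enters the lighthouse, music gradually fades in. It comes from downstairs, and the player follows it down to find a radio in the bathysphere. Here the music plays two roles: it gives the player a reason to move forward, and it sets the game’s atmosphere.

In BioShock, diegetic music from scratchy ’60s-era record players effectively underscores the city’s decay. This music (editor's note: used in place of an orchestral background score) can be heard drifting from corners and through gaps in doors.

Grand Theft Auto is a classic example of diegetic music. Players can switch between radio stations while driving, and few people dislike music on the road.

A diegetic transition can carry music continuously through a game. The music starts as a diegetic broadcast from a radio or another in-game source. As the scene changes, it transitions to a non-diegetic version of the same song that continues to fill the environment.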
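A diegetic-to-non-diegetic transition is often done as a crossfade. The in-world version of the song, filtered and positioned at its source, fades into the full score version. The sketch below assumes an equal-power crossfade. This curve keeps perceived loudness roughly steady when the two versions are not phase-coherent. If the two versions are sample-aligned and nearly identical, a linear fade may sound more even. The function name and the choice of curve are illustrative.

```python
import math


def equal_power_crossfade(t):
    """Return (diegetic_gain, non_diegetic_gain) for crossfade progress t in [0, 1].

    cos/sin gains keep the summed power constant (g1**2 + g2**2 == 1), which
    avoids the roughly 3 dB mid-fade dip a linear fade gives uncorrelated signals.
    """
    t = min(1.0, max(0.0, t))
    return math.cos(t * math.pi / 2), math.sin(t * math.pi / 2)


# Usage: drive t from 0 to 1 over the transition, e.g. t = elapsed_s / fade_s.
radio_gain, score_gain = equal_power_crossfade(0.5)
```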

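The Legend of Zelda: Ocarina of Time begins with a diegetic version of its music, which guides the player through the maze of the Lost Woods. As the song grows louder, players know they are heading the right way. If they stray from the correct path, the volume drops to prompt them to change direction. Once players are familiar with the song, it becomes non-diegetic music in the environment.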
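The “follow the music” mechanic can be approximated by tying the song's gain to the player's distance from the correct exit. This is a hypothetical sketch, not Nintendo's implementation. The maximum distance and the gain floor are made-up tuning values.

```python
import math


def guide_volume(player_xy, correct_exit_xy, max_distance=40.0, floor=0.15):
    """Hypothetical 'follow the music' cue: louder the nearer the correct exit.

    Returns a linear gain in [floor, 1.0]. The floor keeps the song faintly
    audible when the player strays, so they can still tell it is there.
    """
    d = math.dist(player_xy, correct_exit_xy)
    closeness = max(0.0, 1.0 - d / max_distance)
    return floor + (1.0 - floor) * closeness
```

A real implementation might instead step the volume per clearing or per exit chosen. A continuous ramp is simply the easiest version to show.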

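As video games evolve, game music evolves with them. It drives ever more seamless interplay between visuals and sound and draws players deeper into the game world, right up until they hit pause.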

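Article 5: A study of how game audio affects players

Author: Raymond Usher

This study examines the importance of audio in computer games. To that end, we designed an experiment in which participants played the same games either with or without audio. We recorded their physiological responses to the games, including respiration, heart rate, respiration rate and skin temperature.

Analysis of heart rate and respiration rate showed that participants who played with audio reached higher levels of arousal (editor's note: heart rate and respiration rate combined). This demonstrates audio’s ability to increase players’ engagement with games.

Participants

Twelve students from Abertay University took part. All had experience playing computer games, but little or no experience with the games chosen for the study.

Materials

Testing took place in Abertay’s HIVE. Its 6-metre rear-projection screen, 7.1 surround sound and adjustable lighting provide an excellent environment for playing games.

Participants’ physiological responses were recorded with a physiological signal system, which can record a range of measures including heart rate, respiration rate and skin temperature.

Three games were chosen: Osmos, FlatOut: Ultimate Carnage, and Amnesia: The Dark Descent.

The games differ in theme (including racing and survival horror) and in type of play (including driving and first-person exploration).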

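Figure 1: Osmos (from gamasutra)

In Osmos, your single-celled organism is a bright blue sphere called a mote. The goal is to propel it into smaller motes (dark blue spheres) to absorb them. If you collide with a larger mote, you are absorbed and the game ends. Ejecting matter changes your trajectory: by action and reaction, your mote moves opposite to the direction of the ejected matter. Each ejection also makes your mote shrink.

Figure 2: FlatOut: Ultimate Carnage (from gamasutra)

FlatOut is a racing game built around destructive racing and a sophisticated physics engine. Races take place in a variety of locations, from busy streets to storm drains. The player races against 11 computer-controlled opponents over multiple laps of a track. Getting air and crashing into other cars earns large extra speed boosts.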

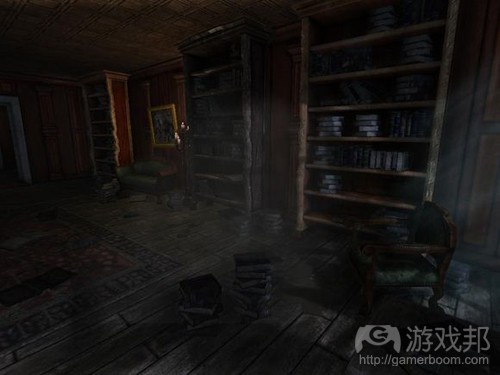

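Figure 3: Amnesia: The Dark Descent (from gamasutra)

In Amnesia: The Dark Descent, an unarmed protagonist explores a dark, foreboding castle, avoiding monsters and other obstacles and solving puzzles along the way. The game is played in first person. The player, as Daniel, progresses through the levels of Brennenburg Castle in search of its owner, Alexander. The journey starts in the Rainy Hall and leads down to the castle’s depths.

Procedure

As required by Abertay’s guidelines, testing began with participants signing a consent form. In it they agreed to take part, confirmed they understood what the experiment involved, and stated they had no outstanding questions. Participants were then shown how to wear the physiological signal system, which began recording once fitted.

Participants played Osmos, FlatOut and Amnesia in that order. Before each game, the physiological signal system was used to confirm that participants were calm. If a participant did not start from a resting state, it would be hard to tell what effect the game had on their physiological responses.

The audio and no-audio conditions alternated: if one participant played with audio, the next played without. All participants played the same levels and scenarios in all three games. In Osmos, participants played two levels in which the goal was to become the largest mote. In FlatOut, everyone used the first track (editor's note: a four-lap race) and the same car. In Amnesia, every participant’s task was to navigate through the game’s opening level.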

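Results

Game 1

The analysis focused on heart rate and respiration rate, the variables used to measure arousal. Participants were divided into two groups by test condition: audio and no audio.

Figure 4 compares the two groups’ heart rates during the first game.

Figure 4: Heart rate, audio group vs. no-audio group (from gamasutra)

At the start of the game, both groups had heart rates of around 75 bpm (editor's note: “bpm” means beats per minute). The audio group played for longer, and its heart rate trended upward. Both its maximum and its minimum were higher than the no-audio group’s (audio group: maximum 84 bpm, minimum 68 bpm; no-audio group: maximum 78 bpm, minimum 61 bpm).

Further analysis showed that this difference in heart rate was significant (Mann-Whitney test, p < 0.001).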
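The article reports Mann-Whitney tests but does not say what the unit of observation was: individual readings or per-participant summaries. For readers unfamiliar with the test, here is a minimal Python sketch. It computes the U statistic from tie-averaged ranks and a two-sided p-value from the normal approximation, with no continuity or tie correction. The inputs in the usage lines are toy numbers, not the study's data. For small samples or real analyses, a maintained implementation such as `scipy.stats.mannwhitneyu` is preferable.

```python
import math


def rank_with_ties(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def mann_whitney_u(a, b):
    """Return (U, two-sided p) via the normal approximation.

    No continuity or tie correction, so p is approximate, especially for small samples.
    """
    n1, n2 = len(a), len(b)
    ranks = rank_with_ties(list(a) + list(b))
    r1 = sum(ranks[:n1])
    u1 = r1 - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    mean_u = n1 * n2 / 2
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mean_u) / sd_u
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return u, p


# Toy example (not study data): two small sets of heart-rate readings.
u, p = mann_whitney_u([72, 74, 75, 77], [78, 80, 81, 84])
```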

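Figure 5 compares the two groups’ respiration rates during the game (editor's note: the audio group’s maximum and minimum respiration rates were 25 and 7 breaths per minute; the no-audio group’s were 21 and 2). Unlike heart rate, the difference in respiration rate was not significant (Mann-Whitney test, p = 0.182).

Figure 5: Respiration rate, audio group vs. no-audio group (from gamasutra)

Game 2

Figure 6 shows the heart rates of the audio and no-audio groups during the second game (FlatOut). There is a clear difference: the audio group’s heart rate was well above the no-audio group’s during play (editor's note: audio group maximum 91 bpm, minimum 57 bpm; no-audio group maximum 77 bpm, minimum 64 bpm). Further analysis showed that the difference between the groups was significant (editor's note: Mann-Whitney test, p < 0.001).

Figure 7 shows how the two groups’ respiration rates changed during play. Both fluctuated, and the audio group’s maximum and minimum were slightly higher than the no-audio group’s (editor's note: audio group maximum 24 and minimum 14 breaths per minute; no-audio group maximum 22 and minimum 12). Analysis found that the difference between the groups was significant (Mann-Whitney test, p < 0.001).

Figure 6: Heart rate comparison (from gamasutra)

Game 3

Figure 8 shows the heart rates of the two groups during the third game (editor's note: Amnesia: The Dark Descent). The audio group’s heart rate was higher than the no-audio group’s throughout. Maximum heart rates were 90 bpm (audio) and 77 bpm (no audio), and minimum heart rates were 77 bpm and 52 bpm. Further analysis found a significant difference between the groups (Mann-Whitney test, p < 0.001).

Figure 9 compares the two groups’ respiration rates during play. The audio group’s respiration rate (maximum 27, minimum 10 breaths per minute) was higher overall than the no-audio group’s (maximum 16, minimum 6). This difference was also significant (Mann-Whitney test, p < 0.001).

Figure 8: Heart rate, audio vs. no-audio groups during Game 3 (from gamasutra)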

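Conclusion

To summarize the results: during Game 1, the audio group’s heart rate was significantly higher than the no-audio group’s. Its respiration rate was slightly higher, but not significantly. During Game 2, both heart rate and respiration rate were significantly higher in the audio group.

Finally, during Game 3, the audio group’s heart rate and respiration rate were again significantly higher. These findings indicate that audio in games raises players’ arousal, as shown by the increase in physiological responses (heart rate and respiration rate).

Looking at individual games, Game 1 (Osmos) produced relatively low values in the audio group: a minimum heart rate of 68 bpm and a minimum respiration rate of 7 breaths per minute. Even so, these values were still higher than the no-audio group’s.

The most likely reason for these low values is that Osmos is a low-pressure game. The tasks participants had to complete were not very challenging and the audio is relaxing, so participants showed no tension.

In Game 2 (FlatOut), the audio group showed the highest heart rate of the study (91 bpm) and a slightly higher respiration rate than the no-audio group. These high values are likely because FlatOut is an exciting racing game, and its audio heightens the excitement (editor's note: e.g. roaring engines, crash effects and rock background music). Participants’ performance may also have affected their physiological responses. A player who wins may feel excited, raising heart and respiration rates. A player who loses may feel frustrated, which can also raise them.

Game 3 (Amnesia) best illustrates the effect of audio. The audio group’s heart rate and respiration rate were significantly higher than the no-audio group’s. The section played had no enemies or combat, only exploration, so the results suggest that audio increases players’ immersion in the game.

Across all three games, the audio group’s maximum heart rate and respiration rate were higher than the no-audio group’s (editor's note: audio group maximum heart rates of 84, 91 and 90 bpm and maximum respiration rates of 25, 24 and 27 breaths per minute). The no-audio group’s maximum heart rate was almost the same in all three games (78, 77 and 77 bpm). This difference in heart rate between the groups shows the effect of audio on players.

Further studies could explore the influence of game audio using the same methodology, but with a purpose-built game environment instead of commercial games. The advantage of a purpose-built environment is that almost everything can be controlled. Such an environment could be used to explore how aspects of the audio, such as its quality or realism, relate to players’ responses.

Articles 1 to 5 (compiled and translated by 游戏邦; please credit the source when reprinting, or contact WeChat zhengjintiao)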

Article 1: 6 Ways 3D Audio Can Expand Gaming Experiences

by Michel Henein

“Sound is that which does not simply add to, but multiplies the effect of the image.” Akira Kurosawa.

Intro:

Over the past few years, we’ve witnessed sound for cinema being transformed from 5.1/7.1 to the high channel-count and object-based sound formats available for today’s film-makers to extend ‘surround sound’ to the next level of immersion for audiences (i.e. Dolby ATMOS, DTS Multi-Dimensional Audio, and Auro Technologies’ Auro 3D). These formats add speakers all around, including above the listener to emit ‘elevation cues’, allowing sound to pan around in all three dimensions.

Games can also use elevation cues to further expand audio in all three dimensions (without the need for additional speakers by using stereo headphones, for example). This works through digital filters: HRTFs derived from dummy-head measurements or from models of how the head and outer ear filter sound. By leveraging a sound object’s XYZ position in game space, these filters can create a convincing 3D ‘effect’ that simulates the sound being heard at a corresponding point in a 3D sound-field.

Yes, 3D audio technologies and solutions have been around for quite some time but the majority of today’s game developers do not implement true 3D positional audio into their games; instead developers tend to stick with standard stereo and 5.1 surround sound as the de facto standard for the vast majority of titles being produced today. Keep in mind that not all 3D audio solutions are alike and really compelling 3D audio typically requires quite a lot of processing resources — precious resources that aren’t always made available for audio processing. With the explosion of multi-core processors for mobile gaming, powerful next-gen consoles arriving later this year, and dedicated audio DSP resources being offered on new GPUs (i.e. AMD’s TrueAudio), the conditions are ripe for the mass adoption and standardized use of compelling and powerful 3D audio solutions by game developers for their game titles across many different platforms.

Here is what 3D sound can provide game developers:

Elevation: placement above and to some degree, below

Azimuth: placement in front, behind, and to the sides

Distance: using elevation and azimuth to define a position for a sound inside a 3D sound-field (i.e. a spherical sound-field wrapped around you) the sound can be pushed out in space with distance to allow improved depth perception (using distance cue processing, 3D room simulation, for example.)
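Distance cue processing usually starts with level attenuation. The article does not prescribe a model. As one illustration, the sketch below follows OpenAL's inverse-distance-clamped formula: gain = ref / (ref + rolloff · (d − ref)), with d clamped to [ref, max]. With ref = 1 and rolloff = 1 this gives 1/d, about 6 dB quieter per doubling of distance. Real distance processing usually adds air-absorption filtering and a changing dry/reverb balance on top.

```python
import math


def inverse_distance_gain(distance, ref_distance=1.0, max_distance=100.0, rolloff=1.0):
    """Linear gain following OpenAL's AL_INVERSE_DISTANCE_CLAMPED model."""
    # Clamp so sources closer than ref_distance are not boosted and sources
    # beyond max_distance stop getting quieter.
    d = min(max(distance, ref_distance), max_distance)
    return ref_distance / (ref_distance + rolloff * (d - ref_distance))


def gain_to_db(gain):
    """Convert a linear amplitude gain to decibels."""
    return 20.0 * math.log10(gain)


# Doubling the distance from 1 m to 2 m halves the gain (about -6.02 dB).
drop_db = gain_to_db(inverse_distance_gain(2.0))
```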

These parameters expand the sound-field beyond what stereo, 5.1, and 7.1 can produce (which are 2D formats). For developers looking to offer a bit more realism and immersion for their players, 3D audio may be the ‘lowest-hanging fruit’ available to do so.

Here are 6 ways developers can use 3D audio to expand gaming experiences:

Enhanced immersion for mobile games:

There are tons of fun games on the small screen (for your mobile phone or tablet); however, 3D audio can be used to create the illusion of a larger game world through an immersive sound-field that envelops the player. While there may be a bit of a disconnect between a small screen and an enveloping, spherical sound-field, 3D audio for mobile gaming can heighten the feeling of being “in the game.”

Ear Monsters, by Ear Games, is a forward-thinking iOS game that uses 3D audio, rather than visuals, to drive gameplay. Using 3D auditory cues to drive gameplay extends the boundaries of the game world beyond the small screen of the mobile device. The player taps in the general direction of the sound heard in 3D space, for example tapping the top of the screen if an attack is heard coming from above.
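The tap-toward-the-sound interaction can be sketched as an angle comparison around the screen centre. This is a hypothetical reconstruction, not Ear Games' code. It assumes the sound's direction has already been projected onto the screen plane as an angle (0 = top, 90 = right, 180 = bottom). It also assumes that taps within 45 degrees of that angle count as correct.

```python
import math


def tap_angle_deg(tap_x, tap_y, width, height):
    """Angle of a tap around the screen centre: 0 = top, 90 = right, 180 = bottom.

    Screen y grows downwards, as on most touch APIs, so it is flipped here.
    """
    dx = tap_x - width / 2
    dy = height / 2 - tap_y
    return math.degrees(math.atan2(dx, dy)) % 360.0


def angular_difference(a, b):
    """Smallest absolute difference between two angles in degrees."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def tap_matches_sound(tap_x, tap_y, width, height, sound_angle_deg, tolerance_deg=45.0):
    """True if the tap lands within tolerance_deg of the sound's on-screen direction."""
    tap = tap_angle_deg(tap_x, tap_y, width, height)
    return angular_difference(tap, sound_angle_deg) <= tolerance_deg
```

A generous tolerance suits the "general direction" described above. Players localize sound less precisely than they can point on a touchscreen.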

Sound-centric games:

Using 3D audio, sound-centric games can:

Help the visually impaired to enjoy gaming. Ear Monsters allows players to play without needing to see what’s happening on screen.

Create interactive stories using voice over and sound effects positioned in a 3D sound-field for an immersive story-telling experience.

Use darkness and lack of visibility to increase reliance on 3D sound cues, making horror games more compelling and immersive.

This list barely scratches the surface with all the possibilities that sound-centric games can offer players.

Improve situational awareness in 3D games (i.e. 3D FPS):

3D audio can improve situational awareness in FPS games by relaying sound cues to the player from the correct directions. For example, in most modern combat FPS games that use stereo, 5.1, or 7.1 audio delivery, a player on the ground level may be shot at by a sniper perched high in a tower. In that case the sound of the sniper’s shot is not heard from above. With 3D audio, the sound of the sniper’s shot would be heard from above, as it should be.

Reduction of UI graphics:

It is well known that using sound to substitute for UI (i.e. running out of ammo or player health indication using sound prompts) can help remove display clutter. 3D sound can be used to extend the sound cues by using space to indicate more information (i.e. using a sound cue with elevation to indicate a rainstorm is coming.)

Positional 3D audio for multiplayer voice chat:

By leveraging 3D audio, a ‘3D radio communications’ system of sorts can be created, in which team members are heard based on their actual positions in the game (i.e. if a member of a squad is up on a hill, the radio communication from that player would be elevated.)

3D audio complements the VR experience:

Conventional stereo, 5.1, or 7.1 audio playback limits the sound field to a two-dimensional plane, creating a disconnect with the visual field offered by VR. 3D audio eliminates this problem by allowing a full 3D sound-field to complement the 3D stereoscopic visual field. Head-tracking (with the Oculus Rift, for example) coupled with 3D audio lets players move their heads around and hear sounds correctly all around them, including sounds from above.
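Head tracking works by re-expressing each source's direction relative to the current head orientation, so that sounds stay fixed in the world as the player turns. The minimal sketch below handles yaw only. It assumes hypothetical conventions: x = right, y = up, z = forward, and positive yaw means turning to the right. A full implementation would use the headset's complete orientation, including pitch and roll.

```python
import math


def head_relative_azimuth(listener_pos, head_yaw_deg, source_pos):
    """Azimuth of a source relative to where the head is facing, in (-180, 180].

    World azimuth is measured from +z towards +x; subtracting the head's yaw
    keeps the source fixed in the world while the head turns.
    """
    dx = source_pos[0] - listener_pos[0]
    dz = source_pos[2] - listener_pos[2]
    world_azimuth = math.degrees(math.atan2(dx, dz))
    rel = (world_azimuth - head_yaw_deg) % 360.0
    return rel - 360.0 if rel > 180.0 else rel


# A source straight ahead appears 90 degrees to the left after the player turns right.
left_of_head = head_relative_azimuth((0, 0, 0), 90.0, (0, 0, 10))
```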

How can developers implement 3D audio in their games?

Games typically use audio middleware solutions (FMOD and Wwise, for example) for their run-time sound engines, so developers can utilize 3D audio technologies made available from Dolby, DTS, GenAudio, Auro 3D, and Iosono (check with your audio middleware provider for available solutions.) Encourage your teams to explore any of the various 3D audio solutions available today to enhance the aural experience for your title(s) beyond what stereo, 5.1, and 7.1 can offer.

Article 2: Creating Audio That Matters

by Caleb Bridge

“Immersion” has become cliché. It’s often just another buzzword when talking about how great a game is, but it’s all too infrequent that those discussing games will actually break down the finer details of what that immersion entails.

Like all pieces of the puzzle that is game design, audio must work in concert with graphics and game mechanics to help immerse the player into gameplay experiences of all shapes and sizes through its ability to convey vast amounts of detail to the player, often without their knowing.

J White, Martin Stig Andersen, and Thom Kellar, of Visceral Games, Playdead, and Freshtone Games respectively, are three sound designers who have ample experience in creating such audio experiences.

It’s Mental

“It can be easy for the media to reveal itself if you’ve got a sound loop or something that becomes annoying. As soon as the game reveals the mechanics, the media and the machinery behind these things is ruined and the player is thrown out of the experience,” said Martin Stig Andersen, sound designer and composer on Limbo.

Limbo was a critical success and fan favorite in 2010, putting many end-of-year awards under its belt.

It was lauded for its striking visual aesthetic, but part of its success came from its ability to make the player feel a sense of isolation and foreboding that is largely the result of top quality sound design.

Andersen and Limbo’s director, Arnt Jensen, teamed up after Andersen saw the game’s initial trailer and felt his unique area of music (electroacoustic music, a non-commercial, almost entirely funding-based form) would add to the experience.

“I watched the trailer and I was really captivated by [the boy's] expressions,” said Andersen. “It reminded me of the aesthetics of light and sound; you have something recognizable and realistic, but at the same time it’s abstract.

“It’s the same as what I love about how we use sound. We have all these slight references that focus on ambiguity, so it’s more about what the listener imagines, rather than what I want to tell them.”

Many can think back to sections of Limbo where they were struck by a certain feeling or sense for what the boy was doing in that place. But one thing Limbo never allowed the player to do was fully understand what was happening. Andersen achieved this by intentionally distorting the sounds of objects in an attempt to make the player think more about what’s going on and how they’re meant to react. This gave it a level of ambiguity that allows the player to “be there and make their own interpretation.”

“The more identity the sounds had, the more I would distort them,” Andersen said. “So I wouldn’t include sounds that gave too strong associations. If we added something that had a strong identity like a voice or an animal, then it would almost destroy the atmosphere. So with that style, Limbo offered an audio and visual atmosphere that can really get into the player’s mind, and make them feel scared, worried or on edge.”

Limbo toys with horror themes throughout, but it is in that genre that exacting sound design is essential in eliciting player emotion and getting inside the player’s head.

One series praised for its ability to do this is Dead Space. J White — a LucasArts veteran who served as sound design lead at Visceral Games on Dead Space 2 — took great pride in the team’s ability to do just that.

“During the course of production, there were meetings I would have with people — they’d come in and have never seen [the section] before. We’d be playing, and they would literally jump in their chair. It genuinely frightened people. It might be kind of mean on my part, but I took a lot of pleasure in that,” said White, when discussing the effects of the sound in Dead Space 2.

Much effort was put into thinking about how they could use the game’s audio elements to enter the player’s mind, sometimes without them even noticing it.

“A fundamental thing that people cannot help but respond to is the sound of the human voice and, even more specifically, the sound of human suffering. It’s just an unavoidable, deep reaction that people have. So one of the elements that we’ll use as part of our soundscapes are the sounds of people in misery. It may be deeply buried, but the human ear and human mind are so attuned to human vocalizations that they’ll respond to it even if it’s just a sub-audible aspect of the overall sound design.”

This sentiment applies to smaller games — even iOS games — in the same way it does to triple-A releases. One Single Life on iOS, which literally gives the player’s character a single life to jump from a series of rooftops to another, is one such example. Thom Kellar, Freshtone Games’ sound designer, wanted to give players the sense that they were actually on that roof, preparing to jump.

“I wanted to use a lot of sounds that would make you feel a bit on edge, so there was wind and a lot of noises you’d hear on a rooftop like birds fluttering and planes flying overhead,” he said. “But it kept coming back to ‘What did that make me feel?’ and it might have been the best or coolest or most wonderful sound, but if it’s not contributing to the emotion or atmosphere of the game, unfortunately it had to go into the basket.”

Manipulation

“Manipulation” can be a dirty word, and is generally used to call out poor attempts by developers to force emotions or reactions from the player. But the reality is that audio designers try to use their craft to manipulate players in new and different ways as an enhancement.

Many games will use a rousing score to heighten the emotional state of a moment, and while music certainly has its place, emotionally affecting sound design in games doesn’t have to lean on it as a crutch.

“We experimented early on with putting dramatic music over the top, but we felt that emotionally, it was a lot more effective to only have that big score happening towards the end when players were getting to the very edge, and they’d established themselves in the world already,” said Kellar — despite one of his initial roles being to write music for the game.

As a result, One Single Life’s efficient and selective use of music gives it an extra sense of tension. Likewise, Andersen went for a similar feel with Limbo.

“[Jensen and I] rarely like [using] music as an instrument to manipulate the emotions of the player, or manipulate anything really. We both feel that everything should be open to interpretation, and people should be allowed to project their own feelings and emotions into the experience,” he said. “When you allow for that space, and at the same time create something that’s captivating and immerses the player, it lets them let go of those feelings and emotions. So if they’re scared it will probably make them more scared when there’s no music to take them by the hand and tell them how to feel.”

Of course a game like Limbo is aesthetically such that this is possible. Conversely, Uncharted or Mass Effect would be less likely to convey emotional and energetic peaks without the use of music, as it’s such an integral part of the style of the cinematic experiences they’re trying to create.

Of course, that doesn’t mean that there’s no music at all in Limbo, it’s just more abstract, like Andersen’s own music. “It’s the personal interpretation of this boy and his journey through limbo, and instead of playing the action and emphasizing what’s already there, we’re trying to add another dimension… For me, it’s really melancholy that this boy has been subjected to all this violence. It’s just the idea that he’s been habituated to it, and there’s a kind of forgiveness in the music,” he said. But it’s also used to help juxtapose actions and emotions, such as “when you come across the Gatling guns for the first time, there’s this divine music in the background, but if you add something like action music, it becomes so one-dimensional.”

Dead Space 2 featured a more traditional mix of music and effects. Throughout, the apparition of Isaac Clarke’s ex-girlfriend, Nicole, haunts him. In the later chapters, that relationship changes and after they have a reconciliation, the audio relating to her presence transitions from being abrupt and uncomfortable as the player is unaware of what’s coming, to one which indicates to the player that her presence is actually a helping one.

According to White, one of the ways this was portrayed was by incorporating sound effects that became characteristic of her apparitions, and using them differently in ‘ambient one-shots’ — effects that elicit thoughts of a character or event – that would begin to play the closer you came to an encounter with her.

The mixture of music and effects ends up being an orchestrated experience. White gives the example of a room where the player has fought off a phalanx of Necromorphs, who were chasing off government forces, which was followed by another apparition of Nicole.

“We have these competing things we want to pay off on,” he said. “We’ve got these ambient sounds devoted to giving you the sense that you’re following this massive battle, but at the same time, you’ve got this helping presence that’s Nicole coming in. It’s really a matter of choreography to get all those kinds of elements to play together, where you’ve got this sense of the battle, the desertion, the quietness and the girlfriend.”

Sound design can also manipulate the player’s mind to give them a sense of place, for which game audio helps set the foundation of the game experience. Taking care with such base-level environmental effects is part of what can help make a game great.

“We had loud footsteps to tell the audience that the environment is really silent,” said Andersen. “Of course you couldn’t have loud footsteps through the whole game. Their level is very important, so we added different parameters like how long [the boy] has been running, and if he’s been going for a certain amount of time it starts to change. It needs constant variation, otherwise you’d go crazy hearing those footsteps all the time.”

Likewise, such meticulous care with environmental sound design can help give unique gameplay experiences. According to White, Dead Space 2 used its ambient sound to “foreshadow what’s coming next. If the player backtracks to the same place after they’ve cleared the room or beat the boss, it sounds different. In the most flowery terms, the ambient sound in Dead Space 2 is almost a character in itself. It’s such an intrinsic part of the player’s experience, and the psychological experience of playing a game.”

Immersion

“A good game mechanic will naturally immerse players. Pong has a great game mechanic with boops and beeps, and people are drawn into that because it’s fun,” said White. “These days, as audio designers, we still score that core mechanic in a satisfying way, but we have a lot more subtlety available to us.”

White recognizes that audio is an important, additive element to the overall experience, and part of the role of sound designers is to help enhance those game mechanics and environments in order to immerse the player.

Andersen, who has worked on films in addition to games, gives some perspective to game audio’s immersive nature.

“When I’m playing games it’s so different from films, because there you only hear sounds when it’s important to the viewer. Often in games, you can go up and down or around a sound, you approach it and you pass it, but when you pass it you still hear it as you progress.”

Ultimately, says Andersen, walking the tightrope between sustaining and breaking immersion is tough for some sound designers. “If you create something that’s engaging but at the same time keeps the illusion alive then you’ve got something that’s very strong. But it’s so difficult to do.”
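Andersen's point that a player can approach a sound, pass it, and still hear it is what distance attenuation handles in a game engine. A minimal sketch, assuming an OpenAL-style "inverse distance clamped" rolloff with illustrative reference and maximum distances, computes the gain heard at several points along a path that passes a fixed emitter:

```python
import math


def inverse_distance_clamped(distance, ref_dist=1.0, max_dist=50.0, rolloff=1.0):
    """OpenAL-style inverse-distance-clamped gain (1.0 at or inside ref_dist)."""
    d = min(max(distance, ref_dist), max_dist)
    return ref_dist / (ref_dist + rolloff * (d - ref_dist))


def gains_along_path(emitter, path):
    """Gain heard at each listener position along a path past a fixed emitter."""
    return [inverse_distance_clamped(math.dist(emitter, p)) for p in path]


# Listener walks along y = 2 m, passing an emitter at the origin.
path = [(x, 2.0) for x in range(-10, 11, 5)]
for pos, g in zip(path, gains_along_path((0.0, 0.0), path)):
    print(pos, round(g, 3))
```

With these parameters the gain is simply 1/d, so the loop prints roughly 0.098, 0.186, 0.5, 0.186 and 0.098: the sound peaks at the closest point and fades on either side without dropping to zero. A fuller implementation would also pan the sound according to its direction.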

Article 3. Aural nirvana: Bringing outstanding sound to games

By Craig Chapple

As developers look for new ways to immerse players, we analyse the latest tools and techniques open to sound designers

Game audio has come a long way since the early days of Super Mario, when much of the same music was condensed to provide extra sound effects.

Film-scale musical scores are now commonplace, and sound is fast becoming central to all kinds of games.

Take our Develop Awards finalists. Simogo’s text-based adventure title Device 6 heavily relies on its immersive audio to engage the player with the story and ratchet up the tension during key parts of the narrative.

And the bigger titles like Battlefield 4 and Total War: Rome II rely on realistic sound effects to make the player actually feel like they’re in a warzone, and not just playing a game.

“To me, sound is as important a factor for feedback as anything visual,” says Simogo founder Simon Flesser.

“I can’t for my life understand people that say they play games with sound turned off. To me, that’d be just as absurd as turning off the screen and playing with sound only.”

Develop Award winner, audio veteran and the man behind Monument Valley’s sounds, Stafford Bawler, sums up the importance of such effects with an example from a large-scale project he’d previously worked on.

“I’d just delivered the first proper audio build to the game, replacing all the early tests/placeholders with first-pass final audio that represented where the game was going in terms of audio,” he reminisces. “On hearing this for the first time, the game’s lead designer was speaking with the audio coder and said ‘It feels like a proper game now, we’re no longer just making builds’.”

Sharpening sounds

New hardware for both mobile and console, as well as a number of constantly refreshed audio technologies such as FMOD and Wwise – which recently opened up new and free indie licences – are also helping studios record and implement new styles of audio.

“In previous generations, a limited set of additional tools was available, and it was more restrictive to set up multiple instances due to technological shackles,” says Creative Assembly and Total War senior audio designer Matt McCamley. “As games tech matures, so does in-game audio, chiefly in the way we can deliver an experience to the player.”

One of Creative Assembly’s hotly anticipated titles, Alien: Isolation, is a prime example of how key audio can be in games. The team has had to adopt various new and unique techniques to evoke the original film’s haunting atmosphere and turn it up to ten to keep players on the edge of their seats for its roughly 15-hour running time. And judging from early footage, the game just wouldn’t be the same without sound.

The team’s sound designer Sam Cooper says the game’s audio has been crafted to subtly conjure up dark and scary imagery from even seemingly mundane objects, tapping into the player’s subconscious, in-built fight-or-flight responses. He explains that this creates an unpredictable soundscape that keeps players second-guessing what their ears are telling them, moving the experience beyond twitch play and how good they are at games.

And much of this has been made possible thanks to new consoles and tools.

“With additional processing power and frequently updated middleware, we’re now able to use rich convolution reverbs on new-gen consoles and experiment with emerging DSP such as in-game HRTF/binaural processing,” says Cooper. “We’re far less limited by the hardware with new consoles.”
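Convolution reverb, which Cooper mentions, works by convolving a dry signal with an impulse response recorded in (or simulated for) a space. The pure-Python sketch below shows the idea. The synthetic decaying-noise impulse response is an assumption standing in for a measured one, and the direct O(N*M) loop is for clarity only; real-time engines typically use FFT-based partitioned convolution instead.

```python
import math
import random


def synthetic_ir(length, rt60_s, sample_rate, seed=0):
    """Exponentially decaying noise: a crude stand-in for a measured impulse response.
    The envelope falls by 60 dB (a factor of 1000) after rt60_s seconds."""
    rng = random.Random(seed)
    decay = math.log(1000.0) / (rt60_s * sample_rate)
    return [rng.uniform(-1.0, 1.0) * math.exp(-decay * n) for n in range(length)]


def convolve(dry, ir):
    """Direct linear convolution: every input sample launches a scaled copy of the IR."""
    out = [0.0] * (len(dry) + len(ir) - 1)
    for i, x in enumerate(dry):
        if x == 0.0:
            continue  # silent input samples contribute nothing
        for j, h in enumerate(ir):
            out[i + j] += x * h
    return out


def convolution_reverb(dry, ir, wet=0.3):
    """Mix the dry signal with its convolved (reverberant) version."""
    wet_sig = convolve(dry, ir)
    padded = list(dry) + [0.0] * (len(wet_sig) - len(dry))
    return [(1.0 - wet) * d + wet * w for d, w in zip(padded, wet_sig)]
```

Feeding a single-sample impulse through `convolve` returns the impulse response itself, which is a quick way to sanity-check the function.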

Another CA sound designer, Total War audio manager Richard Beddow, says Rome II marked the first time the studio had used the Wwise audio engine, which gave sound designers tools to control how assets assigned to game events are played back, along with asset containers and shared resources such as DSP settings. Thanks to its visual interface, designers could set up new items that had previously been the realm of coders, which Beddow says made for a more efficient workflow.

Bawler recommends other tools, such as Unity and Fabric, which he says suit the way he likes to work: creating ordered hierarchies and carefully managing data trees, which leaves him more time for the creative side of audio.

Mobile beats

Just as Creative Assembly is experimenting with sound implementation in its big-budget console and PC outings, mobile is also home to some interesting techniques.

Simogo’s Flesser, together with composer Daniel Olsén, had the tough task of integrating audio into the interactive story Device 6. The audio had to match the reader’s interpretation without losing their attention by playing sounds at the wrong time.

“We wanted to keep a lot of the things up to the players’ imagination, so it was a tough balance to decide what things that are mentioned in the text should be audible or not,” he explains.

“Creating a sense of space was our biggest priority. So working with footsteps to communicate different materials, the right amount of reverb on sounds to communicate size of rooms, mixing things properly, fiddling a lot with EQ on every sound to make everything feel just right within the space that the player was in at the moment.”
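One way to tie reverb to room size, as Flesser describes, is to derive the reverb decay time from the room's dimensions. The sketch below uses Sabine's classic formula, RT60 = 0.161 V / (S a), for a rectangular room with a single assumed average absorption coefficient `a`. This is an illustrative choice, not necessarily how Device 6 set its reverbs.

```python
def sabine_rt60(width_m, depth_m, height_m, absorption=0.2):
    """Estimated RT60 (seconds) of a rectangular room via Sabine's formula:
    RT60 = 0.161 * V / (S * a), V in m^3, S in m^2, a = mean absorption in (0, 1]."""
    if not 0.0 < absorption <= 1.0:
        raise ValueError("absorption must be in (0, 1]")
    volume = width_m * depth_m * height_m
    surface = 2.0 * (width_m * depth_m + width_m * height_m + depth_m * height_m)
    return 0.161 * volume / (surface * absorption)


# Hypothetical rooms: bigger spaces should get audibly longer reverb tails.
for name, dims in {"closet": (1.5, 1.0, 2.4), "office": (3, 4, 2.5), "hall": (10, 10, 10)}.items():
    print(name, round(sabine_rt60(*dims), 2), "s")
```

With `a = 0.2`, the 10 x 10 x 10 m hall comes out at about 1.34 s and the 3 x 4 x 2.5 m office at about 0.41 s; the resulting time would then be fed to a reverb's decay setting.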

Audio constraints

Time and budget constraints can of course play a big role in the quality of audio and its variety, particularly if designers are required to go to the source, but mobile in particular throws up a unique set of challenges.

The major limitation presented by smartphones, Bawler says, is the speakers themselves, which are often mono and either face away from the listener or sit just under the player’s hands.

“The Nintendo 3DS has amazing HRTF playback going on with its speakers. It would be awesome if more phones featured this kind of thing,” he states.

“You also have to deal with the fact games on mobile are played all over the place, often crowded or noisy environments, or places where you don’t want to annoy other people. When I was traveling on the tube in London whilst working on Monument Valley, there were about ten people in the carriage all playing their phones. On the one hand I thought it was a shame they were all silent, but I was also thankful that we didn’t have an amusement arcade cacophony going on.”

Flesser adds that getting people to play mobile games with the sound turned on is actually one of the biggest challenges for developers, and suggests stating the requirement early on if sound is central to the experience. He also says designers need to make sure audio runs on older devices, which can limit the effects used even on newer phones despite their more advanced capabilities.

“For some reason or another, people tend to play mobile games without sound, and quite a few puzzles in Device 6 require the player to listen to audio clues,” he says.

“We used low pass filters here and there, and we actually had to turn that off on older devices because it was too demanding and we want to keep a steady framerate and performance to keep the experience smooth.”
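A low-pass filter of the kind Flesser mentions can be as simple as a one-pole IIR filter. The sketch below pairs one with a hypothetical quality switch that bypasses the filter on low-end devices. The 800 Hz cutoff and the `device_is_low_end` flag are illustrative assumptions, and how a device gets classified as low-end is left to the game.

```python
import math


def one_pole_lowpass(samples, cutoff_hz, sample_rate):
    """One-pole IIR low-pass: y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    y = 0.0
    out = []
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out


def process_muffled(samples, device_is_low_end, cutoff_hz=800.0, sample_rate=44100):
    """Hypothetical quality switch: bypass the filter on low-end devices to save CPU."""
    if device_is_low_end:
        return list(samples)
    return one_pole_lowpass(samples, cutoff_hz, sample_rate)
```

The filter costs only one multiply-add per sample, but on a weak device running many voices even that can add up, which is the trade-off Flesser describes.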

In the field

While many games keep to a small size, it could be argued that many more are becoming bigger and more ambitious, particularly at the top end. As a result, field recording is becoming more widespread, and new libraries are being created as sounds from past games become overused.

Bawler suggests sound designers now need to up their game if they’re to create the soundscapes consumers clamour for.

“Going out and recording your own material or investing in boutique libraries specially constructed for games is a must,” he states. “The older libraries have been our bread and butter for years, but they were all recorded for TV, film, radio etcetera – a linear environment where you’re making a ‘photograph’ of sound rather than the dynamic, living, breathing soundscapes we have to create for games.”

Beddow says the audio process isn’t necessarily becoming significantly more difficult as games get bigger, but that the added scale has made managing the process a greater challenge.

“You have to be smart,” he says. “Largely it’s about the best way to manage the asset-volume and deploy the right resources, both internal and external, to deliver the kind of deeply immersive, cinema-grade soundscape that the modern player, quite rightly, has come to expect.”

Looking forward, other techniques, both old and new, could become more central to the audio process, for example binaural audio in virtual reality games [See boxout Virtual hearing].
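Binaural rendering normally convolves each sound with measured head-related impulse responses (HRTFs). As a much simpler illustration of one binaural cue, the sketch below computes the interaural time difference (ITD) with Woodworth's spherical-head approximation and delays the far ear accordingly. The head radius, speed of sound and azimuth sign convention are assumptions, and the model ignores level differences, spectral cues and front/back distinctions.

```python
import math

HEAD_RADIUS_M = 0.0875   # assumed average head radius
SPEED_OF_SOUND = 343.0   # m/s, roughly 20 deg C air


def woodworth_itd(azimuth_rad):
    """ITD in seconds from Woodworth's model: (r / c) * (theta + sin(theta)).
    The model covers lateral angles up to pi/2, so larger angles are clamped."""
    theta = min(abs(azimuth_rad), math.pi / 2)
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (theta + math.sin(theta))


def binaural_delay(mono, azimuth_rad, sample_rate=48000):
    """Return (left, right) with the far ear delayed by the ITD in whole samples.
    Assumed convention: positive azimuth means the source is to the right."""
    lag = round(woodworth_itd(azimuth_rad) * sample_rate)
    delayed = [0.0] * lag + list(mono)
    direct = list(mono) + [0.0] * lag
    if azimuth_rad >= 0:
        return delayed, direct   # source on the right: left ear hears it later
    return direct, delayed
```

For a source directly to one side the ITD comes out at roughly 0.66 ms, about 31 samples at 48 kHz.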

Bawler says he’s excited by the prospect of working with procedural audio in future, particularly given how prominent procedural generation techniques have become across the industry.

“Since very early days, my way of working has been based on building complex sounds and audio systems from simple components combined in interesting ways,” says Bawler.

“Procedural audio seems like a natural fit for this way of working, and from what I can tell, you still need a sound designer to make sure it’s all sounding as it should.”
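As a small example of building a complex sound from simple components, the sketch below synthesizes an impact procedurally (a form of modal synthesis): a brief noise burst for the attack plus a handful of exponentially decaying sine "modes". The function name and the mode frequencies and decay rates are invented for illustration. Changing them is how one model can yield many variations instead of many recorded files.

```python
import math
import random


def modal_impact(modes, duration_s=0.5, sample_rate=22050, noise_ms=5.0, seed=None):
    """Procedural hit: short noise burst plus a sum of decaying sine modes.
    modes: list of (frequency_hz, amplitude, decay_per_second) tuples."""
    rng = random.Random(seed)
    n = int(duration_s * sample_rate)
    burst = int(noise_ms / 1000.0 * sample_rate)
    out = []
    for i in range(n):
        t = i / sample_rate
        # Each mode is a sine whose amplitude decays exponentially over time.
        s = sum(a * math.exp(-d * t) * math.sin(2 * math.pi * f * t) for f, a, d in modes)
        if i < burst:
            # Linearly fading noise gives the attack its "click".
            s += 0.3 * rng.uniform(-1.0, 1.0) * (1 - i / burst)
        out.append(s)
    peak = max(abs(v) for v in out) or 1.0
    return [v / peak for v in out]  # normalize to the range -1..1
```

For instance, `modal_impact([(440.0, 1.0, 8.0), (1130.0, 0.5, 12.0)], seed=1)` returns half a second of normalized samples at 22.05 kHz; lower decay rates give a longer, more ringing character, higher ones a duller thud.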

Article 4. Video Game Music: Player Immersion (Part I)

by Sande Chen

In Part I of this article, lead audio designer Gina Zdanowicz discusses how video game music enhances a player’s gameplay experience.

Music has always been an important part of entertainment media. As gaming continues to evolve, game music is relied upon more heavily to integrate with the game’s visuals, to set the scene, and to evoke players’ emotions. Game music should affect the gameplay, and the gameplay should affect the music. The player’s actions influence the interactivity and evolution of the music, just as the music influences the player’s decisions during gameplay. This combination immerses the player deeper in the gaming experience.

One of the biggest challenges in creating music for video games is in understanding the limits of the game audio engines while trying to provide a seamless interactive experience.

Techniques such as varying tempo, genre, instrumentation and musical notes can set the perfect mood for each area of the game and tell the player exactly what emotions they should feel in those areas.

A layered score consists of several streams, each carrying different instruments. The streams should be composed so that each is strong on its own and works well with the game’s visuals, yet can also be mixed with the other streams so the music evolves as gameplay changes.

Music that builds to a crescendo can signal to the player there is danger just ahead. A boss battle may require more intense music with several layers of instruments and heavy percussion. After the boss is defeated, the music slows down in tempo and the instrumentation thins out, signaling to the player that the danger is no longer imminent.
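The layered score and intensity changes described above can be driven by a single intensity parameter that fades stems in and out (often called vertical layering). The sketch below maps intensity to a gain per layer. The stem names and thresholds are hypothetical, and it assumes all stems share the same tempo and length so they can play in sync.

```python
def layer_gains(intensity, thresholds):
    """Map a 0..1 intensity value to a linear gain per music layer (stem).
    thresholds: dict of layer_name -> (fade_in_start, fade_in_end)."""
    intensity = min(max(intensity, 0.0), 1.0)
    gains = {}
    for layer, (start, end) in thresholds.items():
        if intensity <= start:
            gains[layer] = 0.0
        elif intensity >= end:
            gains[layer] = 1.0
        else:
            gains[layer] = (intensity - start) / (end - start)
    return gains


LAYERS = {  # hypothetical stems for one piece of music
    "pads": (-1.0, 0.0),       # always on
    "strings": (0.2, 0.4),
    "brass": (0.5, 0.7),
    "percussion": (0.7, 0.9),
}

for level in (0.0, 0.3, 0.6, 1.0):
    print(level, layer_gains(level, LAYERS))
```

Setting intensity near 1.0 for a boss fight brings in brass and percussion; dropping it afterwards thins the arrangement, matching the behavior described above. In practice the parameter would be smoothed over time rather than changed instantly.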

Super Mario Brothers utilized increased tempo to signal to the player that time is running out, which evokes a sense of urgency to complete the level before running out of time. Dead Space 2 uses ambient soundscapes and a large orchestra to create an eerie, yet larger than life feeling. A small string quartet was used in the game to contrast the large orchestra and to portray the vulnerability of the main character.

Music and visuals must both be well thought out and tightly integrated to create a cohesive, atmospheric environment. A game’s pacing matters as much as its musical build-up: giving the player time to feel safe lets the next tense moment land with more impact.

When you look at how far music in gaming has come, it speaks volumes about its importance in the game industry. Music no longer just sits in the background. Rhythm games such as Rock Band and Guitar Hero put a twist on standard gameplay by making the music the game itself.

Gina Zdanowicz is the Founder of Seriallab Studios, Lead Audio Designer at Mini Monster Media, LLC and a Game Audio Instructor at Berkleemusic. Seriallab Studios is a full service audio content provider supplying custom music and sound effects to the video game industry. Seriallab Studios has been involved in the audio development of 60+ titles.

Video Game Music: Player Immersion (Part II)

In Part I of this article, lead audio designer Gina Zdanowicz discusses how video game music enhances a player’s gameplay experience. In Part II, she offers examples of diegetic and non-diegetic music in games.

A technique that is becoming more popular in games is diegetic music. Diegetic music refers to music that originates from within the game world. It’s always nice when a game score can incorporate epic music in the game world, but in real life when you are walking around in a park or on a beach, you don’t hear any music unless you have your headphones on. Diegetic music, although coming from an object within the game, can still set the mood of the environment.

Let’s take a look at some games that use diegetic music to enhance the player’s immersion into the game world.

Fallout 3 makes great use of diegetic and non-diegetic music. Characters in the game have wrist-mounted computers called the Pip-Boy 3000, and radios scattered around the game world also play music and other broadcasts from in-game radio stations. If the player has the Pip-Boy 3000’s radio turned on, they have to be careful that it doesn’t alert NPCs to their presence. When the radio function is turned off, non-diegetic background music plays throughout the game world.

Bioshock also uses a combination of diegetic and non-diegetic music, as well as no music, to set the mood. In the game’s opening scene, the player escapes from the plane wreckage to a lighthouse set on a small rocky island. The lack of music in this scene hints to the player the feelings of a desperate struggle to survive. After the player enters the lighthouse, music starts to fade into the scene. The music is coming from downstairs, which provokes the player to follow the music down the flight of stairs to find the radio in a bathysphere. The music plays two roles in this example: It gives the player a reason to move forward in the game, as well as sets the mood.

The use of diegetic music in Bioshock really underscores the dying city when the player enters a room with a scratchy, ’60s-era record playing. Diegetic music, used in place of orchestral background music, can be heard from around corners or muffled by doors.
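Diegetic music that is muffled by doors is usually modeled as occlusion: each obstruction lowers the volume and, more noticeably, removes high frequencies. The sketch below is a hypothetical illustration; the per-door losses, cutoff factor and floor are invented values, and the returned cutoff would feed a low-pass filter on the music source.

```python
def occlusion_params(closed_doors, open_doorways=0,
                     door_loss_db=-9.0, doorway_loss_db=-2.0,
                     base_cutoff_hz=20000.0, door_cutoff_factor=0.25,
                     min_cutoff_hz=300.0):
    """Return (gain_db, lowpass_cutoff_hz) for a source heard through doors.
    All loss values are illustrative assumptions, not measured data."""
    gain_db = closed_doors * door_loss_db + open_doorways * doorway_loss_db
    # Each closed door cuts the low-pass cutoff by a fixed factor.
    cutoff = base_cutoff_hz * (door_cutoff_factor ** closed_doors)
    return gain_db, max(cutoff, min_cutoff_hz)


def db_to_linear(db):
    """Convert a level in decibels to a linear amplitude multiplier."""
    return 10.0 ** (db / 20.0)
```

One closed door gives -9 dB and a 5 kHz cutoff under these assumptions, enough to turn a clear record into a muffled one heard from the next room.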

Left 4 Dead allows a player to turn on a jukebox, which will attract a zombie horde. During this attack, instead of non-diegetic music playing, the jukebox music continues to play even if the jukebox is out of visual range.

Grand Theft Auto is, while cliché, a good example of diegetic music. Car radios broadcast different stations and songs that the player can choose to tune into while driving the vehicles in the game. After all, who doesn’t love riding in a car with the music pumping?

A diegetic switch is a technique which can be used to continue the diegetic music throughout the game. The music starts off as a diegetic broadcast from a radio or other source within the game, and as the scene changes, the music switches to a non-diegetic version of the same song and continues to play in that environment.
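A diegetic switch can be sketched as an equal-power crossfade from the in-world source (for example, a radio) to the score version, starting the score at the matching point in the song. This assumes the two versions share the same arrangement; rescaling the position when their lengths differ is an illustrative simplification.

```python
import math


def equal_power_crossfade(progress):
    """Return (diegetic_gain, score_gain) for crossfade progress in [0, 1].
    cos/sin curves keep the summed power constant, so loudness stays roughly even."""
    p = min(max(progress, 0.0), 1.0)
    return math.cos(p * math.pi / 2), math.sin(p * math.pi / 2)


def switch_position(diegetic_pos_s, diegetic_len_s, score_len_s):
    """Where to start the score version so the song seems to carry on.
    Assumes both versions follow the same arrangement; if their lengths differ,
    the position is simply rescaled (an illustrative simplification)."""
    return (diegetic_pos_s % diegetic_len_s) / diegetic_len_s * score_len_s
```

A game would step `progress` from 0 to 1 over a second or two as the scene changes, applying the first gain to the radio source and the second to the score.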

The Legend of Zelda: Ocarina of Time starts with the diegetic version of Saria’s Song as it directs the player through the Lost Woods maze. As the song grows louder, the player knows they are moving in the right direction. If the player goes off course, the song’s volume decreases, alerting them to change direction. After the player learns the song, it becomes non-diegetic music in that environment.

As video games evolve, game music must also evolve, allowing for a cohesive integration for a seamless visual and aural experience, which will deeply immerse the player into the game world and keep them there until they press the pause button.

Article 5. How Does In-Game Audio Affect Players?

Raymond Usher

This study investigated the importance of audio in computer games. To do this, an experiment was designed that compared groups of participants who played the same games with and without audio. Participants’ physical responses to the games were recorded via a bioharness that measured each participant’s breathing wave, heart rate, respiration rate and skin temperature.

Analysis of the heart rate and respiration rate of participants showed that those playing games with audio had a higher level of arousal (a combination of heart rate and respiration rate) and demonstrated the immersion capabilities of audio in games.

Participants

12 students from the University of Abertay participated in the study. All participants had experience in playing computer games but little or no experience in the games selected for the study.

Materials

Experimental trials were conducted in Abertay’s HIVE facility, which provides a six-meter rear-projection screen, 7.1 surround sound and adjustable lighting for a high-quality gaming experience.

Participants’ physical responses were recorded with a bioharness, which records a range of physical attributes including heart rate, breathing rate, and skin temperature.

Three games were selected as stimuli for participants to play: Osmos; FlatOut: Total Carnage; and Amnesia: The Dark Descent.

These games provided a range of genres (racing to survival horror) and play styles (driving to first person shooter).

The aim of Osmos is to propel yourself, a single-celled organism or mote (the bright blue orb), into smaller motes (dark blue orbs) to absorb them. Colliding with a mote larger than yourself means you are absorbed instead, resulting in a Game Over. Changing course is done by expelling mass. Due to conservation of momentum, this moves the player’s mote away from the expelled mass, but also shrinks it.
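The Osmos movement rule follows directly from conservation of momentum. The sketch below works it through in 2D; the function name and parameters are illustrative and not taken from the game's code. If a mote of mass m and velocity v ejects mass dm at velocity u relative to itself, then m*v = (m - dm)*v' + dm*(v + u), so v' = v - (dm / (m - dm))*u.

```python
def eject_mass(mass, velocity, eject_fraction, eject_velocity_rel):
    """Conservation of momentum when a mote expels part of its mass.
    velocity and eject_velocity_rel are (x, y) tuples; the ejected blob moves
    at velocity + eject_velocity_rel. Returns (new_mass, new_velocity)."""
    if not 0.0 < eject_fraction < 1.0:
        raise ValueError("eject_fraction must be between 0 and 1")
    dm = mass * eject_fraction
    remaining = mass - dm
    # m*v = remaining*v' + dm*(v + u)  =>  v' = v - (dm / remaining) * u
    new_v = tuple(v - dm / remaining * u for v, u in zip(velocity, eject_velocity_rel))
    return remaining, new_v
```

Ejecting 10% of a 10-unit mote backwards at 5 units/s (u = (-5, 0)) leaves a 9-unit mote moving forward at 5/9, about 0.56 units/s: it moves away from the expelled mass but shrinks, which is the trade-off the game asks players to manage.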

FlatOut is a racing game with an emphasis on demolition derby-style races, and features a sophisticated physics engine. Races take place in a range of locations, from busy streets to storm-water drains. Players race against 11 computer-controlled opponents over multiple laps. An additional speed boost can be gained by going off jumps and crashing into other cars.

Amnesia: The Dark Descent features an unarmed protagonist exploring a dark and foreboding castle while avoiding monsters and other obstructions, as well as solving puzzles. The player plays and sees through the eyes of Daniel, making their way through the different levels of Brennenburg Castle as the story progresses, starting off in the Rainy Hall and eventually making their way into the deepest depths of the castle in their search for Alexander, the owner of the castle.

Procedure

The experiment trials began with participants signing a consent form, where they agreed to take part in the experiment, understood what the experiment would consist of, and stated they had no problem with projected images, as required by Abertay’s experiment guidelines. Following the signing of the consent form, participants were instructed how to wear the bioharness. Once the bioharness was on, recording of physical attributes began.

Participants played each of the three games in order: Osmos, FlatOut, and Amnesia. Before starting each game, participants had to be in a resting state; this was monitored with the bioharness. If participants were not in a relaxed state when they began the game, it would be challenging to determine the effects of gaming on physical responses.

Participants alternated between the audio and no-audio conditions, where one participant would play the three games with audio and the next participant would play the three games without audio. All participants were given the same levels/locations in all three games to play. For Osmos, participants were given two levels where they were tasked to become the largest. For FlatOut, all participants were given the first race (four laps) and the same car. Finally, for Amnesia, all participants were tasked with navigating through the opening level of the game.

Results

Game 1

Analysis of participant responses focused on heart rate and respiration rate as variables demonstrating arousal. Participants were divided into two groups based on testing conditions: audio and no-audio.

Illustration 4 shows a comparison of the groups’ heart rate over the duration of playing the first game.

At the start of the game both groups had a heart rate of around 75 beats per minute (bpm). The audio group played the game for longer, but also demonstrated a consistently higher heart rate throughout, with greater maximum and minimum heart rate values (audio group maximum 84bpm, minimum 68bpm; no-audio group maximum 78bpm, minimum 61bpm).

Further analysis found this difference in heart rate to be significant (Mann-Whitney, p<0.001).

Illustration 5 shows a comparison of the groups’ respiration rate during gameplay (maximum and minimum respiration rates of 25bpm and 7bpm for the audio group, and 21bpm and 2bpm for the no-audio group; for respiration, bpm denotes breaths per minute). Unlike heart rate, no significant difference was found (Mann-Whitney, p=0.182).

(Illustration 4: Heart rate comparison of groups with and without audio)

(Illustration 5: Comparison of respiration rate of audio and no-audio groups)
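The study reports Mann-Whitney tests throughout. For readers unfamiliar with the test, the sketch below computes the U statistic from pooled ranks (averaging ranks across ties) and a two-sided p-value from the normal approximation, without tie or continuity correction. It is illustrative only: the study does not say exactly how its samples were formed, and for small samples an exact test or a library routine such as SciPy's `mannwhitneyu` is preferable.

```python
import math


def mann_whitney_u(a, b):
    """Mann-Whitney U test with mid-ranks for ties and a normal approximation
    (no tie or continuity correction). Returns (U for sample a, two-sided p)."""
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    values = list(a) + list(b)
    pooled = sorted((v, idx) for idx, v in enumerate(values))
    ranks = [0.0] * len(values)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        mid = (i + j) / 2 + 1  # average 1-based rank for a group of ties
        for k in range(i, j + 1):
            ranks[pooled[k][1]] = mid
        i = j + 1
    n1, n2 = len(a), len(b)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mean = n1 * n2 / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return u1, p
```

With hypothetical readings `audio = [82, 79, 84, 80]` and `no_audio = [74, 76, 73, 77]` (not data from the study), U is 16.0, the largest possible value for two groups of four, because every audio reading is higher.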

Game 2

Illustration 6 shows a comparison of the audio and no-audio groups’ heart rate while playing Game 2 (FlatOut). The graph shows a clear difference between the groups, with the audio group having a much higher heart rate throughout the game (audio group maximum 91bpm, minimum 57bpm; no-audio group maximum 77bpm, minimum 64bpm). Further statistical analysis showed this difference to be significant (Mann-Whitney, p<0.001).

Illustration 7 shows a comparison of the two groups’ respiration rate throughout the game. During gameplay both groups show fluctuations in respiration rate, with the audio group having slightly higher maximum and minimum respiration rates (audio group maximum 24bpm, minimum 14bpm; no-audio group maximum 22bpm, minimum 12bpm). Statistical analysis found the difference between the groups to be significant (Mann-Whitney, p<0.001).

(Illustration 6: Comparison of heart rates)

(Illustration 7: Comparison of audio/no-audio groups’ respiration rate for Game 2)

Game 3

Illustration 8 shows a comparison of heart rates for the audio and no-audio groups while playing Game 3 (Amnesia: The Dark Descent). The graph shows that the audio group had a consistently higher heart rate throughout the gameplay session. The audio and no-audio groups reached maximum heart rates of 90bpm and 77bpm, respectively, and minimum heart rates of 74bpm and 52bpm, respectively. Further statistical analysis found the differences in heart rate to be significant (Mann-Whitney, p<0.001).

Illustration 9 shows a comparison of respiration rates throughout game-play for the audio and no-audio groups. The graph shows overall the audio group had a greater respiration rate (maximum rate of 27bpm and minimum rate of 10bpm) compared to the no-audio group (maximum 16bpm and minimum 6bpm). Statistical analysis found that the differences in respiration rate were significant (Mann-Whitney, p<0.001).

(Illustration 8: Comparison of audio and no-audio groups’ heart rate for Game 3)

(Illustration 9: Comparison of audio and no-audio groups’ respiration rate for Game 3)

Summary

Firstly, a summary of results: during Game 1, the audio group had a significantly higher heart rate and a slightly (but not significantly) higher respiration rate compared to the no-audio group. During Game 2, the audio group had a significantly higher heart rate and respiration rate than the no-audio group.

Finally, during Game 3, the audio group had a significantly higher heart rate and respiration rate compared to the no-audio group. These findings suggest that the presence of audio in games can increase player arousal, as shown by increased physical responses (heart rate and respiration rate).

Focusing on the games individually, starting with Game 1 (Osmos), the results show that this game produced a low minimum heart rate (68bpm) and the lowest minimum respiration rate (7bpm) for the audio group. While these values are low, both were still higher than the corresponding values for the no-audio group.

These values are low, most likely, because Osmos is a low-stress game. The levels participants were tasked with completing were not challenging, and the audio is relaxing — therefore, participants did not express any frustration.

During Game 2 (FlatOut), the audio group produced the highest heart rate (91bpm) and a slightly higher respiration rate compared to the no-audio group. The rationale for these high values is that FlatOut is an exhilarating racing game, more so with audio (engine noise, crash sound effects, and background rock music). Furthermore, participant performance in the game may affect responses: for example, if a player is winning, they may respond with excitement (increasing both heart and respiration rate), or if a player is losing they may become frustrated, also increasing heart and respiration rate.

Game 3 (Amnesia) best demonstrates the effect of audio in games. The audio group obtained significantly higher heart and respiration rates than the no-audio group during gameplay. This is all the more impressive given that very little happens in the section of the game all participants played through. There are no enemies and no fighting, just exploration, and the results suggest that audio can still increase immersion in games.

Across all games, the audio group produced high maximum heart and respiration rates (heart rate Game 1: 84bpm, Game 2: 91bpm and Game 3: 90bpm; respiration rate Game 1: 25bpm, Game 2: 24bpm and Game 3: 27bpm). The no-audio group produced consistent maximum heart rate values across the three games (Game 1: 78bpm, Game 2: 77bpm and Game 3: 77bpm). The difference in heart rates between the groups shows the effect audio in games has on players.

To further investigate the effect of audio in games another study could be conducted that utilizes the same methodology as the above study, but instead of using commercial games as stimuli could build a bespoke game environment for testing. The advantage of a bespoke testing environment is the ability to control almost everything. Such an environment could be used to investigate aspects such as quality or realism of audio and the responses of players.
