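Game Design Course: The MDA Framework and Feedback Loops (Part 5)

Today we will discuss what separates a good rule from a bad one, how to evaluate the different kinds of rules game designers create, and the relationship between game rules and the player experience. (This lesson is part of an 18-part series.)

Recommended Reading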

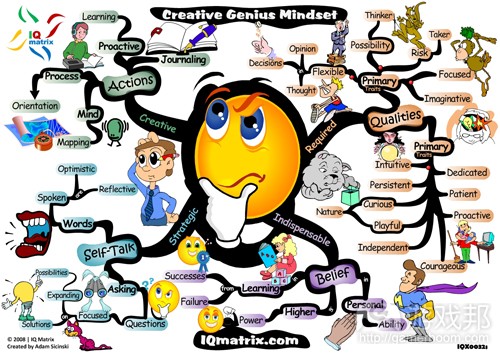

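* MDA Framework by LeBlanc, Hunicke and Zubek. This is one of the few academic papers to receive wide attention in the game industry (probably helped by the fact that all three authors are experienced game designers). Two parts of the paper stand out. The first is the Mechanics/Dynamics/Aesthetics (MDA) conceptualization, which lets us explore the relationship between rules and player experience, and between player and designer. The second is the “8 kinds of fun.”

Now, about that MDA Framework…

LeBlanc et al. define a game in terms of its Mechanics, Dynamics and Aesthetics:

* Mechanics are a synonym for the “rules” of the game. They are the constraints under which the game operates. How is the game set up? What actions can players take, and what effects do those actions have on the game state? When does the game end, and how is the outcome determined? All of these are defined by the Mechanics.

* Dynamics describe the game as it plays out when the rules are set in motion. What strategies emerge from the rules? How do players interact with one another?

* Aesthetics here do not refer to the visual elements of the game, but to the player experience: the effect the Dynamics have on the players. Is the game “fun”? Is the experience frustrating, boring, or interesting? Is it emotionally or intellectually engaging?

Before the MDA Framework was published, “Mechanics” and “Dynamics” were already widely used by designers, and “Aesthetics” in this sense has since been gaining more attention.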

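The Process of Design

With the definitions out of the way, why does this matter? This is one of the key points of the MDA paper. The game designer creates only the Mechanics directly. The Dynamics emerge from the Mechanics, and the Aesthetics arise from the Dynamics. Game designers may wish they could design the play experience directly, or at least that may be the ultimate goal they have in mind, but as designers we have to build the rules of the game and hope the desired experience emerges from them.

This is why game design is sometimes called a second-order design problem: we do not define the solution; we define something that indirectly creates the solution. This is why game design is so hard, or at least it is one reason. Design is not just coming up with a “great idea” for a game; it is building a set of rules that will implement that idea when two-thirds of the final product (the Dynamics and Aesthetics) are not under our direct control.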

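The Process of Play

Designers start with the Mechanics and follow them as they grow outward into the Aesthetics. You can think of a game as a sphere, with the Mechanics at the core, the Dynamics surrounding the core, and the Aesthetics on the surface, each outer layer growing out of the one beneath it. The authors of MDA point out that this is not how players experience a game.

Players see the surface first: the Aesthetics. They may also notice the Mechanics and Dynamics, but what makes the most immediate impression and is easiest to understand is the Aesthetics. This is why, even without any knowledge of or training in game design, anyone can play a game and tell you whether they are having a good time. They may not be able to say why they are enjoying it, or what makes the game “good” or “bad,” but they can tell you how the game makes them feel.

If players spend enough time with a game, they may come to understand its Dynamics and see how their experience arises from them. They may realize that they like or dislike a game because of particular interactions they have with the game or with other players. If they spend even more time with it, they may eventually grasp the Mechanics well enough to see how the Dynamics emerge from them.

If a game is a sphere designed from the inside out, players experience it from the outside in. I think this is one of the key points of MDA. The designer creates the Mechanics, and everything develops outward from there. The player experiences the Aesthetics, and the experience extends inward. As designers, we need to be aware of both ways of interacting with a game; otherwise it is easy to create something that is fun for designers but dull for players.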

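MDA in Action: An Example

I mentioned “spawn camping” earlier, as an example of how players following different implicit rules may call something that is technically allowed by the game’s rules “cheating.” Let us analyze it in the context of MDA.

In first-person shooter video games, a common mechanic is the “spawn point”: a dedicated place on the map where players reappear after being killed. Spawn points are a mechanic. They lead to this dynamic: a player waits next to a spawn point and kills anyone the moment they respawn. The resulting aesthetic is frustration, since players expect to be killed again as soon as they come back into play.

Suppose you are designing a new FPS, you notice this frustration aesthetic in your game, and you want to fix it so the game is less frustrating. You cannot simply change the aesthetics to “make the game more fun”; that may be your goal, but it is not under your direct control. You cannot even change the dynamics of spawn camping directly, because you cannot tell players how to interact with the game except through its mechanics. Instead, you have to change the mechanics (perhaps players respawn at random locations rather than in designated areas) and hope the desired aesthetics emerge from your change.

How do you know whether the change worked? Playtest, of course.

How do you know what to change, if the effects of mechanics changes are so unpredictable? We will offer some tips near the end of the course. For now, the most obvious answer is designer intuition. The more you practice, the more games you design, and the more rule changes you make (and then playtest to see their effects), the better you will get at making the right change when you spot a problem, and sometimes even at getting the rules right from the start. There is no substitute for experience, which is why this course asks you to try many things and to actually make games.

“If the computer or the game designer is having more fun than the player, you have made a terrible mistake.”

This is a good moment to quote game designer Sid Meier. His warning targets video game designers, but it applies equally to non-digital projects. It reminds us that designing a game’s Mechanics may be fun for us while the result is dull for players. A common design mistake is to create rules that are fun to create but do not necessarily translate into fun gameplay. Remember that you are designing the game for the players, not for yourself.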

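Mechanics, Dynamics and Complexity

Generally, adding more mechanics, new systems, more game objects, and new ways for objects to interact increases the complexity of a game’s dynamics. For example, compare Chess and Checkers. Chess has six kinds of pieces, and each piece has more actions available, so the game has more strategic depth.

Is more complexity good or bad? It depends. Tetris is very simple, yet very successful. Advanced Squad Leader is extremely complex, yet also successful. Some games, such as Tic-Tac-Toe, are so simple that players past a certain age find them dull. Others are too complex and would be better if their mechanics were simpler and more streamlined.

Do more complex mechanics always lead to more complex dynamics? No. In some cases simple mechanics create great complexity, and in others the mechanics are quite complicated but the dynamics are simple. The best way to gauge a game’s complexity is to play it.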

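Feedback Loops

One dynamic that appears often in games and deserves special attention is the feedback loop. There are two types: positive feedback loops and negative feedback loops. The terms come from other fields (such as control systems and biology), and they mean the same thing in games as they do elsewhere.

A positive feedback loop can be thought of as a reinforcing relationship. Something happens that causes the same thing to happen again, growing stronger with each repetition, like a snowball that starts small at the top of a hill and grows larger as it rolls and gathers more snow.

For example, there is a fairly obscure NES shooting game called The Guardian Legend. Once you beat the game, you unlock a special play mode. In this mode, you receive power-ups at the end of each level based on your score: the higher your score, the more power-ups you get for the next level. This is a positive feedback loop: a high score earns more power-ups, which make it easier to score even higher on the next level, which earns still more power-ups.

Note that the reverse is also true here. Suppose you get a low score. You then receive fewer power-ups at the end of the level, which makes it harder to do well on the next level, which means you will probably score even lower, until you fall so far behind that you can hardly progress at all.

What confuses people is that both scenarios are positive feedback loops. This seems counterintuitive: the second example feels very “negative,” since the player is doing poorly and getting fewer rewards. But “positive” here means the effect grows stronger with each iteration.

Game designers should be aware of three properties of positive feedback loops:

1. They tend to destabilize the game as one player pulls further ahead (or falls further behind).

2. They make the game end faster.

3. They put emphasis on the early game, since the effects of early-game decisions are magnified over time.

Feedback loops usually have two steps, but they can have more. For example, some real-time strategy games have a four-step positive feedback loop: players explore the map, which gives them access to more resources, which let them buy better technology, which lets them build better units, which let them explore more effectively. As a result, detecting a positive feedback loop is not always easy.

Here are some examples of positive feedback loops you may be familiar with:

* Most “4X” games, such as the Civilization and Master of Orion series, are built around positive feedback loops. As you grow your civilization, you generate resources faster, which lets you grow faster still. By the time you come into serious conflict with your opponents, one player is usually so far ahead that it is hardly a contest, because the core positive feedback loop driving the game means that whoever got ahead early will be much further ahead later.

* Board games built around building up, such as Settlers of Catan. In these games, players use resources to improve their resource production, which gets them more resources.

* The physical sport Rugby has a minor positive feedback loop: when a team scores, it gets the ball again, which makes it more likely to score again. The advantage thus goes to the team that has just gained an advantage. This is the opposite of most sports, in which the team that just scored does not get the ball.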

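Negative feedback loops are the opposite of positive feedback loops. A negative feedback loop is a balancing relationship. When something happens in the game, a negative feedback loop makes it less likely to happen again. If one player gets ahead, a negative feedback loop makes it easier for the opponents to catch up.

For example, consider a “kart-style” racing game such as Mario Kart. In racing games, play is more interesting when the player is in the middle of a pack of cars rather than far ahead or far behind. So the standard approach in this genre is to add a negative feedback loop: as the player pulls ahead of the pack, the opponents start cheating, finding better power-ups and getting impossible bursts of speed to help them catch up. This makes it harder for the player to stay in the lead. This particular loop is sometimes called “rubber-banding,” because the cars behave as if they were connected by rubber bands that pull both leaders and stragglers back toward the pack.

Likewise, the reverse holds. If the player falls behind, they find better power-ups and the opponents slow down so the player can catch up. This keeps a trailing player from falling further behind. Both are examples of negative feedback loops; “negative” means the dynamic weakens with each iteration, regardless of whether it helps or hurts the player’s standing in the game.

Negative feedback loops also have three important properties:

1. They tend to stabilize the game, drawing players toward the center of the pack.

2. They make the game take longer.

3. They put emphasis on the late game, since the impact of early decisions diminishes over time.

Examples of negative feedback loops:

* In most physical sports, such as Football and Basketball, after a team scores the ball goes to the opposing team, which then gets a chance to score. This makes it less likely that one team will score again and again.

* The board game Starfarers of Catan has a negative feedback loop: every player with fewer than a certain number of victory points receives a free resource at the start of their turn. Early on this affects every player and speeds up the early game. Later, as some players pull ahead and cross the victory point threshold, the players lagging behind keep receiving bonus resources, which makes it easier for them to catch up.

* My grandfather was a strong Chess player, better than the children he taught to play. To make games more of a challenge, he invented a rule: if he won a game, then in the next game his opponent could remove one of his pieces at the start (first a pawn, then two pawns, then a knight or bishop). Each time my grandfather won, the next game became more challenging for him, making it more likely that he would eventually lose.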

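Using Feedback Loops

Are feedback loops good or bad? Should we include them, or avoid them? As with most aspects of game design, it depends. Sometimes a designer deliberately adds mechanics that create a feedback loop. Other times a feedback loop shows up during play, and the designer has to decide what to do about it.

Positive feedback loops have their benefits. When a player starts to emerge as the winner, they end the game quickly, so the end game does not drag on. On the other hand, positive feedback loops can frustrate players who are trying to catch up with the leader and feel they no longer have a chance.

Negative feedback loops can also be useful, for example to prevent a dominant early strategy and to keep players feeling they still have a chance to win. On the other hand, they can be frustrating too: players who do well early can feel punished for succeeding, while trailing players seem to be rewarded for doing poorly.

What makes a feedback loop “good” or “bad” from the player’s perspective? This is debatable, but I think it is mainly a matter of the players’ sense of fairness. If it feels as though the game is intervening to help a player win who does not deserve it, players will perceive the loop negatively. How do we know how players will perceive the game? Playtest, of course.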

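Eliminating Feedback Loops

Suppose you find a feedback loop in your game and want to remove it. How do you do it? There are two ways.

The first is to remove the feedback loop itself. Every feedback loop has three components:

* A “sensor” that monitors the game state;

* A “comparator” that decides whether to take action based on the value the sensor detects;

* An “activator” that modifies the game state once the comparator decides to act.

For example, in the earlier kart-racing negative feedback loop, the “sensor” measures how far ahead of or behind the rest of the pack the player is; the “comparator” checks whether the player is ahead or behind by more than a threshold value; and the “activator” makes the opposing cars speed up or slow down if the player is too far ahead or behind. All of these may form a single mechanic. In other cases, three or more mechanics may form the feedback loop, and changing any one of them changes the nature of the loop.

Once you know which mechanics create a feedback loop, you can disrupt its effects by removing the sensor, changing or removing the comparator, or changing or removing the activator. Returning to The Guardian Legend example (more points = more power-ups), you could remove the positive feedback loop by modifying the sensor, changing the comparator, or changing the activator.

If you do not want to remove the feedback loop but do want to reduce its effects, the other option is to add a feedback loop of the opposite type. Returning once more to the kart-racing example, if you want to keep the “rubber-banding” negative feedback loop, you can counteract it by adding a positive feedback loop. For example, if the opposing cars get extra speed when the player is in the lead, perhaps the player can go faster too, so that being in the lead makes the whole race go faster. Or perhaps the player in the lead can find better power-ups to offset the opponents’ new speed advantage.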

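Emergence

Another dynamic game designers should be aware of is emergent gameplay. Generally, emergent gameplay describes a game with simple mechanics but complex dynamics. “Emergent complexity” can describe any system with this property, even things that are not games.

Examples of emergence outside of games:

* In nature, insect colonies (such as ants and bees) behave in ways so complex that they seem intelligent, and we call this a “hive mind.” In reality, each insect follows its own simple set of rules, and only in aggregate does the colony display complex behavior.

* Conway’s “Game of Life,” though not a true “game” by our definition, is a simple set of sequential rules for simulating cellular life. Each cell is either “alive” or “dead” on the current turn. To advance to the next turn, a living cell adjacent to zero or one other living cells dies (from isolation), and a living cell adjacent to four or more other living cells also dies (from overcrowding); a dead cell adjacent to exactly three living cells is “born” and becomes alive on the next turn; and a cell adjacent to exactly two living cells stays as it is (so a living cell with two or three living neighbors survives). Those are all the rules. You start from an initial setup of your choice and then let the rules change the board to see what happens. Yet you can get quite complex behavior: structures move, change, spawn new structures, and much more.

* The Boids algorithm, a way to simulate flocking behavior used in some CG films and games. Each member of the flock follows just three simple rules. First, if there are many companions on one side of you and few on the other, you are probably at the edge of the flock: move toward your companions. Second, if you are close to your companions, give them room so you do not crowd them. Third, adjust your speed and direction to match those of your companions. From these three rules you get very complex, detailed, and realistic flocking behavior.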

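Here are some examples of emergent gameplay:

* In fighting games such as the Street Fighter or Tekken series, “combos” arise from the collision of a few simple rules: a landed attack stuns the opponent so they cannot react, and a fast follow-up attack can land before the opponent recovers. Whether or not the designers planned for combos, these stun and attack-speed mechanics create complex sequences of moves that become unstoppable once the first move in the sequence lands.

* In Basketball, “dribbling” was not part of the original rules. The designers intended the game to work much like Ultimate Frisbee: the player holding the ball may not move and must either shoot at the basket or “pass” to a teammate. But no rule prevented players from passing to themselves.

* Chess openings. The rules are fairly simple (only six kinds of pieces and a few special moves), yet many well-known openings have emerged through repeated play.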
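The combo rule in the first example reduces to a frame-count comparison. This is a simplified, hypothetical model (real fighting games also track the attacker's own recovery frames, blocking and more): each attack is a `(startup_frames, hitstun_frames)` pair, and a sequence counts as an unbreakable combo if every follow-up lands before the hitstun from the previous hit wears off. The function name and frame numbers are made up for illustration.

```python
def is_true_combo(moves):
    """Return True if each follow-up attack lands before the opponent recovers.

    moves: list of (startup_frames, hitstun_frames) per attack, in order.
    Simplification (hypothetical model): ignores the attacker's own recovery.
    """
    for (_, hitstun), (next_startup, _) in zip(moves, moves[1:]):
        if next_startup > hitstun:
            return False  # the opponent recovers first and can block or counter
    return True


print(is_true_combo([(3, 12), (5, 10), (8, 0)]))  # True
print(is_true_combo([(3, 12), (14, 10)]))         # False
```

Neither rule mentions combos; the chain only exists because stun duration and attack speed happen to overlap.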

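Why should we care about emergent dynamics? Often for practical reasons, especially in video games, because you can get a lot of deep gameplay out of fairly simple mechanics. In a video game, the mechanics are what must be implemented. If you are programming a video game, emergent gameplay offers a great ratio of hours of play to lines of code. Because this can noticeably lower costs, “emergence” was a huge buzzword a few years ago, and I still hear people mention it from time to time.

Note that emergent gameplay is not always intentional, and so it is not always desirable. Here are two examples, both from the Grand Theft Auto series, where unintended emergent gameplay caused problems:

* Consider these two rules. First, running over pedestrians with a vehicle makes them drop the money they are carrying. Second, hiring a prostitute restores the player’s health but costs money. From these two unrelated rules comes an emergent strategy players call “exploiting prostitutes”: spend the night with a prostitute, then run her over to get the money back. This drew a lot of controversy, including claims that the dynamic was deliberately designed to glorify violence against sex workers. Simply saying “it’s emergent!” is not enough to convince a layperson that it was not designed on purpose.

* Perhaps more amusing is the combination of these two rules. First, if the player harms innocent bystanders, they defend themselves by attacking the player. Second, if a vehicle takes enough damage, it eventually explodes and damages everything around it (including, of course, the driver). This produces a very unrealistic scene: the player drives a badly damaged vehicle into a crowd of bystanders. The vehicle explodes. The player crawls out of the wreck, barely alive, and then a crowd of nearby “Good Samaritans,” believing the player has harmed them, gangs up to finish the player off!

As you can see, emergence is not always a good thing. More importantly, making a game with emergence is not cheap. Because of the complex nature of the dynamics, emergent games need more testing and more frequent updates than games where mechanics and dynamics have a simple relationship. Games with emergence are easier to program but harder to design; this does not lower costs, it shifts them from the programmers to the game designers.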

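From Emergence to Intentionality

Player intentionality is, to some extent, related to emergence. We often get emergence from a number of small, simple, interrelated systems. If players can figure out these mechanics and deliberately set off complex chains of events, that is one way to achieve deep player intentionality.

Recommended Reading

* Clint Hocking’s “Designing to Promote Intentional Play,” a talk Clint gave at GDC 2006.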

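Lessons Learned

The main point of this lesson is that game design is not a trivial task. It is difficult, largely because of the nature of MDA. Designers create rules, rules create play, and play creates the player experience. Every rule affects players only through two levels of indirection, which makes its effects hard to predict and control. This also explains why a small rule change can have ripple effects that drastically change how a game is experienced. Yet the designer’s job is to create a player experience that people enjoy.

This is why playtesting is so important. If you are unsure about a rule change, playtesting is the most effective way to find out its effect.

Mini-Challenge

Analyze your favorite sport and look for positive or negative feedback loops in it. Most sports contain at least one. Then try changing the rules to eliminate that loop.

Gamerboom note: The original article was published on July 13, 2009, and is written from the perspective of that time. (Translated by Gamerboom/gamerboom.com. Reproduction without retaining the copyright notice is prohibited; for reprint permission, contact Gamerboom.)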

Level 5: Mechanics and Dynamics

Until this point, we have made lots of games and game rules, but at no point have we examined what makes a good rule from a bad one. Nor have we really examined the different kinds of rules that form a game designer’s palette. Nor have we talked about the relationship between the game rules and the player experience. These are the things we examine today.

Course Announcements

No major announcements today, but for your curiosity I did compile a list of tweets for the last challenge (add or change a rule to Battleship to make it more interesting):

* “Reveal” was a common theme (such as, instead of firing a shot, give the number of Hits in a 3×3 square – thus turning the game from “what number am I thinking of” into “two-player competitive Minesweeper”)

* Skip a few turns for a larger shot (for example, skip 5 turns to hit everything in an entire 3×3 area). The original suggestion was an even trade (skip 9 turns to nuke a 3×3 square), but note that there isn’t much of a functional difference between this and just taking one shot at a time.

* Like Go, if you enclose an area with a series of shots, all squares in the enclosed area are immediately hit as well (this adds an element of risk-taking and short-term versus long-term tradeoffs to the game – do you try to block off a large area that takes many turns but has an efficient turn-to-squares-hit ratio, or do you concentrate on smaller areas that give you more immediate information but at the cost of taking longer in aggregate?)

* When you miss but are in a square adjacent to an enemy ship, the opponent must declare it as a “near miss” (without telling you what direction the ship is in), which doesn’t exactly get around the guessing-game aspect of the original but should at least speed play by giving added information. Alternatively, with any miss, the opponent must give the distance in squares to the nearest ship (without specifying direction), which would allow for some deductive reasoning.

* Skip (7-X) turns to rebuild a destroyed ship of size X. If the area in which you are building is hit in the meantime, the rebuild is canceled. (The original suggestion was skip X turns to rebuild a ship of size X, but smaller ships are actually more dangerous since they are harder to locate, so I would suggest an inverse relationship between size and cost.)

* Each time you sink an enemy ship, you can rebuild a ship of yours of the same size that was already sunk (this gives some back-and-forth, and suggests alternate strategies of scattering your early shots to give your opponent less room to rebuild)

* Once per game, your Battleship (the size-4 ship) can hit a 5-square cross (+) shaped area in a single turn; using this also forces you to place a Hit on your own Battleship (note that this would also give away your Battleship’s location, so it seems more like a retaliatory move when your Battleship is almost sunk anyway)
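The two “reveal” suggestions above (counting Hits in a 3×3 square, and giving the distance to the nearest ship after a miss) are concrete enough to sketch. This is a minimal sketch under stated assumptions: a 10×10 board, ships stored as a set of (row, col) cells, and “distance” taken as Manhattan distance, since the suggestion does not say which distance to use. The function names are hypothetical.

```python
# Two hypothetical "reveal" rules for Battleship.
# Assumptions: 10x10 board, ships stored as a set of (row, col) cells,
# distance measured as Manhattan distance.

BOARD_SIZE = 10


def reveal_3x3(ship_cells, row, col):
    """Count ship cells in the 3x3 square centred on (row, col)."""
    count = 0
    for r in range(row - 1, row + 2):
        for c in range(col - 1, col + 2):
            on_board = 0 <= r < BOARD_SIZE and 0 <= c < BOARD_SIZE
            if on_board and (r, c) in ship_cells:
                count += 1
    return count


def distance_to_nearest_ship(ship_cells, row, col):
    """After a miss, report how many squares away the nearest ship cell is."""
    return min(abs(row - r) + abs(col - c) for r, c in ship_cells)


ships = {(2, 3), (2, 4), (2, 5), (7, 7), (8, 7)}
print(reveal_3x3(ships, 3, 4))                # 3
print(distance_to_nearest_ship(ships, 5, 5))  # 3
```

Both rules hand the shooter information to reason with, which is what turns the guessing game into something closer to competitive Minesweeper.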

We will revisit some of these when we talk about the kinds of decisions that are made in a game, next Monday.

Readings

This week I’m trying something new and putting one of the readings up front, because I want you to look at this first, before reading the rest of this post.

* MDA Framework by LeBlanc, Hunicke and Zubek. This is one of the few academic papers that achieved wide exposure within the game industry (it probably helps that the authors are experienced game designers). There are two parts of this paper that made it really influential. The first is the Mechanics/Dynamics/Aesthetics (MDA) conceptualization, which offers a way to think about the relationship of rules to player experience, and also the relationship between player and designer. The second part to pay attention to is the “8 kinds of fun” which we will return to a bit later in the course (Thursday of next week).

Now, About That MDA Framework Thing…

LeBlanc et al. define a game in terms of its Mechanics, Dynamics, and Aesthetics:

* Mechanics are a synonym for the “rules” of the game. These are the constraints under which the game operates. How is the game set up? What actions can players take, and what effects do those actions have on the game state? When does the game end, and how is a resolution determined? These are defined by the mechanics.

* Dynamics describe the play of the game when the rules are set in motion. What strategies emerge from the rules? How do players interact with one another?

* Aesthetics (in the MDA sense) do not refer to the visual elements of the game, but rather the player experience of the game: the effect that the dynamics have on the players themselves. Is the game “fun”? Is play frustrating, or boring, or interesting? Is the play emotionally or intellectually engaging?

Before the MDA Framework was written, the terms “mechanics” and “dynamics” were already in common use among designers. The term “aesthetics” in this sense had not been, but it has gained more use in recent years.

The Process of Design

With the definitions out of the way, why is this important? This is one of the key points of the MDA paper. The game designer only creates the Mechanics directly. The Dynamics emerge from the Mechanics, and the Aesthetics arise out of the Dynamics. The game designer may want to design the play experience, or at least that may be the ultimate goal the designer has in mind… but as designers, we are stuck building the rules of the game and hoping that the desired experience emerges from our rules.

This is why game design is sometimes referred to as a second-order design problem: because we do not define the solution, we define something that creates something else that creates the solution. This is why game design is hard. Or at least, it is one reason. Design is not just a matter of coming up with a “Great Idea” for a game; it is about coming up with a set of rules that will implement that idea, when two-thirds of the final product (the Dynamics and Aesthetics) are not under our direct control.

The Process of Play

Designers start with the Mechanics and follow them as they grow outward into the Aesthetics. You can think of a game as a sphere, with the Mechanics at the core, the Dynamics surrounding them, and the Aesthetics on the surface, each layer growing out of the one inside it. One thing the authors of MDA point out is that this is not how games are experienced from the player’s point of view.

A player sees the surface first – the Aesthetics. They may be aware of the Mechanics and Dynamics, but the thing that really makes an immediate impression and that is most easily understood is the Aesthetics. This is why, even with absolutely no knowledge or training in game design, anyone can play a game and tell you whether or not they are having a good time. They may not be able to articulate why they are having a good time or what makes the game “good” or “bad”… but anyone can tell you right away how a game makes them feel.

If a player spends enough time with a game, they may learn to appreciate the Dynamics of the game and how their experience arises from them. They may realize that they do or don’t like a game because of the specific kinds of interactions they are having with the game and/or the other players. And if a player spends even more time with that game, they may eventually have a strong enough grasp of the Mechanics to see how the Dynamics are emerging from them.

If a game is a sphere that is designed from the inside out, it is played from the outside in. And this, I think, is one of the key points of MDA. The designer creates the Mechanics and everything flows outward from that. The player experiences the Aesthetics and then their experience flows inward. As designers, we must be aware of both of these ways of interacting with a game. Otherwise, we are liable to create games that are fun for designers but not players.

One Example of MDA in action

I mentioned the concept of “spawn camping” earlier in this course, as an example of how players with different implicit rule sets can throw around accusations of “cheating” for something that is technically allowed by the rules of the game. Let us analyze this in the context of MDA.

In a First-Person Shooter video game, a common mechanic is for players to have “spawn points” – dedicated places on the map where they re-appear after getting killed. Spawn points are a mechanic. This leads to the dynamic where a player may sit next to a spawn point and immediately kill anyone as soon as they respawn. And lastly, the aesthetics would likely be frustration at the prospect of coming back into play only to be killed again immediately.

Suppose you are designing a new FPS and you notice this frustration aesthetic in your game, and you want to fix this so that the game is not as frustrating. You cannot simply change the aesthetics of the game to “make it more fun” – this may be your goal, but it is not something under your direct control. You cannot even change the dynamics of spawn camping directly; you cannot tell the players how to interact with your game, except through the mechanics. So instead, you must change the mechanics of the game – maybe you try making players respawn in random locations rather than designated areas – and then you hope that the desired aesthetics emerge from your mechanics change.
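The mechanics change can be checked against one dynamic before anyone playtests the aesthetics. This minimal sketch estimates how often a camper watching a single spawn point catches a respawning player. It assumes respawn locations are picked uniformly at random from `num_spawn_points` candidates and that the camper always watches the same one; the function name and numbers are hypothetical.

```python
import random


def camping_success_rate(num_spawn_points, trials=10_000, seed=1):
    """Estimate how often a respawn lands on the one spot a camper is watching.

    Assumption: each respawn picks one of num_spawn_points uniformly at random,
    and the camper always watches spawn point 0.
    """
    rng = random.Random(seed)
    watched = 0
    caught = sum(1 for _ in range(trials) if rng.randrange(num_spawn_points) == watched)
    return caught / trials


print(camping_success_rate(1))  # 1.0: a single designated spawn point
print(camping_success_rate(8))  # roughly 0.125
```

With one designated spawn point the camper catches every respawn; with eight random points, roughly one in eight. Whether that is enough to remove the frustration is still a question only a playtest can answer.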

How do you know if your change worked? Playtest, of course!

How do you know what change to make, if the effects of mechanics changes are so unpredictable? We will get into some basic tips and tricks near the end of this course. For now, the most obvious way is designer intuition. The more you practice, the more you design games, the more you make rules changes and then playtest and see the effects of your changes, the better you will get at making the right changes when you notice problems… and occasionally, even creating the right mechanics in the first place. There are few substitutes for experience… which, incidentally, is why so much of this course involves getting you off your butt and making games.

“If the computer or the game designer is having more fun than the player, you have made a terrible mistake.”

This seems as good a time as any to quote game designer Sid Meier. His warning is clearly directed at video game designers, but applies just as easily to non-digital projects. It is a reminder that we design the Mechanics of the game, and designing the Mechanics is fun for us. But it is not the Mechanics that are fun for our players. A common design mistake is to create rules that are fun to create, but that do not necessarily translate into fun gameplay. Always remember that you are creating games for the players and not yourself.

Mechanics, Dynamics and Complexity

Generally, adding additional mechanics, new systems, additional game objects, and new ways for objects to interact with one another (or for players to interact with the game) will lead to a greater complexity in the dynamics of the game. For example, compare Chess and Checkers. Chess has six kinds of pieces (instead of two) and a greater number of actions that each piece can take, so it ends up having more strategic depth.

Is more complexity good, or bad? It depends. Tetris is a very simple but still very successful game. Advanced Squad Leader is an incredibly complex game, but still can be considered successful for what it is. Some games are so simple that they are not fun beyond a certain age, like Tic-Tac-Toe. Other games are too complex for their own good, and would be better if their systems were a bit more simplified and streamlined (I happen to think this about the board game Agricola; I’m sure you can provide examples from your own experience).

Do more complex mechanics always lead to more complex dynamics? No – there are some cases where very simple mechanics create extreme complexity (as is the case with Chess). And there are other cases where the mechanics are extremely complicated, but the dynamics are simple (imagine a modified version of the children’s card game War that did not just involve comparison of numbers, but lookups on complex “combat resolution” charts). The best way to gauge complexity, as you may have guessed, is to play the game.

Feedback Loops

One kind of dynamic that is often seen in games and deserves special attention is known as the feedback loop. There are two types, positive feedback loops and negative feedback loops. These terms are borrowed from other fields such as control systems and biology, and they mean the same thing in games that they mean elsewhere.

A positive feedback loop can be thought of as a reinforcing relationship. Something happens that causes the same thing to happen again, which causes it to happen yet again, getting stronger in each iteration – like a snowball that starts out small at the top of the hill and gets larger and faster as it rolls and collects more snow.

As an example, there is a relatively obscure shooting game for the NES called The Guardian Legend. Once you beat the game, you got access to a special extra gameplay mode. In this mode, you got rewarded with power-ups at the end of each level based on your score: the higher your score, the more power-ups you got for the next level. This is a positive feedback loop: if you get a high score, it gives you more power-ups, which make it easier to get an even higher score in the next level, which gives you even more power-ups, and so on.

Note that in this case, the reverse is also true. Suppose you get a low score. Then you get fewer power-ups at the end of that level, which makes it harder for you to do well on the next level, which means you will probably get an even lower score, and so on until you are so far behind that it is nearly impossible for you to proceed at all.
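Both directions of the loop show up in a toy model. This is not how The Guardian Legend actually computes power-ups; it assumes, hypothetically, that a level's score is `skill × (1 + power_ups)` and that every 50 points earns one power-up for the next level.

```python
def play_levels(skill, levels, points_per_power_up=50):
    """Toy model of a score -> power-up loop (hypothetical numbers)."""
    power_ups = 0
    scores = []
    for _ in range(levels):
        score = skill * (1 + power_ups)            # more power-ups -> higher score
        scores.append(score)
        power_ups = score // points_per_power_up   # higher score -> more power-ups next level
    return scores


strong = play_levels(skill=60, levels=6)
weak = play_levels(skill=40, levels=6)
print(strong)  # [60, 120, 180, 240, 300, 420]
print(weak)    # [40, 40, 40, 40, 40, 40]
print([s - w for s, w in zip(strong, weak)])  # [20, 80, 140, 200, 260, 380]
```

A small difference in skill (60 versus 40) becomes a gap that widens every level: the stronger player snowballs, while the weaker player never earns a power-up and falls further behind.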

The thing that is often confusing to people is that both of these scenarios are positive feedback loops. This seems counterintuitive; the second example seems very “negative,” as the player is doing poorly and getting fewer rewards. It is “positive” in the sense that the effects get stronger in magnitude on each iteration.

There are three properties of positive feedback loops that game designers should be aware of:

1. They tend to destabilize the game, as one player gets further and further ahead (or behind).

2. They cause the game to end faster.

3. They put emphasis on the early game, since the effects of early-game decisions are magnified over time.

Feedback loops usually have two steps (as in my The Guardian Legend example) but they can have more. For example, some Real-Time Strategy games have a positive feedback loop with four steps: players explore the map, which gives them access to more resources, which let them buy better technology, which let them build better units, which let them explore more effectively (which gives them access to more resources… and the cycle repeats). As such, detecting a positive feedback loop is not always easy.
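One practical way to spot a multi-step loop is to write down which mechanic feeds which and look for a cycle. This minimal sketch runs a depth-first search over a hypothetical dependency graph of the RTS loop described above. Finding a cycle only shows that a loop exists; deciding whether it reinforces or balances still takes analysis or playtesting.

```python
def find_cycle(graph):
    """Return one cycle in a directed graph as a list of nodes, or None.

    graph maps each mechanic to the mechanics it feeds into.
    """
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = {node: WHITE for node in graph}
    path = []

    def visit(node):
        color[node] = GREY
        path.append(node)
        for nxt in graph.get(node, []):
            if color.get(nxt, WHITE) == GREY:
                return path[path.index(nxt):] + [nxt]  # back edge closes a loop
            if color.get(nxt, WHITE) == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in graph:
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


rts = {
    "explore map": ["resources"],
    "resources": ["technology"],
    "technology": ["better units"],
    "better units": ["explore map"],
}
print(find_cycle(rts))
# ['explore map', 'resources', 'technology', 'better units', 'explore map']
```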

Here are some other examples of positive feedback loops that you might be familiar with:

* Most “4X” games, such as the Civilization and Master of Orion series, are usually built around positive feedback loops. As you grow your civilization, it lets you generate resources faster, which let you grow faster. By the time you begin conflict in earnest with your opponents, one player is usually so far ahead that it is not much of a contest, because the core positive feedback loop driving the game means that someone who got ahead of the curve early on is going to be much farther ahead in the late game.

* Board games that feature building up as their primary mechanic, such as Settlers of Catan. In these games, players use resources to improve their resource production, which gets them more resources.

* The physical sport Rugby has a minor positive feedback loop: when a team scores points, they start with the ball again, which makes it slightly more likely that they will score again. The advantage is thus given to the team who just gained an advantage. This is in contrast to most sports, which give the ball to the opposing team after a successful score.

Negative feedback loops are, predictably, the opposite of positive feedback loops in just about every way. A negative feedback loop is a balancing relationship. When something happens in the game (such as one player gaining an advantage over the others), a negative feedback loop makes it harder for that same thing to happen again. If one player gets in the lead, a negative feedback loop makes it easier for the opponents to catch up (and harder for a winning player to extend their lead).

As an example, consider a “Kart-style” racing game like Mario Kart. In racing games, play is more interesting if the player is in the middle of a pack of cars rather than if they are way out in front or lagging way behind on their own (after all, there is more interaction if your opponents are close by). As a result, the de facto standard in that genre of play is to add a negative feedback loop: as the player gets ahead of the pack, the opponents start cheating, finding better power-ups and getting impossible bursts of speed to help them catch up. This makes it more difficult for the player to maintain or extend a lead. This particular feedback loop is sometimes referred to as “rubber-banding” because the cars behave as if they are connected by rubber bands, pulling the leaders and losers back to the center of the pack.

Likewise, the reverse is true. If the player falls behind, they will find better power-ups and the opponents will slow down to allow the player to catch up. This makes it more difficult for a player who is behind to fall further behind. Again, both of these are examples of negative feedback loops; “negative” refers to the fact that a dynamic becomes weaker with iteration, and has nothing to do with whether it has a positive or negative effect on the player’s standing in the game.
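The rubber band can be sketched numerically. This sketch swaps the threshold rule for a simpler proportional one (an assumption, not how any particular kart game works): the pack's speed rises by `rubber_band` metres per tick for each metre the player leads, and falls the same way when the player trails. All speeds are hypothetical.

```python
def race(ticks, player_speed=11.0, base_speed=10.0, rubber_band=0.0):
    """Return the player's lead over the pack (metres) after `ticks` ticks.

    Proportional rubber-banding (an assumed model): the pack speeds up when the
    player leads and slows down when the player trails, in proportion to the gap.
    """
    gap = 0.0  # positive = player ahead of the pack, negative = behind
    for _ in range(ticks):
        pack_speed = base_speed + rubber_band * gap
        gap += player_speed - pack_speed
    return gap


print(race(100))                                               # 100.0
print(round(race(100, rubber_band=0.1), 2))                    # 10.0
print(round(race(100, player_speed=9.0, rubber_band=0.1), 2))  # -10.0
```

Without rubber-banding a slightly faster player is 100 m ahead after 100 ticks and still pulling away; with `rubber_band=0.1` the lead settles near 10 m, and a slightly slower player settles near 10 m behind instead of drifting off.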

Negative feedback loops also have three important properties:

1. They tend to stabilize the game, causing players to tend towards the center of the pack.

2. They cause the game to take longer.

3. They put emphasis on the late game, since early-game decisions are reduced in their impact over time.

Some examples of negative feedback loops:

* Most physical sports like Football and Basketball, where after your team scores, the ball is given to the opposing team and they are then given a chance to score. This makes it less likely that a single team will keep scoring over and over.

* The board game Starfarers of Catan has a negative feedback loop where every player with fewer than a certain number of victory points gets a free resource at the start of their turn. Early on, this affects all players and speeds up the early game. Later in the game, as some players get ahead and cross the victory point threshold, the players lagging behind continue to get bonus resources. This makes it easier for the trailing players to catch up.

* My grandfather was a decent Chess player, generally better than the children whom he taught to play. To make it more of a challenge, he invented a rule: if he won a game, next time they played, his opponent could remove a piece of his from the board at the start of the game (first a pawn, then two pawns, then a knight or bishop, and so on as the child continued to lose). Each time my grandfather won, the next game would be more challenging for him, making it more likely that he would eventually start losing.
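The two possession rules can be compared in an abstract simulation. This sketch assumes, hypothetically, that every possession scores with probability 0.5 and that a failed possession always turns the ball over. The only difference between the two runs is who gets the ball after a score: the scorer, as in the Rugby example earlier, or the opponent, as in Football and Basketball. It measures the average final score margin.

```python
import random


def average_margin(scorer_keeps_ball, games=2000, possessions=40, p_score=0.5, seed=7):
    """Average final score margin in an abstract two-team game (hypothetical numbers).

    A possession scores with probability p_score; a failed possession always
    turns the ball over. After a score, the ball either stays with the scorer
    (positive loop) or goes to the opponent (negative loop).
    """
    rng = random.Random(seed)
    total = 0
    for _ in range(games):
        points = [0, 0]
        holder = 0
        for _ in range(possessions):
            if rng.random() < p_score:
                points[holder] += 1
                if not scorer_keeps_ball:
                    holder = 1 - holder  # opponent gets the ball after a score
            else:
                holder = 1 - holder      # turnover after a failed possession
        total += abs(points[0] - points[1])
    return total / games


print(average_margin(scorer_keeps_ball=True))   # wider margins
print(average_margin(scorer_keeps_ball=False))  # closer games
```

With these made-up numbers, letting the scorer keep the ball makes the average margin about twice as large, which is the destabilizing effect of a positive loop; handing the ball to the opponent keeps games closer.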

Use of Feedback Loops

Are feedback loops good or bad? Should we strive to include them, or are they to be avoided? As with most aspects of game design, it depends on the situation. Sometimes, a designer will deliberately add mechanics that cause a feedback loop. Other times, a feedback loop is discovered during play and the designer must decide what (if anything) to do about it.

Positive feedback loops can be quite useful. They end the game quickly when a player starts to emerge as the winner, without having the end game be a long, drawn-out affair. On the other hand, positive feedback loops can be frustrating for players who are trying to catch up to the leader and start feeling like they no longer have a chance.

Negative feedback loops can also be useful, for example to prevent a dominant early strategy and to keep players feeling like they always have a chance to win. On the other hand, they can also be frustrating, as players who do well early on can feel like they are being punished for succeeding, while also feeling like the players who lag behind are seemingly rewarded for doing poorly.

What makes a particular feedback loop “good” or “bad” from a player perspective? This is debatable, but I think it is largely a matter of player perception of fairness. If it feels like the game is artificially intervening to help a player win when they don’t deserve it, it can be perceived negatively by players. How do you know how players will perceive the game? Playtest, of course.

Eliminating Feedback Loops

Suppose you identify a feedback loop in your game and you want to remove it. How do you do this? There are two ways.

The first is to shut off the feedback loop itself. All feedback loops (positive and negative) have three components:

* A “sensor” that monitors the game state;

* A “comparator” that decides whether to take action based on the value monitored by the sensor;

* An “activator” that modifies the game state when the comparator decides to do so.

For example, in the earlier kart-racing negative feedback loop example, the “sensor” is how far ahead or behind the player is, relative to the rest of the pack; the “comparator” checks to see if the player is farther ahead or behind than a certain threshold value; and the “activator” causes the opposing cars to either speed up or slow down accordingly, if the player is too far ahead or behind. All of these may form a single mechanic (“If the player is more than 300 meters ahead of all opponents, multiply everyone else’s speed by 150%”). In other cases there may be three or more separate mechanics that cause the feedback loop, and changing any one of them will modify the nature of the loop.
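The three components can be written as three small functions. This minimal sketch implements the example rule above (“If the player is more than 300 meters ahead of all opponents, multiply everyone else’s speed by 150%”). The `Car` type and function names are hypothetical, and the boost is applied to each opponent's base speed per tick so that it does not compound from tick to tick.

```python
from dataclasses import dataclass


@dataclass
class Car:
    position: float    # metres along the track
    base_speed: float  # metres per tick before any rubber-banding


LEAD_THRESHOLD = 300.0  # "more than 300 meters ahead of all opponents"
BOOST = 1.5             # "multiply everyone else's speed by 150%"


def sensor(player, opponents):
    """Monitor the game state: the player's lead over the best opponent."""
    return player.position - max(car.position for car in opponents)


def comparator(lead):
    """Decide whether to act on the sensed value."""
    return lead > LEAD_THRESHOLD


def activator(opponents, fire):
    """Modify the game state: opponents' effective speeds for this tick."""
    return [car.base_speed * (BOOST if fire else 1.0) for car in opponents]


def opponent_speeds(player, opponents):
    return activator(opponents, comparator(sensor(player, opponents)))


player = Car(position=1000.0, base_speed=20.0)
pack = [Car(position=650.0, base_speed=20.0), Car(position=600.0, base_speed=18.0)]
print(opponent_speeds(player, pack))  # [30.0, 27.0]
```

Because each part is separate, changing the loop means editing exactly one of them: what `sensor` measures, the threshold in `comparator`, or the multiplier in `activator`.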

By being aware of the mechanics causing a feedback loop, you can disrupt the effects by either removing the sensor, changing or removing the comparator, or modifying or removing the effect of the activator. Going back to our The Guardian Legend example (more points = more power-ups for the next level), you could deactivate the positive feedback loop by either modifying the sensor (measure something other than score… something that does not increase in proportion to how powered-up the player is), or changing the comparator (by changing the scores required so that later power-ups cost more and more, you can guarantee that even the best players will fall behind the curve eventually, leading to a more difficult end game), or changing the activator (maybe the player gets power-ups through a different method entirely, such as getting a specific set of power-ups at the end of each level, or finding them in the middle of levels).
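The comparator change can be tried in a toy model. This sketch assumes, hypothetically, that a level's score is `skill × (1 + power_ups)`. Under a flat price of 50 points per power-up the scores keep climbing; under an escalating price (the k-th power-up costs 50 × k points) each extra power-up gets harder to afford. The function names and prices are made up for illustration.

```python
def flat_comparator(score, cost=50):
    """Original rule: every 50 points buys one power-up."""
    return score // cost


def escalating_comparator(score, base_cost=50):
    """Changed rule: the k-th power-up costs base_cost * k points."""
    power_ups, next_cost = 0, base_cost
    while score >= next_cost:
        score -= next_cost
        power_ups += 1
        next_cost += base_cost
    return power_ups


def play_levels(skill, levels, comparator):
    """Toy loop: score = skill * (1 + power_ups); score buys next level's power-ups."""
    power_ups, scores = 0, []
    for _ in range(levels):
        score = skill * (1 + power_ups)
        scores.append(score)
        power_ups = comparator(score)
    return scores


print(play_levels(60, 6, flat_comparator))        # [60, 120, 180, 240, 300, 420]
print(play_levels(60, 6, escalating_comparator))  # [60, 120, 120, 120, 120, 120]
```

The loop is still present under the escalating price, but it stops running away: the scores level off instead of compounding.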

If you do not want to remove the feedback loop from the game but you do want to reduce its effects, an alternative is to add another feedback loop of the opposing type. Again returning to the kart-racing example, if you wanted to keep the “rubber-banding” negative feedback loop, you could add a positive feedback loop to counteract it. For example, if the opposing cars get speed boosts when the player is ahead, perhaps the player can go faster as well, leading to a case where being in the lead makes the entire race go faster (but not giving an advantage or disadvantage to anyone). Or maybe the player in the lead can find better power-ups to compensate for the opponents’ new speed advantage.
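Here is a minimal sketch of adding an opposing loop instead of removing one. It reuses the hypothetical 300-meter threshold and 150% opponent boost. `leader_boost` is an assumed extra parameter for the counteracting positive loop, where 1.0 means that loop is switched off.

```python
def tick_speeds(player_base, opponent_base, lead,
                threshold=300.0, opponent_boost=1.5, leader_boost=1.0):
    """Return (player_speed, opponent_speed) for one tick.

    opponent_boost is the rubber-band (negative) loop; leader_boost is the
    counteracting positive loop that rewards the leader. 1.0 means 'off'.
    """
    ahead = lead > threshold
    player_speed = player_base * (leader_boost if ahead else 1.0)
    opponent_speed = opponent_base * (opponent_boost if ahead else 1.0)
    return player_speed, opponent_speed


print(tick_speeds(20.0, 20.0, lead=350.0))                     # (20.0, 30.0)
print(tick_speeds(20.0, 20.0, lead=350.0, leader_boost=1.5))   # (30.0, 30.0)
print(tick_speeds(20.0, 20.0, lead=350.0, leader_boost=1.25))  # (25.0, 30.0)
```

Matching the opponents' boost cancels the catch-up effect while making the whole race faster; a partial boost such as 1.25 keeps some rubber-banding but weakens it.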

Emergence

Another dynamic that game designers should be aware of is called emergent gameplay (or emergent complexity, or simply emergence). I’ve found this is a difficult thing to describe in my classroom courses, so I would welcome other perspectives on how to teach it. Generally, emergence describes a game with simple mechanics but complex dynamics. “Emergent complexity” can be used to describe any system of this nature, even things that are not games.

Some examples of emergence from the world outside of games:

* In nature, insect colonies (such as ants and bees) show behavior so complex that it seems intelligent enough for us to call it a “hive mind” (an idea many sci-fi authors have happily exploited). In reality, each individual insect is following its own very simple set of rules, and it is only in aggregate that the colony displays complex behaviors.

* Conway’s Game of Life, though not actually a “game” by most of the definitions in this course, is a simple set of sequential rules for simulating cellular life on a square grid. Each cell is either “alive” or “dead” on the current turn, and each cell is adjacent to the eight cells surrounding it (including diagonals). To progress to the next turn, all living cells that are adjacent to zero or one other living cell are killed (from isolation), and living cells adjacent to four or more other living cells are also killed (from overcrowding); all dead cells adjacent to exactly three living cells are “born” and changed to living cells on the next turn; and any cell adjacent to exactly two living cells stays exactly as it is. Those are the only rules. You start with an initial setup of your choice, and then apply the rules turn after turn to see what happens. And yet, you can get incredibly complex behaviors: structures can move, mutate, spawn new structures, and any number of other things.
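One reason the Game of Life makes such a good illustration is how little code it takes. Below is a minimal Python sketch. It assumes an unbounded grid stored as a set of live (x, y) coordinates and the eight-cell neighborhood described above. The function name `life_step` is illustrative.

```python
from collections import Counter

Cell = tuple[int, int]

def life_step(live: set[Cell]) -> set[Cell]:
    """Apply one turn of Conway's rules to the set of living cells."""
    # For every cell next to at least one living cell, count its living neighbors.
    neighbor_counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Born with exactly 3; a living cell survives with 2 or 3; all others are dead.
    return {
        cell for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }

# A "glider": five cells that, under these rules alone, travel across the board.
glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}
board = glider
for _ in range(4):
    board = life_step(board)
print(board == {(x + 1, y + 1) for x, y in glider})  # same shape, shifted diagonally
```

Nothing in the rules mentions movement. The glider's travel is purely emergent.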

* The Boids algorithm is a way to simulate crowd and flocking behavior that is used in some CG-based movies as well as games. There are only three simple rules that each individual in a flock must follow. First, if there are a lot of your companions on one side of you and few on the other, you’re probably at the edge of the flock, so move towards your companions. Second, if you are close to your companions, give them room so you don’t crowd them. Third, adjust your speed and direction to match the average of your nearby companions. From these three rules you can get some pretty complex, detailed and realistic crowd behavior.
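The three rules translate almost line for line into code. The Python sketch below is a deliberately naive version: it checks every pair of boids, so it is O(n²). The neighbor radius, the "too close" distance and the three weights are arbitrary assumptions chosen for illustration, not canonical values.

```python
import math
from dataclasses import dataclass

@dataclass
class Boid:
    x: float
    y: float
    vx: float
    vy: float

def boids_step(flock: list[Boid], radius: float = 10.0, too_close: float = 2.0,
               cohesion: float = 0.01, separation: float = 0.05,
               alignment: float = 0.125) -> list[Boid]:
    """Advance every boid one tick, using only the three local rules."""
    updated = []
    for b in flock:
        near = [o for o in flock
                if o is not b and math.hypot(o.x - b.x, o.y - b.y) < radius]
        vx, vy = b.vx, b.vy
        if near:
            n = len(near)
            # Rule 1 (cohesion): steer toward the center of nearby companions.
            vx += (sum(o.x for o in near) / n - b.x) * cohesion
            vy += (sum(o.y for o in near) / n - b.y) * cohesion
            # Rule 2 (separation): push away from companions that are too close.
            for o in near:
                if math.hypot(o.x - b.x, o.y - b.y) < too_close:
                    vx -= (o.x - b.x) * separation
                    vy -= (o.y - b.y) * separation
            # Rule 3 (alignment): nudge velocity toward the neighbors' average.
            vx += (sum(o.vx for o in near) / n - b.vx) * alignment
            vy += (sum(o.vy for o in near) / n - b.vy) * alignment
        updated.append(Boid(b.x + vx, b.y + vy, vx, vy))
    return updated
```

Each boid reads only the previous tick's flock, so the update order does not matter. Run `boids_step` repeatedly on a few dozen randomly placed boids and loose flocks tend to form, though no rule mentions a flock.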

Here are some examples of emergent gameplay:

* In fighting games like the Street Fighter or Tekken series, “combos” arise from the collision of several simple rules: connecting with certain attacks momentarily stuns the opponent so that they cannot respond, and other attacks can be executed quickly enough to connect before the opponent recovers. Designers may or may not intentionally put combos in their games (the earliest examples were not intended, and indeed were not discovered until the games had been out for a while), but it is the mechanics of stunning and attack speed that create complex series of moves that are unblockable after the first move in the series connects.
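The combo dynamic can be reproduced from just two numbers per attack. This Python sketch assumes hypothetical frame data: startup frames before an attack hits, and hitstun frames the opponent is frozen afterwards. Its link test is simplified and ignores the attacker's own recovery frames. It then searches for chains in which every hit lands before the opponent recovers. No rule in it mentions combos, yet they fall out of the search.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class Attack:
    name: str
    startup: int  # frames before the attack can hit
    hitstun: int  # frames the opponent cannot act after being hit

def links(first: Attack, second: Attack) -> bool:
    # Simplification: assumes the next move can start as soon as the first one
    # hits (real games also account for the attacker's recovery frames).
    return second.startup < first.hitstun

def find_combos(moves: list[Attack], length: int) -> list[tuple[str, ...]]:
    """Every sequence of `length` moves where each hit lands before recovery."""
    return [
        tuple(m.name for m in chain)
        for chain in product(moves, repeat=length)
        if all(links(a, b) for a, b in zip(chain, chain[1:]))
    ]

# Hypothetical frame data for three moves.
moves = [Attack("jab", 3, 12), Attack("sweep", 8, 20), Attack("heavy", 15, 10)]
print(find_combos(moves, 2))
```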

* In the sport of Basketball, the concept of “dribbling” was not explicitly part of the rules. As originally written, the designer had intended the game to be similar to how Ultimate Frisbee is played: the player with the ball is not allowed to move, and must either throw the ball towards the basket (in an attempt to score), or “pass” the ball to a teammate (either through the air, or by bouncing it on the ground). There was simply no rule that prevented a player from passing to himself.

* Book openings in Chess. The rules of this game are pretty simple, with only six different piece types and a handful of special-case moves, but a set of common opening moves has emerged from repeated play.

Why do we care about emergent dynamics? It is often desired for practical reasons, especially in the video game world, because you can get a lot of varied and deep gameplay out of relatively simple mechanics. In video games (and to a lesser extent, board games) it is the mechanics that must be implemented. If you are programming a video game, emergent gameplay gives you a great ratio of hours-of-gameplay to lines-of-code. Because of this apparent cost savings, “emergence” as a buzzword was all the rage a few years ago, and I still hear it mentioned from time to time.

It’s important to note that emergence is not always planned for, and for that matter it is not always desirable. Here are two examples of emergence, both from the Grand Theft Auto series of games, where unintended emergent gameplay led to questionable results:

* Consider these two rules. First, running over a pedestrian in a vehicle causes them to drop the money they are carrying. Second, hiring a prostitute refills the player’s health, but costs the player money. From these two unrelated rules, we get the emergent strategy that has been affectionately termed the “hooker exploit”: sleep with a prostitute, then run her over to regain the money you spent. This caused a bit of a scandal in the press back in the day, from people who interpreted this dynamic as an intentional design that glorified violence against sex workers. Simply saying “it’s emergent gameplay!” is not sufficient to explain to a layperson why this was not intentional.

* Perhaps more amusing was the combination of two other rules. First, if the player causes damage to an innocent bystander, the person will (understandably) defend themselves by attacking the player. Second, if a vehicle has taken sufficient damage, it will eventually explode, damaging everything in the vicinity (and of course, nearly killing the driver). These led to the following highly unrealistic scenario: a player, driving a damaged vehicle, crashes near a group of bystanders. The car explodes. The player crawls from the wreckage, barely alive… until the nearby crowd of “Samaritans” decides that the player damaged them from the explosion, and they descend in a group to finish the player off!

As you can see, emergence is not always a good thing. More to the point, it is not necessarily cheaper to develop a game with emergent properties. Because of the complex nature of the dynamics, emergent games require a lot more playtesting and iteration than games that are more straightforward in their relationships between mechanics and dynamics. A game with emergence may be easier to program, but it is much harder to design; there is no cost savings, but rather a shift in cost from programmers to game designers.

From Emergence to Intentionality

Player intentionality, the concept from Church’s Formal Abstract Design Tools mentioned earlier in this course, is related in some ways to emergence. Generally, you get emergence by having lots of small, simple, interconnected systems. If the player is able to figure out these systems and use them to form complicated chains of events intentionally, that is one way to have a higher degree of player intention.

Additional Reading

* Designing to Promote Intentional Play by Clint Hocking. This was a lecture given live at GDC in 2006, but Clint has kindly made his PowerPoint slides and speaker notes publicly available for download from his blog. It covers the concept of player intentionality and its relation to emergence, far better than I can cover here. The link goes to a Zip file that contains a number of files inside it; start with the PowerPoint and the companion Word doc, and the presentation will make it clear when the other things like the videos come into play. I will warn you that, like many video game developers, Clint tends to use a lot of profanity; also, the presentation opens with a joke about Jesus and Moses. It may be best to skip this one if you are around people who are easily offended by such things.

Lessons Learned

The most important takeaway from today is that game design is not a trivial task. It is difficult, mainly because of the nature of MDA. The designer creates rules, which create play, which creates the player experience. Every rule created has a doubly indirect effect on the player, and this is hard to predict and control. This also explains why making one small rules change in a game can have ripple effects that drastically alter how the game is played. And yet, a designer’s task is to create a favorable player experience.

This is why playtesting is so important. It is the most effective way to gauge the effects of rules changes when you are uncertain.

Homeplay

Today we will practice iterating on an existing design, rather than starting from scratch. I want you to see first-hand the effects on a game when you change the mechanics.

Here are the rules for a simplified variant of the dice game called Bluff (also called Liar’s Dice, but known to most people as that weird dice game that they played in the second Pirates of the Caribbean movie):

* Players: 2 or more, best with a small group of 4 to 6.

* Objective: Be the last player with any dice remaining.

* Setup: All players take 5 six-sided dice. It may also help if each player has something to hide their dice with, such as an opaque cup, but players may just shield their dice with their own hands. All players roll their dice, in such a way that each player can see their own dice but no one else’s. Choose a player to go first. That player must make a bid:

* Bids: A “bid” is a player’s guess as to how many dice are showing a certain face, among all players. Dice showing the number 1 are “wild” and count as all other numbers. You cannot bid any number of 1s, only 2s through 6s. For example, “three 4s” would mean that among all players’ dice, there are at least three dice showing the number 1 or 4.

* Increasing a bid: To raise a bid, the new bid must be higher than the previous. Increasing the number of dice is always a higher bid, regardless of rank (nine 2s is a higher bid than eight 6s). Increasing the rank is a higher bid if the number of dice is the same or higher (eight 6s is a higher bid than eight 5s, both of which are higher than eight 4s).

* Progression of Play: On a player’s turn, that player may either raise the current bid, or if they think the most recent bid is incorrect, they can challenge the previous bid. If they raise the bid, play passes to the next player in clockwise order. If they challenge, the current round ends; all players reveal their dice, and the result is resolved.

* Resolution of a round: If a bid is challenged but found to be correct (for example, if the bid was “nine 5s” and there are actually eleven 1s and 5s among all players, so there were indeed at least nine of them), the player who challenged the bid loses one of their dice. On subsequent rounds, that player will then have fewer dice to roll. If the bid is challenged correctly (suppose on that bid of “nine 5s” there were actually only eight 1s and 5s among all players), the player who made the incorrect bid loses one of their dice instead. Then, all players re-roll all of their remaining dice, and play continues with a new opening bid, starting with the player who won the previous challenge.

* Game resolution: When a player has lost all of their dice, they are eliminated from the game. When all players (except one) have lost all of their dice, the one player remaining is the winner.
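The bidding and challenge rules are precise enough to express directly in code. Doing so can be a handy way to check that a rules variant is unambiguous before you playtest it. Below is a minimal Python sketch. The names `Bid`, `is_higher`, `count_matching` and `loser_of_challenge` are hypothetical. Turn order, re-rolling and elimination are left out.

```python
from dataclasses import dataclass

WILD = 1  # 1s count as every other face

@dataclass(frozen=True)
class Bid:
    quantity: int  # how many dice
    face: int      # which face, 2 through 6

    def __post_init__(self) -> None:
        if not 2 <= self.face <= 6:
            raise ValueError("bids must name a face from 2 to 6; 1s are wild")
        if self.quantity < 1:
            raise ValueError("a bid must name at least one die")

def is_higher(new: Bid, old: Bid) -> bool:
    """More dice always wins; with equal quantity, the higher face wins."""
    return (new.quantity, new.face) > (old.quantity, old.face)

def count_matching(hands: list[list[int]], face: int) -> int:
    """Count dice showing `face` or a wild 1, across every player's hand."""
    return sum(1 for hand in hands for die in hand if die in (face, WILD))

def loser_of_challenge(bid: Bid, bidder: str, challenger: str,
                       hands: list[list[int]]) -> str:
    """Return the player who loses a die when `bid` is challenged."""
    bid_was_correct = count_matching(hands, bid.face) >= bid.quantity
    return challenger if bid_was_correct else bidder

hands = [[1, 4, 4, 2, 6], [3, 5, 4, 1, 1]]
print(is_higher(Bid(9, 2), Bid(8, 6)))                      # True: more dice wins
print(loser_of_challenge(Bid(7, 4), "Ann", "Bo", hands))    # only six 1s/4s exist
```

Comparing `(quantity, face)` tuples encodes both “increasing a bid” rules in a single comparison, because Python orders tuples lexicographically.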

If you don’t have enough dice to play this game, you can use a variant: dealing cards from a deck, for example, or drawing slips of paper numbered 1 through 6 out of a container with many such slips of paper thrown in.

If you don’t have any friends, spend some time finding them. It will make it much easier for you to playtest your projects later in this course if you have people who are willing to play games with you.

At any rate, your first “assignment” here is to play the game. Take particular note of the dynamics and how they emerge from the mechanics. Do you see players bluffing, calling unrealistically high numbers in an effort to convince their opponents that they have more of a certain number than they actually do? Are players hesitant to challenge, knowing that any challenge is a risk and it is therefore safer to not challenge as long as you are not challenged yourself? Do any players calculate the odds, and use that information to influence their bid? Do you notice any feedback loops in the game as play progresses – that is, as a player starts making mistakes and losing dice, are they more or less likely to lose again in future rounds, given that they receive fewer dice and therefore have less information to bid on?
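For players who do calculate the odds, the arithmetic is a binomial tail. This sketch assumes fair dice and ignores whatever the other players’ bids reveal. Under those assumptions, each die you cannot see matches a bid on a given face with probability 2/6 = 1/3: it shows either that face or a wild 1. Let u be the number of hidden dice and let "need" be the bid quantity minus the matching dice in your own hand. Then the bid is true with probability sum over i from need to u of C(u, i) (1/3)^i (2/3)^(u−i). The function name below is hypothetical.

```python
from fractions import Fraction
from math import comb

def prob_bid_true(quantity: int, face: int, my_dice: list[int],
                  hidden_dice: int) -> Fraction:
    """Chance that at least `quantity` dice show `face` (or a wild 1) overall."""
    need = quantity - sum(1 for d in my_dice if d in (face, 1))
    if need <= 0:
        return Fraction(1)          # your own hand already makes the bid true
    p = Fraction(1, 3)              # a hidden die shows `face` or a 1: 2 of 6 sides
    return Fraction(sum(
        comb(hidden_dice, i) * p**i * (1 - p) ** (hidden_dice - i)
        for i in range(need, hidden_dice + 1)
    ))

# "One 4" when you see none of the dice and two are hidden: 1 - (2/3)^2 = 5/9.
print(prob_bid_true(1, 4, [], 2))
```

The smaller your own hand, the more of the table falls into `hidden_dice`. That gap is the reduced information the question above refers to.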

Okay, that last question kind of gave it away – yes, there is a positive feedback loop in this game. The effect is small, and noticeable mostly in an end-game situation where one player has three or more dice and their one or two remaining opponents only have a single die. Still, this gives us an opportunity to fiddle with things as designers.

Your next step is to add, remove, or change one rule in order to remove the effect of the positive feedback loop. Why did you choose the particular change that you did? What do you expect will happen – how will the dynamics change in response to your modified mechanic? Write down your prediction.

Then, play the game again with your rules modification. Did it work? Did it have any other side effects that you didn’t anticipate? How did the dynamics actually change? Be honest, and don’t be afraid if your prediction wasn’t accurate. The whole point of this is so you can see for yourself how hard it is to predict gameplay changes from a simple rules change, without actually playing.

Next, share what you learned with the community. I have created a new page on the course Wiki. On that page, write the following:

1. What was your rules change?

2. How did you expect the dynamics of the game to change?

3. How did they really change?

You don’t need to include much detail; a sentence or two for each of the three points is fine.

Finally, your last assignment (this is mandatory!) is to read at least three other responses. Read the rules change first, and without reading further, ask yourself how you think that rule change would modify gameplay. Then read the other person’s prediction, and see if it matches yours. Lastly, read what actually happened, and see how close you were.

You may leave your name, or you may post anonymously.

Mini-Challenge

Take your favorite physical sport. Identify a positive or negative feedback loop in the game. Most sports have at least one of these. Propose a rule change that would eliminate it. Find a way to express it in fewer than 135 characters, and post to Twitter with the #GDCU tag. You have until Thursday. One sport per participant, please! (Source: gamedesignconcepts)

闽公网安备35020302001549号
