万字长文,关注游戏的平衡设定及其与公平实现的关联探讨,上篇

篇目1,若游戏向玩家呈现的众多选择都具有可行性,那么其就具有平衡性——尤其是在资深玩家的高级别体验中。

——Sirlin,2001年12月

若资深玩家已研究、练习游戏几年、几十年或几百年,游戏对其而言依然具有策略趣味性,那么此多人游戏就颇具深度。

——Sirlin,2002年1月

此平衡性的定义非常到位,但可行选择背后依然隐藏2个概念。一方面,我的意思是游戏不会退化到只有一个策略,另一方面,我的意思是说战斗游戏具有众多人物选择,或即时战略游戏具有众多竞赛选择,且其中的多数人物/竞赛都具有可行性。我们姑且将第一、二个概念分别称作可行选择和初始选择的公平性(游戏邦注:简称公平性)。

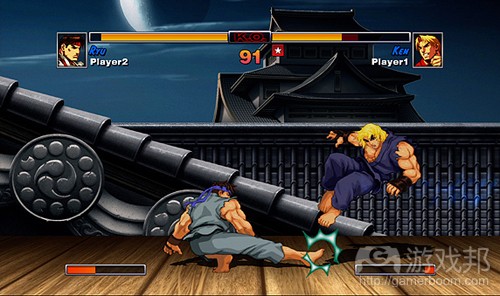

Street Fighter HD Remix(from sirlin)

可行性选择:向玩家呈现众多富有意义的选择。出于深度考虑,他们通常基于特定背景,令玩家能够运用策略做出这些决策。

公平性:技能相同的玩家具有相同的获胜机会,即便他们可能基于系列不同选择/动作/人物/资源等开始游戏。

可行性选择

我们需要在玩法中向玩家呈现系列可行选择,这就是Sid Meier所说的游戏由系列有趣决策构成。

若某熟练玩家能够持续通过某操作或策略打败其他资深玩家,那么这款游戏就缺乏平衡性,因为其中缺乏足够可行选择。这类游戏也许具有众多选择,但我们只关心那些富有趣味的内容。若众多选择只达到同个目的,或无所收获,或输给上述主导操作,那么它们就不是有意义的选择。它们阻碍内容,给游戏带来糟糕复杂性:令游戏难以掌握,失去趣味复杂性。

出于深度考虑,我们希望玩家能够基于某些原则决定这些富有意义的选择。若手边游戏是单回合剪刀石头布游戏,玩家就没有理由优先选择某种出法,所以我们很难在此融入策略。但《街头霸王》类的游戏能够基于瞬间决策:你决定阻塞、投掷或升龙拳,或者《Magic: the Gathering》类的游戏能够基于单一决策:是否采用反制法术。这些例子乍看之下同剪刀石头布模式类似,但基于比赛情境的决策存在众多细微差别,其中各玩家面对众多关乎未来举措的线索。在《街头霸王》和《Magic》中,玩家能够基于某原则优先进行某操作,我们希望游戏包含不止一个的可行选择。

关于深度,我们希望富有意义的决策能够基于对手的操作。不妨想象下《星际争霸》做出这样的调整:玩家无法进行互相攻击。他们所能进行的操作就是花5分钟创建自己的基地,然后基于所创建内容计算分数。这款游戏包含众多决策,存在系列获胜路线,但由于这些决策纯粹关乎优化问题(游戏邦注:更像是解决谜题,而非体验游戏),因此造就的是款肤浅的竞争性游戏。幸运的是,在实际的《星际争霸》中:玩家决定创建内容时需考虑对手会建造什么。

虽然我们认为游戏应融入大量可行选择方能算具有平衡性,但需创造玩家决策情境及决策要涉及对手操作的要求主要同深度相关。但这些内容颇值得特别说明,因为在我们平衡游戏的同时应试着提高游戏深度,而非降低此标准。

公平性

这里的公平性指的是所有玩家都具有相同获胜机会,虽然他们可能会基于不同选择开始游戏。在《街头霸王》中,各角色具有不同运动方式,在《星际争霸》中各竞赛具有不同单元,在《魔兽世界》中,各角逐团队具有不同级别、技能和工具。所有这些不同选择组合应存在公平性。

我要特别指出的是,我只是谈论游戏开始时玩家所面临的选择。这是不容忽视的差异。在游戏开始后呈现的选择无需具有公平性。不妨想象下这样的第一人称射击游戏:8种武器在地图上的各位置生成,其中2种非常杰出,3种还行但算不上一流,剩余3种非常糟糕,但比2款一流武器中的某款强大。

这是否就是理论角度的游戏平衡性?也许就是如此,其符合目前所述的所有标准。游戏设计师需确保所有武器具有相同威力,但只要各武器在正确情境中依然属于可行选择,他就无需考虑这点。此构思不错:设置2个玩家争相角逐的武器,若干还不错的中等武器,以及某些主要让玩家对抗强大武器的薄弱武器。决定控制地图哪部分位置涉及众多策略元素,而何时转变武器则取决于对手的操作。

相反,基于此方案设计的8角色战斗游戏就缺乏平衡性,因为无法满足公平性标准。玩家在游戏开始前决定战斗游戏的角色,但他们在玩法中选择第一人称射击游戏模式的武器。玩家所处角色劣于对手角色属于不公平情况。

让玩家基于不同选择开始的游戏很难获得平衡,因为他们需要让那些选择存在公平性,同时在玩法中呈现众多可行选择。

对称&非对称游戏

所谓的对称游戏是指所有玩家基于系列相同选择开始游戏。所谓的非对称游戏是指玩家基于系列不同选择开始游戏。不妨将此术语看作某区间,而非两个端点。

在区间左侧,具有代表性的游戏包括国际象棋。在国际象棋中,双方都基于16颗棋子开始游戏,唯一的差别是白棋优先。由于存在不同起始条件,我们很难说国际象棋具有100%对称性,但其非常接近此标准。若国际象棋是你看过的唯一一款游戏,你也许会觉得黑白双方的体验方式完全不同;白棋控制节奏,而黑棋做出相应反应。有许多书籍专门介绍黑棋一方如何进行体验。若将范围锁定全球范围的众多游戏,我们会发现国际象棋的双方同《星际争霸》的两种竞赛,《街头霸王》的两种角色或《Magic: The Gathering》的两种牌组相比则相似度很高,很难进行区分。

游戏的初始条件越丰富,其就越接近区间的右边。所以这里的非对称性是用于衡量游戏初始条件的多样性。这并非什么严密科学,所以我们就无法通过什么特定公式确定游戏在此区间所处的位置,但这是非常通俗易懂的概念。

下面就来看看几个例子。《星际争霸》包含3种不同竞赛,所以它靠近区间的右侧。也就是说,虽然3种竞赛各不相同,但其数量不算多,我们不应将其放至非常左边。战斗游戏能够融入众多体验方式各异的角色,倾向融入比其他多人竞赛游戏更多的非对称元素。


也就是说,单人战斗游戏的非对称性变化幅度很大。例如,《VR战士》是款非常杰出和深刻的战斗游戏,但其角色的丰富性相比其他战斗游戏就低很多。相比《街头争霸》(游戏邦注:其中某些角色具有能够延伸至整个屏幕的导弹或武器,或者能够飞行环绕整个球场),《VR战士》的角色模版都非常相似。再来就是《罪恶装备》,你也许从未听过这款战斗游戏,其丰富性胜过我所知晓的所有同类作品。某角色能够创造复杂形式的台球,另一角色能够同时控制两个角色,而另一角色则具有有限数量的硬币,这能够强化角色的其他操作,同时解锁强化操作的奇怪漂浮薄雾。仿佛所有角色都来自不同游戏,但它们却能够公平地进行竞争。《罪恶装备》始终处在区间的右侧,因为它包含不同起始选择(角色) ,而且选择量非常大(超过20)。

《Magic: The Gathering》在构造格式(其中玩家将预先制作好的牌组带入比赛中)上也相当不对称。适合牌组的丰富性非常惊人,比赛通常包含各种威力相当的不同牌组,虽然其玩法各不相同。

第一人称射击游戏倾向朝对称一侧靠拢,通常在开始时向玩家提供相同选择,除生成地点外。记住在《军团要塞 2》的玩法中,玩家可选择不同武器,甚至改变级别,这算不上非对称的体现。此外,双方具有非对称目标的第一人称射击游戏通常会转换玩家位置,让他们在其他回合中角色互换,这样整体比赛才具有对称性。

我们已描述若干游戏在上述区间的粗略位置,记住这并非衡量游戏质量的方式。若你最喜欢的游戏出现在左边(对称一侧),这并不意味着这些游戏不好。若你喜欢《星际争霸》 胜过《罪恶装备》,你无需因《罪恶装备》“更不对称”而感到沮丧。此区间旨在让我们获悉游戏起始选择的差异性,而非游戏深度或趣味。

无论游戏出现在此区间的哪个位置,其依然需要提供众多在玩法中起到平衡作用的可行选择。除此之外,游戏越靠近区间图的左侧,就越需要平衡不同起始选择的公平性。

那么,我们要如何确保游戏玩法中有足够的可行性选择呢?

Yomi Layer 3

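In competitive multiplayer games, the worst case is a dominant move (Gamerboom note: or a dominant weapon, character, unit, and so on). By a dominant move I do not mean one that is merely good; I mean one that is strictly better than every alternative, so that its existence reduces the game's strategy. A dominant move very likely has no counter either, so even when the opponent knows exactly what you are about to do, there is nothing they can do about it.

To guard against dominant moves, keep the concept of Yomi Layer 3 in mind. "Yomi" is Japanese for "reading", as in reading the opponent's mind (Gamerboom note: it is also the name of a strategy card game by the author). If you have a strong move and use it to beat less skilled opponents, I call that "Yomi Layer 0": neither player is even trying to guess what the other will do. Layer 1 is the opponent noticing your move and countering it. Layer 2 is you countering their counter. Layer 3 is them countering your counter.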

以上内容似乎听起来让人很难理解,但是这在真正的游戏玩法中显得极为直观易懂。其意思就是,你和你的对手双方都有两个选择:

你:优秀的操作和Layer 2对抗

对手:针对你优秀操作的对抗和针对你Layer 2对抗的对抗

通常情况下,设计师无需设计Yomi Layer 4,因为到这个时候,你可以回到循环起点,展开最初始阶段的优秀操作。举个我在《街头霸王HD混合版》中创造出的Yomi Layer 3为例。

本田想要通过他的冲击操作靠近肯,但是肯扔个火球来对抗这种情况。我设计本田有能力用冲击来抵抗这些火球,但是只使用冲刺并不能使之移动很长距离。如果本田能够用招数 抵消火球并且还能靠近对手,这对他来说很有好处。对于这种情况,肯的应对方法是不首先抛出火球,放任本田做出冲刺。在本田向前飞行的过程中,肯可以向前移动,在冲刺冷 却时用扫腿击中他。

本田–移动较长距离的冲击和抵消火球的冲刺

肯–火球和向前移动并扫腿

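I did not need to add anything to build a Yomi Layer 4, because Honda can answer Ken's walk-forward sweep with his original full-screen charge. In competitive games, Layer 4 usually takes care of itself this way, by looping back to the original move.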

这个概念提醒我们,操作需要存在对抗方式。如果你知道对手即将采取何种动作,通常情况下你应当有某些应对方法。在你的游戏开发过程中,应当不断询问自己,各种游戏玩法 情境是否能够支持Yomi Layer 3想法。如果答案是否定的,那么肯定存在某些主导操作,这会影响到游戏的质量。
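The Honda versus Ken example can be written down as a counter table and checked mechanically. The sketch below is only an illustration: the move names and the `BEATEN_BY` table are hypothetical labels for the four options described above, not data from the game.

```python
# Hypothetical counter table for the Honda vs. Ken example.
# Each key is a move; each value is the set of opposing moves that beat it.
BEATEN_BY = {
    "honda_long_charge": {"ken_fireball"},
    "ken_fireball": {"honda_fireball_cancel_dash"},
    "honda_fireball_cancel_dash": {"ken_walk_forward_sweep"},
    "ken_walk_forward_sweep": {"honda_long_charge"},
}


def uncountered_moves(beaten_by):
    """Return moves that nothing beats (candidates for a dominant move)."""
    return sorted(move for move, counters in beaten_by.items() if not counters)


def yomi_chain(beaten_by, start, depth):
    """Follow one counter per layer, starting from `start`, for `depth` layers."""
    chain = [start]
    for _ in range(depth):
        counters = sorted(beaten_by[chain[-1]])
        if not counters:
            break  # nothing beats this move, so the chain stops here
        chain.append(counters[0])
    return chain


chain = yomi_chain(BEATEN_BY, "honda_long_charge", 4)
print(" -> ".join(chain))
print("uncountered:", uncountered_moves(BEATEN_BY))
```

If `uncountered_moves` ever returns a non-empty list for a situation in your own game, that situation does not support Yomi Layer 3 and deserves a closer look.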

局部平衡与整体平衡

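Remember that fairness, as I defined it, means equal overall chances of winning despite different starting options. Fairness is a global term: it applies to the whole game, from the start until one player wins. Local situations, meaning particular moments within a game, do not need to be fair. Even a symmetric game like chess has unfair situations: when you have 3 pieces left and your opponent has 9, that situation is unfair to you. Or in StarCraft, 2 Zealots might beat or lose to 8 Zerglings; either outcome can happen and is normal, even though both forces cost exactly the same resources to produce. We do not care whether situations like these are fair. We care only whether Protoss versus Zerg as a whole is fair.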

将军情境意味着一方玩家几乎已经获得胜利,即便游戏还未结束。比如在《超级街头霸王2》中,如果本田向位于角落的古烈施放能够致人死地的Ochio Throw,随后他可以连上一 系列操作(游戏邦注:包括用上更多的Ochio Throw),事实上他的前一个操作已经确保自己能够获得胜利。玩家操作失误可能会改变结果,但是只要你见到了首个操作,就能意识到可能会进入将军情境。

将军情境的存在是否合理呢?它明显违背了我们提出的多种可行操作的需求(游戏邦注:此时本田只有1个选择,而古烈根本没有选择)。它显然违背了Yomi Layer 3概念。然而, 将军情境的存在是合理的。在这种比赛中,本田要靠近古烈非常困难。如果实现了目标,都应当获得此等奖励。在将军情境发生之前的所有游戏过程都是很公平的,即便本田在靠近对手之后可以施放此等必杀招数,但是从整体上来说比赛优势还是更倾向于古烈。

但是,我还想指出这个争论另一个方面的内容。有些玩家认为即便古烈在这场比赛中占有优势,但是本田能够重复施放Ochio Throw的能力实在过于不合理。他们表示本田的确需要这个招数来获得胜利,但是游戏整体还应当设计得更好些,不应如此极端。只要设计本田能够更加容易地靠近古烈,那么就可以取消这种将军情境。

我想起暴雪游戏设计副总裁Rob Pardo曾在游戏开发者大会多人游戏平衡演讲中回应过这个观点。他表示,即时战略游戏中设置“超级武器”往往是个糟糕的想法。它们让受害者认为他们已经无法挽回局面(游戏邦注:也就是进入将军情境)。他解释称,尽管《星际争霸》中人族的原子弹看似超级武器,但是其有许多内在的缺陷:幽灵单位必须靠近受害者的基地,受害者的基地上会出现红色的目标点,受害者会被提前10秒告知,使他有足够的时间摧毁幽灵来防止受原子弹袭击。

Pardo提出了个较好的观点,这足以应对那些抱怨本田的玩家。虽然我认为将军情境是合理的,但是换我来做决定时,我移除了《街头霸王HD混合版》中的本田将军情境。在这款游戏中,我设置其能够更容易地靠近古烈,将他的将军情境替换为Yomi Layer 3情境,这样整个比赛过程中就会有更多的可行决定。

无用情境

无用情境很像将军情境,但是有个不同之处,那就是时间。本田的将军情境需要大概3秒钟的时间就可以结束。但是,让我们考虑战斗游戏《Marvel vs. Capcom 2》中的类似情境。在这款游戏中,每个玩家都可以组建拥有3个角色的队伍,一个角色上场战斗,另外两个角色待命。玩家可以在任意时刻呼叫待命角色来援助战斗角色,让他们与自己的主角色并肩作战。或者更好的做法时,两个角色的攻击错开,这样每次攻击都会在另一个角色攻击的冷却时间进行。

当某个玩家只剩下最后一个角色时,他就无法再寻求援助。他必须用仅剩的这个角色与对手的两或三个角色战斗,这似乎也算是将军情境。问题在于,这种比赛的结束需要耗费相当长的时间,所以我们将游戏的最后部分称为无用部分。其他战斗游戏的高潮会持续到比赛最后一刻,但是无用部分的存在意味着游戏的真正高潮点出现在比赛中期的某个时间,随后输家会被迫去完成这个毫无意义的比赛,旁观者也会对接下来的比赛失去兴趣。确实,在极少的情况下可能会出现翻盘,但是没有无用结局的游戏中也会出现翻盘的情况,所 以这并非有说服力的论据。

虽然将军情境的存在是合理的,但是你在游戏设计中应当避免时间过长的无用结局。象棋和《星际争霸》中都有令人不悦的资产,玩家往往会在游戏结束之前就投降。这些游戏显示出,含有无用结局并非很可怕的事情(游戏邦注:象棋和《星际争霸》都是很受玩家欢迎的游戏),但是作为设计师,你还是应当全力避免这种情况的产生。

探索设计空间

设计空间指你可以在游戏中做出的所有设计决定。无论你的游戏是对称的还是非对称的,让游戏尽可能地触及设计空间的各个角落总是个不错的想法。这可以让游戏更有深度,也可以避免主导操作的出现。

比如,在我设计的虚拟卡牌游戏《Kongai》中,每个角色有4个操作。当操作进行时,有一定的几率触发效果。我们为角色的4种不同操作设定了不同的伤害、速度和能量消耗。如果我们止步于此的话,游戏可能就失去变得更为多样化的机会,而且也有可能出现某些主导操作,减少了可行选择的数量。因而,我尝试尽可能地探索设计空间。有个操作可以将战斗范围由近变远,这通常适宜在攻击阶段前使用。有个操作造成的伤害足以杀死游戏中的每个角色,但是你用此操作攻击某个角色后4个回合才能产生效果。有个操作可以攻击到 那些脱离战斗的角色,虽然脱离战斗可以抵挡住几乎所有的攻击。

要点在于,我们对设计空间探索得越深,玩家就越难以权衡操作的相对价值。玩家可能会产生如下疑问:是要选择有90%的几率在战斗期间改变攻击范围的操作,还是要选择有95% 的几率攻击到隐身角色的操作呢?这种选择很难,需要根据众多因素来做出决定。这算是种优秀的设计,因为它意味着在某些情境下,每种操作都可能会发挥作用,而玩家的技能就在于发现做出操作的合适时机。我将此称为技能评估。

玩家也希望你能够探索设计空间。如果游戏中的所有内容都过于简单,游戏看上去就不够丰富精彩。你呈现出的微妙之处和不同选择越多,玩家就越有可能展现出自己独特的玩法 风格。

分清良莠

以下是Strunk & White的《The Elements of Style》中我最喜欢的内容:

删除无用的内容

强大的作品往往是简明扼要的。句子中不应当包含有多余的字词,段落中不应当包含有多余的句子,绘图中同样不应当包含有多余的线条,机器中也不应当包含有多余的零件。这 并非要求作者写出的全是短句子,或者说省略所有的细节并以提纲的方式列举出所有的主题内容,而是要求每个字词都能够发挥作用。

你应当以同样的方式来对待游戏设计。探索设计空间是应当的,但是需要避开无用的字词、机制、角色和选择。可行选择的首要目标是确保能够给予玩家足够数量的选择,其次要 目标是要消除所有的无用选择。

《Marvel vs. Capcom 2》中有54个角色,多得显得有点可笑。多少数量的角色合适呢?我的意见是10个。如果战斗游戏中的10个角色能够实现平衡,这无疑算是成功,因为这是很 困难的事情。将上述情况与《超级街头霸王2》的16个角色和《罪恶装备》的23个角色相比,你就可以发现何为真正的紧凑设计。

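One genre is notorious for deliberately creating large numbers of useless options: collectible card games. Although I think Magic: The Gathering (Gamerboom note: "MTG" below) is one of the best-designed games in the world, I judge it by the number of good cards its tournament environment can support, not by the ratio of useful to useless options. Judged by the latter, the game would count as a complete failure.

MTG's Mark Rosewater defends the practice by saying that bad cards are created on purpose for design reasons, but his argument is misled by the marketing department's point of view. Rosewater claims bad cards may exist because:

1. They carry interesting experimental mechanics.

2. They test players' card-evaluation skill; if every card were balanced in power, the game would be less strategic.

3. They give new players the pleasure of discovering that bad cards are bad, which is a stepping stone toward studying and learning the game.

4. They are unavoidable: even when they appear in the same sets as known good cards from older sets, a tournament deck has only 8 available slots, so they would most likely go unused anyway.

Reasons 2 and 3 make no sense at all. Claiming that removing bad cards would lower the game's strategy is an insult to the game itself. Claiming that new players need deliberately planted bad cards to discover is even more laughable, because it makes card sets more complicated for new players and more bloated for experts. We all know the real reason for this design: to make players buy more random booster packs to get the handful of good cards inside.

Finally, reason 4 is an open admission that the game should have fewer cards. Ironically, I am not even sure reason 4 is true. Printing a large number of good cards might well increase the number of tournament slots. If it would not, they could simply stop printing cards that vary so widely in quality.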

你可能会说,MTG证明了这样的设计也能够获得成功。对他们销售卡包来说,或许将少数可行选择放置在大量较差选择中是个良好的业务手段。但是我们在其他题材的游戏中并未看到这种情况。然而,我们确实也还未看到有人勇敢地站出来面对MTG的这个问题,向玩家提供设计类似但消除了所有无用卡片的竞争卡片游戏。

双盲猜测

我在自己的《Yomi》卡片游戏和《Kongai》虚拟卡片游戏(游戏邦注:看似卡片游戏的回合制战略游戏)中都使用了双盲猜测技术。这个想法是,让所有玩家在知晓其他玩家的选 择之前做出自己的选择。

我从战斗游戏中学到这个概念。虽然它们看上去似乎是完全信息游戏,因为你可以看到对手看到的所有内容,但是战斗游戏事实上是双盲游戏。玩家在游戏中需要精确地把握时机 ,在你跳跃的时候往往不知道对手是否会施放火球。你知道的只是0.3或0.5秒之前他没有这么做。你意识到对手的操作只需要很短的时间,尽管这段时间看似毫无价值,但事实上它对战斗游戏来说至关重要,正是它使得战斗游戏像是战略游戏。

《星际争霸》之类的即时战略游戏有着同样的资产,但是时间相对而言较长。在你决定建造何种建筑物之时,往往并不能精确地了解对手在他的基地中建造何种建筑物。即便你可 以侦查他的基地,你收集到的也是数秒之前的信息,所以对于他此刻的行动你只能猜测。

如果从我的两款卡片游戏中移除双盲性,或者从战斗和即时战略游戏中移除双盲性,我想这些游戏都将崩溃。这些游戏需要双盲决策才显得有趣。这种设计样式是增加游戏中可行 操作数量的方法,因为从本质上来说,它强迫玩家进入我上文中提到的Yomi Layer 3概念。在双盲游戏中,较弱的操作反而变得较好,因为做出这些操作后可以更容易地避免被对手施以对抗招数。我甚至曾调侃称,世界顶级《Virtua Fighter》玩家间的比赛是“第三最佳操作的战斗”。有时,玩家担心他们做出的“最佳”选择会被对手反攻,所以他们更 寄希望与对手无法反攻的第三最佳选择。如果游戏中完全不含有猜测元素,那么玩家也就不会使用最佳第三操作。

游戏测试

最后,游戏测试是你找到问题所在的方法,尤其是在能够找到行家进行测试的情况下。游戏行家是否忽略了很大部分的游戏操作?他们是否发现了某些你不知晓的将军情境?你是 否发现他们在游戏中使用了各种不同的战略?

如何进行游戏测试是个内容覆盖极广的话题,但是我在这里可以提供某些值得考虑的做法。第一,质疑测试者的看法。玩家对会游戏的改变有过火的反应,声称某些战略根本没有 对抗方法,但事实上这种对抗方法是存在的。解析出游戏中真正有效的战略往往需要数年时间,而游戏测试只是这个漫长旅程的开始。如果他们发现某些看似游戏最佳战略的东西 ,这些东西或许只是局部最佳战略,或许是因为他们还未发现某些更为强大的不同玩法。这种情况在战斗游戏中同样存在。

游戏测试是校验游戏平衡的唯一工具,理论根本无法完全替代它。我想所有人都知道他们需要游戏测试,但是最困难的问题是,当测试者的意见存在分歧时,你要听从何人的意见 ?你要如何得知测试者误解了游戏中的设计?这个问题很困难,我将随后的文章中进行阐述。

总结

如若需要确保我们有众多可行选择,使用Yomi Layer 3系统构建对抗是个不错的开始。但是,并非所有的情境都需要上述方法,将军情境也是可以接受的,但是你应当尽力避免出 现持续时间较长的无用情境。通过提供各种不同的操作来探索游戏的设计空间,因为这项技术很有可能让所有的操作在某些游戏时刻均可用,从而让最佳操作的决定变得困难。这 可以考验玩家的技能。删除所有没有价值的选择,因为它们只会让玩家感到困惑,但是某些题材游戏中的无用内容可以让你赚到大笔金钱。双盲猜测机制能够保持更多的操作可行 性。

最后,世界上所有的游戏理论都不能够完全替代游戏测试。

自动平衡

为了更轻松地实现这半不可能的任务,我们应该使用自动平衡力量。如此我们将在面对多种选择的同时待在自动防故障装置中,从而避免一些玩家可能在未来所创造出的未知战术 。我将列举两款游戏进行说明:《万智牌:旅法师对决》和《罪恶装备XX》。

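In Magic, the game's mechanics, such as counterspells, direct damage, healing, and so on, are divided among 5 colors. Players may build decks from whatever colors they like, but the more colors they include, the harder it is to draw the right mana to cast spells of each color.

As a result, decks tend to specialize, which gives them built-in weaknesses. Red, for example, cannot destroy enchantments, so even if a red deck is the strongest, it has an inherent weakness. Likewise, each color has 2 enemy colors, and those colors usually contain cards that are especially strong against it. So if red decks became too powerful, blue and white cards could help keep them in check.

Finally, when Wizards of the Coast releases a new set with new mechanics, they also include 1 or 2 cards that are weak in general but strong against the new mechanic. I think they hope these specific counters will prove unnecessary, but if the metagame is overrun by the new mechanic, players at least have some tools to fight it.

For example, MTG's Odyssey block focused on new mechanics involving the discard pile (called the "graveyard" in the game), and the card Morningtide removes all cards from all graveyards. If graveyard strategies had gotten out of hand, Morningtide was the counter, although in the end it was not really needed. Later, the Mirrodin block focused on artifact cards. The card Annul counters an artifact (or enchantment) for just one mana, and the card Damping Matrix stops artifact abilities from working. In Mirrodin's case, the artifact mechanics did get out of hand. Annul and Damping Matrix were the right idea, but the Mirrodin mechanics needed stronger safeguards.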

这与我在第二部分中提到的《Yomi Layer 3》的理念相同。该理念是为了对游戏做出反击,即当某些内容变得过于强大时,游戏有足够的弹性能够推动玩家去面对它们。

《罪恶装备》便是故障自动防护系统的重要例子。

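Guard Meter

Every time you hit the opponent, their "guard meter" goes down. The lower it gets, the shorter their hitstun becomes. This means that even if a sequence of moves would be an "infinite combo", where landing the first hit lets you keep hitting forever, the opponent's hitstun keeps shrinking until they are able to escape the combo.

Progressive Gravity

While you are being juggled in a combo, gravity makes your character heavier and heavier over time. So even if a combo could otherwise keep you in the air indefinitely, the victim's body falls faster and faster as time goes on, which eventually breaks any infinite.

Green Blocking

Imagine a sequence of attacks against a blocking player in which each blocked hit pushes the attacker back until he is too far away to continue. Suppose the attacker can cancel the last hit into a special move that carries him forward again, then repeat the sequence, keeping the defender blocking forever. That would be a lockdown trap, and in Guilty Gear characters can break it with what I call "green blocking". While blocking, you can spend some super meter to create a green force field that pushes the attacker far away from you, which ends his trap.

These features exist to solve problems the designers did not even know about. All they knew was that if the game ended up with an infinite combo or a lockdown situation, some failsafe would rescue it. That in turn freed them to design very different characters. However crazy the character and however frightening the tactic, the designers knew the failsafes shared by every character would at least keep things under control.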

游戏测试和过程修正

不管你的游戏是否具有自动故障装置系统,在某些情况下你都需要设计一个多样化的角色/种族等设置,确保它们彼此连贯有趣,然后通过游戏测试自信地捡出平衡问题。这个世界 上的所有理论都不会让你脱离游戏测试。

你需要开始调整游戏,做出反应并不断学习。别让制作人将调整放到你所负责的一个固定项目列表中。这是一个持续的过程,持续到你最终发行游戏。游戏测试能够让你发现你之 前未曾预测到的情况,你也应该坦诚地面对这些发现。其目标并不是创造出你最初设想的游戏,因为你最初的理念并未考虑到自己从开发和测试中所学到的内容。当你或测试者发 现细微差别或不可预见的属性时,你便能够围绕着这些属性进行创造,并将其整合到游戏的平衡中。

层级列表

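When balancing Street Fighter, Kongai, and the Yomi card game, I used a similar approach with my playtesters. I do not think the approach really depends on genre; the key is managing tier lists.

The term "tier list" comes from the fighting game genre. It ranks the power level of each character from highest to lowest, while accepting that such a ranking is not exact. Rather than ranking 20 characters from 1 to 20, the idea is to sort them into "tiers" of power. Remember that even in a game that is 100% balanced, players will still make tier lists. You should accept that player tier lists will exist, and your job is to manage them.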

在《Kongai》和《Yomi》中,我甚至给予了玩家有关这种层级列表的模版(这对于作为设计师的我来说非常有用)。首先我让他们去思考3个层级:最高,中等和最低。然后我告诉 他们我希望空着的两个“秘密层级”。

0)上帝层(没有一个角色应该出现在这一层,如果他们真的做到了,你就需要赋予其挑战性)

1)最高层(不要害怕将自己最喜欢的角色带到这里)

2)中层(很棒,但是不像最高层那么厉害)

3)最底层(我仍然能够战胜它们,但有点困难)

4)垃圾层(没人应该出现在这一层。操控这样的角色就没意义了)

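My first balance goal is to make sure nobody is in the God tier. Of course some characters will end up strongest, or tied for strongest, and that is fine. But a "God tier" character is so powerful that it makes the rest of the game irrelevant. We have to fix that, because it ruins the gameplay as a whole (and the game with it). Also, anything in the God tier is at such a high power level that we could not hope to balance the rest of the game around it.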

我的下一个目标是摆脱垃圾层角色。他们太糟糕了以至于没人愿意变成这样,但是他们也可以通过提高能量而攀升到最高,中间和最底层某一阶段。如果他们到了这三个阶层中, 他们便可以继续游戏了。

公共层列表

如果测试者可以看到彼此的层列表就再好不过了。我有必要去阅读有关这些内容的争论,而测试者也该以此去划分他们的理念。有时候当某些人将一个角色一反常规地放置在列表 中较高或较低的位置上时,我便会进一步挖掘玩家是否真的知道一些我们并不知道的内容。其它时候,玩家只是感到抓狂,而其他测试者则会很高兴地指出这些内容。我们同样也 能看到测试者得出了怎样的一致性,就像他们是否会将特定角色归在最糟糕的位置上。

我认为在每一款游戏中最重要的一点便是测试社区能够始终提供上帝层或垃圾层不包含任何角色的层列表。当你做到这点时,你的下一个目标便是压缩层。这便意味着你需要确保 最佳和最糟糕角色间的区别保持最小。需要注意的是这便意味着即使底层的角色仍与一个月前一样,你也有可能很大程度地完善了游戏。
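The two checks described above (nobody in the God or garbage tier, then compressing the tiers) are easy to track across test builds. The following is a minimal sketch under assumed conventions: tiers are numbered 0 (God) to 4 (garbage) as in the template, and the tester data and the `tier_report` helper are hypothetical.

```python
def tier_report(tier_lists):
    """Summarize tester tier lists, where 0 = God tier and 4 = garbage tier."""
    placements = {}
    for tier_list in tier_lists:
        for character, tier in tier_list.items():
            placements.setdefault(character, []).append(tier)
    # Average placement per character across all testers who ranked it.
    average = {c: sum(t) / len(t) for c, t in placements.items()}
    return {
        "god_tier": sorted(c for c, t in placements.items() if 0 in t),
        "garbage_tier": sorted(c for c, t in placements.items() if 4 in t),
        # Gap between the best and worst average placement; smaller is tighter.
        "spread": max(average.values()) - min(average.values()),
    }


# Hypothetical tier lists from two testers, before and after a balance pass.
before = [{"A": 1, "B": 2, "C": 4}, {"A": 1, "B": 3, "C": 3}]
after = [{"A": 1, "B": 2, "C": 3}, {"A": 1, "B": 2, "C": 2}]
print(tier_report(before))
print(tier_report(after))
```

A shrinking `spread` with empty `god_tier` and `garbage_tier` lists is one rough signal that the tiers are being compressed, even if the same character is still in last place.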

调整层

在我平衡过的所有游戏中,我使用了相同的方法,即让高层去设定基础能量层。在《街头霸王》中,我便拥有一个已建立的高层作为最初游戏的起点,但是在《Kongai》和《Yomi 》中,角色在高层结束游戏则参杂着意外元素。但是在早期,当忽略上帝层时,我们很清楚哪些角色/桥牌在上层,我将其设为目标能量级别。换句话说,那一层的角色是关于“人 们对游戏的想法”。再一次,我也未计划谁应该出现在那里,但是我接受最终结果。所以如果高层是目标,你就应该尽力去调整底层。如果高层是预期能量级别,你肯定不希望陷 入一些好的内容中。相反地,推动底层角色往上走并尽可能压缩层与层间的差距,最终结果便是最糟糕的角色与最厉害的角色间不会有太大的差别。

在做出这些调整时我不断注意到一些心理元素。第一个便是每当我做出糟糕的移动或让角色掉落到较底层时,玩家便会反应过度。有时候高层会掌握较高的能量,或者一般玩家最 终面对一些意想不到的好结果,又或者某一角色的移动减少了游戏的策略性,并需要通过失败去获取其它内容。关于消弱能量也存在许多原因。

我将使用一些假设数字去传达总体思路。想象移动是在10个能量级别中的第9级,这对于角色来说太高了。我不只一次发现当我将能量级别设置在10级中第8级时,测试者便会抱怨 该移动没有价值,并将角色下放(至少)1层。这种情况经常发生。出于某种原因,每一款游戏中的玩家似乎都不能掌握高层角色即使做得不好也仍然能够待在高层的这一理念。

这是其中一个你不会听从游戏测试者的理念。忽视他们对于消弱的第一反应,让他们慢慢去适应它,让他们看看基于新移动版本是否还能获取成功,然后重视他们关于移动或角色 的反馈。

我们需要了解的其它心理效应是,当你提高移动的能量时会怎样。我在游戏开发者大会中听取了Rob Pardo有关平衡多人游戏的演讲,并尝试着将其用于自己平衡的所有游戏中,我 想Rob的观点是正确的。他说如果你采取某一行动并且是自己还不确定如何做到平衡的,你就需要确保它足够强大。如果它过于虚弱,你便会冒着没人去使用它的危险。在此之后, 当你提高它的能量时,也就没有测试者会注意或关注它了。因为他们的心里已经认定该移动是虚弱的。甚至当你提升它的能量而到达某一合理的能量级别时,你也很难吸引到人们 的注意。

相反地,Pardo认为应该一开始就赋予该移动足够的能量。如此所有人便会在乎它。我在《街头霸王HD Remix》中的T.Hawk,Fei Long和Akuma上便都这么做,因为我不知道如何设 定他们的能量级别。这些角色在游戏开发的某个点上都是最棒的角色,这便意味着我会获得来自测试者的大量反馈。有时候“太过强大”的角色版本的结果也是好的,但是有时候 也没那么好。如果能够明确上限,我便能够快速选取最适当的能量级别。

错觉

Rob Pardo在有关多人游戏的演讲中提到的另一点并不是将乐趣置身于外。我也认可这点。不要只是将游戏当成一些需要排列的抽象数集。你也需要思考人们是如何理解它,以及这 是否真的有趣。Pardo表示他希望玩家认为自己拥有的工具非常强大,尽管事实上不一定如此。Tafari便是来自我的游戏中的一个例子(游戏邦注:他是《Kongai》中的捕兽者)。Tafari的主要能力便是,敌人在与他打斗时不能转换角色。转换角色是这款游戏的主要机制, 所以与他打斗就像不能出石头而玩石头剪刀布。但是在一开始我便为Tafari设置了一些弱势,如果他在行进过程中遭遇战斗时便会不断遭遇失败。而当你带着他去抗击一个虚弱的角色时,他就会瞬间强大起来。

我知道Tafari并不是很强大。我让一些专家对其进行测试,他们更倾向于将其放置在中层。但是当我再添加新的测试者时,几乎所有人都认为Tafari过于强大。我拒绝改变他,在 一年的测试后,最佳玩家仍然将其定位在中层,而菜鸟级玩家也仍将其归为高层。Tafari是错觉般的存在。我之所以这么说是希望当你想要看到某些内容比实际上看来更强大时最好能够谨慎地审视相关反馈。如果你能做到这点的话便有可取得成功,因为Tafari不仅让游戏变得更加有趣,也创造了许多争议,最后它也获得了平衡。

比赛

除了层列表,你还应该思考所有特殊的比赛。例如《街头霸王HD Remix》拥有17个角色和153种可能的比赛。对于HD Remix之前版本的《街头霸王》,专家更倾向于将角色分为4层 (游戏邦注:没有角色是在上帝层或垃圾层),他们将Guile放置在第2层。尽管这意味着Guile的能量级别是可接受的,但是在2个特殊比赛:Vega和Dhalsim中却是处于不利地位。
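The figure of 153 matchups for 17 characters counts mirror matches (a character against itself); without mirrors the count is 136. The short sketch below shows both counts; `matchup_counts` is a hypothetical helper name.

```python
from itertools import combinations, combinations_with_replacement


def matchup_counts(n_characters):
    """Return (matchups including mirrors, matchups excluding mirrors)."""
    roster = range(n_characters)
    with_mirrors = sum(1 for _ in combinations_with_replacement(roster, 2))
    without_mirrors = sum(1 for _ in combinations(roster, 2))
    return with_mirrors, without_mirrors


print(matchup_counts(17))  # (153, 136)
```

The count grows roughly with the square of the roster size, which is one reason large rosters are so much harder to balance matchup by matchup.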

一个整体还不错的角色在遭遇两个特殊角色时是否还能占据优势?这并不见得。

如果这是FPS中的武器,RTS中的单位或基于团队的打斗游戏中的角色,这便是可行的。你可以在游戏开始时在FPS中选取武器,所以它们的平衡就无需满足一款不对称游戏的严格要 求。RTS中的单位和基于团队的打斗游戏中的角色则是局部失衡的典例。但是在Guile的例子中,你在游戏一开始便锁定了自己有关Guile的选择,所以你在整款游戏中都无法摆脱他,如果他遭遇了一些糟糕的比赛,那么即使玩家将其设定在更高层也没有用了。

我们总是很难在不对称游戏中做出任何调整。我们该如何在不影响其它比赛的前提下帮助Guile战胜Dhalsim。这里并不存在简单的答案,但是我建议你能够真正去解决该问题,而 不是选择逃避。

关于该问题我的解决方法是双重的。首先,因为与这一特殊匹配无关,所以我改变了Guile闪踢的轨迹。这能帮助他避开Dhalsim的火球。其次,Guile所面对的一个问题便是 Dhalsim的低拳将摧毁Guile的音爆弹并穿过屏幕撞击Guile。我改变了Dhalsim的有效射击区,从而让Dhalsim在这种情况下发动攻击。这一改变不会对其它比赛产生任何影响,所以 是这一问题的真正解决方法。

欺骗式解决方法是这种比赛的特例,将给予Guile更多生命值。虽然这听起来很诱人,因为你不需要担心会搞乱其它比赛,但是这种非解决方法却很假。它会混淆玩家的期望与对于 Guile拥有多少生命值的直觉看法。

还有一种类似的方法,即在一款每个单位间相互对抗的RTS中创造一个巨大的表格,并创造一个特殊情况,即关于它们会给彼此造成多大伤害。这也混淆了玩家对于每个单位所造成 的破坏的直觉看法,并创造了无形且不可靠的系统。我知道在平衡不对称游戏时你总是会受到引诱去使用这种特殊情况式解决方法,但我也想提醒你们一定要努力去抵制诱惑。

结论

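If you can, start your design with some self-balancing and failsafe systems. Then work on giving the game as much diversity as you can, and begin a long period of playtesting. As you learn more from playtesting, change course accordingly. Start tracking tiers: fix the God tier first, then the garbage tier. Then compress the tiers so that the worst characters are not much worse than the best. Finally, polish every matchup, solve the real problems, and avoid cop-out solutions.

When I say a game cannot be "solved", I mean that you cannot determine what the best way to play is. If you could, your game would contain no real strategy, and there would be nothing to discuss in the first place. If you can solve it, your players certainly can too. If you cannot solve it, your players might still solve it. Either way, we know you should not be able to solve it, because that would mean you did not design the game well to begin with.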

当不可能知道最佳方式时,到底如何实现平衡?如果你不担心这个,那就说明你并不理解这个问题有多恶劣。我在前面三个部分所探讨的技术是有帮助的,但它们让我想到美术总 监Larry Ahern在准备画《猴岛的诅咒》的背景时说的话。他表示通过遵守经典美术作品的原则,他认为他可以获得“不太糟”的效果。但是,他说,从不太糟进阶到非常好才是他 的期望,而那是不可能通过遵循某些人的原则得到的。

挑选尖顶玩家

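Let us go back to an earlier question. Imagine a room in front of you full of players of some game, and I ask you to determine who is the best player and who is the second best. How would you do it? Answer: you would have them play each other.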

但如果我不允许任何人玩游戏,那怎么办?我允许你采访玩家或让他们提交关于他们自己如何玩游戏和对游戏有什么了解的问卷。你可以通过这种方法判断谁是最优秀的玩家吗?

我打赌你得到的答案不会比猴子掷飞镖好多少。根据我组织和参与比赛的经验看,我可以带着一定权威的口气说,获胜的能力和解释自己的能力几乎没有关系。

为什么最优秀的玩家不一定能够在采访或谈话中以最好的方式表达自己的看法?我认为主要有两个原因:

1、说出来的和写出来的答案是很狭隘的。

2、有许多能力是不可能通过意识思维体现出来的。

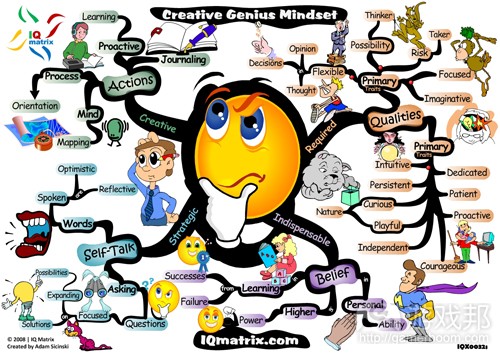

以上两个原因都与“精神冰山”的概念有关。

精神冰山

想像一下,冰山代表你的关于某事物的全部知识、技能和能力,例如玩某款竞技游戏。冰山露出水面的一小部分是你可以直接意识到的,也就是你能解释的东西。而水面之下的部分是你不能用语言解释的,但其份量却远远超过你能表达的那一小部分。当我们采访玩家或让他们写下关于自己如何玩游戏的答案时,我们只能认识到冰山的一小部分。如果一名玩家的露出水面的部分更大(他能更好地解释自己如何获胜),那么,他隐藏在水下的冰山部分其实比其他玩家的更小,这是完全有可能的。而这才是问题的关键。

(露出水面的部分代表你可以解释或意识上理解的;而庞大的水下部分则表示你没有意识到的。)

狭隘性

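Compared with all the knowledge and decision rules stored in your head, the answers you write or speak contain very little information. Written and spoken language also favors linear thinking, while your actual decisions may be far more complex, weighing many interrelated factors. In a written answer, a player might say "move A beats move B, so I focus on move A in this matchup." But the real decision depends on many factors: the timing of move A, the distance, the characters' relative hit points, the opponent's mental state, and so on. The player cannot put all the subtleties he perceives during real play into precise words.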

无法直接访问的部分

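This idea may be hard to grasp at first, but after thinking about it for a while it becomes obvious. You are not aware of how your digestive system works. You cannot directly ask your cells how they cycle between ATP and ADP to supply your body with energy. When you watch a frisbee fly across the sky, you are not aware that your eyes move in a particular way common to all humans (you believe your eyes simply follow the moving object).

One study estimates that the human brain receives about 11 million pieces of information per second through the five senses, yet we can be conscious of at most about 40 of them. A huge amount of information is taken in silently, outside our awareness, even though we are still able to make decisions that use all of it.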

盲视

盲视就是一个特别有说服力的例子。盲视是因为部分视皮质受到伤害而产生的眼盲。人的视觉事实上有两条不同的神经通路,而得了盲视的人的其中一条神经通路被阻挡了。结果 是,他们眼瞎了,也就是不能有意识地体验到看这一活动。即使他们说看到一片黑暗,他们仍然可以根据视力作决定。在一个对盲视患者DB做的实验中,研究人员让实验对象看一 个具有垂直或水平黑白条的环形然后回答条是竖直的还是水平的。即使他看不到,有时候被问得焦虑了,他“猜”的正确率仍然介于90%-95%。换句话说,有盲视的人对世界的感知 比他们的意识能解释的来得更准确。

迅速决定

这个概念的另一条线索就在迅速决定中。意识不是立即联合的;需要0.3-0.5秒。我知道这个说法在脑研究专家中是具有相当的争议的,但我认为通常情况下可以说,在比那更短的 时间内,我们还没有反应过来发生什么事然后形成事件的意识。然而,实验表明,我们根据外部刺激做决定比那个时间更快。例如在一个实验中,实验员要求测试者看到杆亮起某种颜色时就抓住它。实验时,实验员故意点亮一根杆,在测试者正要抓住它时立即暗掉它转而点亮另一根杆,然后估计测验者转向新点亮的杆的过程。这个修正过程几乎是立即发 生的,比0.3秒还快,然而测验者认为他们只在最后一刻,也就是0.5秒后才调整行为。事实上,他们在做出调整以前甚至没有意识到杆的颜色改变了!他们在意识到发生什么事情 以前就做出决定了。

网球是一个更加贴近现实生活的例子。网球职业选手可以把球以130英里每小时的速度打出,而对手双方之间距离是78英尺,这意味着球从一边抵达另一边只需要0.41秒。《纽约时 报》的写手David Foster Wallace提到:

这段时间太短了,来不及深思熟虑。当时,我们更多地是依靠条件反射,也就是绕过意识思维的纯身体反应。然而,有效的把球回传取决于大量决定和身体调整,其涉及的行为比 受到惊吓时的眨眼和惊跳更有意识和计划。
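The 0.41-second figure for the tennis example follows directly from the stated speed and court length. The check below is a small sketch; it assumes the ball keeps its initial 130 mph over the whole 78 feet, which ignores air drag and the bounce.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def travel_time_seconds(speed_mph, distance_feet):
    """Time to cover `distance_feet` at a constant `speed_mph`."""
    feet_per_second = speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR
    return distance_feet / feet_per_second


print(round(travel_time_seconds(130, 78), 2))  # 0.41
```

That is inside the 0.3 to 0.5 second window the article gives for conscious awareness to form, which is the point of the example.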

网球职业选手Roger Federer在采访时解释道,他不喜欢被叫作天才,因为在接球的紧张时刻他并没有想什么,他只是在意识到情况前就发动潜意识的技能。

冰山一角

还有另一个重要的例子是棒球。外野手如何接住飞来的球?这似乎是一个涉及速度、轨迹、重力、空气摩擦阻力、风力等因素的复杂数学题。外野手能尽快地跑到球一般会着陆的 位置,然后根据上述计算做出调整?

不可能。抓住飞来的球的最好办法就是《Gut Instincts》一书所说的凝视捷思;也就是,看着球,开始跑,调整奔跑速度使凝视角度保持不变。这样,当球着陆时,你就能在刚好 的位置抓住它了。实验人员发现,优秀的专业棒球选手正是使用这种方法(狗也是),但大多数选手并没有意识到自己使用了这种方法,也不能解释他们自己接球的方法。

这个例子表明正确的答案可能隐藏在你的精神冰山的水下部分,但这不一定是典型案例。我选择它作为例子是因为我可以通过简单地描述这个潜层决策过程,使读者明白。但潜层 的决策过程要依靠不易描述的复杂的变量权衡,怎么办呢?解释如何解决复杂问题的另一条线索只是冰山一角,不一定是准确的。

在我们回到解决平衡游戏这个不可能的任务以前,我想让大家看看两个游戏之外的案例。在这两个案例中,专家解决难题的方法非常适合用于我们的问题,也适合解决其他所有高 复杂度的问题。

希腊雕塑的案例

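The book Blink describes a case: the Getty Museum in California was considering spending nearly 10 million dollars on a 2,600-year-old Greek statue. To authenticate it, the museum had lawyers study the owner's paper records and the statue's whereabouts over the past several decades, and had a geologist named Margolis analyze the material it was made of. He extracted a 2-square-centimeter sample from the statue and analyzed it with an electron microscope, electron microprobe, X-ray diffraction, and X-ray fluorescence. He found that the statue was made of dolomite marble from the Greek island of Thasos, and that its surface was covered with a thin layer of calcite. This was significant, Margolis told the museum, because dolomite takes at least hundreds of years to turn into calcite.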

经过律师和科学家的14个月的分析,Getty博物馆决定购买这座雕塑。这时问题出现了。当博物馆方面揭开雕塑的遮盖时,几名艺术行家看过后觉得不对劲。他们觉得这座雕塑是伪造的。

其中一名专家是纽约的大都会艺术博物馆的前馆长Thomas Hoving。当看到雕塑时,他头脑中冒出的第一个词是“新的”。Hoving认为用这样一个词来形容经历上千年历史的雕塑太奇怪了。

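The Getty was worried, so they shipped the statue to Athens and invited art experts to a symposium. Most of the experts also thought the statue was a fake. "The fingernails are wrong," or "the hands are wrong," or "a statue that spent that many years in the ground would not look like that," people said. They could not say why; they just felt it. Then the museum's lawyers discovered that some of the owner's documents were forged, and the geologist mentioned above found that potato mold could turn dolomite into calcite in just a few months.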

Thomas Hoving怎么能立即发现律师和科学家经过14个月的研究调查都没有发现的问题呢?他知道因为他在这个领域已经积累了如同冰山一样庞大的知识量。他在西西里岛自己挖雕 塑。他还说:

“我在大都会艺术博物馆工作的第二年,我有幸遇到这位欧洲馆长,并一同经历了那么多事。我们每天晚上都把东西摆在桌上研究。我们还进储藏室。里面的东西真是多得令人眼 花缭乱。我们每天晚上都呆到10点,我们可不是走马观花,而是非常非常认真地一遍又一遍地观察藏品。”

律师和科学家在这个案例中有很大的“冰山一角”。他们可以拿出很多证据说明雕塑是真的。但说到关于希腊雕塑的知识,律师和科学家的冰山藏在水下的部分就太小了。Hoving 的冰山一角很小(他只是有感觉),然而他的水下冰山部分是非常大的。

让Hoving给你一个假雕塑检测的理论吗?如果他的理论遗漏大量他不知不觉就用于解决问题的东西,怎么办?

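What if his theory is simply wrong and does not reflect his actual method (since he cannot fully explain it himself)? Problems are inevitable, because such a theory would require the expert to put all of his knowledge and experience into words.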

解决复杂问题的方法是开发庞大的水下冰山部分。但那是不是说你需要“经验”?对我来说,经验是个脏词。布什有当总统和处理外交事务的“经验”。你希望他治理国家(或其 他地方)吗?而林肯几乎没有任何经验。经验只对拥有它的人来说是好的,且我们通常使用完全错误的方法来衡量经验。

例如,如果你有“发布10款游戏”的经验,那很好,但与特定类型的经验——知识冰山没有任何关系;后者与平衡复杂的非对称游戏有关。在我们开发这座冰山前,我们再看一个 例子。

航天飞机的案例

想象一下,你面临的问题是判定航天飞机挑战者号是如何以及为什么坠毁的。你是调查委员会中的一员,你正在寻找答案。谁才是解决这个问题的最佳人选?顶尖的航天工程师? 一流的事故调查的专家?答案是Richard Feynman。

Feynman是一个光辉灿烂的人物,作为一名物理学家,他以非凡的能力攻克了许多自己专业之外的复杂问题。Feynman不是工程师,但航天飞机问题需要工程分析。他是调查委员会 中不按理出牌的一位成员,没有相关的经验。他只有分析一般问题的经验。收集海量信息、在头脑中组织、分析出什么是重要的什么是不重要的。

Feynman不看表面的东西,无视委员会的原则,询问所有相关人员,无视权威人物和调查政治,而是专注于收集真正的信息。他的第一波调查对象是设计坠毁的航天飞机的工程师。

他们有他需要的知识冰山,且他知道如何将至少一点点儿那种知识转化为自己的。他不管谁有高级头衔、谁应该知道真相,而是专注于谁真的有相关的知识冰山,只有Feynman—-委员会中没有其他人,最终发现挑战者号坠毁的原因是在寒冷气候条件下O形环没有恢复弹性。

如果你想解决复杂的问题,你有两种思路,Hoving式或Feynman式。当我参与开发《街头霸王》时,我采用的是Hoving的方法。我有大量关于那种游戏的知识,所以我的专家观点,即使我表达得像感觉,也是很有份量的。在我参与的其他项目如《Kongai》和《Yomi》,我表现得更像Feynman。我知道如何解决一般的平衡问题,但与我相比,有些测试员掌握了 更多关于如何玩游戏的知识冰山,所以我要向他们讨教、观察他们、依靠他们。

开发冰山

在平衡竞争游戏领域,你要如何获得知识冰山?理想地说,你需要有Feynman式的、可以运用于许多不同类型的问题的才能,而不是专注于狭隘的一种游戏。

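The problem with building this kind of iceberg is time. If we wanted to become expert players (rather than expert game developers), we could exploit a very fast feedback loop: play against people better than us, see which strategies work, adjust, and play again. A round of a game like Street Fighter lasts only a few minutes, and even an RTS match takes no more than an hour. But developing and balancing a game takes years. That is a very slow feedback loop, and few people stay with it.

Still, I think there are ways to acquire the necessary knowledge. Here are the games I have studied:

1. Street Fighter. I know more than 20 versions of this game.

2. Virtua Fighter. Supposedly Virtua Fighter 5 is the latest, but the game has had at least 15 versions.

3. Guilty Gear. I know 8 versions of this game.

4. Magic: The Gathering. In the 10-plus years since its birth, this game has changed three times a year (by adding new sets).

5. World of Warcraft. I played it for two years, and I do not even know how many patches it has had. 50? 100?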

关于如何改变游戏的平衡性,数据太多了。你可以从游戏的第一版到下一版的变化中研究游戏受到什么影响,以及玩家如何感知那些变化。我其实把这些当成两回事,在《街头霸 王》,我有两个独立的顾问:一个知道改变会如何影响游戏系统本身,另一个精通玩家会如何感知变化。

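But you have to study the games I listed above seriously. Casual study out of mild interest will not teach you much. Also, experience working at game companies is almost harmful here, because the game companies I have worked for spent no time on questions like "why did Virtua Fighter change this move's recovery to 8 frames?" Instead, they focused on developing and shipping games.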

我也认为,你不可能假装。如果我不想成为更加出色的游戏开发者,我是不可能积累下像现在这么深厚的知识。我的动机是,我确实对那种东西有兴趣。Thomas Hoving确实对艺术 史感兴趣。你必须在你选择的兴趣领域成为权威,积累深厚的知识。

分析VS.直觉

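In Street Fighter, there is no way to build a "balance algorithm" that tells you Chun Li's walk speed should be a little faster. What is a faster walk speed worth compared to her fierce punch, or compared to her kicks? A year of your calculation would likely be less accurate than two seconds of my guessing.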

当我设计《Kongai》时,我也很清楚这一点,因为各种效果,我尽可能增加它的难度,使之与动作的相对值匹配。我希望评估(玩家直觉上知道在某种情境下的某种动作的相对值 的能力)和“yomi”(玩家揣摩对手心理的能力)成为游戏的两个主要技能。然后我遇到了一位名为garcia1000的《Kongai》玩家,他是扑克牌粉丝。虽然我作为设计师的目标是 便利评估和yomi,garcia1000作为玩家的目标是以最佳方式玩游戏,不需要做任何判断。他想计算这个可能性并解决平衡性问题。你可以说,他是我的恶梦,但他的工作也是相当 有意思的。

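garcia1000 began by constructing a number of "endgame problems" in Kongai. He picked very specific situations: character A versus character B, all other characters dead, specific hit point totals, the fight range set to far, specific item cards, and so on. He would present such a situation (much simpler than the full game, since an endgame has far fewer options) and then invite other players to work out the optimal play. Each situation took up several pages on the forum. They reached an interesting conclusion: optimal play gave about a 3% edge over ordinary play. More interesting still, the number crunching needed to solve these endgames was far more complex than anything I did to set all those tuning variables (because I mostly used intuition...).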

但你要记住的是,这种终极游戏情境的解决方案有多么大的局限性。你可以知道解决许多个问题的一个方法,但你不会解决甚至0.00001%的问题。可能的游戏状态的数量是非常大 的,每一个终极游戏的问题都要花好几个页纸才能计算出唯一的一种游戏状态。如果我使用严格的数学来解决《Kongai》的平衡问题,那么我要在它身上耗费100年。

相信直觉

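If you have this kind of knowledge iceberg, or if like Feynman you rely on other people's icebergs, there are still two things you should know about getting the most value out of intuition. Intuitive experts are better than analysts at solving complex problems, but: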

1、直觉的专家不会很肯定自己的答案,而不胜任的人肯定自己(错误)的答案。

2、解释你自己会首先降低你利用直觉的能力。

对于本文,这个话题太大了,所以我只能做一个简短的总结。有一个关于不胜任的研究表明,不胜任某事(逻辑、幽默、语法,等等)的人往往会高估自己在这方面的能力,不能发觉其他真正擅长这个领域的人的专业表现。原因是,他们做某事所缺少的知识正是他们评估自己和他人必须具备的知识。

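As a result, you have to deal with complaints from many people who lack expertise but are very confident, people who may not have even a tip-of-the-iceberg line of reasoning, let alone the underwater part. I think you need enough authority for your fuzzy feelings about balance to win out over their loud complaints. You may not even be able to explain why your choice is best for the game.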

有若干研究表明,解释你自己会破坏你的直觉。如果让你看到一个人的脸,过一会儿后让你在一组人中认出那张脸,如果不要求你解释那张脸的细节特征,你会表现得更好。你的解释是不完全的,因为语言是很狭隘的,而你对脸的知识却是非常微妙的。你对脸所做解释会覆盖你对脸的真实知识,导致你在认脸测试时表现不佳。其他研究表明,要求解释思绪过程会导致测试对象更难想出创造性的解决办法。

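When balancing Street Fighter, I was lucky not to have to explain myself to anyone, which was a big advantage. Note that I am happy to explain everything afterward, just not during the most intense phase of development. If I wanted to try a balance idea and was not sure why, I just did it. I did not need to call a meeting or present a plan for a vote. I only had to do it and test it.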

有一小段时间对我来说是灾难,因为制作人想跟进我在平衡过程中所做的每一件事。我很不情愿地提交未来的改变计划,因为每天都有一些东西会修改。明天我可能想执行一些我 以前认为不可能执行的东西;后天我可能会根据测试结果,为了一些日后的变更需要而把最近做的修改撤销了。每一天,我所做的事就是做我当天认为最重要的事。

那种方法的敏捷性让我很满足,它是我能想像得到做事的唯一方法。我认为按照制作人的想法跟进我的工作是没有意义的,因为那么做并不能给项目带来任何好处,反而损害了我 的利用直觉的能力。无论是我曾经做的任何游戏,平衡都不是开发的关键途径,总是有一些其他事情要占据开发周期。对于平衡,你能做的就是不停地做,直到有人说要发布了。

顺便一提,不久后,我继续做我想做的任何事,无视任何监督。所以,基本上忽略制作人的要求算是成功的策略。

很不幸,我提出的不解释自己和无视不胜任者的抱怨的建议,听起来好像会成为摧毁项目的自我为中心的命令。然而,利用直觉的最好办法就是在项目中获得一定的权威,然后不 要经常使用权威。毕竟,你也希望你的下属好好做他们的工作,不必每一点小事都向你解释。

总结

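When we know that we cannot know the best way to play, balancing a game becomes a very troublesome task. Logical analysis is usually poorly suited to problems this complex, because it cannot take every subtlety into account the way our unconscious mind and intuition can. To solve the balance problem, or any similar problem, we should build up a knowledge iceberg and experience in the field. I do not mean pseudo-experience such as working at a game company or having your name in the credits; I mean real experience that comes only from diligent study. Use your own knowledge iceberg intuitively, and seek out others who have one and listen to them. Finally, gain some authority on the project, do not let the loud protests of the incompetent drown out your reliable feelings, and do not let people who demand an explanation for everything destroy your intuition.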

篇目2,Brice Morrison解析游戏平衡中的不均衡设定

作者:Brice Morrison

游戏中的“滑坡效应”是指当你落后时,你还会因此落到更后面;遭受损失时,这个损失还会继续对你造成伤害(有点像“雪上加霜”的意思)。

比如,篮球赛游戏中,每当一方得分时,另一方则损失一名队员。在这里,落后的损害是双倍的,一是每次投篮都是计分的,二是落后方更加不可能得分。尽管现实的篮球赛并没 有这个别扭的特征,所以真正的篮球赛中并不存在“滑坡效应”。在现实的篮球赛中,得分只是让你更接近胜利,但完全不会损害对方的得分能力。

“滑坡效应”的另一个名称是正反馈,就是一个扩大自身效应的环路。因为人们总是很容易就混淆正反馈与负反馈,所以我更倾向于称之为“滑坡效应”。

slippery_slope(from thegameprodigy)

滑坡效应在游戏中造成的结果通常是不好的(游戏邦注:当然,这是对对“滑坡方”而言)。如果游戏的滑坡效应太过强大,这意味着当一名玩家稍微领先一点,他就更可能离最 终的胜利再进一步,再再进一步。像这样的游戏,赢家其实早有定数,无论你是玩家还是看客,游戏的过程基本上是多余的。

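StarCraft and chess do have slippery slope, but despite this negative property both are good games. In chess, when a player loses a piece, his ability to attack, defend, and control space is slightly weakened. Of course, many other factors in chess, such as position, momentum, and formation, determine whether a player is actually "losing", but losing a piece certainly has an effect. Clearly, lose too many pieces, say 8, and you are at a hopeless disadvantage. Coming back from that is almost impossible. The eventual victory is really the cumulative effect of "winning" many, many small steps, capped by a final killing blow.

This is why there are so many resignations in chess. Once a good player realizes the opponent will eventually win, he does not struggle on pointlessly. Chess players consider frequent resignation perfectly fine, but compared with games that have no slippery slope, it is a disappointing feature. Even so, chess is still a good game.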

《星际争霸》中也有滑坡效应。当你损失一个单位时,你受到的是双重打击。第一,你离最终的失败更近一步了(完全没有单位事实上相当于损失了所有建筑);第二,你更难进 攻和防御,因为你不仅损失了得分,用于进攻和防御的单位也减少了。

在篮球赛中,得分与玩法完全是分离开的。你的得分能力并不取决于当前的得分。无论你是领先20分还是落后20分,再次得分的机会都是一样的。在《星际争霸》(和象棋)中, 得分与玩法密切相关。损失一个单位意味着离失败更近一步,并且更难反击。

说到游戏的经济系列方面,《星际争霸》的滑坡效应表现更为明显。假设对方提前进攻你,你hold住了。其他方面损失不相上下,但你还多损失了一个生产单位。放到其他游戏中 ,这大约就相当于落后一分。但在《星际争霸》里,后果就严重多了,因为采矿量的增长接近于指数型,你的对手在资源上只领先你一个生产单位,收益就比你翻了好几番。在你 损失那个生产单位起,你就已经在斜坡上往下滚了,此时的劣势效应正在无限放大。
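The way a one-worker deficit compounds can be seen in a toy model. The sketch below is not StarCraft's actual economy: the income rate, the worker cost, and the rule that all income is reinvested in workers are assumptions chosen only to show the near-exponential growth the paragraph describes.

```python
def total_mined(workers, steps, income_per_worker=1.0, worker_cost=10.0):
    """Toy economy: every step, mine with all workers and reinvest in more workers."""
    bank = 0.0
    total = 0.0
    for _ in range(steps):
        income = workers * income_per_worker
        total += income
        bank += income
        bought = int(bank // worker_cost)  # buy as many workers as affordable
        workers += bought
        bank -= bought * worker_cost
    return total


for steps in (10, 20, 40):
    gap = total_mined(10, steps) - total_mined(9, steps)
    print(steps, gap)
```

In this model the player who started with 10 workers never loses ground to the player who started with 9, and the gap in total resources mined keeps widening as the match goes on, which is the slippery slope in miniature.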

格斗游戏

格斗游戏中一般不存在滑坡效应。比如在《街霸》中,你的角色即使只剩一口气了,仍然行动自如。被打中只是让你的命值(得分)受损,但并不会限制你的行动选择,这点和象 棋中损失一个棋子或在《星际》中损失一个工作单位造成的恶果是不一样的。《武士之刃》中倒是意外地存在滑坡效应。在游戏中,被打中腿,玩家就会行走蹒跚;被打中手臂, 可能那手臂膊就算是残了。这在格斗游戏中是极为罕见的。

虽然,从现实一点的角度讲,快死的角色也是可能慢慢地爬着,至于能爬多远就另当别论了,但这样的游戏也太没意思了。(游戏邦注:至少在《武士之刃》中,这部分会持续数 秒钟,然后你才挂掉。)在《街霸》中,复原是很频繁的,所以所有玩家都能“笑到最后”。其实《街霸》中还是存在一点点滑坡效应的(如果你气数将近,你肯定很担忧被堵在 角落,而当你的血条全满时,你从来不担心这个),但总的来说,这是个中性的滑坡效应。

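One fighting game stands out as an exception: Marvel vs. Capcom 2. In this game, each player picks 3 characters. At any given time only one active character is on screen, while the other two stay off screen, recovering lost health. A player can call an off-screen character in to assist the active one, after which the assist leaves the screen again. The active character can attack together with the assist, which enriches offensive strategy and technique. A player may switch the active character freely, but loses once all of his characters are defeated. This is where slippery slope appears. When a player is down to his last character while the opponent still has two or even all three, the former is at a clear disadvantage. His character cannot call for assists, so his chances are slim. Comebacks are quite rare in this game, and the match is often effectively decided well before it formally ends.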

带有“出圈即败”设计的格斗游戏,如《Virtua Fighter》和《Soul Calibur》就尤其不具有滑坡特征了。在这些游戏中,如果一名玩家的角色被推出圆圈,则玩家马上失败,无 论此时角色的血条还有多长。从根本上说,无论你目前落后对手多少、无论你的血条还有多少,出了圆圈对你的造成的伤害都是100%的。很久以前,我曾认为这个概念并不高明, 除了速战速决,不见得有什么好处,但事实上,“出圈即败”的危险给游戏加分不少。因为“出圈即败”的危险度太高了,无形中给游戏增加了一个“定位”的玩法;也就是,玩 家必须在打击对手的同时稳住自己的位置,以免被推出圆圈。

有限的滑坡效应

格斗游戏的滑坡效应范围非常小、非常有限,这对格斗游戏来说是一个优良的特点。如果游戏在任何时候都不存在滑坡效应,那么各个环节之间可能会让人觉得比较脱节。虽然, 如果你做出的决定始终会引发某种结果,进而影响到后面的游戏,那样会比较有趣。但问题是,如果这种影响发展成了“滚雪球”呢?

在受限的滑坡效应中,你能“滑”多远存在上限,造成的后果也是暂时的。在《街霸》中,被击倒了确实会引发一点滑坡效应。你的命值(得分)受损,同时,角色的活动受到短 暂的限制。角色摔倒,显然是劣势情况。这里我们要注意到两点:1、击倒结束后,你恢复所有动作;2、你不能进一步被击倒。

Street Fighter(from thegameprodigy)

图中的Ken被击倒了,暂时处于下风,但这个劣势不会像滚雪球一样越滚越大。

将对手击倒的影响确实在后面体现出来了,但这个优势很快就被重新组合,不会再“滚雪球”了,因为不存在“进一步击倒”对手这种事。如果你已经摔倒了,你当然不可能“再 摔倒”。

另一个例子是把对手逼到角落(游戏界面的边缘)。如果你这么做了,你就占据了“地利”,因为对手的行动受限了。但还是存在一个限度——一旦对手被逼到角落了,他不可能 再被逼进“角落的角落”了。对手所处的劣势程度是有限度的。

再举一个更直接的例子,任何时候你采取阻挡动作,你就得到一定的复原量。此时,你的恢复速度会领先于正在进攻的对手,所以在下一次进攻时,你在时间上有可能先出手。这 是你的优势,因为如果你们双方都打算发动相同速度的进攻,你的角色会赢(因为它会先行动)。你的阻挡动作的成效就在这里表现出来了,但这个效益是转瞬即逝的,可能仅仅 过了一秒,优势就不复存在了。

所以,格斗游戏中充满了小型滑坡效应,这确实给游戏增添了乐趣。但从宏观的角度看,这些不算真正意义上的滑坡效应,因为其效果不会像滚雪球般随着游戏进程渐渐变大。与 象棋相比,相当于你是在几个回合后就把失去的棋子拿回来了。

没有滑坡效应的RTS

这里我有一个想法,就是把完全的滑坡效应(通常是恶性的)变成有限的滑坡效应(通常是良性的)。双方玩家一开始均持有相当的资本去购买单位。当你的单位被摧毁后你的资 本就会得到偿还。一方面,偿还需要一定的时间,另一方面,重新生产新单位需要一定的时间,这两方面意味着损失单位确实产生了消极影响,但这种劣势会渐渐消失,这与格斗 游戏中的被击倒是一样的。即时策略游戏《World in Conflict》正是这么做的,不过我本人没有玩过。

我说这些的目的不是评判《World in Conflict》这款游戏好不好,或者讨论上述的“恢复系统”可取不可取。我只是想表明,如果你能努力研究一下,消除RTS中的滑坡效应还是 可能的。那些非常乐衷于此的人可能会想出更高明的解决方案吧,然后一款更高深的游戏就此诞生。

不知各位是否已在自己开发的游戏中运用了滑坡效应呢?

与游戏中的“滑坡效应”这个概念相反的,我称之为“无限恢复”,其实就是负反馈的另一种叫法。(游戏邦注:作者认为负反馈听起来很不详,但事实上游戏中的负反馈通常不 坏,所以最好给它一个积极一点的名称。)负反馈在游戏中的作用,就相当于保持室内温度恒定的温度调节器。

无限恢复实际上是默默地帮助了失利的玩家。我想对两类效应加以区别。其一,当你落后时,无限恢复就是一股扭转不利形势的力量。《虚幻竞技场》中的大男孩增变基因就是一 个例子。在FPS模式下,当你杀死一个敌人后,你会变得更肥大但更易被击中。当你挂了,你会变得更瘦小但更难被击中。所以如果你一死再死,你就会越变越瘦小。注意,虽然你 挂了N次,仍然在损失(你的得分也没用),但你确实获得了优势(更难被击中)。

Mario-Kart-DS-nintendo(from fanpop.com)

相似的例子也出现在《Mario Kart》所有版本中。你越是落后,你得到的道具就越强大。最后,你可以得到强大的蓝海龟壳,这个道具能够自动对准跑在最先的赛车开火。同时, 跑在最前的赛车只能得到最差的道具。

任天堂DS的《Advance Wars: Dual Strike》也有相似的特点。双方的仪表上各系有一个强大的“标记攻击”。当你被攻击时,你的仪表填满速度将是平时的二倍,所以落后的玩家 会有更快的速度来发动进攻,这样,他自然就有机会恢复。

在以上三个例子中,游戏中存在一个“扶弱惩强”的力量。这个力量存在的积极意义在于,拉近了玩家之间的差距,削弱了前面犯下的错误造成的影响。也就是说, 在《Mario Kart》中,这股力量可能太过极端,或者说创造了一种奇怪的人为作用,如避免某位玩家长期占据比赛的第一名。而在《Advance Wars: Dual Strike》中,标记进攻的效应可能过 大,使其始终支配着游戏。撇开争议不说,这个概念仍然是合理的,运用得好的话,可以让玩家之间的差距更接近、比赛更刺激。

极端的无限恢复

另一种无限恢复走了极端,这是比较少见的。所谓过头的无限恢复就是,当你落后时,这个劣势不仅是扶了你一把,而且等于是直接把你拉到领先地位。我认为最典型的例子就是 《Puzzle Fighter》。

个人认为,在我担任《Puzzle Fighter HD Remix》首席设计师以前,我就认为《Puzzle Fighter》是迄今为止最好的益智游戏。这款游戏看起来够水准——玩家各有一个面板接着 落下的棋子。玩家要用游戏中的四个同色碎片构成更大的单色矩形(能量宝石)。你之后可以用碰撞宝石将那些矩形破坏掉。你打碎的越多,你丢到对手一边的垃圾就越多。当你 的一方“封顶”,你就输了。

促成《Puzzle Fighter》中的无限恢复(极端版)有若干因素。首先,各个“角色”(共计11人,包括神秘角色在内)都有不同的“空投特征”,即当该角色粉碎己方的矩形时投 向对手的色块特征。例如,Ken的空投特征依次是水平红排、水平绿排、水平黄排和水平蓝排。每次Ken向对手投出的色块不多于6个时,就是一个水平红排;而当Ken投出12个色块 时,就是一个红排再加一个黄排。因为敌人知道这点,所以可以早做打算,将Ken的进攻转化为自己的优势。比如,当你向对方投色块时,这些色块会变成“反击宝石”,不能马上 被破坏,也不能组合为致命的能量宝石。过了五次行动,反击宝石就会转变为常规宝石。

另一个非常重要的特点是,在屏幕上位置越高的能量宝石破碎后,造成的破坏(投出反击宝石)会比下层的宝石更大。所以想想这款游戏的实际伤害是怎么样的吧。进攻其实只是 暂时性伤害,直到反击宝石转变为常规宝石。此时,对手可能会将这些宝石组合进自己的宝石中,因为对手知道你的空投特征。即使对手不能以这种方式从你的进攻中受益,他仍 然可以通过破坏你投给他的所有东西而“逃出升天”。对手的屏幕填得越满,反过来轰炸你的炮弹就越多。而且,因为对方快撑不住了,他的攻击会因为高度带来的额外效应而呈 现最大的伤害。越是高端的宝石,破坏后释放的杀伤力更大。

《Puzzle Fighter》具有极其罕见的特点,即“几乎失败”正是“几乎胜利”。比如说你破坏了大量能量宝石,给对手以重大打击。你自己的屏幕几乎被清空了,那么你就赢了对 吗?而对方的屏幕几乎封顶了,他输了,对吗?好吧,他只是挣扎在失败的边缘,但他拥有所有的弹药和额外优势,而你这一边却无所防御,你的对手同时处于“失败”和“胜利 ”的边缘。确实非常奇怪吧!


Puzzle Fighter(from from-thegameprodigy)

如上图,Ken (左)几乎输了,但他及时得到所需的黄色碰撞宝石。最终Donovan(右)会输。

玩《Puzzle Fighter》的最好策略就是非常小心地规划自己的宝石,不要一次打到底。不然你就是反过来帮对手一把了。你得存着一些用来打击对手。你的对手总是有足够的进攻 来杀掉你,所以你得有足够的防卫。无论一开始局势如何有利于对手,运势也会一定程度上向你倾斜。只要不到最后一刻,输赢就没有定论。这款游戏被戏称为“卷土重来”,因 为刺激总是持续到最后一秒。

结语

“滑坡效应”是对落后者的惩罚,让他们落得更后面。如果不加抑制,会使游戏预先区分出真正的胜利者,结果必然是损害游戏玩法,让玩家倍感无趣。虽然格斗游戏缺少这种彻 底的滑坡效应,但确实存在若干临时性、有限的滑坡效应,从而增强了玩法。这种受限的滑坡效应可能也存在于其他类型的游戏中,但在未来的游戏设计中,设计师们可以有意识 地采用这种形式。最后,无限恢复,即滑坡效应的对立面,是一股帮助落后玩家、阻碍领先玩家的力量,从而缩小竞争的差距。如果使用不当,这个属性非常容易走极端;但如果 使用得当,游戏将会更加好玩刺激。《Puzzle Fighter》将这一概念运用得淋漓尽致,从而给玩家带来一种“若输若赢”的感觉。

篇目3,游戏设计课程之设置游戏平衡性技巧

平衡未能达标的游戏完全就是浪费时间,调整完核心机制后,你需要再次平衡游戏。现在我们手中握有的是经过多回合测试的半成品,是时候该进入下个步骤。

什么是游戏平衡性?

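In two-player games, "balance" usually means that no player has an unfair advantage. But the word also appears in single-player games, where the player faces no opponent other than the game itself, and any "unfair advantage" the game holds can be treated as part of the challenge. We might also say that individual cards in Magic: the Gathering are "balanced", even though every player has access to those cards, so no individual gains any advantage from them. What is going on here?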

Magic the Gathering from wizards.com

就我的经验来看,通常我们谈论“游戏平衡性”主要指下列4种情况。具体情境通常能够清楚呈现我们谈论的是哪种情况:

1. 在单人游戏中,我们以“平衡性”形容挑战水平是否适合用户;

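2. In asymmetric multiplayer games (Gamerboom note: where players start with different positions and resources), we use "balance" to describe whether one starting position is easier to win with than another.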

3. 若游戏存在多种获胜策略或路线,我们以“平衡性”形容某种策略是否优于其他策略。

4. 在包含若干类似物件的游戏机制中,我们以“平衡性”形容物件本身,尤其是判断不同物件是否具有相同成本/效益比例。

下面我们就来详细分析各点内容,然后我们会重温平衡游戏的若干实际技能。

单人游戏的平衡性

在单人游戏中,我们以“平衡性”形容挑战水平是否适合用户。

注意通过体验游戏,用户最终会变成熟练玩家。这就是为什么电子游戏的后期关卡要比前期困难得多。形容单人游戏难度随时间而变化的专有名词是:节奏。

游戏设计师此时面临一个明显问题:我们如何把握什么是“合适”挑战水平?当然我们知道瞄准成人的逻辑/谜题游戏要比同类儿童游戏复杂很多,但除此之外,我们如何知晓什么太难,什么太简单?最明显的答案就是:游戏测试。

但这依然存在另一问题:不是所有玩家都一样。即便就小范围的目标用户而言,他们依然呈钟型曲线分布,有些玩家非常老练,而有些则相反。在进行游戏测试时,你如何知晓玩家分布在钟型曲线的何处?若你只是涉猎测试,最理想的方式是覆盖大面积的测试者。当你获得大量用户的反馈信息时,你就能够大致知晓总体分布。随着设计经验的增多,你对用户的了解就会越多,此时你只需通过少量用户便能得到同等有效信息。

即便你清楚如何调整游戏难度,目标用户置身何处,面对用户多元化的情况,你如何选择游戏挑战水平?无论你采取什么措施,游戏总是在某些人看来太难,而在某些人看来太简单,所以你将处于必输局面。若你必须选择某种水平的关卡,根据概测法,你通常会瞄准曲线的中间部分,因为这样你能够囊括最多用户。另一方式通过提供多种难度水平、障碍或系列规则组简化或强化内容,从而起到支持两端用户的目的。

非对称游戏的平衡性

在非对称性多人游戏中(游戏邦注:玩家未基于同等位置和资源出发),我们以“平衡性”形容某起点位置是否更易于获胜。

围棋 from appjidi.com

真正对称的游戏很少。在我们看来,国际象棋、围棋之类的经典游戏具有对称性,因为每个玩家都以相同棋子数量开始游戏,遵照相同规则,但这里存在一个不对称情况:有个玩家可以先走!若你修改游戏,让两个玩家悄悄在纸上写下自己的操作,使得移动能够同时进行,游戏就具有完全对称性。

这带来一个有趣问题:若游戏具有对称性,你是否就无需担心游戏的平衡性?毕竟两位玩家都以相同资源和初始位置开始,所以从定义来看,没有玩家具有不公平优势。这完全正确,但设计师需考虑其他类型的平衡性,尤其是游戏是否存在优势策略。只是让所有玩家处在同等位置无法令你摆脱困境。

纵然游戏具有非对称性,你为何要费神进行平衡?一个简单的答案是:玩家通常希望在其他条件都相同的情况下,游戏不会给予某玩家任何天然优势或劣势。

非对称游戏通常更难于进行平衡。越是不对称,测试过程就越要谨慎进行。达到此种平衡的一个最简单方式是建立各玩家间的资源联系。若你设定在游戏玩法中,1个苹果等于2个橘子,那么以1个苹果开始游戏的玩家就与以2个橘子着手的玩家就保持平衡。
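The apples and oranges rule amounts to converting every starting resource into one common unit and comparing totals. A minimal sketch follows; the `VALUE_IN_ORANGES` table and both helper functions are hypothetical and simply encode the 1 apple = 2 oranges example.

```python
# Hypothetical exchange rates, expressed in a common unit (oranges).
VALUE_IN_ORANGES = {"apple": 2, "orange": 1}


def starting_value(resources):
    """Total value of a starting kit, measured in oranges."""
    return sum(VALUE_IN_ORANGES[name] * count for name, count in resources.items())


def is_balanced(kit_a, kit_b, tolerance=0):
    """True if two starting kits are worth the same, within `tolerance` oranges."""
    return abs(starting_value(kit_a) - starting_value(kit_b)) <= tolerance


print(is_balanced({"apple": 1}, {"orange": 2}))
print(is_balanced({"apple": 2}, {"orange": 3}))
```

The table only says the kits are equal on paper; whether an apple is really worth two oranges in play still has to be confirmed by playtesting.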

有时,玩家间的差异很大,无法进行直接比较。有些游戏不仅给予玩家不同起步资源和位置,还提供不同体验规则。有些玩家能够特别享有某些资源或技能。游戏常见的一个不对称情况就是向玩家呈现不一致的目标。直接比较玩家的难度越大,游戏测试的工作量就越艰巨。

游戏策略的平衡性

若游戏具有多种获胜策略或路线,我们以“平衡性”形容某策略是否优于其他策略。

也许有人很好奇为什么要费心于此?若游戏顾及各种策略,但某策略比其他策略强大,利用最佳策略不就等于让玩家获得胜利?只要没有任何玩家具有不公平优势,游戏转变成“找到最佳策略便胜出”模式有何不可?

这里的问题是,只要找到优势策略,聪明的玩家就会忽略所有次佳策略。所有非优势策略组成要素的内容瞬间就会变得无关紧要。嵌入单一制胜策略的游戏本身并未存在任何问题,但在这种情况下,设计师应该移除次佳策略,让游戏变得更合理。若你融入次佳选择,这些就会变成错误决定,因为游戏只有一个解决方案。

若融入多个潜在获胜策略很有必要,那么这些策略富有平衡性会让游戏更有趣。而这些归根到底都在于游戏测试。在这种情况下,当玩家体验你的作品时,查看是否存在某策略的运用频率超过其他策略的情况,哪种策略更倾向带来胜利。若游戏有若干道具供玩家购买,是否有哪些道具玩家会优先购买,而其他道具则鲜少有人问津?若玩家在每个回合都具有行动选择,每次测试的赢家是否进行某操作的次数比其他玩家多?

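Playtesting alone cannot prove that a strategy is unbalanced, but it clearly tells you which parts of the game deserve closer analysis. Sometimes players choose a strategy because it is obvious or easy, not because it is the best option. Some players avoid anything that looks too complicated or demands finesse, even when it pays off in the long run.

Balance Between Game Objects

In systems with many similar objects (Gamerboom note: such as cards in a collectible card game or weapons in a role-playing game), we use "balance" to describe the objects themselves, in particular whether different objects have the same cost/benefit ratio.

This kind of balance applies mainly to games that let players choose among a number of objects. Some examples:

* Cards in a collectible card game. Players build decks from the cards in their collections. Choosing which cards to include is central to the outcome, and designers try to keep the cards balanced against one another.

* Units in some war games and real-time strategy games. Players can buy units during play, and different units have different abilities, movement rates, and combat strengths. Designers try to keep these units balanced.

* Weapons, items, and magic spells in role-playing games (whether tabletop or computer/console). Players may buy these to use in combat, and they have different costs, stats, and abilities. Designers need to keep these objects balanced.

In all of these cases there are two goals. The first is to keep any object from being so weak that it is useless compared with the others. Such an object is again a false choice: players soon discover that what they earned or bought has no value, so it is simply a waste of their time.

The second goal is to keep any object from being too powerful. An object that becomes a dominant strategy makes every other object useless by comparison. In general, if you have to err toward making an object too weak or too strong, err on the side of too weak.

Two objects are balanced if they have the same cost/benefit ratio. In other words, what you pay to get an object should be in proportion to what you get out of it. Costs and benefits do not have to match exactly, but when you compare two different objects, their cost/benefit ratios should be roughly the same.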

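Ways to Balance Game Objects: Transitive, Intransitive, and Unique

I have come across three basic ways to balance game objects. The first is what is called a transitive relationship or, in plainer terms, a cost curve. This is the most direct way to balance objects: you find the intended relationship between cost and benefit. It may be linear or it may be curved, depending on the game, and playtesting, experimentation, and instinct help you work out what it should be.

The next step is to reduce every cost and every benefit to numbers that can be compared. Add up all of an object's costs; likewise add up all of its benefits. Compare the two and see whether the cost buys a reasonable amount of benefit.

This approach is common in collectible card games. Once a game has an established cost curve, creating a new card from a mix of existing effects is much easier. In Magic: the Gathering, if you want to create a new creature with a given color, power, toughness, and set of standard abilities whose costs are already known, a designer can tell you exactly what it should cost. Adding abilities raises the cost, and lowering the cost means removing some stats or abilities.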
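As a rough sketch of the bookkeeping described above, the code below adds up each object's benefits, converts the total to an expected cost along an assumed linear curve, and flags objects that sit far from it. The point values, the curve (one cost unit per 2 points of benefit), and the creatures are all invented for illustration and do not come from any real game.

```python
# Hypothetical point values for each kind of benefit, and a linear cost curve
# of one cost unit per 2 points of benefit.
BENEFIT_POINTS = {"power": 1.0, "toughness": 1.0, "flying": 2.0}
POINTS_PER_COST = 2.0

def total_benefit(obj):
    """Sum of all benefits of an object, in benefit points."""
    return sum(BENEFIT_POINTS[stat] * amount for stat, amount in obj["stats"].items())

def expected_cost(obj):
    """Cost the object should have if it sat exactly on the curve."""
    return total_benefit(obj) / POINTS_PER_COST

def off_curve(objects, tolerance=0.5):
    """Names of objects whose actual cost is far from the expected cost."""
    return [o["name"] for o in objects if abs(o["cost"] - expected_cost(o)) > tolerance]

creatures = [
    {"name": "Bear", "cost": 2, "stats": {"power": 2, "toughness": 2}},
    {"name": "Hawk", "cost": 2, "stats": {"power": 1, "toughness": 1, "flying": 1}},
    {"name": "Giant", "cost": 3, "stats": {"power": 5, "toughness": 5}},
]
print(off_curve(creatures))
```

A real curve is rarely this tidy. It may be nonlinear, and some abilities resist a single point value, which is where playtesting and instinct take over.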

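The second approach is an intransitive relationship between game objects or, in plainer terms, a rock-paper-scissors relationship. Here cost and benefit may not be directly related, but the objects are related to one another: each object beats some objects and loses to others. Rock-Paper-Scissors itself is the classic example. No throw is dominant, because each throw beats one of the others and loses to the third.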


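This shows up in some strategy games as well. In many real-time strategy games the units have some kind of intransitive relationship. A common one: infantry beat archers, archers beat fliers, and fliers beat infantry. Much of the game is about positioning your units against the opponent's.

Note that transitive and intransitive relationships can be combined, as in the previous example. In a typical real-time strategy game, units have different costs, so a weak archer can still be beaten by a strong flier. Units within a class are related transitively, while the classes are related intransitively.

Intransitive relationships can be solved with matrices and basic linear algebra. For example, the solution to Rock-Paper-Scissors is that each throw should be played as often as the others, a ratio of 1:1:1. Now suppose you change the game slightly so that winning with rock is worth 3 points, winning with paper 2 points, and winning with scissors 1 point. What is the expected ratio now? If you want players to use units in a particular proportion, a well-balanced intransitive relationship is a good way to get it.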
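The weighted Rock-Paper-Scissors question can be worked through numerically. The sketch below assumes a two-player, zero-sum reading of the scoring (only the point difference between the players matters) and uses the closed-form null vector of a 3x3 antisymmetric payoff matrix rather than a general solver; `equilibrium_mix` and `payoff_vs_pure` are illustrative names.

```python
from fractions import Fraction

# Payoff to the row player; order is rock, paper, scissors.
# Scoring from the text: a win with rock scores 3, paper 2, scissors 1.
# Assumption: the game is treated as zero-sum, so a loss costs the same amount.
PAYOFF = [
    [0, -2, 3],   # rock:     loses to paper (-2), beats scissors (+3)
    [2, 0, -1],   # paper:    beats rock (+2), loses to scissors (-1)
    [-3, 1, 0],   # scissors: loses to rock (-3), beats paper (+1)
]

def equilibrium_mix(m):
    """Mix that makes every pure reply score 0 against it (3x3 antisymmetric case)."""
    a, b, c = m[0][1], m[0][2], m[1][2]
    weights = [Fraction(c), Fraction(-b), Fraction(a)]
    total = sum(weights)
    return [w / total for w in weights]

def payoff_vs_pure(mix, m, column):
    """Expected payoff of playing `mix` against an opponent who always plays `column`."""
    return sum(p * m[row][column] for row, p in enumerate(mix))

mix = equilibrium_mix(PAYOFF)
print("rock : paper : scissors =", " : ".join(str(p) for p in mix))
```

Under these assumptions the mix comes out to 1/6 rock, 1/2 paper, and 1/3 scissors, a ratio of 1:3:2. Making rock wins more valuable raises the share of paper, the throw that beats rock, rather than the share of rock itself.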

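The third approach is to make every object so unique that it cannot be compared directly with the others. Since no formal numeric comparison is possible, the only way to balance such objects is with extra playtesting.

Each of the three approaches has its own difficulties. Transitive relationships depend on the designer finding the right cost curve. If your math is wrong, every object in the game will be wrong; if you find one thing out of balance, you may have to adjust everything. Building a transitive relationship after playtesting is much easier than designing one up front. Because so much depends on getting the math right, it usually takes a lot of trial and error, and therefore a lot of time.

Intransitive relationships involve some tricky math. They also need careful handling, or the game will feel like a Rock-Paper-Scissors clone (Gamerboom note: which turns players off). Many people see intransitive relationships as mere guessing games in which decisions rest on luck and randomness rather than strategy.

The "unique" relationship is hard to balance because the tool that would otherwise do the job, math, is not available.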

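Three Common Game Balance Techniques

In general, there are three ways to balance a game:

* Use math. Create transitive or intransitive relationships in the game and make sure everything is in line with its cost.

* Use designer instinct. Adjust the balance until it "feels right" to you.

* Use playtesting. Adjust the game based on the results of playtests in which experienced players are told to exploit the game and play to win.

Each of these has its own challenges:

* Math is hard, and it can be wrong. If a formula is wrong, everything in the game will be off, which does not help rapid prototyping. Some unusual abilities or objects may be too unique to reduce to numbers, and need another balancing method.

* Instinct is prone to human error. It is also not absolute; different designers will disagree about what is best for a game. This is especially dangerous on large team projects, where one designer may leave partway through and another may not know how to pick up where they left off.

* Playtesting is only as good as the testers. Testers will not find every balance problem; some problems go undiscovered for months or years. Worse, some testers will deliberately hide an exploit from you because they plan to use it once the game ships.

What is a designer to do? Do the best you can, and understand the strengths and weaknesses of every balancing technique. And as a player, the next time you run into a badly balanced game, appreciate how hard it is for a designer to get this right.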

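More Game Balance Techniques

Here are a few more suggestions.

Understand the different objects and mechanics in your game and how they relate to one another. You should of course have done this early in the design, but once you start nailing down details it is easy to lose sight of the big picture. When you are about to change something, keep two things in mind:

1. What is the core aesthetic of the game? Does this change support that core?

2. Look at how the mechanics are connected. If you change one thing, other things will be affected too. Individual game elements rarely exist in a vacuum, and changing one usually has ripple effects. If you understand the relationships between mechanics and objects, it is much easier to predict the second-order effects of a change.

Make one change at a time. We have said this before, but it bears repeating. If something breaks after a batch of changes, you cannot tell which change caused it.

Learn to use Excel. Any spreadsheet program will do, but Microsoft Excel is certainly popular among game designers. Many people are surprised when I say that spreadsheets are essential to game design. Here are some of the ways they are used:

* Excel makes it easy to record and organize things. List all of your game objects and their stats. For a role-playing game, list every weapon, item, and monster; for a tabletop war game, list every unit and its stats. Anything you would find in a reference chart in a strategy guide probably started life in a designer's spreadsheet.

* Excel is good for tracking tasks and status, which helps a great deal in complex games with many systems and parts. A sheet that lists a large number of monsters and their stats can also record whether each monster's art is finished and whether its stats have been balanced or tested.

* Spreadsheets help you collect and aggregate game statistics. In a sports game where each player has many stats, are all the teams balanced? Sum or average the stats for each team and you can see each team's strengths and weaknesses. In a game with transitive relationships, are all the objects balanced? Total up the costs and benefits in a spreadsheet.

* You can use a spreadsheet to run statistical simulations. By generating random numbers you can find out, for example, how often a given range of objects deals damage, and so get a sense of the overall range and distribution of outcomes.

* Spreadsheets let you see the cause and effect of a change. By building formulas around the values you want to tune, you can change one value and watch what happens to the values that depend on it. For example, if you are making a massively multiplayer online RPG, you can use Excel to compute a weapon's damage per second and immediately see what happens when you change its base damage, accuracy, or attack speed.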
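The damage-per-second example translates directly from a spreadsheet formula into a few lines of code. The sketch below assumes the simplest possible model (a hit deals base damage, a miss deals nothing), and the baseline weapon values are hypothetical.

```python
def damage_per_second(base_damage, accuracy, attacks_per_second):
    """Expected DPS under an assumed model: hits deal base_damage, misses deal 0."""
    return base_damage * accuracy * attacks_per_second

# Hypothetical baseline weapon.
baseline = {"base_damage": 40, "accuracy": 0.75, "attacks_per_second": 2.0}

def what_if(**changes):
    """DPS after overriding one or more baseline values."""
    return damage_per_second(**{**baseline, **changes})

print("baseline:      ", damage_per_second(**baseline))
print("base damage 50:", what_if(base_damage=50))
print("accuracy 0.9:  ", what_if(accuracy=0.9))
```

Changing one input at a time, as `what_if` does, mirrors the advice to make one change at a time: each call isolates the effect of a single value on the result.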

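Use the "doubling rule." Suppose you know some value in the game is too high, but you do not know by how much. It may be a little too high or it may be way off. Either way, cut it in half. Likewise, if you know a value is too low, however low it is, double it. If you are not 100% sure what the right value is, double it or halve it.

On the face of it this sounds ridiculous. If the cost of a gem is only 10-20% too low, what good could something as drastic as doubling it do? In practice it works for a couple of reasons. First, you may believe a value is only slightly off and be completely wrong; if you make small adjustments when the value really needed to double, you will go through many iterations before you get where you needed to be.

But the doubling rule has a bigger advantage. Game design is a process of discovery. The truth is that you do not know the right numbers to balance your game; if you did, it would already be balanced. If a value is off, you need to find the right value, and the way to do that is to change it and see what happens. A drastic change shows you clearly what effect that value has on the game (Gamerboom note: the value may have needed only a small tweak, but by doubling or halving it you learn much more about your game).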
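The iteration-count argument can be made concrete with a small sketch. It assumes a hypothetical value currently set to 10 that really needs to be around 80, and counts how many adjustments each approach takes to get there.

```python
def steps_to_reach(start, target, factor):
    """Number of multiplicative adjustments needed before `start` reaches `target`."""
    value, steps = start, 0
    while value < target:
        value *= factor
        steps += 1
    return steps

# Hypothetical case: a cost is set to 10 but would need to be about 80.
print("10% nudges:", steps_to_reach(10, 80, 1.10))
print("doubling:  ", steps_to_reach(10, 80, 2))
```

With these numbers, doubling gets there in 3 adjustments while 10% nudges take 22, and every adjustment costs a round of playtesting. Once doubling overshoots, the right value is bracketed between two tested points and can be narrowed down from both sides.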

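Sometimes you will find that a larger change alters the game's dynamics in ways you did not expect, and that the change makes the design better.

Balance the first-turn advantage. Especially in turn-based games, it is very common for the player who goes first to have a slight advantage (or disadvantage). This is not always the case, but when it is, a few common techniques can compensate:

* Rotate the starting player. In a 4-player game, for example, after each full round the starting player for the next round becomes the player to the left. That way, whoever went first this round goes last in the next.

* Give the disadvantaged players extra resources. For example, if the goal is to finish with the highest score, have players start with different scores, so that the player who goes last gets extra points as compensation.

* Reduce the effectiveness of the early turns for the first players. Say that in a card game, players normally play 4 cards on their turn. You could change this so that the first player plays one card, the next player plays two, and so on, until everyone is playing 4 cards.

* For very short games, play a series of games so that every player gets a chance to go first. This is common in card games, where a complete game is usually played over several hands.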
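Two of the techniques above, rotating the starting player and ramping up the number of cards allowed on early turns, are easy to sketch. The player names are hypothetical, and the list is assumed to be in the order that play passes to the left.

```python
def turn_order(players, round_index):
    """Seating order for a round, with the start passing to the left each round."""
    shift = round_index % len(players)
    return players[shift:] + players[:shift]

def cards_allowed(turn_number, normal_limit=4):
    """Cards playable on the nth turn of the game (1-based), ramping up to the limit."""
    return min(turn_number, normal_limit)

players = ["Ann", "Ben", "Cat", "Dev"]  # hypothetical seating, in passing order
for r in range(3):
    print(f"round {r + 1}:", turn_order(players, r))
print([cards_allowed(n) for n in range(1, 7)])
```

In the rotation, whoever starts one round ends up last in the next, matching the description above; in the ramp, the first four turns allow 1, 2, 3, and 4 cards, after which every turn uses the normal limit.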

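Write down the rules as you learn them. Designing games always involves successes and failures, and you will learn from both. When you discover a "law" of game design or a new balancing technique, write it down and review your notes regularly. Sadly, few designers actually do this. As a result they cannot pass their experience on to other designers, and they sometimes repeat the same mistake across several games because they have forgotten the earlier lesson.

The Role of Balance

Early in my career as a designer I was obsessed with game balance. "A fun game is a balanced game, and a balanced game is a fun game" was my mantra. I know I annoyed plenty of my seniors by complaining that our games were unbalanced and insisting they be fixed immediately.

I still believe balance is important and an essential skill for any game designer, but my position is much more moderate now. I have seen games that are fun despite being unbalanced. I have seen games that are fun precisely because they are deliberately unbalanced. And I have seen games that sold well despite being badly unbalanced. These are rare cases. Still, keep in mind that everything in this article is subordinate to your ultimate design goals for the game. These techniques are your tools, not your masters.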

Article 1, Article 2, Article 3 (Compiled by Gamerboom; please credit the source when reposting, or contact WeChat zhengjintiao)

Article 1: Balancing Multiplayer Games, Part 1: Definitions

By Sirlin

Balancing a competitive multiplayer game is not for the faint of heart. In this article I’ll define the terms that will let us know what we’re talking about in the first place, then in the second and third articles, I’ll pretend that we have some hope of solving the wicked problem of game balance and I’ll explain techniques to do it. Then in the fourth article, I’ll try to impress upon you what deep trouble we’re really in.

First, the terms. Let’s start with balance and depth as defined by the Philosopher King of game balance:

A multiplayer game is balanced if a reasonably large number of options available to the player are viable–especially, but not limited to, during high-level play by expert players.

–Sirlin, December 2001

A multiplayer game is deep if it is still strategically interesting to play after expert players have studied and practiced it for years, decades, or centuries.

–Sirlin, January 2002

This definition of balance is pretty good, but there are two concepts hiding inside that term viable options. On one hand, I meant that the game doesn’t degenerate down to just one tactic, and on the other hand, I meant that if there are lots of characters to choose from in a fighting game or races to choose from in a real-time strategy game, many of those characters/races are reasonable to pick. Let’s call the first idea viable options and second idea fairness in starting options, or just fairness for short.

Viable Options: Lots of meaningful choices presented to the player. For depth’s sake, they are presented within a context that allows the player to use strategy to make those choices.

Fairness: Players of equal skill have an equal chance at winning even though they might start the game with different sets of options / moves / characters / resources / etc.

Viable Options

The requirement that we present many viable options to the player during gameplay is what Sid Meier meant when he said that a game is a series of interesting decisions (a multiplayer competitive game, at least).

If an expert player can consistently beat other experts by just doing one move or one tactic, we have to call that game imbalanced because there aren’t enough viable options. Such a game might have thousands of options, but we only care about the meaningful ones. If those thousands of options all accomplish the same thing, or nothing, or all lose to the dominant move mentioned above, then they are not meaningful options. They just get in the way and add the worst kind of complexity to the game: complexity that makes the game harder to learn yet no more interesting to play.

For the sake of depth, we also hope that the player has some basis to choose amongst these meaningful options. If the game at hand is a single round of rock, paper, scissors against a single opponent, there is nearly no basis to choose one option over the other so it’s hard to apply any kind of strategy. And yet a game of Street Fighter might be decided by a single moment when you choose to either block, throw, or Dragon Punch, or a game of Magic: the Gathering might be decided by a single decision to play a Counterspell or not. These examples at first glance look like the rock, paper, scissors example, but the decisions take place inside the context of a match that has many nuances where each player is dripping with cues about his future behavior. In Street Fighter and Magic, the player does have basis to choose one move over the other, and more than one choice is viable, we hope.

Also for depth, we prefer if the meaningful choices depend on the opponent’s actions. Imagine a modified game of StarCraft where no players are allowed to attack each other. All they can do is build their base for 5 minutes, then we calculate a score based on what they built. There are many decisions to make in this game, and it might have several paths to victory, but because these decisions are purely about optimization–more like solving a puzzle than playing a game–they make for a shallow competitive game. Fortunately, in the actual game of StarCraft, you do need to consider what your opponent is building when you decide what to build.

While we require many viable options to call a game balanced, the requirement about giving the player a context to make those decisions strategically and the requirement that the decisions have something to do with the opponent’s actions are really about depth. They’re worth pointing out though because we should attempt to increase the depth of the game as we balance it, not decrease it.

Fairness

Fairness, in the context I’m using it here, refers to each player having an equal chance of winning even though they might start the game with different options. In Street Fighter, each character has different moves, in StarCraft each race has different units, and in World of Warcraft, each arena team has different classes, talent builds, and gear. Somehow, all of these very different sets of options must be fair against each other.

I want to stress that I am only talking about options that you’re locked into as the game starts. That’s a very important distinction. Options that open up after a game starts do not necessarily have to be fair against each other at all. Imagine a first-person shooter with 8 weapons that spawn in various locations around the map. Two of these weapons are the best overall, 3 are ok but not as good as the best weapons, and the remaining 3 are generally terrible but happen to be extremely powerful against one or the other of the 2 best weapons.

Is this theoretical game balanced? It certainly might be, meaning that nothing said so far would disqualify it. A designer could decide that he wants all weapons to be of equal power, but he need not decide that as long as each weapon is still a viable choice in the right situation. It might be fine to have two powerful weapons that players compete over, a few medium power weapons that are still ok, and some weak weapons that allow players to specifically counter the strong weapons. There could be a lot of strategy in deciding which parts of the map to try to control (in order to access specific weapons) and when to switch weapons depending on what your opponents are doing.

By contrast, a fighting game with 8 characters designed by that scheme is not balanced because it fails the fairness test. Players choose fighting game characters before the game starts, but they pick up weapons in the first-person shooter example during gameplay. Being locked into a character that has a huge disadvantage against the opponent’s character is unfair.

Games that let players start with different sets of options are inherently harder to balance because they must make those sets of options fair against each other in addition to offering the players many viable options during gameplay.

Symmetric vs. Asymmetric Games

Let us call symmetric games the types of games where all players start with the same sets of options. We’ll call asymmetric games the types of games where players start the game with different sets of options. Think of these terms as a spectrum, rather than merely two buckets.

Symmetric (same starting options) <-------------------------------------> Asymmetric (diverse starting options)

On the left side of the spectrum, we have games like Chess. In Chess, each side starts with exactly the same 16 pieces. The only difference between the two sides is that white moves first. Because of this different starting condition, we shouldn’t say that Chess is 100% symmetric, but it’s damn close. If Chess were the only game you had ever seen, you might think that the black and white sides are played radically differently; white sets the tempo while black reacts. There are entire books written about how to play just the black side. And yet if we zoom out to look at the many games in the world, we see that the two sides of Chess are so similar as to be virtually indistinguishable when compared to two races in Starcraft, two characters in Street Fighter, or two decks in Magic: The Gathering.

The more diversity in starting conditions the game allows, the farther to the right of our spectrum it belongs. So asymmetry, as we mean it here, is a measure of a game’s diversity in starting conditions. This is not meant to be an exact science, so there is no specific formula to determine where a game belongs on this spectrum, but it’s a handy concept anyway.

Let’s look at a few examples. StarCraft has three very diverse races so it belongs toward the right side of our spectrum. That said, even if the three races were as different as imaginable from each other, the number three is small enough that we shouldn’t put it at the far right (admittedly, this is a judgment call). Fighting games can have dozens of characters that play completely differently and they tend to have more asymmetry than most other types of competitive multiplayer games.

That said, individual fighting games can vary quite a bit in just how asymmetric they are. Virtua Fighter, for example, is an excellent and deep fighting game, but the diversity of characters is relatively low compared to other fighting games. All characters have a similar template compared to Street Fighter where some characters have projectiles, or arms that reach across the entire screen, or the ability to fly around the playfield. Meanwhile, Guilty Gear, a fighting game you’ve probably never heard of, has more diversity than any other game in the genre that I know of. One character can create complex formations of pool balls that he bounces against each other, another controls two characters at once, another has a limited number of coins (projectiles) that power up one of his other moves and a strange floating mist that can make that powered up move unblockable. It’s almost as if each character came from a different game entirely, yet somehow they can compete fairly against each other. Guilty Gear is possibly all the way to the right of our chart because it has both wildly different starting options (characters) and many of them (over 20!).

Magic: The Gathering is also extremely asymmetric in the format called constructed where players bring pre-made decks to a tournament. The variety of possible decks is staggering and tournaments usually have several different decks of roughly equal power level, even though they play radically differently.

First-person shooters tend to be very far toward the symmetric side of the spectrum, usually offering the same options to everyone at the start, except for spawning location. Remember that picking up different weapons during gameplay, or even changing classes during gameplay in Team Fortress 2, does not count as asymmetric for our purposes. (Again, because those different options don’t need to be exactly fair against each other.) Also, first-person shooters that do have asymmetric goals for each side often make the sides switch and play another round with roles reversed so that the overall match is symmetric.

Now that we’ve mapped out where some games fit on our spectrum, remember that this is not a measure of game quality. If your favorite games appear on the left (symmetric) side, that does not mean they are bad. If you like StarCraft more than Guilty Gear, you do not need to be upset that Guilty Gear is “more asymmetric.” The spectrum is simply meant to give us an idea about how different the starting options of a game are, not about the depth or fun of the game.

No matter where a game appears on this spectrum, it still needs to offer many viable options during gameplay to be balanced. In addition to this, the farther a game is to the right of the spectrum, the more it needs to care about balancing the fairness of the different starting options. In the next part of this series, I’ll talk about how we can design games that make sure to offer enough viable options and in the article after that, I’ll explain how we can attempt to create fairness in those pesky asymmetric games.

Article 2: Balancing Multiplayer Games, Part 2: Viable Options

Sirlin

In the previous article I divided the idea of balance into the two sub-concepts of viable options and fairness. I also defined the concepts of symmetric and asymmetric games, where the more varied the different starting options are that must be fair against each other, the more asymmetric the game is.

How do we make sure we have enough viable options during gameplay?

Yomi Layer 3

The worst thing you can have in a competitive multiplayer game is a dominant move (or weapon, character, unit, whatever). I don’t mean a move that is merely good, I mean a move that is strictly better than any other you could do, so its very existence reduces the strategy of the game. A dominant move also probably has no real counter, so even if the opponent knows you will do it, there’s not a lot he can do.

To protect against dominant moves, we should be aware of the concept of Yomi Layer 3. I wrote a whole article on just that, but I’ll quickly summarize it here. “Yomi” is the Japanese word for “reading,” as in reading the mind of the opponent (and it’s also the name of my strategy card game). If you have a powerful move and use it against an unskilled opponent, I call that Yomi Layer 0, meaning neither player is even bothering with trying to know what the opponent will do. At Layer 1, your opponent does the counter to your move because he expects it. At Layer 2, you do the counter to his counter. At Layer 3, he does the counter to that.

That might sound confusing, but it’s very straight-forward in actual gameplay of real games. All it means is you and your opponent each have two options:

You: A good move and a 2nd level counter

Opponent: A counter to your good move and a counter to your counter

The designer generally does NOT need to design Yomi Layer 4 because at that point, you can go back to doing your original good move. Here’s an example Yomi Layer 3 situation that I created in Street Fighter HD Remix.

Honda wants to do his torpedo move to get close to Ken, but Ken throws fireballs to prevent this. I gave Honda the ability to destroy these fireballs with his torpedo, but only with the jab version of the move that doesn’t travel very far. If Honda can destroy a fireball with it and end up closer, that’s good for him. Ken can counter this by not throwing the fireball in the first place and letting Honda do the jab torpedo. As Honda is flying forward, Ken can walk forward and sweep, hitting the recovery of the jab torpedo.

Honda: torpedo that goes far or jab torpedo that destroys fireballs

Ken: fireball or walk up and sweep

I did not need to add anything to allow for Yomi Layer 4 though because Honda can counter Ken’s walk-up-and-sweep option by simply doing the original, full-screen torpedo. Yomi Layer 4 tends to wrap around like this in competitive games.

This concept is a reminder that moves need to have counters. If you know what the opponent will do, you should generally have some way of dealing with that. As you go through development of a game, always be asking yourself if various gameplay situations you find yourself in support Yomi Layer 3 thinking. If they don’t there might be a dominant move in there somewhere, which is bad.
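One rough way to check a situation for Yomi Layer 3 support is to write down which move counters which and look for moves that nothing answers. The sketch below encodes the Honda vs. Ken example; the move labels are shorthand invented here, and treating each move as having exactly one counter is a simplification.

```python
# Counter relationships taken from the Honda vs. Ken example; the move labels
# are shorthand invented for this sketch.
COUNTERED_BY = {
    "honda_full_torpedo": "ken_fireball",
    "ken_fireball": "honda_jab_torpedo",
    "honda_jab_torpedo": "ken_walk_up_sweep",
    "ken_walk_up_sweep": "honda_full_torpedo",
}

def moves_without_counter(countered_by, all_moves):
    """Moves that nothing answers: candidates for a dominant move."""
    return sorted(m for m in all_moves if m not in countered_by)

def counter_chain(countered_by, start, layers=4):
    """Follow counters from `start`; returns the moves visited, one per Yomi layer."""
    chain = [start]
    for _ in range(layers):
        chain.append(countered_by[chain[-1]])
    return chain

moves = set(COUNTERED_BY) | set(COUNTERED_BY.values())
print(moves_without_counter(COUNTERED_BY, moves))
print(counter_chain(COUNTERED_BY, "honda_full_torpedo"))
```

Here every move has an answer, and following the chain for four layers lands back on the original full-screen torpedo, which is the wrap-around at Layer 4 described above. A move that showed up in the first list would be worth a closer look during playtesting.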

Local vs. Global Balance

Does every possible situation in a game need to support Yomi Layer 3?

Answer: no.

Does every possible situation in a game even need to be fair to both players?

Answer: definitely not.

Remember that I defined fairness by the overall chance of winning, given different starting options. Think of that as a global term, in that it applies to the game as a whole from the start of gameplay until someone wins. But the local level, meaning a particular situation in the middle of gameplay, does NOT need to be fair. Even symmetric games like Chess are supposed to have unfair situations. When you have 3 pieces left and the other guy has 9 pieces left, it’s supposed to be unfair to you. Or in StarCraft, if we find that two Zealots beat (or lose to) 8 Zerglings–even though they cost the same resources to make–that is perfectly fine. We don’t care if local situations like that are unfair or not, we only care if Protoss is fair against Zerg.

Checkmate Situations

I call a situation a checkmate situation if it means that one player has almost certainly won, even though the game isn’t actually over. For example in Super Street Fighter 2 Turbo, if Honda lands his deadly Ochio Throw against Guile in the corner, he can then follow up with a series of moves (involving more Ochio Throws) that virtually guarantee victory. Human error could change the outcome, but as soon as you see that first move, you know it should be a checkmate.

Are checkmate situations ok? They clearly violate our requirement that there be many viable moves (Honda really only has one option here and Guile has no good options). They clearly violate the concept of Yomi Layer 3. And yet, the answer is that checkmate situations can be ok. It’s sooooo hard for Honda to get close to Guile in this match, that if he does, he basically deserves to do 100% damage. All the gameplay that takes place before the checkmate is pretty good, and even though Honda can do this abusive thing up close, the match is still heavily in Guile’s favor overall.

I’d like to point out the other side of this argument though. Some players think that even though Guile has the advantage in this match, Honda’s ability to repeat that Ochio Throw is too degenerate. They say yes he needs it to win, but the game would be better overall if things weren’t so extreme. If only Honda could get close to Guile a little more easily, then he would not need a checkmate situation.

I think Rob Pardo, VP of Game Design at Blizzard, echoed this sentiment in a lecture he gave at the Game Developers Conference on multiplayer balance. He said that “super weapons” in real-time strategy games are generally a bad idea. They leave the victim feeling that there is nothing they could have done (checkmate!). He explained that even though the Terran nuclear missile in StarCraft looks like a super weapon, it has many built-in weaknesses: a ghost unit must be near the victim’s base, there is a red targeting dot on the victim’s base, and a 10 second countdown is announced to the victim, giving him time to destroy the ghost to prevent the nuclear missile.

Pardo has a good point and so did the players who complained about Honda. Even though I think checkmate situations can be ok, it’s telling that when it was my turn to make the decisions, I removed Honda’s checkmate situation in Street Fighter HD Remix. In that game, I gave him an easier time getting close to Guile, but replaced his checkmate situation with a Yomi Layer 3 situation so there’d be more viable decisions throughout the match.

Lame-duck Situations

Lame-duck situations are just like checkmate situations, but with one difference: time. Honda’s checkmate situation takes something like three seconds to get through. But consider a similar situation in the fighting game Marvel vs. Capcom 2. In that game, each player has a team of three characters: one on the playfield and two on the bench. Players can call in one of their benched characters for an assist move at any moment, letting them attack in parallel with their main character and assist character at the same time. Or better yet, they can stagger the attacks so that each attack covers the recovery period of the other.

When one player is down to his last character, he can no longer call assists. Fighting with just one character against an opponent with two or three characters might as well be checkmate, almost all the time. The problem is that it takes excruciatingly long for the match to actually end. It takes so long that I call that last portion of the game the lame-duck portion. Other fighting games are exciting right up to the last moment, but a lame-duck portion of gameplay means the real climax is somewhere in the middle, and then players are forced to act out a mostly pointless endgame while spectators lose interest. Yes, on rare occasions someone pulls off an amazing comeback, but comebacks also happen in games without lame-duck endings, so that’s not a good argument.

While a checkmate situation is maybe ok, you should try to avoid game designs that allow for long lame-duck endings. Both Chess and StarCraft have this undesirable property, and it just means that players often concede the game before the actual end. Those games also show that it’s not the worst thing in the world to have lame-duck endings (because Chess and StarCraft are good games), but you should still avoid them as a designer if at all possible.

Explore the Design Space

Design space is the set of all possible design decisions you could possibly make in your game. Whether your game is symmetric or asymmetric, it’s usually a good idea for your game to touch as many corners of the design space as possible. This helps give a game depth and nuance, but also tends to protect you from dominant moves.

For example, in the virtual card game I designed called Kongai, each character has four moves. When a move hits, it has a percentage chance to trigger an effect. For a given character, we could vary the damage, speed, and energy cost to come up with four different moves. If that’s all we did, though, we’d be missing out on a chance for more diversity in the game, and we’d get dangerously close to making some of those moves strictly better than others which would reduce the number of viable options. Instead, I tried to explore the design space as much as possible with different effects. One move can change the range of the fight from close to far, which is usually only possible before the attack phase. Another move deals enough damage to kill every character in the game, but only four turns after you hit with it. Another move can hit characters who switch out of combat, even though switching out usually beats all attacks.

The point is that by exploring the design space as much as possible, it’s a lot harder for players to judge the relative value of moves. How good is a 90% chance to change ranges during combat as opposed to a 95% chance to hit a switching opponent with a weak move? It’s hard to say and depends on a lot of factors, and that’s good because it means each move is likely to be useful in some situation and knowing when is an interesting skill to test. Incidentally, I call that skill valuation.

Players want you to explore the design space, too. When everything is too similar in a game, it feels like one-note design rather than a symphony. The more nuances and different choices you present, the more each player can express his own playstyle.

Wheat from the Chaff

Here’s my favorite quote from Strunk & White’s The Elements of Style:

Omit Needless Words

Vigorous writing is concise. A sentence should contain no unnecessary words, a paragraph no unnecessary sentences, for the same reason that a drawing should have no unnecessary lines and a machine no unnecessary parts. This requires not that the writer make all his sentences short, or that he avoid all detail and treat his subjects only in outline, but that every word tell.

Treat your game design the same way. Yes you should explore the design space, but omit needless words, mechanics, characters, and choices. Although your primary goal regarding viable options is to make sure you’re giving the player enough options, your secondary goal should be to eliminate all the useless ones.

Marvel vs. Capcom 2 has 54 characters, which is ridiculously many. How many are viable in a tournament? I’ll say 10, and I’m being generous. I actually call that a success because coming up with 10 characters in a fighting game that are fair against each other is really hard. That said, it does look pretty bad to have more than FOUR TIMES that many characters sitting around in the garbage pile of non-viable choices. Compare this to Super Street Fighter 2 Turbo’s 16 characters, almost all of which are tournament viable, or Guilty Gear’s 23 characters, almost all of which are viable, and you see what a compact design looks like.

One genre of game is notable for intentionally creating an enormous number of useless options: collectable card games. Even though I claim Magic: The Gathering is one of the best designed games in the world, I’m judging the balance on an absolute scale of how many good cards/decks the tournament environment supports, not the ratio of viable to worthless. On that scale, we’d have to rate the game as a complete failure.

MTG’s Mark Rosewater defends the intentional inclusion of bad cards for design reasons, but this is only because the marketing department has brainwashed him into going along with their admittedly very successful rip-off scheme. Rosewater claims that bad cards are ok because they:

a) allow for interesting experimental mechanics that might end up being bad

b) test valuation skills because if all cards were equally good, there’d be less strategy

c) give new players the joy of discovering that certain cards are bad, as a stepping stone to learning the game

d) are necessary because even if they came out with a set that consisted entirely of known good cards from old sets, there’d still be only 8 tournament viable decks and the rest of the cards would not be used.

The solution to this problem is clear if we only cared about design and not rip-off marketing: print fewer cards. Reason a) is a great one, experimental cards that end up accidentally bad are fine. Reasons b) and c) are just silly. Saying the game would not have enough strategy if bad cards were removed is an insult to Mark’s own (terrific) game. Saying that new players need to discover the intentionally bad cards is even more silly because this comes at the cost of making sets overwhelming to new players and needlessly unwieldy for expert players. We all know the real reasoning here is to make players buy more random packs of cards to get at the few good ones.

Finally, reason d) is a blatant admission that the game should have fewer cards. Ironically, I’m not even sure d) is true. Maybe printing a large set of all good cards really would lead to more viable tournament decks than the game currently supports. If not though, they should stop printing all that chaff.

You could say that MTG proves that it’s really all about chaff, though. Maybe giving a few viable options amidst a sea of bad ones is good business when you sell by the pack. But we don’t see this in other genres and really we just haven’t seen anyone crazy enough to stand up to MTG on this issue and offer a competing card game that’s just as well designed but that eliminates all chaff. (A future Sirlin project?)

Double-blind Guessing

I used the technique of double-blind guessing in both my Yomi card game and my Kongai virtual card game (that one’s actually a turn-based strategy game dressed up like a card game). Anyway, the idea is to make all players commit to a choice before they know what the others have committed to. This is the same setup as the prisoner’s dilemma.

I learned this concept from fighting games. Though they appear to be games of complete information because you can see everything the opponent can see, fighting games are actually double-blind games. They come down to very precise timing and the moment you jump, you often don’t know that the other guy threw a fireball. You only know that 0.3 or 0.5 seconds ago he didn’t. It takes a small amount of time for the opponent’s move to register in your brain, and though it might seem insignificant, it’s actually critical to fighting games even working as strategy games at all.

Real-time strategy games like StarCraft have the same property, but on a much slower time-scale. You often do not know exactly what the opponent is building in his base at the moment you must decide what you should build. Even if you were able to scout his base, you might be working on information that’s several seconds old, so you have to guess what he did during that time.

If we were to remove the double-blind nature from my two card games Yomi and Kongai, and from fighting games and real-time strategy games, I think all of them would be broken. All those games need double-blind decision-making to be interesting. This design pattern is a way to increase the chances that you have many viable moves in your game because it naturally forces players into the Yomi Layer 3 concept I talked about earlier. Weaker moves become inherently better in a double-blind game because it’s easier to get away with doing them without being countered. I’ve even joked that some matches between the world’s best Virtua Fighter players are “a battle of the third-best moves.” Sometimes the players are so paranoid about doing their “best” option for fear of being countered, they fall back on a third best option that no one would ever counter (though it’s quite a sight when the opponent counters even that!). If no guessing was involved at all, players would not use third-best moves.
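The commit-then-reveal structure can be sketched in a few lines. This is only an illustration: the three move names, the `BEATS` counter table, and the `DoubleBlindRound` class are hypothetical, and it is not the actual rules engine of Yomi or Kongai. The point is that neither choice is visible until both players have committed.

```python
# Hypothetical counter table: each move beats exactly one other move.
BEATS = {"attack": "throw", "throw": "block", "block": "attack"}


class DoubleBlindRound:
    """Collects one hidden move per player, then resolves both at once."""

    def __init__(self, players):
        self._players = tuple(players)  # exactly two player names
        self._choices = {}

    def commit(self, player, move):
        """Lock in a move. Nothing about it is exposed to the other player."""
        if player not in self._players:
            raise ValueError(f"unknown player: {player}")
        if player in self._choices:
            raise ValueError(f"{player} has already committed")
        if move not in BEATS:
            raise ValueError(f"unknown move: {move}")
        self._choices[player] = move

    def reveal(self):
        """Return the winner's name, or None on a tie."""
        if len(self._choices) != len(self._players):
            raise RuntimeError("not all players have committed")
        a, b = self._players
        move_a, move_b = self._choices[a], self._choices[b]
        if BEATS[move_a] == move_b:
            return a
        if BEATS[move_b] == move_a:
            return b
        return None


game_round = DoubleBlindRound(["p1", "p2"])
game_round.commit("p1", "attack")
game_round.commit("p2", "throw")
print(game_round.reveal())
```

Because `reveal` refuses to run until both moves are locked in, no player can pick a counter after seeing the opponent's move, which is what keeps the nominally weaker moves playable.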

Playtesting

Finally, playtesting, especially with experts, is how you figure out where your problems really are. Do the experts ignore some vast portion of your game’s moves? Have they discovered a bunch of checkmate situations that you didn’t know about? Do you see them using a variety of strategies?

How to use playtests is really a whole topic of its own, but here are a few points to keep in mind. First, be skeptical of them. Gamers tend to overreact to changes and claim that no counters exist to some strategies when counters do, in fact, exist. It can take years to sort out what is really effective in a game, and playtesters during your beta are only on the first few steps of that long journey. If they find what looks like the best strategy in the game, it might just be that they have found a local maximum. Maybe some radically different way of playing that they have not yet discovered ends up being more powerful. This is actually par for the course in fighting games.

That said, playtests are really all you have. Theory is not a substitute for experts playing against each other and trying their hardest to win. I think everyone knows they need playtests, but the hardest question is who do you listen to when all your playtesters disagree, and how do you know when playtesters are wrong about how powerful something is? That question is so hard that I’ll save it for part 4 of this series when I tell you how much trouble we’re really in trying to balance a game at all.

Conclusion

To ensure we have many viable options, building in counters with the Yomi Layer 3 system is a good start. Not all situations need this though, and checkmate situations might be acceptable, but you should avoid their longer cousins, lame-duck situations, if possible. Explore your game’s design space by offering moves as different as possible because this technique has a good chance of making all moves useful somewhere and it makes it very difficult to determine what the best moves really are. That becomes an interesting skill test for players. Eliminate all the worthless options because they confuse the player and add nothing, even though they make you a lot of money in a certain genre. The double-blind guessing mechanic helps keep more moves viable than otherwise would be.

And finally, all the theory in the world does not substitute for playtesting.

Article 3: Balancing Multiplayer Games, Part 3: Fairness

By Sirlin

In asymmetric games, we have to care about making all our different starting options fair against each other in addition to making sure the game in general has enough viable options during gameplay. That means each character in a fighting game and each race in a real-time strategy game should have a reasonable chance of winning a tournament in the hands of the right player. For collectable card games and team games like Guild Wars and World of Warcraft’s arenas, we should instead say that at least “several” possible decks and class combinations should be able to win tournaments.

Self-Balancing Forces

To make this semi-impossible task easier, we should use self-balancing forces if possible. This will let us go nuts with diverse options while building in some fail-safes to protect us from unknown tactics that players might develop in the future. I’ll give examples of this from two games: Magic: The Gathering and Guilty Gear XX.

In Magic, the various game mechanics such as counterspells, direct damage, healing, and so on, are divided amongst five colors. Players can build decks with as many of these five colors as they want, but the more colors they include, the harder it is to have the right mana to actually play the various colors of spells.

A simple diagram of the 5 colors of magic.

Consequently, decks are forced to specialize, which gives them inherent weaknesses. The color red, for example, has no way to destroy enchantment cards, so even if a red deck ended up being strong, it has a built-in weakness (it must either accept that it can’t destroy enchantments, or weaken its consistency by trying to incorporate another color that can). Also, each color has two enemy colors, and those enemy colors often include cards that are specifically powerful against their enemy colors. Again, if a red deck became too powerful, there would be blue and white cards that keep red in check, at least somewhat.
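The consistency cost of adding colors can be put in rough numbers. The sketch below is a simplified model, not how Wizards of the Coast tunes the game: it assumes a 60-card deck with 24 single-color lands split evenly between the colors, and asks only whether a 7-card opening hand holds at least one land of every color. It ignores mulligans, later draws, and multi-color lands. The function name `p_hand_has_every_color` is made up for this example.

```python
from itertools import combinations
from math import comb


def p_hand_has_every_color(deck_size, hand_size, lands_per_color):
    """Probability that a random hand holds at least one land of each color.

    Uses inclusion-exclusion over the sets of colors missing from the hand.
    """
    total_hands = comb(deck_size, hand_size)
    colors = range(len(lands_per_color))
    probability = 0.0
    for k in range(len(lands_per_color) + 1):
        for missing in combinations(colors, k):
            excluded = sum(lands_per_color[c] for c in missing)
            # Hands drawn only from cards that are not lands of the missing colors.
            hands = comb(deck_size - excluded, hand_size)
            probability += (-1) ** k * hands / total_hands
    return probability


# Assumed deck: 60 cards, 24 lands split evenly, 7-card opening hand.
for n_colors in (1, 2, 3, 4):
    split = [24 // n_colors] * n_colors
    print(n_colors, round(p_hand_has_every_color(60, 7, split), 3))
```

Under these assumptions the probability falls with every color added, which is the self-balancing pressure that pushes decks to specialize.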

Finally, when Wizards of the Coast prints a new set with new mechanics, they usually include a card or two that are tuned to be fairly weak, but that specifically counter the new mechanic. I think they hope that these specific counters are not needed, but if the metagame becomes completely overwhelmed by the new mechanics, then there are at least some fail-safes the metagame can use to fight the new mechanic.

For example, Magic’s Odyssey block focused on new mechanics involving the discard pile (called the “graveyard” in Magic), and the card Morningtide could remove all cards from all graveyards. If players started getting too tricky with their graveyards, Morningtide was a counter. In practice, this counter wasn’t really needed though. Later on, Magic’s Mirrodin block focused on artifact cards. The card Annul could counter artifacts (and enchantments) for only one mana, and the card Damping Matrix prevented artifact abilities from working. In Mirrodin’s case, the artifact mechanics really did get pretty out of hand. Annul and Damping Matrix were good ideas, but even stronger fail-safes were needed during Mirrodin.

This is really a similar concept to Yomi Layer 3 that I mentioned in part 2. The idea is to build in counters to the game so that even if some things end up more powerful than you expected, the game is resilient enough that players can deal with it.

Guilty Gear is a very important example for its fail-safe systems. I described that game’s system in detail in this article, but here’s a quick refresher.

Guard Meter

Every time you hit the opponent, their “guard meter” goes down. The lower it is, the shorter their hitstun is. That means that even if a string of moves is an “infinite combo”, meaning that once you land the first hit, you could keep hitting them forever, their shorter hitstun eventually lets them block to escape the combo.
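A toy model shows why this ends an otherwise infinite loop. Every number and the linear scaling rule below are invented for illustration and are not Guilty Gear's real frame data: each hit drains a fixed amount of guard meter, hitstun is proportional to the meter that remains, and the attacker's loop needs a fixed number of frames between hits.

```python
def hits_before_escape(base_hitstun=20, loop_gap=12, meter=100,
                       drain_per_hit=10, max_hits=1000):
    """Count the hits of a looping combo that connect before the defender can block.

    Assumed rule: hitstun (in frames) scales linearly with the remaining guard
    meter. The loop needs `loop_gap` frames between hits, so the next hit only
    combos while hitstun >= loop_gap.
    """
    hits = 0
    while hits < max_hits:
        hits += 1  # this hit connects
        meter = max(0.0, meter - drain_per_hit)
        hitstun = base_hitstun * meter / 100
        if hitstun < loop_gap:
            break  # defender recovers in time to block the next hit
    return hits


print(hits_before_escape())                 # with the guard meter draining
print(hits_before_escape(drain_per_hit=0))  # without it, the loop hits the cap
```

With the drain switched off the loop only stops at the artificial `max_hits` cap, which is the infinite combo the fail-safe is there to prevent.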

Progressive Gravity

When you are juggled in the air during a combo, the gravity applied to your character gets greater and greater over time. So even if a combo could juggle forever somehow, the victim’s body falls faster and faster over time, which would eventually ruin the infinite juggle.
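The same kind of toy model works for juggles. The values here are made up, and gravity is assumed to grow by a fixed factor per hit rather than continuously over time: every relaunch sends the victim up at the same speed, so airtime is `2 * launch_speed / gravity`, and the juggle continues only while that airtime covers the attacker's relaunch time.

```python
def juggle_length(launch_speed=10.0, gravity=1.0, gravity_growth=1.25,
                  relaunch_time=8.0, max_hits=1000):
    """Count juggle hits that land before the victim falls too fast to relaunch.

    Assumed rule: gravity is multiplied by `gravity_growth` after every hit.
    """
    hits = 0
    while hits < max_hits:
        # Time for the victim to rise and fall back to launch height.
        airtime = 2 * launch_speed / gravity
        if airtime < relaunch_time:
            break  # victim lands before the attacker can hit again
        hits += 1
        gravity *= gravity_growth
    return hits


print(juggle_length())                    # progressive gravity ends the juggle
print(juggle_length(gravity_growth=1.0))  # constant gravity runs to the cap
```

The designers do not need to know which juggle will turn out to be infinite; any loop that relies on a fixed airtime eventually fails once gravity has grown enough.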

Green Blocking

Imagine an attack sequence against a blocking opponent where you do a few hits in a row that leave you pushed back, too far away to continue. But when you get to the last hit, you cancel it with a special move that makes your character move forward. After that, you repeat the sequence and force the opponent to block forever. In case this type of lock-down trap exists, Guilty Gear heads it off at the pass with a feature I call “green blocking.” While blocking, you can use some of your super meter to create a green force field that pushes the opponent pretty far away from you, letting you ruin the spacing of his trap.

Here’s that green blocking thing in Guilty Gear.

Each of these features is designed to solve a problem that the designers didn’t even know they had. They just knew that if the game ever ended up in a state of infinite combos or juggles or lockdowns, some fail-safe features would need to save them. Also, these fail-safe features freed them to design incredibly varied and extreme characters. No matter how crazy a character was, or how scary his rushdown tactics ended up, the designers knew that this defensive system of fail-safes shared by all characters would keep things at least somewhat in check.

Playtesting and Course-correcting

Whether or not your game has fail-safe systems, at some point you have to design a diverse set of characters / races / whatever, make each one coherent and interesting, then have the confidence that you’ll sort out the balance problems in playtesting. All the theory in the world will not save you from playtests, of course.

You need to start tuning the game, and react and learn as you go. Do not let a producer turn tuning into a fixed list of items that you are accountable for checking off, one by one. It’s an organic, continuous process that keeps going until you need to ship the game. Playtesting lets you discover things you couldn’t have predicted ahead of time, and you should be open to those discoveries. The goal isn’t to make the exact game you originally envisioned, because your original vision did not take into account all the things you learned from development and playtests. When you or the testers discover nuances or unexpected properties, you have the chance to build around those and incorporate them into the game’s balance.

The Tier List

During the balancing of Street Fighter, Kongai, and my card game called Yomi, I used a similar approach with playtesters. I think this approach doesn’t really depend on the genre, and the key idea is managing the tier list.

The term “tier list” is, I think, a term from the fighting game genre. It means a ranking of how powerful each character is from highest to lowest, but it also accepts that such a list cannot be exact. Instead of ranking 20 characters from 1 to 20, the idea is to group them together into “tiers” of power. Remember that if a divine being handed you a 100% perfectly balanced game, players would still make tier lists. You should accept the existence of these lists from players as a given, and it’s your job to manage this list.

In Kongai and Yomi, I even gave the players a template for the tier list that is most useful for me as a designer. First, I tell them to think of three tiers: top, middle, and bottom. Then I tell them about the two “secret tiers” that I hope are empty.

0) God tier (no character should be in this tier, if they are, you are forced to play them to be competitive)

1) Top tier (don’t be afraid to put your favorite characters here. Being top tier does not necessarily mean any nerfs are needed)

2) Middle tier (pretty good, not quite as good as top)

3) Bottom tier (I can still win with them, but it’s hard)

4) Garbage tier (no one should be in this. Not reasonable to play this character at all.)

My first goal of balancing is to get the god tier empty. Of course some character will end up strongest, or tied for strongest, and that is ok. But a “god tier” character is so strong as to make the rest of the game obsolete. We have to fix that immediately because it ruins the whole playtest (and the game). Also, the power level of anything in the god tier is so high, that we can’t even hope to balance the rest of the game around it.

My next goal is to get rid of the garbage tier characters. They are so bad that no one touches them, and it’s usually pretty easy to increase their power enough to get them somewhere between top, middle, and bottom. If they are somewhere in those three tiers (which gives you a lot of latitude actually), at least they are playable.
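The five-tier template and the order of those two goals can be written down as a small data structure. This is one possible encoding, not a tool used on Street Fighter, Kongai, or Yomi: the `Tier` enum, the `balance_priorities` function, and the character names are hypothetical.

```python
from enum import IntEnum


class Tier(IntEnum):
    GOD = 0      # should be empty: forces everyone to play this character
    TOP = 1
    MIDDLE = 2
    BOTTOM = 3
    GARBAGE = 4  # should be empty: not reasonable to play at all


def balance_priorities(tier_list):
    """Return (character, action) pairs, god tier first, then garbage tier.

    `tier_list` maps a character name to a Tier. Characters in the top,
    middle, or bottom tiers are treated as playable and are not listed.
    """
    god = sorted(name for name, tier in tier_list.items() if tier == Tier.GOD)
    garbage = sorted(name for name, tier in tier_list.items() if tier == Tier.GARBAGE)
    return [(name, "nerf") for name in god] + [(name, "buff") for name in garbage]


# Made-up placements for illustration only.
example = {
    "Alpha": Tier.TOP,
    "Bravo": Tier.GARBAGE,
    "Charlie": Tier.GOD,
    "Delta": Tier.BOTTOM,
}
print(balance_priorities(example))
```

An empty result means both secret tiers are empty, which is the state the first two balancing goals aim for.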

Public Tier Lists

I really like it when playtesters all see each other’s tier lists. The debate this spawns is very useful for me to read (or overhear in person) and for the playtesters to sort out their ideas. Sometimes when someone put a character unusually high or low on the list, I dug deeper to find out that player really did know something most of the rest of us didn’t. Other times, that player was just crazy and the rest of the testers were happy to point that out. It’s also good to see what kind of consensus the testers come up with, like if they all rank a certain character as the worst, for example.
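One way to surface the unusually high or low placements worth digging into is to compare each tester's list against the group median. This is a hypothetical helper, not a process described in the article: tiers are the numbers 0 to 4 from the template, and the function name, the `min_gap` threshold, and the tester and character names are assumptions.

```python
from statistics import median


def find_outliers(tier_lists, min_gap=2):
    """Return (tester, character, tier, consensus) for placements far from the median.

    `tier_lists` maps a tester name to a dict of character -> tier number (0..4).
    """
    votes_by_character = {}
    for tester, ranking in tier_lists.items():
        for character, tier in ranking.items():
            votes_by_character.setdefault(character, []).append((tester, tier))

    outliers = []
    for character, votes in votes_by_character.items():
        consensus = median(tier for _, tier in votes)
        for tester, tier in votes:
            if abs(tier - consensus) >= min_gap:
                outliers.append((tester, character, tier, consensus))
    return outliers


# Made-up lists: tester "C" rates "Alpha" two tiers above everyone else.
lists = {
    "A": {"Alpha": 2, "Bravo": 3},
    "B": {"Alpha": 2, "Bravo": 3},
    "C": {"Alpha": 0, "Bravo": 3},
}
print(find_outliers(lists))
```

The output only says where to look. Whether the outlier knows something the others do not, or is simply wrong, still has to be settled by the debate between testers.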