万字长文,关于概率要素和统计学要素在游戏设计中的运用,上篇

篇目1,从“热手”现象看游戏风险与奖励机制设计

游戏邦注:本文原作者是认知心理学博士Paul Williams,他在这篇论文中讨论了游戏设计与“热手”心理现象之间的联系,并认为深入研究“热手”现象,可为游戏的风险和奖励机制设计指明方向。

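Abstract: This paper examines player risk-taking and reward structures in a game designed to investigate the psychological phenomenon known as the “hot hand.” The expression comes from basketball, where it is commonly believed that a player on a scoring streak is somehow more likely to make the next shot than their long-term record would suggest. Many people believe that athletes in a range of sports show such streaks in performance, yet a large body of evidence discredits the belief. One explanation for the gap between belief and data is that players on a successful run take greater risks because of their growing confidence. We investigate this possibility by developing a top-down shooter. Such a game has unusual requirements, including a well-balanced risk and reward structure that rewards players equally whatever tactics they adopt. We describe the iterative development of this top-down shooter, including quantitative analysis of how players adapt their risk-taking under varying reward structures, and we discuss what the findings imply for general principles of game design.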

关键词:风险、奖励、热手、游戏设计、认知、心理学

背景介绍

平衡风险与奖励是设计电脑游戏时的一个重要考虑因素。一个优秀的平衡风险与奖励机制可以提供很多额外游戏价值。与之类似的是赌博所带来的兴奋感。当然,当玩家如果在一个策略上打赌,他们都会持有一定的胜算和风险。打赌时,希望更大的风险能得到更多奖励的想法是很合理的。Adams不仅称“风险总是必然与奖励同在”,而且他还认为这是电脑游戏设计的基本原则。

许多游戏设计书也讨论了平衡风险与奖励在游戏中的重要性:

·“奖励与风险相当”

·创造一个复杂而进退两难的困境,让玩家自己权衡利弊,判断每一步行动可能产生的风险或者回报

·给玩家一个选择的机会,要么在奖励少的情况下安全地玩,要么在奖励多的情况下冒险,这是使游戏有趣刺激的绝妙方法。

风险与奖励在其他领域也有所体现,例如股市交易和体育。在股票市场,风险与回报总会影响投资选择。因为高风险下潜藏着高回报,所以一些投资者可能喜欢冒险投资如纳米技术这种股票。其他投资者可能更保守,选择投资浮动性更小的联邦债券,虽然得到更低的回报,但同时也承担更小的风险。在体育赛场上,因为从远距离投球能得三分,所以篮球运动员有时会采用更困难的动作来争取三分球,因此得冒更大的风险。

心理学家、认知科学家、经济学家等对这些因素非常感兴趣,总喜欢研究这些因素对人类在风险和回报结构中不同决定的影响。然而,股票市场和体育领域是喧闹的环境,这一点给玩家和研究者分离出任何事件的风险与奖励情况增加了难度。电脑游戏提供了一种卓越的平台,可以在控制良好的环境下,让人们研究风险与奖励对玩家行为的影响。我们从认知科学和游戏设计两个角度来考察风险与奖励,并相信这两个角度是互补的。心理学可以为游戏设计提供理论依据,而设计得当的游戏也可以成为心理现象研究的有利工具。

本文讨论的对象是上下神射手这种可不断更改迭代、以玩家为主心,并运用于调查“热手”心理现象的游戏。虽然本文关注的重点在于风险与奖励机制的设计过程,这种机制符合热手游戏的设计需求,我们将从这种现象的综述和目前的研究情况入手进行探讨。在随后的部分中,我们将描述游戏设计和研发的三个阶段。在最后一部分,我们会把这些发现与游戏设计的更普遍原则相互联系起来。

热手效应

“热手”的表达起源于篮球,它描述的是认为球员在进球后更有可能在下一次投篮时得分的心理现象(游戏邦注:也就是说,人们普遍认为这些球员正处于得分顺势中,投篮顺手,这种心理现象被称为“热手效应”)。在一份针对100名篮球迷的调查中,91%的人认为球员在成功投篮两次或三次后更可能再次命中,而之前如果连失几个球,以后再投篮时就不会那么顺手了。

直观的看,这些观点和预言似乎合理,开创性研究并没有发现在1980-81年的费城76人队投篮命中率,或者1980-81年和1981-82年的凯尔特队的罚球命中率中有出现热手现象。随后一系列运动研究证实了一个惊人的发现——投篮表现的冷热势可能只是一种假象。

然而,之前的投篮热手结果研究揭示了一个更加复杂的情况。之前的研究暗示,有确定困难的任务和不定困难的任务之间存在显著区别。篮球中的罚球可以作为一个确定困难的任务的例子。

在这种投篮中,距离是不变的,所以每一次投篮都有相同的难度级别。而在不确定困难的任务里,就像篮球赛中的投篮,球员可能要调整他们每一投的风险级别,所以投篮的难度会随着不同的投射距离、守势的压力和整个比赛的情况而改变。

有证据表明,玩家可能在确定难度的任务,例如掷马蹄铁、台球和十瓶式保龄球中获得顺势。然而,在非固定难度的任务里,例如棒球、篮球和高尔夫球,这种冷热势并不明显——其实际情况与普遍观念相反。

对于流行观念和真实数据之间不一致的最普遍解释是,人类往往误读了数字中的小趋向模式。也就是说,我们倾向于形成基于几个事件组的模式,例如球员三连投,然后用这种模式来预测随后的情况。关于投篮,经过三次成功的投球后,人们会错误地认为下一个投射比起长期水准更可能成功。这就是顺势的谬误。

关于这种不一致的另一种解释表明,为了不产生失误,投球者在一连串的成功中往往要冒更大的风险。在这种情形下,一个球员在热手情况下确实表现更好—–因为他们在相同准确度的条件下承担了更困难的任务。这种能力的增强恰好印证了热手预言,然而传统的投篮表现纪录却没有发现这一点。尽管我们通过界定固定难度和非固定难度之间的区别(因为热手情况多发生于固定难度的任务中,运动员所面临的是难度固定的挑战),可以让这种假设暂时成立,但只有进一步的研究才能证实这种假设究竟是否站得住脚。

不幸的是,如果设法收集更多运动比赛中的数据来研究热手现象,则不免带有主观性因素。我们如何评估一个确定投射的难度超过另一个投射呢?如何辨别球员是否采取了更有风险的策略?

解决这个问题的一个好办法就是,设计出可以准确记录玩家策略变化、难度不定的电脑游戏。这种游戏或许可以回答与心理学和游戏设计相关的重要问题—-玩家如何对游戏中一连串的成功或失败做出反应?

这款“热手游戏”的开发过程正是本文讨论的重点。这种游戏需要一个非常协调的风险和奖励机制,并通过玩家所采取的冒险行为,不断调整游戏结构。在各个开发阶段,我们测试了玩家对风险和奖励机制作出的反应,然后按照玩家的策略和表现,分析这些结果,以便将其运用于下一阶段的游戏设计。

这种设计的特征是反复性、以玩家为主心。虽然本文所示的游戏设计比较简单,但考虑到心理学调查地准确性的要求,我们执行的是比一般游戏开发更为规范的玩家测试。结果发现,我们可以准确评估玩家策略的改变,发现即使是风险与奖励机制中的微小变化,也会对玩家的风险策略产生影响。

游戏要求和基本设计

这种热手游戏首先需要一个相当协调的风险和奖励机制,它必须具备几个(5-7)高度协调的风险级别,使得玩家乐于调整他们的冒险级别来应对成功和失败。比如,一个风险级别得到的奖励实际上多过其他的风险级别,玩家久而久之会学到这点,然后就不太可能在这个级别中改变策略了。所以我们希望每个风险级别都能让普遍玩家都得到相应奖励。换句话说,不论采用什么风险级别,玩家得到最佳奖励的机会应该是相等的。

第二个要求是,支持我们在玩家成功和失败后对其策略进行考察。如果玩家经常失败,我们就无法记录足够的成功次数。如果玩家大多时候成功了,我们就考察不了失败情况。所以这个游戏的核心要素和关键难度在于,提供平均的成功概率,其范围介于40-60%。

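A top-down shooter developed in the Flash environment using ActionScript meets these requirements. Any simple action game built on a physical challenge with hits and misses would suit our research, but a top-down shooter has several advantages. First, people are very familiar with this style of game, so players pick it up easily, which helps when collecting experimental data. Second, the key difficulty parameters (target speed and acceleration) are simple to code, so we can manipulate the reward structure easily and precisely. Finally, a “shot” in a top-down shooter is analogous to a “shot” in basketball, with comparable “hit” and “miss” outcomes. This ties the current experiment back to the origins of the hot hand.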

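In the top-down shooter, the player's goal is to shoot down as many alien ships as possible within a fixed time, so both the number of shots taken and the number of hits depend on the player's performance and strategy. The screen shows two ships: the alien ship and the player's shooter (Figure 1). A simple interface shows the current hit count and the time remaining. During play, the player's ship stays stationary at the bottom center of the screen. Only one alien ship is on screen at any time; it moves horizontally back and forth across the top of the screen, bouncing off the left or right edge on each pass. The player fires at the alien ship by pressing the space bar and gets only one shot at each new ship. Each ship destroyed adds one to the hit count.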

图1:游戏界面(from gamerboom.com)

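Each alien ship enters from the top of the screen and moves toward the left or right edge at random. It bounces off the sides of the screen and makes eight horizontal passes before leaving. The ship starts out fast but decelerates at a constant rate, so each pass is slower than the last. The game therefore presents a task of variable difficulty: the player chooses a risk level by deciding which pass to fire on, and shots on later passes are easier.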

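For the player, the risk-and-reward equation is fairly simple. Every hit is worth the same score no matter when the player fires. Because the goal is to destroy as many alien ships as possible in a game period, firing early pays off: an early hit earns the point and also leaves more time to shoot down subsequent ships. However, because the alien ship slows on each of its eight passes, the earlier the player fires, the harder the target is to hit. A miss costs the player a 1.5-second penalty: the next ship is delayed by 1.5 seconds, which lengthens the time between scoring opportunities.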

第一阶段:玩家锁定目标

经过自测游戏,我们将游戏拓展到在线版。通过向学生、家庭和朋友发电子邮件,我们找到了五名实验玩家。我们要求玩家在给定时间内击落尽可能多的外星飞船。玩家先在练习级别上试玩6分钟,然后在竞技级别上玩12分钟。因为玩家的策略和命中率存在差别,所以他们遇到的外星飞船数量也各不相同。一个玩家有可能在60秒内遇到大约10架外星飞船。游戏结束后,玩家对每架外星飞船的反应时间和命中率都被记录在案。

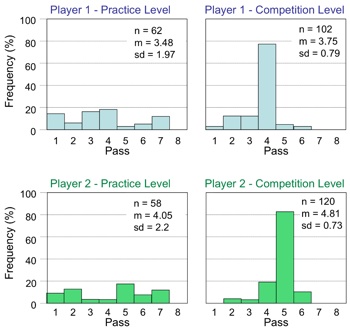

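One requirement of the game is that players fire across a range of difficulty levels (a later pass means an easier shot). This initial test checked whether players would explore that space and vary their risk-taking over the course of the game. Figure 2 shows the results for players 1 and 2. Players tended to be exploratory in the practice level, as shown by the spread of shots between the first and eighth passes. In the competition level, however, players tended to adopt a single strategy, visible as the large spikes in Figure 2. This suggests that, after a period of exploration, players tried to score more by firing on a fixed pass.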

图2:玩家首次测试结果(from gamerboom.com)

上图是两名玩家在游戏第一阶段的测试结果。玩家1数据显示在上部,玩家2的数据显示在下部。左边的柱形代表在练习阶段的射击频率;右边则代表在竞技阶级中各个经过的射击频率。受测玩家在练习模块,射击频率均匀地分布在各个飞船经过,如左边图所示。但之后在竞技模块中,玩家采取固定策略,右半边的图中的尖峰正体现了这种情况。在以上各图中,n代表玩家的尝试射击总数;m代表命中数,sd代表尝试射击数的标准差。

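In experimental terms, sticking to a single strategy is called “investment.” After the game, players reported that because the ship decelerates at a constant rate, they could always hit the target by firing on the same pass when the ship was a particular distance from the edge. Each player adopted a fixed timing strategy for a specific pass (that is, one specific difficulty level), and hits per unit time were consistently highest on that pass. In the example plots (Figure 2), one player “invested” in the fourth pass and the other in the fifth. This kind of investment runs counter to one requirement of the hot-hand game and exposed a major design flaw that had to be corrected in the next iteration.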

第二阶段:鼓励玩家探索

游戏设计第二个阶段的目标是解决玩家在单一策略上的投资问题。我们提议的解决办法是改变玩家飞船的位置,这样它就不再出现在屏幕中间那个相同位置了,而是在每架外星飞船出现时随机转移到中间的左边或右边(图3)。这样,在每一次测试,玩家飞船的位置都从平均分布的100像素中心的左边或右边中随机移动。这个操作旨在防止玩家习惯单一策略,总是等待凑效的时间序列采取行动(例如总是在飞船第四次经过,与屏幕边沿有固定距离时射击)。

图3:改变玩家射击位置(from gamerboom.com)

上图是游戏第二阶段的屏幕。蓝色矩形出现在这里是为了指明玩家飞船可以随机定位的范围,但在真正的游戏过程中不会出现这个蓝色矩形。

这一次我们推出了这个游戏的在线版本,并记录了6个玩家的测试数据。再次让他们先在练习级别上试玩6分钟,之后在竞技级别上玩12分钟。

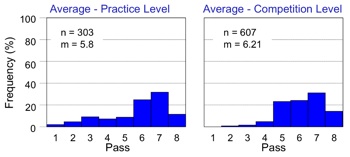

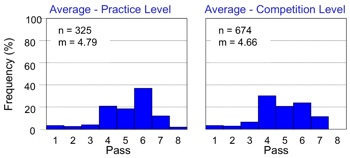

各个玩家在竞技级别的结果显示在图4上。在玩家射击位置里引入随机变化,显著减少了玩家投资于同一次飞船经过的倾向。与图2相比,图4变化的增加突显了投资的减少。因此,游戏中的微小调整对玩家的行为产生了重要影响,它鼓励玩家在游戏中改变冒险策略。另外,这种调整满足了热手研究的必备要求。

图4:玩家改进策略(from gamerboom.com)

上图是玩家在测试第二阶段中竞技级别的个人结果。减少的峰值和变化的增加表明,与第一阶段相比,玩家在竞技级别的单次飞船经过中射击的倾向大大减少。以上各图,n代表玩家的尝试射击总数;m代表命中数,sd代表尝试射击数的标准差。

图5代表所有的玩家在练习级别和竞技级别的平均测试数据。所有玩家在练习和竞技级别中的平均数据,均突出了游戏奖励机制对玩家游戏策略的影响。左边的柱形代表练习级别(没有在图4中显示)的数据,右边则代表竞技级别的数据。

图5:玩家推迟射击(from gamerboom.com)

上图是第二阶段中玩家的平均数据结果。左边的柱形图代表在练习级别每一次飞船经过的射击频率(%),右边则显示了在竞技级别每一次飞船经过的射击频率(%)。在以上各图中,n是所有玩家在该模块中尝试射击的总数;m是平均命中数。练习级别和竞技级别的射击对比,突出了玩家在游戏进程中推迟射击的趋势。

图5显示随着游戏的推进,玩家的射击策略有预见性地发生改变。例如,在练习级别的平均命中数(m=5.8)比竞技级别的(m=6.21)更少。这样在竞技级别,玩家往往更迟发动射击。这表明游戏奖励总集中于后面几次飞船经过,而玩家越来越熟悉这种奖励机制时,就会相应地调整游戏玩法。

为了在热手研究中最小化这种偏差,我们在平均玩家表现的基础上检测了风险与回报机制。我们特别感兴趣的是,第一次成功射击的可能性以及这种可能性如何转换为奖励系统。在后面的飞船经过中射击要花更多时间,但与之相伴的是更高的命中率。因为热手游戏的目标是在12分钟内击中尽可能多的外星飞船,所以命中率与所花时间对奖励机制来说具有同等重要性。

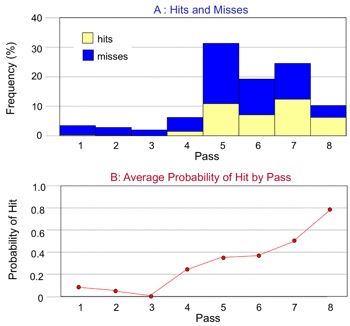

之后我们分析了一种情况,假如玩家坚持在特定的飞船经过里射击各个出现的飞船,那么12分钟内,平均命中数是多少。例如,在发现第一飞船经过的命中率后,玩家在第一次经过中会采取几次射击行动?第二次飞船经过时呢?以此类推,其后几次飞船经过的情况又将如何?图6显示了这个检测的结果。图6A显示了玩家在几次飞船经过时射击的平均数(柱形的总高度),以及每一次飞船经过的命中数(柱形黄色部分的高度)。图6B用这份数据表现成功的可能性,并且表明在后面几次的飞船经过中,玩家成功的概率更高。这从实验上证实,玩家在心理上感觉,后面几次飞船经过更容易让他们射中目标。

图6:预测玩家命中率

上图是游戏进程的第二阶段中的平均数据和模型预测。在图A,每个矩形的总高度表示尝试射击频率。黄色和蓝色矩形的高度表示命中和错失比例。图B表现的是给定尝试射击总数,每一次飞船经过命中的平均概率。图C和图D在实验结果的基础上,预测了假如玩家自始至终只在一次飞船经过时射击的命中数量。

这些可能性评估了在全程12分钟的模块里,只在一次从飞船经过中射击的情况下,一般玩家可能取得的总命中数。通过演示每次经过的期望命中数,我们为当前的游戏画出了一个最佳的策略曲线,如图6C所示。这个曲线是单调递增的,表明随着经过次数的增加,平均玩家的总命中数也随之增加。换句话说,玩家在低难度射击中更可能命中。游戏奖励明显集中于后面的飞船经过次数,这证实了玩家在游戏过程中改变了策略(即推后射击)。随着对奖励机制的熟悉,玩家的策略也相应地转向更迟,更容易的射击机会。

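In game terms, always firing on pass 8 can be regarded as an exploit. Figure 6C shows that consistently firing on pass 8 yields the most hits, so it became the players' common strategy. Once that strategy forms, players chasing a run of hits have little reason to fire earlier. The design therefore still failed to meet the requirements of a hot-hand game.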

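A simple adjustment fixes this: shorten the waiting penalty after a miss. The penalty was 1.5 seconds, which leaves room to adjust it within the reward structure. Players who fire on earlier passes take more shots and therefore miss more often, so reducing the penalty per miss effectively raises the reward for firing early.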

图6D表现的是在惩罚时间从1.5秒减少到0.25秒的情况下,一般玩家在12分钟里的预测命中数。这看似很小的调整平衡了奖励机制,这样玩家就得到更平衡的奖励(游戏邦注:至少从第3次飞船经过到第8次飞船经过是这样的)。对第一次飞船经过和第二次飞船经过准确率的评估是以小次数测试为基础的,这使它们难以成为测试模型;玩家避免采取更早的射击行动,有可能是因为外星飞船移动得太快。但允许玩家在第3次到第8次飞船经过中射击,仍然可为我们的热手研究提供了足够的参考数据。
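The effect of the miss penalty on the reward curve can be sketched with a small model. This is only an illustration under stated assumptions: the per-pass hit probabilities and firing times below are hypothetical placeholders, not the study's measured data, and the model assumes a new ship appears immediately after a hit. Only the 12-minute block and the 1.5-second and 0.25-second penalties come from the text.

```python
BLOCK_SECONDS = 12 * 60  # length of the competition block, from the text

# Hypothetical per-pass values (NOT the study's data): chance of a hit when
# always firing on pass k, and seconds a ship has been on screen by then.
HIT_PROB = {3: 0.15, 4: 0.25, 5: 0.35, 6: 0.45, 7: 0.55, 8: 0.65}
SECONDS_TO_PASS = {3: 1.7, 4: 2.9, 5: 4.2, 6: 5.5, 7: 6.8, 8: 8.0}


def expected_hits(pass_no, miss_penalty):
    """Expected hits in one block for a player who always fires on pass_no."""
    p = HIT_PROB[pass_no]
    # Average time per ship: time to reach the chosen pass, plus the
    # penalty delay, which is only paid when the shot misses.
    seconds_per_ship = SECONDS_TO_PASS[pass_no] + (1.0 - p) * miss_penalty
    ships_faced = BLOCK_SECONDS / seconds_per_ship
    return ships_faced * p


for penalty in (1.5, 0.25):
    curve = {k: round(expected_hits(k, penalty), 1) for k in HIT_PROB}
    print(f"penalty {penalty}s -> {curve}")
```

With these placeholder numbers, the 1.5-second penalty produces a curve that rises toward pass 8, while the 0.25-second penalty makes passes 3 to 8 pay roughly the same, which is the qualitative pattern the text describes for Figures 6C and 6D.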

第三阶段:平衡风险与奖励机制

在游戏设计的第二阶段,我们展示了玩家在飞船第8次经过时取得最佳表现的风险与奖励探索策略。我们认为这有可能就是促使玩家在飞船后面经过时射击的原因。可以用实验数据来模拟玩家表现,表明将惩罚时间降至0.25秒就能解决这个问题。

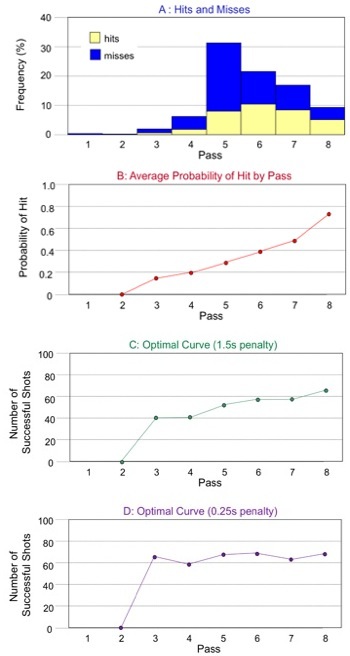

在改良版的在线游戏中,惩罚时间是0.25秒,五位玩家的测试数据均已一一记录。平均结果显示,在练习和竞技级别,玩家的射击大致发生在相同的飞船经过次数里(图7)。这个特征与图4相反,后者突显玩家在12分钟的竞技级别中,呈现了在飞船后面经过时才射击的倾向。这组数据证实了选择0.25秒惩罚时间的正确性,同时也证实了奖励机制的改变,可能影响玩家行为的说法。

图7:改变奖励机制的影响

图7:游戏第三阶段玩家的平均数据结果。左图代表玩家在练习级别的各次飞船经过的射击频率;右图代表玩家在竞技级别的各次飞船经过的射击频率。在以上各图中,m是平均命中率,n是玩家在该模块的总射击数。平均命中率显示了在均衡的奖励机制下,玩家不再尝试推迟射击。

我们开发热手游戏的前提条件就是,游戏在每一个假定风险级别中,都应该为一般玩家提供相应的奖励(总命中数)。第三阶段起的设计通过平衡奖励制度,开始与研究热手现象的要求保持一致。

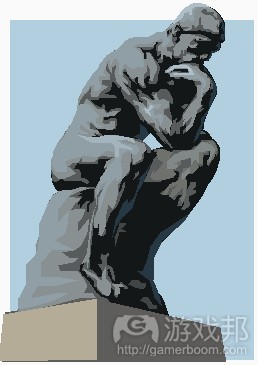

最后,我们要求这个游戏有一个总体上的难度级别,使玩家的尝试射击有40-60%的成功率。这个范围内的表现,有助于我们综合比较玩家对应一连串成功和失败的策略。也就是,对热势和冷势的测试。图8突出总体成功概率确实满足这个标准,总体成功概率(命中率)是43%。因此,这个游戏现在满足了研究热手现象的必要条件。

图8:第三阶段平均结果(from gamerboom.com)

图8:游戏开发第三阶段的竞技级别的平均结果。在图A,每一块矩形的总高度代表玩家在每一次飞船经过时尝试射击的频率。命中和错失比例分别用黄色和蓝色表示。图B是根据总体射击次数的情况,显示每一次飞船经过时的平均射击成功概率。在图B里,ps是总体成功概率(命中率)。

总结

为了研究热手心理现象,我们才开发这个电脑游戏作为研究工具,需要以此观察玩家对一连串成功和失败挑战的冒险反应。

我们设计了一款简单的上下神射手游戏,在该游戏中,外星飞船在屏幕上来回经过8次,玩家只有1次射击机会。玩家在游戏中面对几次相同的挑战。该游戏的目标是在一系列时间段内击落尽可能多的外星飞船。随着飞船减速,游戏设置里的风险就相应减小。玩家在靠前的飞船经过时成功击落目标就可获得1个命中的奖励,并且立即出现新的外星飞船。错失一次射击,就以一段额外的等待时间作为对玩家的惩罚。

作为一款热手游戏,它需要达到特殊的冒险和奖励标准。玩家需要在游戏中探索一系列冒险策略,并且均衡地获得与风险级别相当的奖励。我们还希望这个游戏挑战有一个与失败率大致相当的平均成功率,即介于40-60%,这样我们就可以用这个游戏来收集玩家应对成功和失败的行为数据。

为了达到目的,我们开发了超过三个阶段的迭代游戏版本。在每一个阶段,我们都通过在线版游戏的测试收集实验数据,并分析玩家的策略和表现。在各个后续设计阶段,我们都调整了游戏机制,使其在一定程度上达到平衡,以满足热手游戏的特殊要求。这个游戏设计的调整及其影响总结如表1所示。

表1:实验总结(from gamerboom.com)

表1总结:各阶段的设计调整及其对热手实验要求的影响。

游戏设计书往往会描述出游戏迭代的设计过程。这种迭代过程支持设计者在后继开发阶段解决原先没有预料到的问题。由于游戏机制在开发之初并不明朗,只有在游戏创建和操作阶段才突出来,因此迭代过程对游戏的完善来说尤其重要。Salen和Zimmerman将这种迭代过程描述为“以试玩为基础“的设计,同时强调了”游戏测试和创建原型“的重要性。为了实现目标,开发者往往需要连续创建游戏原型。我们确实是以高要求开始用相同的迭代完善、创建原型方法来改进我们的游戏设置。

我们这个方法的主要不同点在于,我们在各个设计阶段都会更规范地测验玩家的策略和探索行为。考虑到我们的游戏要求相当独特,单纯的客观反馈无法支持我们对游戏机制的需求进行微小调整。比如,在最初的测试里,我们发现玩家倾向于单一的游戏策略。进一步的分析也表明玩家通过射击最后一次经过的飞船,可以容易地增加他们的总体命中数,这是玩家对游戏策略的潜在开发。

游戏策略的探索问题在游戏界常引起争议,并屡屡被作为心理学界研究对象。开发和探索之间的权衡现象在许多领域也存在,外部和内部条件决定了玩家为扩大最大利益,或者最小化损失所采取的策略。例如,在寻找食物过程中,玩家就会关注资源分布情况。集中的资源,会让玩家集中对资源丰富的就近地区进行开发,而分散的资源则会将玩家引向对空间的探索过程。

Hills等人表明,探索和开发策略在精神领域也存在竞争,这取决于对需求信息的奖励,以及为研究探索所付出的代价。在我们的游戏环境里,玩家始终在最容易的情况下(即飞船第8次经过时)射击的这种策略总会产生最高的奖励。这就鼓励了玩家在游戏中采取推迟射击的策略,反过来也抑制了玩家探索其他策略(更早的射击)的尝试。如果没有收集玩家的实验数据,我们不可能预测到这个结果。

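Another major advantage of collecting experimental data is that it let us change the reward structure based on measured player performance. In stages one and two, each missed shot cost the player 1.5 seconds. In stage three, based on our analysis of player performance, we reduced the penalty to 0.25 seconds. This small adjustment was enough to change player behavior and encourage players to risk earlier shots at the alien ship. Our game is quite simple in nature, yet it is enough to demonstrate both the difficulty and the importance of designing a well-balanced risk and reward structure.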

其他游戏文献里谈到的另一个共同的设计原则是以玩家为中心,这被Adams定义为“一种由设计者想象自己所希望遇到的玩家类型的设计哲学。”尽管如此,还是有一些观点认为游戏设计通常是以设计者的经验为基础。将玩家纳入设计过程往往也涉及更多客观反馈,例如将中心群体和采访广泛运用于可用性设计。我们在研究中发现,即使是很简单的游戏挑战,使用实验数据来测验玩家如何应对游戏以及他们如何表现,这也会成为平衡游戏设置的一个重要元素。

我们也认识到这种方法有一些缺点,即平均考察每位玩家的表现,有可能忽略玩家之间的重要差异。如果有一个理想的玩家模型就太好了,但这种玩家是不可能存在的,事实上,关于谁是“玩家”这个问题本身就存在许多富有争议的不同观点,因此我们才需要收集不同玩家群体的实验数据。如果玩家之间的差异很大,设计者有可能得针对不同群体进行抽样调查,例如将其分为休闲玩家、硬核玩家等不同群体。

现在我们所完成的游戏设计已满足研究热手现象的要求。这个游戏也许可以回答以下几个问题:

1.在游戏挑战中,玩家如何对一连串的成功或失败作出反应?

2.如果玩家处于热势,他们会接受更困难的挑战吗?

3.如果玩家处于冷势,他们是否会降低风险?

4.这种多变的风险级别如何影响玩家表现的总体测验?

5.热手原则如何运用到游戏机制设计中?

相信关于这些问题的答案不仅会激发心理学家的研究兴趣,而且也能进一步促进游戏设计。例如,设计者可以激起玩家的热势,使其更倾向冒险或探索他们的策略。当然也有可能运用冷势来抑制玩家当前策略,这种游戏机制可以在不打断玩家注意力的情况下,不知不觉地控制玩家的冷热势。进一步探索热手现象,将对心理学研究和游戏设计产生重大意义。

篇目2,设计师应合理设置游戏中的机率因素

作者:Soren Johnson

设计师开发游戏的一个强有力工具是机率,通过随机机会决定玩家行为结果或创建游戏环境。但借助运气元素也存在缺陷,设计师需把握其中取舍——什么机会元素能够植入游戏中,什么时候会产生反效果。

游戏回馈机率

借助随机元素的一大挑战是人类通常无法准确评估机率。一个典型例子是赌徒谬论,认为机率会逐步均等。若轮盘连续5次出现黑色,玩家会认为其再次出现黑色的机率非常小,虽然其机率都一样。相反,人类总是认为存在某些根本不存在的相关联系——例如,认为篮球场上带有“热手效应”的射手还会屡屡得手,这是个误区。调查显示,若二者存在某种联系,那就是成功射击通常预示着随后的失误。

老虎机 from (pcpop.com)

同时,就像老虎机和MMO游戏设计师了然于心的那样,不均衡设置奖励关卡机率通常会令玩家觉得奖励频繁(游戏邦注:胜过其实际情况)。某商业老虎机公司曾于2008年公布其回馈比例:

* 每8回合的回报率是1:1

* 每600回合的回报率是2:1

* 每33回合的回报率是5:1

* 每2320回合的回报率是20:1

* 每219回合的回报率是80:1

* 每6241回合的回报率是150:1

80:1回报率能够带给玩家挑战困难,大获全胜的快感,但这个概率实际上仍然极小,根本不会让赌场陷入赔钱的风险。此外,人类通常无法准确评估极限可能——人们太常预测1%机率,而且通常认为99%机率同100%一样保险。
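A quick calculation shows how such a table spreads its return. This sketch assumes each row means “a payout of X times the bet occurs once every N plays on average” and that the rows are mutually exclusive outcomes; the text does not say whether the stake itself is returned, so the figure is payout per unit bet under that reading only.

```python
from fractions import Fraction

# (plays per occurrence, payout multiple), copied from the list in the text
PAYOUT_TABLE = [(8, 1), (600, 2), (33, 5), (2320, 20), (219, 80), (6241, 150)]


def win_frequency(table):
    """Share of plays that pay anything at all."""
    return sum(Fraction(1, plays) for plays, _ in table)


def return_per_unit_bet(table):
    """Average payout per unit wagered, summed over every row."""
    return sum(Fraction(payout, plays) for plays, payout in table)


print(f"plays that pay something: {float(win_frequency(PAYOUT_TABLE)):.1%}")
print(f"average payout per unit bet: {float(return_per_unit_bet(PAYOUT_TABLE)):.3f}")
for plays, payout in PAYOUT_TABLE:
    share = Fraction(payout, plays) / return_per_unit_bet(PAYOUT_TABLE)
    print(f"{payout}:1 once per {plays} plays -> {float(share):.1%} of the return")
```

Under this reading, the 80:1 row carries a little over half of the total return even though it appears only once in 219 plays, which is one way to see why uneven reward tiers feel more generous than they are.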

公平竞争

人们无法准确评估机率这点令游戏设计师处在有利位置。简单游戏机制,例如《Settlers of Catan》中基于骰子的资源生成系统,令人难以把握。

其实,运气促使游戏变得更通俗易懂,因为它缩小熟练玩家同新手之间的差距(游戏邦注:不论是从理论上,还是从实际情况来看)。在融入强大运气元素的游戏中,初学者会认为无论如何他们都有获胜机会。很少人愿意竞争国际象棋大师位置,而西洋双陆棋戏老手更具吸引力——融入少量运气令所有玩家都有获胜机会。

在设计师Dani Bunten看来,“虽然多数玩家都讨厌会破坏其精密预期策略的随机事件,但没有什么能够像作品中的曲折因素那样令内容栩栩如生。不要让玩家决定内容。他们无法把握结果,但若他们失败,游戏要给予适当理由,同时在其获胜时享有‘挑战困难’的机会。”

因此,运气就像个社交润滑剂,能够提高多人游戏的吸引力,这通常不适合残酷的肉搏竞争内容。

运气元素失效的情况

然而,随机性并不适合所有情形或游戏。“糟糕惊喜”绝非好主意。若木箱在打开时会出现弹药和其他奖励,但在其中1%的时间里会爆炸,玩家就无法以安全方式把握机率。若爆炸提早发生,玩家就会立即停止打开木箱。若稍后才发生,玩家就会措手不及,感觉上当受骗。

同时,当随机性变成干扰性元素,运气就会降低玩家对游戏的理解。若《星际争霸》的机械兵以摇骰子的机率进行射击,其发射速率就会变得不均衡。运气带给游戏结果的影响就会逐渐变得微不足道,由于出现这些额外随机因素的干扰性,玩家越发无法把握机械兵攻击的强度。

history of the world from pairodicegames.com

此外,运气会不必要地放缓游戏进程。棋盘游戏《History of the World》和《Small World》就有非常类似的征服机制(游戏邦注:除前者使用骰子,后者未采用该元素)。每次攻击都旋转骰子促使《History of the World》的持续时间比《Small World》长3-4倍。原因不单是转动如此多骰子所存在的逻辑问题——获悉决策结果具有可预测性促使玩家提前策划所有步骤,而无需担心意外事件。通常来说,应对意外事件是游戏设计的核心内容,但游戏速度也是个重要元素,所以设计师需确保取舍具有价值。

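Finally, luck is a poor fit for calculations that decide victory. The later in a game an unlucky roll lands, the more unfair it feels, so the earlier luck plays its part, the better the game's perceived balance. Many classic card games (pinochle, bridge, hearts) follow a standard model: an initial random deal sets up the game's “terrain,” and a series of tricks played without further luck then decides the winners and losers.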

机率就是内容

的确,随机性带来最初挑战观念在传统游戏中扮演重要角色,从《踩地雷》之类的简单游戏到《NetHack》和《 帝国时代》之类的深层游戏。就其核心来看,《单人纸牌》和《暗黑破坏神》差别不大——二者都呈现玩家需巧妙进展方能获胜的随机环境。

最近融入随机元素的典型例子是《洞穴探险》,在这款游戏中,独立开发者Derek Yu既融入《NetHack》中的随机生成关卡,也借鉴《Lode Runner》当中的2D装置。游戏沉浸性来自于其蕴含的无数有待探索的新关卡,但游戏融入某些意外怪兽和隧道,其难度颇令人沮丧。

其实,纯粹随机性就像头难以控制的野兽,其创造令原本稳固设计失去平衡的游戏机制。例如,《文明 3》引入战略资源理念,这是建设特定单元的必需品——战车需要马匹,坦克需要石油。这些资源随机分配于世界各角落,这当然会把玩家带入一个广阔大陆,这其中只有一簇由某AI敌人控制的铁矿。我们常常会看到社区中有人抱怨由于缺乏资源无法组建军队。

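Civilization 4 addressed the problem by enforcing a minimum spacing between certain important resources, so that two iron sources never appear within seven tiles of each other. Resources are still scattered unpredictably around the world, but without the clustering that could doom an unlucky player. By contrast, the game encourages clustering of less important luxury resources (incense, gems, and spices) to drive interesting trade dynamics.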

呈现机率

最终,当谈到机率元素时,设计师需扪心自问:“运气的促进或阻碍度如何?”随机性是否巧妙地令玩家失去平衡,这样他们就无法轻易解决问题?或者它只是在体验中融入令人沮丧的意外因素,这样玩家就不会寄希望于他们的决定?

一个确保游戏采用前种模式的因素是清楚呈现机率。策略游戏《Armageddon Empires》的战斗基于少数简单的掷骰子活动,然后在屏幕上直接显示骰子结果。让玩家接触游戏算法能够提高机制的方便度,将机会变得一个工具,而不是一个谜团。

同样地,在《文明 4》中,我们引入帮助模式,这准确呈现战斗成功的机率,能够大大提高玩家对于潜在机制的满意度。由于人类无法准确评估可能性,帮助他们做出明智决定能够有效提高游戏体验。

Magic The Gathering from gamespy.com

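Some card games, such as Magic: The Gathering and Dominion, put probability in the foreground by centering play on the likelihood of drawing particular cards from a deck the player has built. These games are won by players who understand the right ratio of rare to common cards and who know that each card is drawn exactly once per pass through the deck. The idea extends to other games of chance, for example by providing a virtual “deck of dice” that guarantees an even distribution of rolls.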
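One possible reading of the “deck of dice” idea is a shuffled deck that contains every two-dice outcome exactly once and is reshuffled when it runs out. The class name and the choice of two six-sided dice are assumptions made for illustration; the article does not specify an implementation.

```python
import random


class DiceDeck:
    """A shuffled 'deck' holding each of the 36 two-dice outcomes once."""

    def __init__(self, rng=None):
        self._rng = rng or random.Random()
        self._cards = []

    def roll(self):
        """Draw the next total; rebuild and reshuffle when the deck is empty."""
        if not self._cards:
            self._cards = [a + b for a in range(1, 7) for b in range(1, 7)]
            self._rng.shuffle(self._cards)
        return self._cards.pop()


deck = DiceDeck(random.Random(2024))
first_pass = [deck.roll() for _ in range(36)]
print(sorted(first_pass))
```

Within any full pass through the deck, a 7 comes up exactly six times and a 2 exactly once, so streaks are bounded while the order still feels random.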

另一源自古老游戏历史的有趣理念是回合策略游戏《Lords of Conquest》中“机会元素”游戏选项。3个选项(低、中和高)决定运气是仅用于打破僵局,还是决定战斗方面扮演重要角色。决定机会在游戏中的最合理角色非常主观,给予玩家调整旋钮的权利能够促使游戏吸引更广泛口味不同的用户。

篇目3,分析游戏中掉落道具的随机性设计

作者:Chris Grey

尽管随机性能够用于影响玩家的游戏体验,但却很少人真的花心思去创造随机性。我们都曾遇到过有关稀有随机掉落道具的战争故事,而我们也需要花很多时间才能获得它们。更糟糕的是那些一下子便得到这种稀有道具的玩家还对社区中的其他玩家炫耀,就好像他们能够控制随机数生成器一样。不管会变得更好还是更糟,如今的随机性能够有效地丰富玩家的游戏体验;如此我们为何不说说该如何更积极地创造随机性?

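Today I want to focus on item drops, specifically the items players receive in Ni no Kuni for defeating monsters and in the course of taming them. I will avoid handing out simple numbers; instead I will offer some heuristics about how randomness feels to players.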

ni-no-kuni(from ramblingofagamer)

首先,让我们着眼于当前其他设计师所使用的方法。一般情况下设计师都会着眼于道具在游戏内部的经济价值,并决定它的稀缺度。越强大的道具将越晚出现,或者带有更低的常数比例。我们需要让玩家能够在获得道具时产生成就感,或至少觉得自己足够幸运。不管怎样,这都能让玩家更加重视道具。如果玩家能在平均尝试次数后获得掉落道具,并且设计师也准确设定了道具的价值,那么玩家便能够感受到这一价值,并更紧密地依附于道具上。

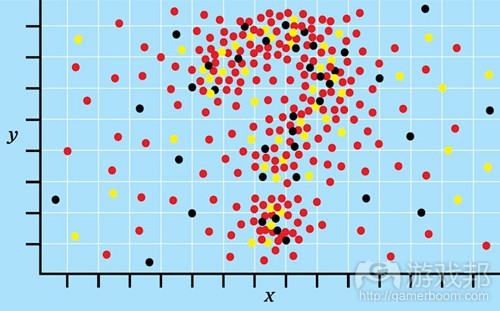

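With a constant drop rate, here is a chart of the farming experience. You might expect a bell curve, but I want to highlight something else in the data. To do that, we change the vertical axis: assuming players keep killing the enemy until they get one of the item, the chart shows how long players keep farming.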


population of players still farming for their first drop over time(from gamasutra)

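Note the shape. The key point is that the curve never actually reaches zero. Some players effectively never get the item, and they end up farming far longer than intended, pouring time into a task the designer planned to take perhaps a fifth as long. By then, even the good feeling of finally getting the drop has been destroyed. Worse, farming this long distorts how players value the item: most feel it is unfair when they see others get it without the grind, and they take that resentment out on the item. The resentment spills outside the game, where players vent about its unfairness. These players also end up over-farmed by the task, which breaks the designer's carefully tuned difficulty curve. Frustration like this makes players quit, and anyone who has stuck with the game this long is heavily invested, so you are alienating your most passionate players. Viewed against the game as a whole, anger over a random drop may seem minor.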

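Balancing around the average in this situation causes all sorts of problems. On the chart, roughly 63% of players have the drop by the time they reach the average number of attempts, and half have it well before the average. Most players therefore face the event fewer times than the designer intended, and since a given player usually goes through this process only once per drop, that single run becomes their sense of how long the experience takes. It may be a happy accident, but it can undercut the sense of effort the designer wanted players to feel. We can also expect close to a quarter of players to spend more than 1.5 times the average, and more than 10% to spend more than twice the average. When those players compare themselves with the rest of the player base, they find that, through luck alone, their experience took two to three times as long.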
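The shares quoted above follow from treating each attempt as an independent trial with a fixed drop chance. A minimal sketch, assuming a hypothetical 5% drop rate (the article gives no specific rate):

```python
def still_farming(drop_rate, attempts):
    """Fraction of players with no drop after `attempts` independent tries."""
    return (1.0 - drop_rate) ** attempts


DROP_RATE = 0.05                      # hypothetical drop chance per attempt
MEAN_ATTEMPTS = round(1 / DROP_RATE)  # average attempts to first drop: 20

for multiple in (0.5, 1.0, 1.5, 2.0, 3.0):
    n = int(MEAN_ATTEMPTS * multiple)
    share = still_farming(DROP_RATE, n)
    print(f"{multiple}x the average ({n} tries): {share:.1%} still farming")
```

The share still farming shrinks with every attempt but never reaches zero, which is the open-ended tail the chart illustrates.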

当50%的玩家在早期完成刷任务而25%的玩家是在游戏末尾时,带有稳定资源的设计师将受到吸引去创造平均体验。此外,这些25%的玩家将开始着眼于活动所带有的缺点。如果设计师忽视了尾端的游戏体验并拥有一些不同的掉落道具要求,那么他们便很难迎合所有玩家的喜好;当玩家需要更多掉落道具,他们更有可能坚持到游戏最后。由于专注于数字上的平均体验,设计师其实忽视了任何一种掉落道具上75%的玩家。

其它类型的随机性–不断增加的掉落道具

我想要呈现出两种简单的选择。首先便是掉落比例的提升。每次当玩家不能在事件最后获得掉落道具时,那么该道具下次掉落的可能性便会大大提升。这种可能性将在以设定好的掉落道具上进行叠加,即当道具掉落时,可能性将重置到其它关卡上。而如果你希望游戏中只有一种掉落道具的话也可以将其重置为0;如果你希望让玩家在游戏体验中花相同的时间而获得另一种道具,你也可以将其重置为初始概率;如果你希望此时的道具更有价值而在之后更容易找到,你可以将其重置为较高的可能性。

以下是关于这种体验的新图表。


escalating drop rate(from gamasutra)

这次我们会注意到曲线在最右端已经触及0点了。如此便消除了我们之前所提到的无底的体验。当然也会出现一些运气欠佳的玩家,但是关于他们用于面对这种不幸的时间也存在着上限。这里仍是一些关于战争的故事,但是如果设计得当的话,设计师便能够更轻松地设计出最高的玩家体验,并且将更加靠近平均值。如此那些战争故事便能够有效地加强玩家体验,而玩家不仅能够感受到自己的努力,并且这种感受也不会超过设计师的预期,并且能够有效地带给玩家骄傲感。而玩家想要获得某一道具的焦虑感也将被填平。
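An escalating drop rate can be sketched the same way. The version below assumes the chance grows by a fixed step after every failed attempt and is capped at 100%; the base chance and step are hypothetical, and other growth rules would also fit the description in the text.

```python
def escalating_survival(base_chance, step):
    """Return survival[k]: share of players still without the drop after k tries."""
    if step <= 0:
        raise ValueError("step must be positive so the chance eventually hits 100%")
    survival = [1.0]
    attempt = 0
    while survival[-1] > 0.0:
        # The drop chance grows by `step` per failed attempt, capped at 100%.
        chance = min(base_chance + step * attempt, 1.0)
        survival.append(survival[-1] * (1.0 - chance))
        attempt += 1
    return survival


curve = escalating_survival(base_chance=0.02, step=0.02)
print(f"worst case: {len(curve) - 1} attempts")
print(f"still farming after 10 tries: {curve[10]:.1%}")
```

Because the chance eventually reaches 100%, the curve touches zero after a bounded number of attempts, so the worst-case grind is something the designer sets rather than something luck decides.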

此外,如果你最初所设置的掉落比例较低,并且让增长率能够不断提升,你将拥有更少的幸运玩家。如果你希望玩家能够通过反复争取强大道具而精通具有挑战性的战斗的话,这种设置便很有帮助。但是需要注意的是,那些在首次尝试便获得道具的玩家将扭曲他们的难度曲线,尽管这种情况比玩家进行多次尝试更加微妙。授权并不是件坏事,但是这将导致幸运的玩家觉得游戏很容易被战胜(因为一些偶然元素)。一般情况下,不断提升的掉落比例将让任何特定玩家的游戏体验更加均衡,并且对于他们来说这种变化是相对无形的。

这里存在着一种观点,即如果玩家在获得道具前射死一些相同类型的怪物会出现什么情况。如果玩家清楚发生什么了,并知道他们在获得一个掉落道具前需要进行多次战斗,那么战斗可能就会突然变得有效了。而因为任何回报都是隐藏在转角处,所以这种形式的投机也会更加有用。我们不能低估这种推动力量的强大性。强迫玩家重复做一件事50次是多么烦人啊,因为失去了最初的新鲜感后,所有的体验对玩家来说都没有了乐趣。如果游戏能让玩家在经历任何一次尝试后随机获得奖励,那么玩家便会对游戏更有兴趣。如果玩家觉得自己所付出的能够随时得到回报,而不是寄托于未来的某一时刻,那么他们便会更加重视游戏体验。

其它类型的随机性–收获递减

这与上述的随机性完全相反。这一理念是关于玩家拥有有限的机会在道具消失前把握住它。一般情况下,关于掉落道具的最初可能性都比较高,并会随着玩家每次的失败而降低,或者在玩家进行一系列尝试后事件将会消失。而不管是何种情况都会让玩家在经过几次尝试后而更加难以获得掉落道具。

这种随机性并不像我们在保证玩家基础将永远不会获得道具那样简单。比起传统方法而言,这更加人道化,并且玩家将不能以时间去交换游戏内部的价值。如果道具对于玩家来说具有很大的价值,那么玩家便会知道其中的风险,即最终结果将具有很大的紧迫性,而拥有技能的设计师便能使用这种方法去创造更大的情感标志。

关于这种类型的掉落道具存在着一种潜规则。基于permadeath机制,玩家仍然会因为结果而紧张,并能够使用加载功能而进行多次尝试去获得自己想要的掉落工具。如果游戏删除了重新加载功能,就像在《恶魔之魂》中那样,那么你便会考虑缩短游戏长度(但仍具有重完价值),或者设置不同的掉落工具,并且只有一种道具在游戏中是可得到的。如此设置将推动玩家基于他们所获得的道具而调整游戏风格。我们必须注意这种随机性,因为它很容易惹怒玩家。你已经非常接近于大多数玩家的核心期待:“我主导着这个游戏世界,并且因为付出了努力,所以我必须能够得到一切想要的。”

一些通用的启发法

因为大多数人都不知道如何看待可能性,所以我想要列举一些指南。

当你感到疑惑时,可以创造一个模拟对象。当你使用任何类型的可能性分布(除了恒定的掉落比例),你并不需要罗列出所有例子的数字试验。我更建议你们写下一个程序(游戏邦注:或者邀请程序员好友的帮忙)去模拟效果,并生成系统发展(经过多次测试后)的图表。这些信息非常有帮助(虽然不能百分百保证是准确的),并且我们也无需投入大量时间进行计算(要求获得准确答案)。

一般而言,玩家所面对的掉落道具越随机,他们的整体体验将越接近平均体验,并且他们将越有可能需要面对最糟糕的短期情况。让我们着眼于这种方法:如果每个人摇50次骰子,那么骰子所滚动的总数将不会有太大的区别,而每个人也至少滚动了几次。但是这里存在着一个很狡猾的陷阱:你不能假设运气欠佳只会影响着某些玩家;这几乎会打击到所有玩家。所以请谨慎地设计。

反面情况也是真的。即游戏中的少量随机掉落道具意味着玩家体验将非常不平衡,并且具有很大的差别。

人们总是不愿意去估算各种可能性。可能性越低就意味着估算能力越糟糕。特别是在面对稀有掉落道具时,这种情况便更加明显;如果玩家知道掉落道具是稀有的,他们便会感受到满满的压力。除此之外,当玩家需要面临较长的农务过程时,他们便会感受到消极情绪,而幸运的玩家在经历短暂的收获道具喜悦后将能够更快速地前进。

人们总是会将运气与技能结合在一起。调查能够强化这种情况的机制很有趣:为技能型玩家提高道具掉落比例将让他们能够继续尝试一些更有趣的内容,而让低技能的玩家能够获得各种道具却带有风险性。这种情况是我很少看到的,但是我却认为它具有很大的潜能。

一般情况下随机性是讨喜的,如果玩家买进一些带有风险性的内容,那么我们便可以使用投机去创造惊人的情感体验。遗憾的是,因为游戏机制背后一些额外的可能性将创造出各种不同的体验(来自任何玩家或者玩家间的游戏过程转变),所以最接近我们想法的内容总是未能得到理解。随机性具有巨大的潜能,而我只能通过文本去描述一些皮毛,所以我建议你们还是通过试验去深入摸索。

篇目4,举例论述游戏设计蕴含的概率学原理

作者:Tyler Sigman

下面是答题时间!

问题1. 假设你正在设计一款全新MMORPG游戏,你设定当玩家消灭一只怪兽时,特殊道具Orc Nostril Hair将有10%的出现几率。某位测试者回馈称,他消灭20只怪兽,发现Orc Nostril Hair 4次,而另一位测试者则表示,自己消灭20只怪兽,没有发现Orc Nostril Hair。这里是否存在编程漏洞?

问题2. 假设你正在设计游戏的战斗机制,决定植入一个重击机制。若角色进行成功袭击(假设是75%的成功几率),那么他就可以再次发动进攻。若第二次袭击也成功,那么玩家就会形成双倍破坏性(2x)。但若出现这种情况后,你再次进行袭击,且这次袭击也获得成功,那么破坏性就上升至3倍(3x)。只要袭击都获得成功,你就可以继续发动新的进攻,破坏性就会继续成倍提高,直到某次袭击出现失败。玩家释放至少双倍(2x)破坏性的几率是多少?玩家形成4倍(4x)或更高破坏性的几率是多少?

问题3. 你决定在最新杰作RTS-FPS-电子宠物-运动混合游戏中植入赌博迷你游戏。此赌博迷你游戏非常简单:玩家下注红宝石,赌硬币会出现正面,还是反面。玩家可以在胜出的赌局获得同额赌注。你会将硬币投掷设计成公平程式,但你会向玩家提供额外功能:在屏幕右侧显示最近20次的硬币投掷结果。你是否会请求程序员引入额外逻辑运算,防止玩家利用此20次投掷结果列表,以此摧毁你的整个游戏经济体系?

我们将在文章末尾附上这些问题的答案。

游戏设计师——复兴人士&非专家

Designerus Gamus from gamasutra.com

如今设计师这一职业要求各种各样的技能。设计师是开发团队的多面手,需要消除美工和编程人员之间的隔阂,有效同团队成员沟通——或者至少要学会不懂装懂。优秀设计师需要对众多知识有基本的了解,因为游戏设计是各学科的随机组合。

我们很常听到设计师争论线性或非线性故事叙述、人类心理学、控制人体工学或植入非交互事件序列中的细节内容;你很少看到他们深究微积分、物理学或统计学之类晦涩科学的梗概内容。当然依然存在Will Wrights这样的人士,全心致力于天体粘性物及动态城市交通规划。但多数人都会在遇到方程式时选择退缩。

概率学+统计学=杰出成果

概率学(P)和统计学(S)是两门对游戏设计师来说非常重要的复杂科学——或者至少对他们来说应该非常重要。它们之间的关系就像豌豆和胡萝卜,但和那些美味的蔬菜一样,它们不是同个事物。简略来说就是:

概率学:预测事件发生的可能性

统计学:基于已发生事件下结论

综合起来,P和S让你可以做到这些:同时预测未来和分析过去。这多么强大!但记住:“力量越强大,责任越重大。”

P和S只是设计师工具箱中的工具。你可以且应该在设计游戏时充分利用它们,这样游戏才会更具平衡性和趣味性。

好事坏事接二连三

P和S有许多厚厚的教材,本文并非这类教材的替代内容。

这一系列的文章旨在让你把握P和S的若干主要话题,主要围绕设计师需投以关注的要点。

这一部分主要谈论针对游戏设计师的概率学。

记住,成为多面手设计师并不意味着你需要变成这些领域的专家;你只要能够唬弄其他人即可。

建议:强化对“理论”、“编撰”和“分类法”的运用能够促使合伙伙伴朝这些目标迈进。开发者不妨对各学科进行高谈论阔。

现在我们开始切入正题。

概率学

多数游戏都会在基础机制中融入1-2个概率学元素。就连国际象棋也需要靠掷硬币来决定谁执白棋。通常,我们将概率学机制称作“随机事件”。随机一词的意思也许是“完全随机”,也许是“刻意随机”。无论是《德州扑克》、《魔兽世界》,还是《炸弹人》,随机事件都有融入它们的核心游戏机制中。

概率学:这不仅是个不错构思,还是个设计法则!

你多半听过“根据概率学法则”这样的表述。这个短语的关键词是“法则”。概率学围绕的是无可争辩的事实,而不是猜测。从学术角度来说,这就主要是概率论,但出于游戏设计目的,你完全能够计算概率。当你投掷6面骰时,摇到“6”的几率是1/6=16.7%—–假设这是次公正的“投掷”,骰子制作合格。16.7%不是猜测数值。这几乎等同于事实(也许有人会从量子力学角度出发,认为16.7%不属于事实。我的意思是,骰子可能会突然变形,进而不复存在,或者你查看骰子的不当方式曲解它原本的波动函数)。大家在概率学方面的多数错误理念都和认为概率学不是基于法则,而是基于近似值或指导方针的观念有关。不要陷入这些误区。下面我将谈到几个常见误区,大家务必多加注意。

独立和相关事件

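I will start with an important distinction that sits at the heart of probability: whether events are independent or dependent. You need to grasp this before you calculate any probability.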

独立事件:事件的出现概率和另一事件发生与否无关。例如,投掷6面骰(事件1),然后再次进行摇掷(事件2),都是属于独立事件。第一次摇掷和第二次摇掷没有任何关系。你在事件1的摇掷结果对事件2没有任何影响。另一独立事件的例子是,从一个牌组中抽出一张纸牌,然后再从另一个不同牌组中抽出一张纸牌。

相关事件:一个事件的出现几率和另一事件存在相关性。例如,从牌组中抽出一张牌(事件1),然后再从同个牌组中抽出一张牌(事件2)。第二次抽到王的几率会受到事件1的影响(游戏邦注:若你在事件1中抽到王,那么在事件2中抽到王的几率就会受到影响,因为牌组中的王变少了)。

条件概率

概率学的一大益处是,能够计算条件事件的概率——也就取决于其他事件发生概率的事件。例如,我过去一直玩传统《战锤》桌面游戏,游戏主要基于6面骰。根据“撞击”图表,若不熟练的战士(配备低级的武器技能)和高级敌人配成一组,那么你就需要连续摇到两次“6”,方能进行袭击。那么连续两次摇到“6”的概率是多少?

先说重点,你需要先摇到第一个“6”(1/6的几率),然后你得摇到另一个“6”(1/6的几率)。若一个事件的发生取决于另一事件的成败,那么你需要将二者的概率相乘,方能得到最终发生概率。在此,就是1/6 x 1/6 = 1/36,这就是你连续两次摇到“6”的概率。

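With conditional probability in hand, the odds of wild dice streaks are easy to compute. What is the chance of rolling four “6”s in a row? It is 1/6 x 1/6 x 1/6 x 1/6, or more simply (1/6)^4 ≈ 0.0008 = 0.08%. What about ten “2”s in a row? That is (1/6)^10, a very small percentage indeed.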

Incontrovertible Visual Proof that Four “6”s is Possible from gamasutra.com

Stepping up the difficulty: what is the chance of rolling a “5” or higher and then rolling a “3” or higher? It is 2/6 x 4/6 = 8/36 = 2/9 = 22.2%.
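These products are easy to check with exact fractions. A small sketch (the helper name is made up for illustration):

```python
from fractions import Fraction


def streak_probability(single_roll_chance, length):
    """Chance that an independent event happens `length` times in a row."""
    return single_roll_chance ** length


ONE_FACE = Fraction(1, 6)  # any single face of a fair six-sided die

two_sixes = streak_probability(ONE_FACE, 2)
four_sixes = streak_probability(ONE_FACE, 4)
ten_twos = streak_probability(ONE_FACE, 10)

# "5 or higher" covers 2 faces; "3 or higher" covers 4 faces.
five_plus_then_three_plus = Fraction(2, 6) * Fraction(4, 6)

print(two_sixes, four_sixes, float(ten_twos), five_plus_then_three_plus)
```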

迷信和均分谬论——“赌徒谬论”

大家在概率学方面的一个常见错误观念是,模糊独立事件和相关事件之间的界限。这主要体现在如下模式:

错误1:认为若上次摇到的是“5”,那么“5”出现的几率就变小。

错误2:认为若连续10次都没摇到“6”,那么“6”出现的几率就很高。这相当于认为,若“红色”多次没有在轮盘上出现,那么它很快就会出现。

错误3:在投掷10次硬币,8次出现正面,2次出现反面后认为,在接下来的10次投掷中,反面出现的几率会更高,以实现“平均化”。

所有这些都属于“赌徒谬论”。从根本来说,这其实就是混淆独立事件和关联事件的概念。这一谬论的另一表现是,“我刚在赌轮盘中输掉所有资金,因为概率法则违抗均分谬论”。这和鲜为人知的“赌场为什么允许我记录轮盘旋转结果?——显然他们知道我将发现其中模式,打破轮盘谬论?”这一观念存在密切关系。

不要陷入这些误区。摇掷骰子多次或旋转轮盘都是属于独立事件,纯粹而简单。下面就来深入查看上述错误:

错误1:通过6面骰摇到“5”的概率是1/6 = 16.7%。这从来没有变过。这和你是否连续摇到8次“5”或很久都没摇到“5”毫无关系。16.7%依然是个幻数。“骰子没有记忆”是个惯用语,这完全正确。

错误2:和上述内容相同。摇到“6”或转到“红色”的几率和此前的摇掷或旋转情况毫无关系。轮盘也没有任何记忆。

平均数定律遭到否决

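Mistake 3 is a similar but broader misconception: the belief that everything “evens out” in the long run, the so-called law of averages. It is true that if you flip a coin 1,000 times you can expect about 50% heads and 50% tails. But there is no “correction” at work. If you flip a coin 10 times and get 8 heads and 2 tails, there is no reason for the next 10 flips to favor tails. You might make the philosophical mistake of thinking tails is “due,” or the bigger mistake of betting a lot of money on it.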

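The point is that if you flip a coin a million times, you will see very close to 50% heads and 50% tails. But do not expect the count of heads to match the count of tails: they may differ by hundreds or even thousands. Even when heads trail tails by 10,000, the split is still close to 50%/50% (49.5%/50.5%, to be exact). So do not bet on an 8:2 split being “corrected” in later flips. Over the long run the proportions approach 50%/50%, yet the gap between the raw counts tends to grow as the number of flips increases.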
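A short simulation makes the distinction between proportions and raw counts concrete. It is a sketch only: the seed and flip counts are arbitrary choices, and a different seed gives different gaps.

```python
import random


def flip_summary(flips, seed=0):
    """Return (share of heads, absolute heads-minus-tails gap) for `flips` tosses."""
    rng = random.Random(seed)
    heads = sum(rng.getrandbits(1) for _ in range(flips))
    tails = flips - heads
    return heads / flips, abs(heads - tails)


for n in (100, 10_000, 1_000_000):
    share, gap = flip_summary(n)
    print(f"{n:>9} flips: heads share {share:.4f}, count gap {gap}")

# The arithmetic from the text: trailing by 10,000 out of a million flips.
print(495_000 / 1_000_000, 505_000 / 1_000_000)
```

The share of heads settles toward 0.5 as the number of flips grows, while the gap between the counts is free to wander; nothing pulls it back toward zero.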

反向概率

我们很容易找到计算独立或关联事件出现概率的公式。但有时要计算更多相关概率就没那么容易。一个需要你把握的重要概念是“反向概率”。计算反向概率,你需要判断的是某事件没有发生的概率,而不是它发生的概率。然后将1.0 (100%)扣除此数,这样你就会得到你所要的概率数值。

反向概率101:简单例子

假设你即将投掷一个6面骰。你投到“6”的概率有多大?虽然我们已经知道答案,这里我们将运用反向概率进行论证。你没有摇到“6”的概率是5/6 ,因此你摇到“6”的概率是1–5/6 = 1/6,或是16.7%。换而言之,你没有摇到“6”的概率是5/6,那么你摇到“6” 的概率是1/6。这毫无疑义。

反向概率201:凑成同花顺

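There is one situation where inverse probability can save you money: Texas Hold'em. Suppose you are drawing to a heart flush: you hold two hearts, two more hearts are among the three cards on the board, and there are two cards still to come. If either of them is a heart, you make your flush. How likely is that?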

flush from gamasutra.com

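The chance that the next card is a heart is easy to work out. Nine hearts remain unseen (13 - 4 = 9: two in your hand plus two on the board). There are 47 unseen cards (52 - 5 = 47: two in your hand and three on the board). So the chance of a heart on the next card is 9/47. If that card is not a heart, the chance on the following card is 9/46 (the number of hearts is unchanged, but there is one card fewer).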

唯一问题是,我们如何算出在两次抽牌中抽到红桃的总概率?我们很容易就会犯下这一错误,认为是9/47 + 9/46。但这并不正确。这和下述错误类似:认为6次摇到“6”的总概率是1/6 + 1/6 + 1/6 + 1/6 + 1/6 + 1/6 = 1.0 = 100%。遗憾的是,我们无法在摇掷6次骰子后100%摇到“6”。

事实证明,通过反向概率解决这两个问题要简单得多。我们会这样设问:“没有抽到红桃的概率是多少?”就第一次而言,概率是(47 – 9)/47 = 38/47。第二次的概率是(46 – 9)/46 = 37/46。根据条件事件方面的知识,我们很容易就能够算出这两个事件的发生概率。换而言之,我们需要算出两次都没抽到红桃的概率,即38/47 x 37/46 = 65.0%。我们对凑成同花顺的概率非常感兴趣,所以我们将1.0扣去此数值,得到1.0 –0 .65 = 0.35 = 35%。所以凑成同花顺的概率是35%。

注意:摇骰子问题的计算方式也类似。6次中至少摇到1次“6” 的概率是通过计算没有摇到“6” 的概率得来。在每次摇掷中,没有摇到“6”的概率是5/6。因此,6次完全没有摇到“6”的概率就是:5/6 x 5/6 x 5/6 x 5/6 x 5/6 x 5/6 = 0.33 = 33%。所以,至少摇到1次“6”的概率是1.0 –0 .33 =0 .67 = 67%。因此你将有2/3的几率摇到至少一次“6” 。
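Both inverse-probability calculations can be written as one small helper that multiplies the miss chances and subtracts the result from 1. The function name is illustrative.

```python
from fractions import Fraction


def at_least_once(miss_chances):
    """1 minus the chance that every trial misses."""
    all_miss = Fraction(1)
    for miss in miss_chances:
        all_miss *= miss
    return 1 - all_miss


# Flush draw: 38 of 47 unseen cards miss, then 37 of the remaining 46.
flush = at_least_once([Fraction(38, 47), Fraction(37, 46)])

# At least one "6" in six rolls: each roll misses with chance 5/6.
any_six = at_least_once([Fraction(5, 6)] * 6)

print(f"flush: {float(flush):.1%}, at least one six: {float(any_six):.1%}")
```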

关于反向概率,我们需记住的是,有时算出事件未发生的概率要比计算它们发生的概率简单得多。

关于随机数据生成器

关于概率,数字游戏设计师须知的一点是:随机数据生成器并不随机。随机数据算法需要“始值”,这是算式获得自身原始数据,进行能够带来所谓随机数据的循环运算的的基础。很多时候,程序会取样CPU钟点,或类似于始值的数据(游戏邦注:这能够促使算法保持随机性)。但对于包含众多随机数据生成内容的高强度游戏而言,有时这依然不够随机。让我们以在线扑克供应商为例。玩家需要知道控制牌组重洗的随机数据没有潜在模式。在诸如这类的极端情况下:资金取决于收入,程序员需要进行精心设计,逐步以CPU热量和函数作为始值,而不是钟点。

这里的建议是,数字游戏没有真正的随机数据。很多时候,这没有问题,但若你的游戏融入众多数据信息,就要留心其中模式。

回到问答题目

下面回到文章开始的问题。

问题1. Orc Nostril Hair的出现概率

在此情况中,两位测试者的结果都处于概率范围内。若每次消灭怪兽,发现Orc Nostril Hair(ONH)的基本概率是10% ,那么消灭20只怪兽后发现至少4次ONH的概率是13.3%。你也许会问,我如何得到这一数据?在此,我运用了一个高级概念,叫做二项分配,但这并不是本文的谈论内容。

在20次机会中发现0次ONH的几率是由反向概率决定:

在每次消灭怪兽后有10%的几率发现道具意味着同时会有90%的概率没有发现道具。

在20次机会中没有发现道具的概率是(.90)^20 = 12.2%。

So finding at least four ONH in 20 kills (13.3%) is about as likely as finding none at all (12.2%).

要判定自己是否真的需要担心编程漏洞,你需要更多信息。你需要测试者提供更多数据点,这样你才能够做出令人信服的结论。

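For example, collect data from 100 play sessions of 100 kills each. That is a large data set. If the observed ONH drop rate across those sessions is well above or well below 10%, the drop logic may have a bug, and you should look into it.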
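The two figures quoted for Question 1 can be reproduced with the binomial distribution mentioned above. A minimal sketch using only the standard library:

```python
from math import comb


def binomial_pmf(n, k, p):
    """Chance of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def at_least(n, k, p):
    """Chance of k or more successes in n independent trials."""
    return sum(binomial_pmf(n, i, p) for i in range(k, n + 1))


KILLS, DROP_CHANCE = 20, 0.10  # values from Question 1

print(f"at least 4 drops: {at_least(KILLS, 4, DROP_CHANCE):.1%}")
print(f"no drops at all:  {binomial_pmf(KILLS, 0, DROP_CHANCE):.1%}")
```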

问题2. 2x-3x-4x+重击

形成至少2x破坏性也就是连续击中两次的条件概率:

2x或更高破坏性的概率=0.75 x 0.75 = 56.3%

4x或更高破坏性的概率=(0.75)^4 = 31.6%

哇,玩家形成4x或更高破坏性的几率是1/3。你需要修改游戏机制。或降低基础击中率,或提高连续重击难度。
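The chain probabilities are simply powers of the hit chance, so it is easy to see how tuning the base chance changes the picture. In this sketch only the 75% figure comes from the question; the other hit chances are hypothetical tuning candidates.

```python
def chance_of_multiplier_at_least(multiplier, hit_chance=0.75):
    """Reaching Nx damage requires N successful hits in a row."""
    return hit_chance ** multiplier


for hit_chance in (0.75, 0.60, 0.50):
    row = ", ".join(
        f"{m}x+: {chance_of_multiplier_at_least(m, hit_chance):.1%}"
        for m in (2, 3, 4)
    )
    print(f"hit chance {hit_chance:.0%} -> {row}")
```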

问题3. 投掷硬币

这个问题是个设计粗糙的陷阱。首先,你通过向玩家呈现前20次投掷情况给予他们一定援助,然后你需要稳固自己的机制,防止玩家滥用。

答案就是,告知玩家前20次投掷情况丝毫不影响硬币投掷结果的50/50比例。这让玩家陷入赌徒谬论中。

我甚至建议当玩家在连续出现两次“反面”后下注“正面”时,若他们胜出,支付他们少于同额赌注的资金。告诉他们,你们处于不公平的有利地位,知道“正面”定会出现。他们会信以为真。

篇目5,阐述统计学在游戏设计领域的应用

作者:Tyler Sigman

本文主要摘选了一些游戏设计者需掌握的统计学话题。特别对于系统设计师、机械设计师、平衡设计师等设计领域的设计师来说,统计学着实有用且很重要。(请点击阅读本系列第一篇)

虽然统计学是一门基于数学的学科,但是它实在很枯燥!严格地说——如果你曾经不得不大量地研究双边置信区间、学生T检验以及卡方分布测试,有时你会觉得很难消化这些知识点。

一般来说,我是喜欢物理学和力学的,因为很多时候只需简单地分析一个事例,你就能核实现状。当你计算苹果从树上落下的速度及方向时,如果你的结果是苹果应以每小时1224英里垂直向上抛出,也就是实际上你已经在头脑中核实过结果了。

统计学的优势在于易理解且具合理性;而劣势在于它的奇特性。无论如何,这篇文章的话题不会让你觉得枯燥。因为大部分的话题都是有形的、属于重要的数据资料,你应有精力去慢慢摸索。

statistics(from wired.com)

统计学:黑暗的科学

统计学是所有学科领域中最易被邪恶势力滥用的科学。

统计学可以同邪恶行径相比较是因为在使用不当时,这门学科的分支就会被推断出各种无意义或者不真实的裙带关系(参见本文末尾的实例)。如果政治家或其它非专业人士掌控了统计学,那么他们就可以操纵一些重要决定。一般来说,基于错误总结的坏决策从来不受好评。

也就是说,使用得当时,统计学无疑非常有用且有益。而对于强权势力者来说,他们会将统计学应用于一些非法途径,甚至是一些纯粹无用的渠道。

统计学——所谓的争议

我已准备好作一个紧凑的总结,然而我注意到维基百科已经对统计学作了定义,而且语言几近诗歌体系。如下:

统计学是应用数学的一个分支,主要通过收集数据进行分析、解释及呈现。它被广泛应用于各个学科领域,从物理学到社会科学到人类科学;甚至用于工商业及政府的情报决策上。(Courtesy Wikipedia.org)

这真的是一段很感人的文章。特别是最后那句“用于情报决策上”。

当然,作者忘记添上“在游戏设计领域”,但是我们原谅他对这一蓬勃发展的新兴行业的无知。

以下为我自己撰写:

统计学是应用数学的一个分支,它涉及收集及分析数据,以此确定过去的发展趋势、预测未来的发展结果,获得更多我们需了解的事物。(Courtesy Tylerpedia)

如果将此修改为适用游戏设计领域,那可以如此陈述:

统计学为你那破损的机制及破碎的设计梦指引了一条光明大道。它为你有意义的设计决策提供了稳定且具有科学性的数据。

须知的事实

统计学同其它硬科学一样深奥且复杂。如同第一部分的内容一样,本文只涉及一些精选的话题,我自认为只要掌握这些就足够了。

再次突击测验

很抱歉我要采取另一项测试了。别讨厌出题目的人,讨厌测试吧。

Q1a)假设有20名测试员刚刚完成新蜗牛赛跑游戏《S-car GO!》中的一个关卡。你得知完成一圈的时间最少为1分24秒,最多为2分32秒。你期望的平均时间为2分钟左右。请问这个测试会成功吗?

Q1b)在同一关卡中你收集了过多的数据,在分析后得出这样的结果:平均值=2分5秒;标准差=45秒。请问你会满意这个答案吗?

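Q2) You have designed a casual game that ships soon. In the final QA phase you distribute a beta version and collect all the play data. You record more than 1,000 scores from more than 100 unique players (players are allowed to replay the game). The data give a mean score of 52,000 points and a standard deviation of 500 points. Is the game ready to ship?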

Q3)你设计了一款RPG游戏,然后收集数据分析新的玩家从关卡1到关卡5的游戏进程会有多快。收集的数据如下所示:4.6小时、3.9小时、5.6小时、0.2小时、5.5小时、4.4小时、4.2小时、5.3小时。请问你可以计算出平均值和标准差吗?

总体和样本

统计学的基础为分析数据。在分析数据的时候,你需要了解两个概念:

1.总体:

总体是指某一领域中所有需要测量的对象。总体是抽象的,只在你需要测量时候才会具体化。比如,你想了解人们对某一特定问题的看法。那你就可以选择地球上所有的人,或者爱荷华州所有的人或者只是你街道附近所有的人作为一个总体。

2.样本:

样本实际上就是指抽取总体中部分用于测量的对象。原因很明显,因为我们很难收集到所有总体的数据。相对来说,你可以收集部分总体的数据。这些就是你的样本了。

正确性及样本容量

统计学结果的可靠性通常由样本容量的大小决定。

我们完美的想法是希望样本容量就是我们的总体——也就是说,你想整个收集全部涉及到的数据!因为样本越少,你就需要估计可能的趋势(这是一种数学性的推断)。而且,数据点越多越好;你最好能建立一个大型的总体而不是小型的。

例如,相对于调查10000个初中生对《Fruit Roll-Ups》的感想,试想下调查人员能否询问到每一个学生。100万个的数目过于庞大,做不到的话,10万个也不错。仍然做不到,好吧,10000个刚刚好。

由于时间和费用的关系,通常呈现出的研究结果都是基于样本所做的调查。

1.统计学的常识性规则:

你无法通过一个数据点来预测整个趋势。如果你知道我喜欢巧克力冰淇淋,你不能总结所有的Sigmans都喜欢巧克力冰淇淋。如果现在你询问我家庭中的许多成员,然后你可能会得出关于他们的想法这类比较合理的结论,或者你至少知道是否能总结出一个合理的推断。

广泛的分布图(重点!)

由于种种原因,只有《The Big Guy》可以解释生活中的许多事情倾向于同一模式发展或者分布。

最普遍的分布也有一个合理的名称——“正态分布”。是的,无法匹配这一分布图的都为非正态,所以有点怪异(需要适当避免)。

正态分布也称“高斯分布”,主要因为“正态”一词听起来不够科学。

正态分布也称为“钟形曲线”(又称贝尔曲线),因为其曲线呈钟形。

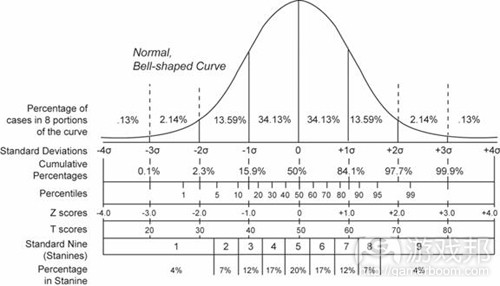

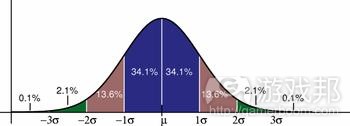

bell curve(from gamasutra)

钟形曲线的突出特点是大多数的总体均分布在平均值周围,只有个别数据散落在一些极限位置(主要指那些偏高或偏低的数据)。中间成群的数据构成了钟的外形;而那些偏高数据或偏低数据分布在钟的边缘。

我们周围有上百万的不同事例呈现出正态分布的景象。如果你测量了你所生活的城市中所有人的身高,结果可能呈现正态分布。这表明,只有少数个体属于非正常的矮,少数个体属于姚明那样的身高,而大多数人会比平均身高多几英寸或者矮几英寸。

钟形曲线同样极典型地适用于调查人们的技能水平。以运动为例——极少部分人在这一领域为专业人士,大多数的人都还过得去,只有少部分的人实在不擅长,所以没有被选为队员(比如我)。

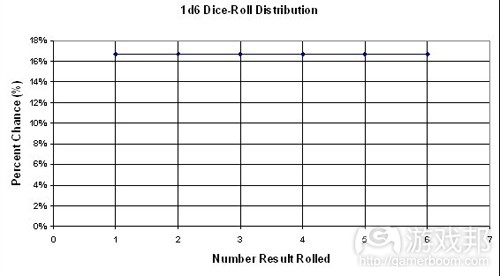

其它分布图

尽管正态分布图很完美,但它并非我们周围唯一的一种分布图。只是它比较普遍地存在。

比如有些其它的分布图直接与赌博及游戏设计有关,只要看下扔骰子的概率分布图,这种情况下出现了如下的d6情形及2d6情形:

D6 distribution(from gamasutra)

现在我想说的是第一个分布图看起来一点也不像钟形曲线,而第二幅图开始呈现出了钟的形状。
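The two distributions can be generated by enumerating every equally likely outcome. A short sketch (the function name is illustrative):

```python
from collections import Counter
from fractions import Fraction
from itertools import product


def sum_distribution(dice, sides=6):
    """Exact probability of each total when rolling `dice` fair dice."""
    totals = Counter(
        sum(faces) for faces in product(range(1, sides + 1), repeat=dice)
    )
    outcomes = sides ** dice
    return {total: Fraction(count, outcomes) for total, count in sorted(totals.items())}


for dice in (1, 2):
    print(f"{dice}d6")
    for total, chance in sum_distribution(dice).items():
        # One '#' per 36th, so 1d6 prints six equal bars and 2d6 a triangle.
        print(f"  {total:>2} {'#' * int(chance * 36)}")
```

A single die gives a flat distribution, while the sum of two dice already peaks in the middle at 7; adding more dice pushes the shape closer to the bell curve.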

平均值

这一小块内容可以说是这篇冗长的文章中的一个小插曲。这块自我指涉的小内容的存在只有一个目的:提醒你什么是“平均值”。这块自我指涉且迂腐的小内容将被动地提醒你平均值是指一整套的数学平均数据。

方差和标准偏差

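We need to understand variance and standard deviation, and both have plenty of tangible value. Besides helping us draw worthwhile conclusions from data, these terms let us talk about distributions more intelligently. Instead of saying “most of the data points are bunched in the middle,” we can say “68.2% of the samples lie within one standard deviation of the mean.”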

sigman(from gamasutra)

方差和标准偏差是相互联系的,它们都能够测量一个元素,即分散数据。直观地说,较高的方差和标准偏差也就意味着你的数据分散于四处。当我在投掷飞镖时,我便会获得一个较高的方差。

我们可以通过任何数据集去估算方差和标准偏差。我本来应该在此列出一个方程式的,但是这似乎将违背“听起来不像是一本教科书”的规则。所以我这里不引用公式,而是采用以下描述:

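Standard deviation: how far, on average, the values in a sample or population stray from the mean. It is denoted by the Greek letter σ (sigma).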

举个例子来说吧,你挑选了100个人并测试他们完成你的新游戏第一个关卡分别用了多长时间。让我们假设所有数据的平均值是2分钟30秒而标准偏差则是15秒。这一标准偏差表明游戏过程中出现了集聚的情况。也就是平均来看,每个游戏过程是维持在平均值2.5分钟中的±0.25分钟内。从中看来这一数值是非常一致的。

这意味着什么以及为何你如此在乎这一数值?答案很简单。假设你不是获得上述结果,而是如下结果:

平均值=2.5分钟(如上)

σ=90秒=1.5分钟

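So now we have the same mean but a different standard deviation. These numbers say that play times vary widely: a typical session strays 90 seconds from the mean. With a mean of only 2.5 minutes, that deviation is far too large! For most design purposes, this kind of spread is not what a designer wants to see.

If instead the mean play time were 15 minutes with the same 90-second (1.5-minute) standard deviation, it would be a very different story.

A quick way to gauge consistency is the ratio of the standard deviation to the mean. In the first example, 15 seconds / 150 seconds = 10%; in the second, 90 seconds / 150 seconds = 60%. In the 15-minute case, 90 seconds / 900 seconds = 10%. Clearly, a standard deviation equal to 60% of the mean is very large!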
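The ratio is a one-line calculation, shown here for the three cases discussed. The function name is illustrative; statisticians call this ratio the coefficient of variation.

```python
def relative_spread(std_dev_seconds, mean_seconds):
    """Standard deviation as a fraction of the mean."""
    return std_dev_seconds / mean_seconds


cases = {
    "2.5 min mean, 15 s deviation": (15, 150),
    "2.5 min mean, 90 s deviation": (90, 150),
    "15 min mean, 90 s deviation": (90, 900),
}
for label, (sigma, mean) in cases.items():
    print(f"{label}: {relative_spread(sigma, mean):.0%}")
```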

但是并不是说较大的标准偏差“总是”糟糕的。有时候设计师在进行测量时反而希望看到较大的标准偏差。不过大多数情况下还是糟糕的,因为这就意味着数值的差异性和变化性较大。

更重要的是,标准偏差的计算将告诉你更多有关游戏/机制/关卡等内容。以下便是通过测量标准偏差能够获得的有用的数据:

1.玩家玩每个关卡的游戏时间

2.玩家玩整款游戏的游戏时间

3.玩家打败一个经典的敌人需要经历几次战斗

4.玩家收集到的货币数量(游戏中有一个意大利水管工)

5.玩家收集到的吊环数量(游戏中有一个快速奔跑的蓝色刺猬)

6.在教程期间时间控制器出现在屏幕上

误差

误差与统计结论具有密切的关系。就像在每一次的盖洛普民意测验(游戏邦注:美国舆论研究所进行的调查项目之一)中也总是会出现误差,如±2.0%的误差。因为民意调查总是会使用样本去估算人口数量,所以不可能达到100%精准。零误差便意味着结果极其精确。当你所说的人口数量大于你所采取的样本数量,你便需要考虑到误差的可能性。

如果你是利用全部人口作为相关数据来源,你便不需要考虑到误差——因为你已经拥有了所有的数据!就像我问街上的任何一个人是喜欢象棋还是围棋,我便不需要考虑误差,因为这些人便是我所报告的全部数据来源。但是如果我想基于这些来自街上行人的数据而对镇上的每个人的答案做出总结,我便需要估算误差值了。

你的样本数量越大,最终出现的误差值便会越小。Mo data is bettuh(越多数据越好)。

置信区间

你可以使用推论统计为未来数据做出总结。一个非常有效的方法便是估算置信区间。理论上来看,置信区间与标准偏差密切相关,即通过一种数学模式去表示我们多么确定某一特定数据是位于一个特定范围内。

置信区间:即通过一种数学方法传达“我们带着A%的置信保证B%的数据将处于C和D价值区间。”

虽然这个定义很绕口,但是我们必须知道,只要具有一定的自信,我们便能够造就任何价值。让我以之前愉快但却缺乏满足感的工作为例:

我过去是从事应力分析和飞机零部件的设计工作。如果你知道,或者说你必须知道,飞机,特别是商业飞机的建造采用的是现代交通工具中最严格的一种形式。人们总是会担心机翼从机身上脱落下来。

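As aircraft engineers, one approach we used was to apply high-confidence bounds to material strength properties. The traditional standard in aircraft design is the “A-basis allowable”: we must be 95% confident that 99% of the values in any given shipment of a material fall at or above a specified value. We then design to that value under the worst flight conditions expected, and add a safety factor on top.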

当你真正想了解某种数据值时,置信区间便是一种非常有帮助的方法。幸运的是在游戏中我们并不会扯到生死,但是如果你想要平衡一款主机游戏,你便需要在设计过程中融入更多情感和直觉。计算置信区间能够帮助你更清楚地掌握玩家是如何玩你的游戏,并更好地判断游戏设置是否可行。

不管你何时想要计算置信区间,备用统计规则都是有效的:越多数据越好。你的样本中拥有越多数据点,你的置信区间也就越棒!

你不可能做到100%的肯定

这便引出了另一个统计规则:

并不存在100%之说:你永远不可能创造一个100%的置信区间。你不可能保证通过推论统计便能够预测一个数据点具有一个特定的价值。

当玩家在《魔兽世界》中挑战任务时,唯一可以确定的只有死亡,税金以及不可能找到最后的Yeti Hide。所以玩家只需要接受这些事实并勇往直前便可。

滥用

我在之前提过,统计是一种邪恶的技能。为了更好地解释原因,我写下了这篇弹头式爱情诗:

十四行诗1325:美好的统计,让我细数下我滥用你的每种方式:

1.误解

2.未明确置信区间

3.只因为不喜欢而丢弃了有效的结论

4.基于有缺陷的数据而做出总结

5.体育实况转播员的失误——混淆了概率和统计错误

6.基于一些不相干元素做出总结

误解

人们一直在误解统计报表。我知道,这一点让人难以置信。

未明确置信区间或误差

置信区间和误差是信息中非常重要的组成部分。在过去30天内有43%的PC拥有者购买了一款可下载的游戏(误差为40%)与同样的陈述但存在2%的误差具有巨大的差别。而如果遗漏了误差,便只会出现最糟糕的情况。我们需要始终牢记,小样本=高误差。

只因为偏见而丢弃了有效的结论

操作得当的话,统计数据是不会撒谎的。但是人们却一直在欺骗自己。我们经常在政治领域看到这类情况的出现,人们总是因为结论不符合自己预期的要求而忽视统计数据。在焦点小组中亦是如此。当然了,政治领域中也常常出现滥用统计结论的现象。

基于有缺陷的数据而做出总结

这种情况真是屡见不鲜,特别是在市场调查领域。你的统计结果总是会受到你所获得的数据的影响。如果你的数据存在缺陷,那么你所获得的结果便不会有多少价值。得到有缺陷的数据的原因多种多样,包括失误和严重的操作问题等。提出含沙射影式问题便是引出能够支持各种结论(就像你所希望的那样)的缺陷数据的一种简单方法。“你比较喜欢产品X,还是糟糕的产品Y?”将快速引出反弹式回答,如“95%的费者会选择产品X!”

体育实况转播员的失误

体育实况转播员可以说是当今时代的巫医。他们会收集各种统计,概率以及情感,然后将其混合在一起而创造出一些糟糕的结果。如果你想看一些围绕着没有根据的结论的统计,你只要去观看一款足球比赛便可。

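For example, an announcer might say, “Team A has not stopped Team B's offense in the last 5 games.” The implied conclusion is that Team A is unlikely to stop Team B this time because it has failed to in the past five games. But you could just as easily argue the opposite: maybe this time they will, precisely because they have not managed it before.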

但是事实却在于根本不存在足够的信息能够支持任何一种说法。也许这更多地取决于一种概率。阻挡进攻的机会是否就取决于一方在之前的游戏中是否这么做过?它们也许是两种相互独立事件,除非彼此间存在着互相影响的因素。

但是这并不是说所有体育运动的结论都存在着缺陷。就像对于棒球来说统计数据便非常重要。有时候统计分析也将影响着球的投射线或者击球点等元素。

最终还是取决于数据:当你拥有足够的数据时,你便能够获得更好的统计结论。棒球便能够提供各种数据:每一赛季大约会进行2百多场比赛。但是足球比赛的场次却相对地少了很多。所以我们最终所获得的误差也会较大。但是我并不会说统计对于足球来说一点用处都没有,只是我们很难去挖掘一些与背景相关的有用数据。

基于一些不相干元素做出总结

人们始终都在误解统计报表。比起使用对照关系,我们总是更容易推断出一些并不存在的深层次的关系。我最喜欢的一个例子便是著名的飞行面条怪物信仰(游戏邦注:是讽刺性的虚构宗教)的《Open Letter to the Kansas School Board》中的“海盗vs.全球变暖”图表:

http://www.venganza.org/about/open-letter/

我们是否能够开始解答问题了?

问题1的答案—-关卡时间

这一问题的答案很简单:你未能获得足够的信息去估算平均值。因为在1:24与2:32范围中波动的价值并不意味着它们的平均值就是2分钟。(单看这两个数值的平均值是1.97分钟,但是我们却不能忽视其它18个结果!)你必须掌握了所有的20个结果才能估算平均值,除此之外你还需要估算标准偏差值。

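Answer to Question 1b: follow-up level times

You would probably not be satisfied here, because the standard deviation is too high: 45 seconds is about 36% of the 2:05 mean. That suggests there is too much variability in the level. There may be an exploit that skilled players are using to their advantage, or the level may punish less skilled players too harshly. As the designer, you ultimately have to judge whether results this variable are what you intended.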

问题2的答案—-标准偏差值

统计只是你所采用的一种方法,你同时还需要懂得如何进行游戏设计。如此,过于接近的计数分组使得我们总是能够获得一个较低的标准偏差值(500/52000=1%),这就意味着你所获得的分数几乎没有任何差别,也就是说在最终游戏结果中玩家的不同技能并不会起到任何影响作用。而当玩家发现自己技能的提高并不会影响游戏分数的发展时,便会选择退出游戏。

所以在这种情况下你更希望看到较高的标准偏差,如此游戏分数才能随着技能的提高而提高。

问题3的答案—-游戏时间

可以说这是一个很难获取的数值,不过它却说明了数据收集中的一个要点:你需要警惕那些看起来是错误的数据。就像0.2小时看起来就有问题。也许这是排印错误,或者是设备故障所造成的,谁知道呢。但是不管怎样在进行各种计算之前你都需要坚定不移地说服自己0.2小时是一个有效数据,或者你也可以选择将其丢弃而基于剩下的数据点进行估算。
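The effect of the suspicious 0.2-hour entry is easy to see by computing the summary twice. This sketch uses the sample standard deviation (n - 1 in the denominator), which is an assumption; the article does not say which form it intends.

```python
from statistics import mean, stdev

# Level 1 to level 5 times in hours, from Question 3
HOURS = [4.6, 3.9, 5.6, 0.2, 5.5, 4.4, 4.2, 5.3]


def summarize(samples):
    """Return (mean, sample standard deviation) for a list of values."""
    return mean(samples), stdev(samples)


with_outlier = summarize(HOURS)
without_outlier = summarize([h for h in HOURS if h != 0.2])

print(f"all 8 points:   mean {with_outlier[0]:.2f} h, sd {with_outlier[1]:.2f} h")
print(f"0.2 h excluded: mean {without_outlier[0]:.2f} h, sd {without_outlier[1]:.2f} h")
```

Dropping the single doubtful point raises the mean and shrinks the standard deviation considerably, which is why the decision to keep or discard it should be made before any conclusions are drawn.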

其它有趣的内容

为了控制本文篇幅,我不得不略过许多有趣的主题。我只要在此强调理解统计不仅能够帮助你更好地进行游戏设计,同时也能够帮助你做出消费者决策,投票决策或者财政决策等。我敢下23.4%的赌注保证我所说的内容中至少有40%的内容是正确的。

对于设计师而言,统计能够帮助他们获取来自有记录的游戏过程(样本)的相关数据,并帮助他们为更大的未记录的游戏过程(人口统计)做出总结。

在实践中学习

例如在我刚完成的游戏中,我便是通过记录游戏过程的相关数据,并围绕着源自这些数据的平均值和标准偏差去设定游戏挑战关卡。我们将中等难度等同于平均值,较容易的等同于平均值减去一定量的标准偏差,而较困难的等同于平均值加上一定量的标准偏差。如果我们能够收集到尽可能多的数据,我们的统计便会越精准。
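The approach described here can be sketched in a few lines. The recorded scores, the one-standard-deviation spread, and the function name are all hypothetical; the article does not give the actual values it used.

```python
from statistics import mean, stdev


def difficulty_targets(samples, spread=1.0):
    """Derive easy/medium/hard targets from recorded play data.

    medium = mean, easy = mean - spread * sd, hard = mean + spread * sd.
    """
    centre = mean(samples)
    sigma = stdev(samples)
    return {
        "easy": centre - spread * sigma,
        "medium": centre,
        "hard": centre + spread * sigma,
    }


# Hypothetical recorded scores from playtest sessions
recorded_scores = [4200, 5100, 4800, 5600, 3900, 5000, 4700, 5300]
print(difficulty_targets(recorded_scores))
```

More recorded sessions make the mean and standard deviation steadier, so the targets move less each time they are recomputed.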

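As with probability theory, statistics becomes more useful as the scope of your project grows. Much of the time you can feel your way through without any formal theory. But as the game grows, along with its user base and its budget, you need to be prepared for the inherent flaws of an unbalanced design built purely on intuition.

Keep in mind that statistics and probability cannot design the game for you; at best they play a supporting role!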

Article 1, Article 2, Article 3, Article 4, Article 5 (Compiled by Gamerboom; please credit the source when reposting, or contact WeChat zhengjintiao)

Article 1: Paul Williams is undertaking a PhD in Cognitive Psychology at the University of Newcastle, under the supervision of Dr. Ami Eidels. He is interested in developing online gaming platforms suitable for the investigation of cognitive phenomena, and is currently focused on refining and implementing a novel paradigm to study the behavioral phenomenon known as the “hot hand.”

Balancing Risk and Reward to Develop an Optimal Hot-Hand Game

Abstract

This paper explores the issue of player risk-taking and reward structures in a game designed to investigate the psychological phenomenon known as the ‘hot hand’. The expression ‘hot hand’ originates from the sport of basketball, and the common belief that players who are on a scoring streak are in some way more likely to score on their next shot than their long-term record would suggest. There is a widely held belief that players in many sports demonstrate such streaks in performance; however, a large body of evidence discredits this belief. One explanation for this disparity between beliefs and available data is that players on a successful run are willing to take greater risks due to their growing confidence. We are interested in investigating this possibility by developing a top-down shooter. Such a game has unique requirements, including a well-balanced risk and reward structure that provides equal rewards to players regardless of the tactics they adopt. We describe the iterative development of this top-down shooter, including quantitative analysis of how players adapt their risk taking under varying reward structures. We further discuss the implications of our findings in terms of general principles for game design.

Key Words: risk, reward, hot hand, game design, cognitive, psychology

Introduction

Balancing risk and reward is an important consideration in the design of computer games. A good risk and reward structure can provide a lot of additional entertainment value. It has even been likened to the thrill of gambling (Adams, 2010, p. 23). Of course, if players gamble on a strategy, they assume some odds, some amount of risk, as they do when betting. On winning a bet, a person reasonably expects to receive a reward. As in betting, it is reasonable to expect that greater risks will be compensated by greater rewards. Adams not only states that “A risk must always be accompanied by a reward” (2010, p. 23) but also believes that this is a fundamental rule for designing computer games.

Indeed, many game design books discuss the importance of balancing risk and reward in a game:

* “The reward should match the risk” (Thompson, 2007, p.109).

* “… create dilemmas that are more complex, where the players must weigh the potential outcomes of each move in terms of risks and rewards” (Fullerton, Swain, & Hoffman, 2004, p.275).

* “Giving a player the choice to play it safe for a low reward, or to take a risk for a big reward is a great way to make your game interesting and exciting” (Schell, 2008, p.181).

Risk and reward matter in many other domains, such as stock-market trading and sport. In the stock market, risks and rewards affect choices among investment options. Some investors may favour a risky investment in, say, nano-technology stocks, since the high risk is potentially accompanied by high rewards. Others may be more conservative and invest in solid federal bonds which fluctuate less, and therefore offer less reward, but also offer less risk. In sports, basketball players sometimes take more difficult and hence riskier shots from long distance, because these shots are worth three points rather than two.

Psychologists, cognitive scientists, economists and others are interested in the factors that affect human choices among options varying in their risk-reward structure. However, stock markets and sport arenas are ‘noisy’ environments, making it difficult (for both players and researchers) to isolate the risks and rewards of any given event. Computer games provide an excellent platform for studying, in a well-controlled environment, the effects of risk and reward on players’ behaviour.

We examine risk and reward from both cognitive science and game design perspectives. We believe these two perspectives are complementary. Psychological principles can help inform game design, while appropriately designed games can provide a useful tool for studying psychological phenomena.

Specifically, in the current paper we discuss the iterative, player-centric development (Sotamaa, 2007) of a top-down shooter that can be used to investigate the psychological phenomenon known as the ‘hot hand’. Although the focus of this paper is on the process of designing risk-reward structures to suit the design requirements of a hot-hand game, we begin with an overview of this phenomenon and the current state of research. In subsequent sections we describe three stages of game design and development. In our final section we relate our findings back to more general principles of game design.

The Hot Hand

The expression ‘hot hand’ originates from basketball and describes the common belief that players who are on a streak of scoring are more likely to score on their next shot. That is, they are on a hot streak or have the ‘hot hand’. In a survey of 100 basketball fans, 91% believed that players had a better chance of making a shot after hitting their previous two or three shots than after missing their previous few shots (Gilovitch, Vallone, & Tversky, 1985).

While intuitively these beliefs and predictions seem reasonable, seminal research found no evidence for the hot hand in the field-goal shooting data of the 1980-81 Philadelphia 76ers, or the free-throw shooting data of the 1980-81 and 1981-82 Boston Celtics (Gilovitch et al., 1985). With few exceptions, subsequent studies across a range of sports confirm this surprising finding (Bar-Eli, Avugos, & Raab, 2006) – suggesting that hot and cold streaks of performance could be a myth.

However, results of previous hot hand investigations reveal a more complicated picture. Specifically, previous studies suggest that a distinction can be made between tasks of ‘fixed’ difficulty and tasks of ‘variable’ difficulty. A good example of a ‘fixed’ difficulty task is free-throw shooting in basketball. In this type of shooting the distance is kept constant, so each shot has the same difficulty level. In a ‘variable’ difficulty task, such as field shooting during the course of a basketball game, players may adjust their level of risk from shot-to-shot, so the difficulty of the shot varies depending on shooting distance, the amount of defensive pressure, and the overall game situation.

Evidence suggests it is possible for players to get on hot streaks in fixed difficulty tasks such as horseshoe pitching (Smith, 2003), billiards (Adams, 1996), and ten-pin bowling (Dorsey-Palmenter & Smith, 2004). In variable difficulty tasks, however, such as baseball (Albright, 1993), basketball (Gilovitch et al., 1985), and golf (Clark, 2003a, 2003b, 2005), there is no evidence for hot or cold streaks – despite the common belief to the contrary.

The most common explanation for the disparity between popular belief (hot hand exists) and actual data (lack of support for hot hand) is that humans tend to misinterpret patterns in small runs of numbers (Gilovitch et al., 1985). That is, we tend to form patterns based on a cluster of a few events, such as a player scoring three shots in a row. We then use these patterns to help predict the outcome of the next event, even though there is insufficient information to make this prediction (Tversky & Kahneman, 1974). In relation to basketball shooting, after a run of three successful shots, people would incorrectly believe that the next shot is more likely to be successful than the player’s long term average. This is known as the hot-hand fallacy.
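The comparison behind the fallacy, the hit rate on shots that follow a streak versus the long-term average, can be sketched in Python. The shooter below is simulated with independent shots at a fixed 50% hit probability; that probability, the seed, and the function name are assumptions for illustration, not data from any of the cited studies.

```python
import random
from typing import Optional, Sequence


def hit_rate_after_streak(shots: Sequence[bool], streak: int) -> Optional[float]:
    """Hit rate on shots that directly follow `streak` consecutive hits."""
    followers = [
        shots[i]
        for i in range(streak, len(shots))
        if all(shots[i - streak:i])
    ]
    if not followers:
        return None  # no streak of that length occurred
    return sum(followers) / len(followers)


# Simulated independent shooter: every shot hits with probability 0.5.
rng = random.Random(0)
simulated = [rng.random() < 0.5 for _ in range(100_000)]
overall = sum(simulated) / len(simulated)
after_three = hit_rate_after_streak(simulated, 3)
print(f"overall: {overall:.3f}, after three hits: {after_three:.3f}")
```

For an independent shooter the two rates should agree up to sampling noise; with only a handful of shots the conditional rate swings widely, which is one reason short runs invite over-interpretation.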

A different explanation for this disparity suggests shooters tend to take greater risks during a run of success, for no loss of accuracy (Smith, 2003). Under this scenario, a player does show an increase in performance during a hot streak, as they are performing a more difficult task at the same level of accuracy. This increase in performance may in turn be reflected in hot hand predictions, but it would not be detected by traditional measures of performance. While this hypothetical account receives tentative support from the distinction between fixed and variable difficulty tasks (as the hot hand is more likely to appear in fixed-difficulty tasks, where players cannot engage in a more difficult shot), this hypothesis requires further study.

Unfortunately, trying to gather more data to investigate the hot hand phenomenon from sporting games and contests is fraught with problems of subjectivity. How can one assess the difficulty of a given shot over another in basketball? How can one tell if a player is adopting an approach with more risk?

An excellent way to overcome this problem is to design a computer game of ‘variable’ difficulty tasks that can accurately record changes in player strategies. Such a game can potentially answer a key question relevant to both psychology and game design – how do people (players) respond to a run of success or failure (in a game challenge)?

The development of this game, which we call a ‘hot hand game’, is the focus of this paper. Such a game requires a finely tuned risk and reward structure, and the process of tuning this structure provides a unique empirical insight into players’ risk-taking behaviour. At each stage of development we test the game to measure how players respond to the risk and reward structure. We then analyse these results in terms of player strategy and performance and use this analysis to inform our next stage of design.

This type of design could be characterised as iterative and player-centric (Sotamaa, 2007). While the game design in this instance is simple, the precise requirements of the psychological investigation mean that player testing is more formal than might traditionally be used in game development. Consequently, changes in player strategy can be precisely evaluated. We find that even subtle changes to risk and reward structures impact on players’ risk-taking strategy.

Game Requirements and Basic Design

A hot hand game that addresses how players respond to a run of success or failure has special requirements. First and foremost, the game requires a finely-tuned risk and reward structure. The game must have several (5-7), well-balanced risk levels, so that players are both able and willing to adjust their level of risk in response to success and failure. If, for example, one risk level provides substantially more reward than any other, players will learn this reward structure over time, and be unlikely to change strategy throughout play. We would thus like each risk level to be, for the average player, equally rewarding. In other words, regardless of the level of risk adopted, the player should have about the same chance of obtaining the best score.

The second requirement for an optimal hot hand game is that it allows measurement of players’ strategy after runs of both successes and failures. If people fail most of the time, we will not record enough runs of success. If people succeed most of the time, we will not observe enough runs of failure. Thus, the core challenge needs to provide a probability of success, on average, somewhere in the range of 40-60%.

The game developed to fulfil these requirements was a top-down shooter developed in Flash using ActionScript. While any simple action game based on a physical challenge with hit-miss scoring could be suitably modified for our purposes, a top-down shooter holds several advantages. Firstly, high familiarity with the style means the learning period for players is minimal, supporting our aims of using the game for experimental data collection. Secondly, the simple coding of key difficulty parameters (i.e. target speeds and accelerations) allows the reward structure to be easily and precisely manipulated. Lastly, a ‘shot’ of a top-down shooter is analogous to a ‘shot’ in basketball, with similar outcomes of ‘hit’ and ‘miss’. This forms a clear and identifiable connection between the current experiment and the origins of the hot hand.

In the top-down shooter, the goal of the player is to shoot down as many alien spaceships as possible within some fixed amount of time. This means the number of overall shots made, as well as the number of hits, depend on player performance and strategy. The game screen shows two spaceships, representing an alien and the player-shooter (Figure 1). The simple interface provides feedback about the current number of kills and the time remaining. During the game the player’s spaceship remains stationary at the bottom centre of the screen. Only a single alien spaceship appears at any one time. It moves horizontally back-and-forth across the top of the screen, and bounces back each time it hits the right or left edges. The player shoots at the alien ship by pressing the spacebar. For each new alien ship the player has only a single shot with which to destroy it. If an alien is destroyed the player is rewarded with a kill.

Figure 1: The playing screen.

Each alien craft enters from the top of the screen and randomly moves towards either the left or right edge. It bounces off each side of the screen, moving horizontally and making a total of eight passes before flying off. Initially the alien ship moves swiftly, but it decelerates at a constant rate, moving more slowly after each pass. This game therefore represents a variable difficulty task; a player can elect a desired level of risk as the shooting task becomes less difficult with each pass of the alien.

The risk and reward equation is quite simple for the player. The score for destroying an alien is the same regardless of when the player fires. Since the goal is to destroy as many aliens as possible in the game period, the player would benefit from shooting as quickly as possible; shooting in the early passes rewards the player with both a kill and more time to shoot at subsequent aliens. However, because the alien ship decelerates during each of the eight passes, the earlier a player shoots the less likely this player will hit the target. If a shot is missed, the player incurs a 1.5 second time penalty. That is, the next alien will appear only after a 1.5 second delay which is additional to the interval experienced for an accurate shot.

Stage One–Player Fixation

After self-testing the game, we deployed it so that it could be played online. Five players were recruited via an email circulated to students, family and friends. Players were instructed to shoot down as many aliens as possible within a given time block. They first played a practice level for six minutes before playing the competitive level for 12 minutes. The number of alien ships a player encountered varied depending on the player’s strategy and accuracy. A player could expect to encounter roughly 10 alien ships for every 60 seconds of play. At the completion of the game the player’s response time and accuracy were recorded for each alien ship.

Recall that one of the game requirements was that players take shots across a range of difficulty levels, represented by passes (later passes mean less difficult shots). This simple test provides evidence that a player is willing to explore the search space and alter her or his risk-taking behaviour throughout the game. Typical results for Players one and two are shown in Figure 2. In general, players tended to be very exploratory during the practice level of the game, as indicated by a good spread of shots between alien passes one and eight. During the competitive game time, however, players tended to invest in a single strategy, as indicated by the large spikes seen in the competition levels of Figure 2. This suggests that players, after an exploratory period, attempted to maximise their score by firing on a single, fixed pass.

Figure 2: Results for two typical players in Stage one of game development. The upper row shows data for Player 1, and the bottom row shows data for Player 2. The left column presents the frequency (%) of shots taken on each pass in the practice level, while the right column indicates the frequency (%) of shots taken on each pass in the competition level. Note that players experimented during the practice level, as evidenced by evenly spread frequencies across passes in the left panels, but then adopted a fixed strategy during the competitive block, as evidenced by spikes at pass 4 (Player 1) and pass 5 (Player 2). For each panel, n is the overall number of shots attempted by the player in that block, m is the mean firing pass, and sd is the standard deviation of the number of attempted shots.

In experimental terms, this fixation on a single strategy is known as ‘investment’. At the end of the game the players reported that, because of the constant level of deceleration, they could always shoot when the alien was at a specific distance from the wall if they stuck to the same pass. Players thus practiced a timing strategy specific to a particular alien pass (i.e., a specific difficulty level). The number of kills per unit time (i.e., the reward) was therefore always highest for that player when shooting at the same pass. In the example graphs (Figure 2), one player ‘invested’ in learning to shoot on pass four, the other, on pass five. This type of investment runs counter to one requirement of a hot-hand game, creating a major design flaw that needed to be fixed in the next iteration.

Stage Two–Encouraging Exploratory Play

The aim of the second stage of design was to overcome the problem of player investment in a single strategy. The proposed solution was to vary the position of the player’s ship so that it no longer appeared in the same location at the centre of the screen but rather was randomly shifted left or right of centre each time a new alien appeared (Figure 3). Thus, on each trial, the shooter’s location was sampled from a uniform distribution spanning 100 pixels to the left and to the right of the centre. This manipulation was intended to prevent the player from learning a single timing-sequence that was always successful on a single pass (such as always shooting on pass four when the alien was a certain distance from the side of the screen).
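The repositioning step can be sketched as a single uniform draw per alien. The original game was written in ActionScript; this Python version is only an illustration, and the screen-centre coordinate, the seed, and the function name are hypothetical.

```python
import random


def sample_shooter_x(centre_x, max_offset=100.0, rng=random):
    """Draw the ship's x position uniformly within max_offset of the centre."""
    return centre_x + rng.uniform(-max_offset, max_offset)


SCREEN_CENTRE_X = 320.0  # hypothetical screen centre, in pixels
rng = random.Random(42)  # seeded only so the sketch is repeatable
positions = [sample_shooter_x(SCREEN_CENTRE_X, rng=rng) for _ in range(5)]
print([round(x, 1) for x in positions])
```

Because the offset changes on every trial, a timing cue learned relative to the screen edge no longer lines up with the ship, which is the effect the manipulation was meant to achieve.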

Figure 3: The screen in Stage two of game development. The blue rectangle appears here for illustration purposes and indicates the potential range of locations used to randomly position the player’s ship. It did not appear on the actual game screen.

Once again we deployed an online version of the game and recorded data from six players. Players once again played a practice level for six minutes before they played the competitive level for 12 minutes.

The results for all individual players in the competitive game level are shown in Figure 4. Introducing random variation into the players’ firing position significantly decreased players’ tendency to invest in and fixate on a single pass. This decrease in investment is highlighted by the increase in the variance seen in Figure 4 when compared to Figure 2. Thus, the slight change in gameplay had a significant effect on players’ behaviour, encouraging them to alter their risk-taking strategy throughout the game. Furthermore, this change helps to meet the requirements necessary for hot hand investigation.

Figure 4: Individual player results for the competition level in Stage two testing. Players’ tendency to fire on a single pass in the competition level has been significantly reduced compared to Stage One, as evidenced by the reduction in spikes and, in most cases, increase in variance. For each panel, n is the overall number of shots attempted by the player in that block, m is the mean firing pass, and sd is the standard deviation of the number of attempted shots.

In Figure 5 we present data averaged across all players for both the practice and competition levels. This summary highlights how the game’s reward structure influenced player strategy throughout play. The left column corresponds to the practice level (not shown in Figure 4), while the right column corresponds to the competition level.

Figure 5: Average player results for Stage two. The left column presents the frequency (%) of shots taken on each pass in the practice level, while the right column indicates the frequency (%) of shots taken on each pass in the competition level. For each panel, m is the mean firing pass and n is the overall number of shots attempted by all players in that block. A comparison of mean firing pass for practice and competition levels highlights that as the game progressed, players fired later.

An inspection of Figure 5 highlights the fact that players’ shooting strategy altered in a predictable manner as the game progressed. For example, the mean firing pass for the practice level (m = 5.8) was smaller than that seen in the competition level (m = 6.21). Thus players tended to shoot later in the competition level. This suggests that the reward structure of the game was biased towards firing at later passes, and that as players became familiar with this reward structure they altered their gameplay accordingly.

Given the need to minimise such bias for hot hand investigation, we examined the risk and reward structure on the basis of average player performance. We were particularly interested in the probability of success for each pass, and how this probability translated into our reward system. Recall that firing on later passes takes more time but is also accompanied by a higher likelihood for success. As the aim of the hot hand game is to kill as many aliens as possible within a 12 minute period, both the probability of hits as well as the time taken to achieve these hits are important when considering the reward structure.

We therefore analysed how many kills per 12-minute block the average, hypothetical player would make if he or she were to consistently fire on a specific pass for each and every alien that appeared. For example, given the observed likelihood of success on pass one, how many kills would a player make by shooting only on pass one? How many kills on pass two, and so on. Results of this examination are reported in Figure 6. Figure 6A shows the average number of shots taken by players on each pass of the alien (overall height of bar) along with the average number of hits at each pass (height of yellow part of the bar). Figure 6B uses this data to plot the observed probability of success and shows that the probability for success is higher for later passes. This empirically validates that later passes are in fact ‘easier’ in a psychological sense.

Figure 6: Averaged results and some modelling predictions from Stage two of game development. In Panel A, the frequency (%) of shots attempted on each pass is indicated by the overall height of each bar. The proportion of hits and misses are indicated in yellow and blue. Panel B depicts the average probability of a hit for each pass, given by the number of hits out of overall shot attempts. Based on the empirical results, Panels C and D show the predicted number of successful shots if players were to consistently shoot on only one pass for the entire game (see text for details).

These probabilities allow empirical estimation of the number of total kills likely to be attained by the hypothetical average player if they were to shoot on only one pass for an entire 12 minute block. By plotting the number of total kills expected for each pass number, we produce an optimal strategy curve for the current game, as shown in Figure 6C. The curve is monotonically increasing, indicating that the total number of kills expected of an average player increases as the pass number increases. In other words, players taking less difficult shots are expected to make more hits within each game. The reward structure is clearly biased toward later passes, which validates the change in player strategy (i.e. firing on later passes) as the game progressed. As the players became accustomed to the reward structure, their strategy shifted accordingly to favour later, easier shots.

In game terms it might be considered an exploit to shoot on pass eight. Figure 6C indicates that consistently firing on pass 8 would clearly result in the greatest number of kills, making it the ‘optimal’ strategy for the average player. Given that an exploit of this kind reduces the likelihood of players to fire earlier in response to a run of successful shots, the current design still failed to meet the requirements for our hot hand game.

One simple adjustment to overcome this issue was to reduce the penalty period after an unsuccessful shot. While the current time penalty for a missed shot was set to 1.5 seconds, the ability to vary this penalty allows a good deal of flexibility within the reward structure. Players make many more shots, and thus many more misses, if they choose to fire on early passes, so decreasing the time penalty for a miss substantially increases the relative reward for firing on early passes.

In line with this thinking, Figure 6D shows the predicted number of kills in 12 minutes for the average player if the penalty for missing is reduced from 1.5 seconds to 0.25 seconds. This seemingly small change balances the reward structure so that players are more evenly rewarded, at least for passes three to eight. Estimates of the accuracy rate on passes one and two were based on a small number of trials, which makes them problematic for modelling; participants avoided taking early shots, perhaps because the alien was moving too fast for them to intercept. Allowing for players to fire on passes three to eight still provided us with a sufficient number of possible strategies for a hot hand investigation.
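The kind of calculation behind a strategy curve like Figures 6C and 6D can be approximated with a simple rate model: expected kills equal the block length times the hit probability, divided by the expected time spent per alien. This is a rough sketch rather than the authors' model, and every number in the two dictionaries is a hypothetical placeholder, not a measurement from the study.

```python
def expected_kills(hit_probability, seconds_to_fire, miss_penalty,
                   block_seconds=720.0):
    """Expected kills in one block when always firing on the same pass.

    Each alien costs the time until the shot plus, on a miss, the penalty.
    """
    seconds_per_alien = seconds_to_fire + (1.0 - hit_probability) * miss_penalty
    return block_seconds * hit_probability / seconds_per_alien


# Hypothetical inputs for passes 3 to 8 (NOT the study's measurements).
seconds_to_fire = {3: 1.8, 4: 2.6, 5: 3.55, 6: 4.65, 7: 5.95, 8: 7.55}
hit_probability = {3: 0.20, 4: 0.30, 5: 0.40, 6: 0.50, 7: 0.62, 8: 0.75}


def strategy_curve(miss_penalty):
    """Expected kills per 12-minute block for each single-pass strategy."""
    return {
        p: expected_kills(hit_probability[p], seconds_to_fire[p], miss_penalty)
        for p in seconds_to_fire
    }


for penalty in (1.5, 0.25):
    curve = strategy_curve(penalty)
    print(penalty, {p: round(kills, 1) for p, kills in curve.items()})
```

Under these assumed inputs, lowering the miss penalty helps early passes most, because early shots miss more often and therefore pay the penalty more often; that is the mechanism the paragraph above relies on.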

Stage Three–Balancing Risk and Reward

In stage two of our design we uncovered an exploitation strategy in the risk and reward structure of the game where players could perform optimally by shooting on pass eight of the alien. We suspect this influenced players to fire at later passes of the alien, particularly as the game progressed. Using empirical data to model player performance suggested that reducing the time penalty for a miss to 0.25 seconds would overcome this problem.

A modified version of the game, with a 0.25 second penalty after a miss, was made available online and data were recorded from five players. Averaged results show that players shot at roughly the same mean pass of the alien in the practice level and the competitive level (Figure 7). This pattern is in contrast with Figure 4, which highlighted a tendency for players to fire at later passes in the 12 minute competitive level. This data confirms the empirical choice of a 0.25 second penalty, and provides yet another striking example of how subtle changes in reward structure may influence players’ behaviour.

Figure 7: Average player results for Stage three of game development. The left plot presents the frequency (%) of shots attempted on each pass in the practice level, while the right plot indicates the frequency (%) of shots attempted on each pass in the competition level. For each panel, m is the mean firing pass and n is the overall number of shots taken by all players in that block. As indicated by the mean firing pass, under a balanced reward structure players no longer attempted to shoot on later passes as the game progressed.

Recall that we began the development of a hot-hand game with the requirement that for each level of assumed risk the game should be equally rewarding (total number of kills) for the average player. By balancing the reward structure, the design from stage three is now consistent with this requirement for investigating the hot hand.

Finally, we required the game to have an overall level of difficulty such that players would succeed on about 40-60 percent of attempts. Performance within this range would allow us to compare player strategy in response to runs of both success and failure, that is, to test for both hot and cold streaks. As highlighted by Figure 8, the overall probability of success does indeed meet this criterion; the overall probability of success (hits) was 43%. Thus, the game now meets the essential criteria required to investigate the hot hand phenomenon.

Figure 8: Averaged results from the competition level of Stage three of game development. In Panel A, the frequency (%) of shots attempted on each pass is indicated by the overall height of each bar. The proportion of hits and misses are indicated in yellow and blue. Panel B depicts the average probability of success for each pass, given by the number of hits out of overall shot attempts. In Panel B, ps is the overall probability of success (hits).

Discussion

We set out to design a computer game as a tool for studying a fascinating and widely studied psychological phenomenon called the ‘hot hand’ (e.g., Gilovitch, Vallone, & Tversky, 1985). For this we needed a game that allowed us to investigate player risk-taking in response to a string of successful or unsuccessful challenges.

We designed a simple top-down shooter game where players had a single shot at an alien spacecraft as it made eight passes across the screen. During the game the player faced this same challenge a number of times. The goal of the game was to kill as many aliens as possible in a set amount of time. The risk in the gameplay reduced on each pass as the alien ship slowed down. Shooting successfully on earlier passes rewarded the player with a kill and made a new alien appear immediately. Missing a shot penalised the player with an additional wait time before the next alien appeared.

As a hot hand game it was required to meet specific risk and reward criteria. Players should explore a range of risk-taking strategies in the game and they should be rewarded in a balanced way commensurate with this risk. We also wanted the game challenge to have an average success rate roughly equal to the failure rate, between 40 and 60 percent, so that we could use the game to gather data about players’ behaviour in response to both success and failure.

To achieve our objective we developed the game in an iterative fashion over three stages. At each stage we tested an online version of the game, gathering empirical data and analysing the players’ strategy and performance. In each successive stage of design we then altered the game mechanics so they were balanced in a way that met our specific hot hand requirements. The design changes and their effects are summarised in Table 1.

Table 1. A summary of changes to design in each of the stages and the effect of these changes on meeting the hot hand requirements.

Books on game design tend to prescribe an iterative design process. Iterative processes allow unforseen problems to be addressed in successive stages of design. This is especially important in games where the requirements for the game mechanics are typically only partially known and tend to emerge as the game is built and played. Salen and Zimmerman describe this iterative process as “play-based” design and also emphasise the importance of “playtesting and prototyping” (2004, p. 4). For this purpose successive prototypes of the game are required. Indeed we began with only high-level requirements and used this same iterative, prototyping approach to refine our gameplay.

The main difference in our approach is that we more formally measured player’s strategies and exploration behaviours in each stage of design. Given that our game requirements are rather unique, it is unlikely that subjective feedback alone would have allowed us to make the required subtle changes to game mechanics. For example, during the initial testing of the game we found that players tended to invest in a single playing strategy. Further analysis also revealed a potential exploit in the game as players could easily optimise their total number of kills by shooting on the last pass of each alien ship.

The issue of exploits in games is often debated in gaming circles and is also well studied in psychology. Indeed, trade-offs between exploitation and exploration exist in many domains (e.g., Hills, Todd, & Goldstone, 2008; Walsh, 1996). External and internal conditions determine which strategy the organism, or the player, will take in order to maximise gains and minimise losses. For example, when foraging for food, the distribution of resources matters. Clumped resources lead to a focused search in the nearby vicinity where they are abundant (exploitation), whereas diffused resources lead to broader exploration of the search space.

Hills et al. showed that exploration and exploitation strategies compete in mental spaces as well, depending on the reward for desired information and the toll incurred by search time for exploration. In the context of our game, a shooting strategy of consistently attempting the easiest shooting level produced the highest reward. This encouraged players to drift toward later firing as the game progressed, and in turn inhibited players from exploring alternate (earlier firing) strategies. It is unlikely we could have predicted this without collecting empirical data from players.

A further advantage of gathering empirical data was that it allowed us to remodel our reward structure based on precise measures of player performance. In stages one and two players lost 1.5 seconds each time they missed an alien. In stage three we reduced this penalty to 0.25 seconds based on our analysis and modelling of player behaviour. This relatively minor change was enough to change players’ behaviour and encourage them to risk earlier shots at the alien. The fact that our game is quite simple in nature reinforces both the difficulty and importance of designing a well-balanced risk and reward structure.
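To illustrate why a change in miss penalty can shift which strategy pays best, here is a minimal sketch. It is not the authors' model: the three firing options, their waiting times and their hit probabilities are invented for illustration, and only the two penalty values (1.5 and 0.25 seconds) come from the text. The long-run kill rate of a strategy is taken as expected kills per attempt divided by expected time per attempt.

```python
# Hypothetical firing options (NOT from the paper):
# name -> (seconds waited before the shot, probability of a hit)
OPTIONS = {
    "early": (1.0, 0.40),   # quick but risky
    "middle": (2.0, 0.60),
    "late": (3.0, 0.85),    # slow but safe
}


def kills_per_second(wait, p_hit, miss_penalty):
    """Long-run kill rate: expected kills per attempt / expected seconds per attempt."""
    expected_time = wait + (1 - p_hit) * miss_penalty
    return p_hit / expected_time


def best_option(miss_penalty):
    """Name of the option with the highest long-run kill rate."""
    return max(
        OPTIONS,
        key=lambda name: kills_per_second(*OPTIONS[name], miss_penalty),
    )


for penalty in (1.5, 0.25):  # the two penalties mentioned in the text
    print(penalty, best_option(penalty))
```

With these assumed numbers, the safe late shot has the best rate under the 1.5 second penalty, while the risky early shot has the best rate under the 0.25 second penalty. Different assumed hit probabilities could give a different picture, which is why measured player data matters.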

Another common principle in the game literature is player-centred design, which Adams defines as “a philosophy of design in which the designer envisions a representative player of a game the designer wants to create” (2010, p. 30). Although game-design texts often refer to player-centred design, there is some suggestion that design is often based purely on designer experience (Sotamaa, 2007). Involving players in the design process typically relies on subjective feedback from approaches such as focus groups and interviews, which have generally been used in usability design. Our study shows that, even when designing a simple game challenge, using empirical data to measure how players approach the game and how they perform can be another vital element in balancing the gameplay.

We also recognise some dangers with this approach, as averaging player performance can hide important differences between players. It would be nice to have a model of an ideal player, but it is unlikely such a player exists. In fact, there are many different opinions about who the ‘player’ is (Sotamaa, 2007). The empirical data therefore need to be gathered from the available player population. If there are broad differences among these players, the designer may need to sample different groups, for example a group of casual players and a group of hard-core gamers.

Importantly for future research, the game design at which we arrived is now suitable for investigating the hot hand phenomenon. Such a game can potentially answer a number of questions:

1. How do players respond to a run of success or failure in a game challenge?

2. Will a player take on more difficult challenges if they are on a hot streak?

3. Will they lower their risk if they are on a cold streak?

4. How will this variable risk level impact on their overall measure of performance?

5. How can the hot hand principle be used in the design of game mechanics?

Answers to such questions will not only be of interest to psychologists, but could also further inform game design. For example, it might allow the designer to engineer a hot streak so that players would take more risks or be more explorative in their strategies. Of course in a game it might even be appropriate to use a cold streak to discourage a player’s current strategy. The game mechanics could help engineer these streaks in a very transparent way without breaking player immersion. Further investigations of the hot hand hold significant promise for both psychology and game design.

Article 2: Game Developer Column 9: Playing the Odds

By Soren Johnson

One of the most powerful tools a designer can use when developing games is probability, using random chance to determine the outcome of player actions or to build the environment in which play occurs. The use of luck, however, is not without its pitfalls, and designers should be aware of the trade-offs involved – what chance can add to the experience and when it can be counterproductive.

Failing at Probability

One challenge with using randomness is that humans are notoriously poor at accurately evaluating probability. A common example is the Gambler’s Fallacy, which is the belief that odds will even out over time. If the Roulette wheel comes up black five times in a row, players often believe that the odds of coming up black again are quite small, even though clearly the streak makes no difference whatsoever. Conversely, people also see streaks where none actually exist – the shooter with a ‘hot hand’ in basketball, for example, is a myth. Studies show that, if anything, a successful shot actually predicts a subsequent miss.
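The roulette claim can be checked with a short simulation. This is only a sketch: it assumes an American wheel (18 black pockets out of 38) and independent spins, and the function name and parameters are made up for the example. It measures how often black comes up immediately after five or more blacks in a row.

```python
import random

P_BLACK = 18 / 38  # assumption: American wheel, 18 black pockets out of 38


def black_after_streak(spins, streak_len=5, seed=0):
    """Fraction of spins landing black right after `streak_len` or more blacks."""
    rng = random.Random(seed)
    run = 0          # current number of consecutive blacks
    follow_ups = 0   # spins that came directly after a long enough streak
    blacks = 0       # how many of those follow-up spins were black
    for _ in range(spins):
        is_black = rng.random() < P_BLACK
        if run >= streak_len:
            follow_ups += 1
            blacks += is_black
        run = run + 1 if is_black else 0
    return blacks / follow_ups


print(round(P_BLACK, 3), round(black_after_streak(1_000_000), 3))
```

The two printed numbers should be close to each other, which is the point: a streak does not change the odds of the next spin.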

Also, as designers of slot machines and MMOs are quite aware, setting odds unevenly between each progressive reward level makes players think that the game is more generous than it really is. One commercial slot machine had its payout odds published by wizardofodds.com in 2008:

* 1:1 per 8 plays

* 2:1 per 600 plays

* 5:1 per 33 plays

* 20:1 per 2,320 plays

* 80:1 per 219 plays

* 150:1 per 6,241 plays

The 80:1 payoff is common enough to give players the thrill of beating the odds for a big win but still rare enough that the casino is at no risk of losing money. Furthermore, humans have a hard time estimating extreme odds – a 1% chance is anticipated too often and 99% odds are considered to be as safe as 100%.
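A little arithmetic on the listed odds shows how uneven the tiers are. The sketch below assumes that "N:1 per M plays" means a payout of N units arrives on average once every M plays of a one-unit bet, and that the list may not cover every payout on the machine, so the total should not be read as the machine's official return.

```python
# (payout multiple, average plays per hit), copied from the list above
PAYOUTS = [(1, 8), (2, 600), (5, 33), (20, 2320), (80, 219), (150, 6241)]


def tier_contributions(payouts):
    """Expected units returned per play by each tier, under the stated assumption."""
    return {multiple: multiple / plays for multiple, plays in payouts}


contrib = tier_contributions(PAYOUTS)
total = sum(contrib.values())

for multiple, value in contrib.items():
    print(f"{multiple}:1 contributes {value:.4f} per play")
print(f"listed tiers together: {total:.4f} per play")
```

Under this reading, the 80:1 tier alone accounts for more than half of the value returned by the listed tiers, while the 2:1 and 20:1 tiers contribute almost nothing.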

Leveling the Field

These difficulties in accurately estimating odds actually work in the favor of the game designer. Simple game design systems, such as the dice-based resource generation system in Settlers of Catan, can be tantalizingly difficult to master with a dash of probability.

In fact, luck makes a game more accessible because it shrinks the gap – whether in perception or in reality – between experts and novices. In a game with a strong luck element, beginners believe that, no matter what, they have a chance to win. Few people would be willing to play a chess Grandmaster, but playing a backgammon expert is much more appealing – a few lucky throws can give anyone a chance.

In the words of designer Dani Bunten, “Although most players hate the idea of random events that will destroy their nice safe predictable strategies, nothing keeps a game alive like a wrench in the works. Do not allow players to decide this issue. They don’t know it but we’re offering them an excuse for when they lose (‘It was that damn random event that did me in!’) and an opportunity to ‘beat the odds’ when they win.”

Thus, luck serves as a social lubricant – the alcohol of gaming, so to speak – that increases the appeal of multiplayer gaming to audiences which would not normally be suited for cutthroat head-to-head competition.

Where Luck Fails

Nonetheless, randomness is not appropriate for all situations or even all games. The ‘nasty surprise’ mechanic is never a good idea. If a crate provides ammo and other bonuses when opened but explodes 1% of the time, the player has no chance to learn the probabilities in a safe manner. If the explosion occurs early enough, the player will immediately stop opening crates. If it happens much later, the player will feel unprepared and cheated.

Also, when randomness becomes just noise, the luck simply detracts from the player’s understanding of the game. If a die roll is made every time a StarCraft Marine shoots at a target, the rate of fire will simply appear uneven. Over time, the effect of luck on the game’s outcome will be negligible, but the player will have a harder time grasping how strong a Marine’s attack actually is with all the extra random noise.
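The "just noise" point can be made concrete with a small calculation. This sketch assumes a hypothetical unit whose shots hit independently with a fixed chance and deal fixed damage. None of these values are real StarCraft numbers. For such a unit, the standard deviation of total damage divided by its mean depends only on the hit chance and the number of shots.

```python
import math


def relative_spread(hit_chance, shots):
    """Std dev of total damage divided by its mean, for independent hit-or-miss shots.

    Total hits are binomial, so the ratio is sqrt((1 - p) / (p * n)).
    """
    return math.sqrt((1 - hit_chance) / (hit_chance * shots))


for shots in (10, 100, 1000):
    print(shots, round(relative_spread(0.5, shots), 3))
```

The spread shrinks with the square root of the number of shots, so over a long fight the rolls barely affect the result even though each burst looks erratic to the player.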

Further, luck can slow down a game unnecessarily. The board games History of the World and Small World have a very similar conquest mechanic, except that the former uses dice and the latter does not (until the final attack). Making a die roll with each attack causes a History of the World turn to last at least three or four times as long as a turn in Small World. The reason is not just the logistical issues of rolling so many dice – knowing that the results of one’s decisions are completely predictable allows one to plan out all the steps at once without worrying about contingencies. Often, handling contingencies is a core part of the game design, but game speed is an important factor too, so designers should be sure that the trade-off is worthwhile.

Finally, luck is very inappropriate for calculations that determine victory. Unlucky rolls feel fairer the longer players are given to react to them before the game’s end. Thus, the earlier luck plays a role, the better for the perception of game balance. Many classic card games – pinochle, bridge, hearts – follow a standard model of an initial random distribution of cards that establishes the game’s ‘terrain’ followed by a luck-free series of tricks which determines the winners and losers.

Probability is Content

Indeed, the idea that randomness can provide an initial challenge to be overcome plays an important role in many classic games, from simple games like Minesweeper to deeper ones like NetHack and Age of Empires. At their core, solitaire and Diablo are not so different – both present a randomly-generated environment that the player needs to navigate intelligently for success.

An interesting recent use of randomness was Spelunky, indie developer Derek Yu’s combination of the random level generation of NetHack with the game mechanics of 2D platformers like Lode Runner. The addictiveness of the game comes from the unlimited number of new caverns to explore, but frustration can emerge from the wild difficulty of certain unplanned combinations of monsters and tunnels.

In fact, pure randomness can be an untamed beast, creating game dynamics that throw an otherwise solid design out of balance. For example, Civilization 3 introduced the concept of strategic resources which were required to construct certain units – Chariots need Horses, Tanks need Oil, and so on. These resources were sprinkled randomly across the world, which inevitably led to large continents with only one cluster of Iron controlled by a single AI opponent. Complaints of being unable to field armies for lack of resources were common among the community.

For Civilization 4, the problem was solved by adding a minimum amount of space between certain important resources, so that two sources of Iron could never be within seven tiles of each other. The result was a still unpredictable arrangement of resources around the globe but without the clustering that could doom an unfortunate player. On the other hand, the game actively encouraged clustering for less important luxury resources – Incense, Gems, Spices – to promote interesting trade dynamics.
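One simple way to get random placement with a minimum spacing is rejection sampling: pick random tiles and discard any that fall too close to a resource already placed. The sketch below is an illustration only, not the Civilization 4 implementation. The map size, the use of Chebyshev (king-move) distance and the function name are all assumptions.

```python
import random


def place_resources(width, height, count, min_gap, rng, max_attempts=10_000):
    """Randomly place up to `count` tiles so that no two are within `min_gap` tiles.

    Distance is Chebyshev distance (an assumption). Returns the placed (x, y) tiles,
    which may be fewer than `count` if the attempt budget runs out.
    """
    placed = []
    attempts = 0
    while len(placed) < count and attempts < max_attempts:
        attempts += 1
        x, y = rng.randrange(width), rng.randrange(height)
        # Reject the candidate if it is too close to any earlier placement.
        if all(max(abs(x - px), abs(y - py)) > min_gap for px, py in placed):
            placed.append((x, y))
    return placed


# Hypothetical 40x25 map with five Iron sources, none within seven tiles of another.
iron = place_resources(40, 25, count=5, min_gap=7, rng=random.Random(42))
print(iron)
```

The result is still unpredictable from game to game, but the spacing rule rules out the single tight cluster described above. Dropping the distance check, or inverting it, would give the clustered behaviour wanted for luxury resources.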

Showing the Odds

Ultimately, when considering the role of probability, designers need to ask themselves ‘how is luck helping or hurting the game?’ Is randomness keeping the players pleasantly off-balance so that they can’t solve the game trivially? Or is it making the experience frustratingly unpredictable so that players are not invested in their decisions?

One factor which helps ensure the former is making the probability as explicit as possible. The strategy game Armageddon Empires based combat on a few simple die rolls and then showed the dice directly on-screen. Allowing the players to peer into the game’s calculations increases their comfort level with the mechanics, which makes chance a tool for the player instead of a mystery.

Similarly, with Civilization 4, we introduced a help mode which showed the exact probability of success in combat, which drastically increased player satisfaction with the underlying mechanics. Because humans have such a hard time estimating probability accurately, helping them make a smart decision can improve the experience immensely.

Some deck-building card games, such as Magic: The Gathering or Dominion, put probability in the foreground by centering the game experience on the likelihood of drawing cards in the player’s carefully constructed deck. These games are won by players who understand the proper ratio of rares to commons, knowing that each card will be drawn exactly once each time through the deck. This concept can be extended to other games of chance by providing, for example, a virtual “deck of dice” that ensures the distribution of die rolls is exactly even.
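A "deck of dice" can be sketched as a shuffled pile holding every possible outcome exactly once, drawn without replacement and reshuffled when empty. The class and method names below are made up for the example, and two six-sided dice are assumed.

```python
import random
from collections import Counter
from itertools import product


class DiceDeck:
    """Draws dice totals from a shuffled deck containing each combination once."""

    def __init__(self, sides=6, dice=2, rng=None):
        self._rng = rng or random.Random()
        # One card per ordered combination of faces, e.g. 36 cards for 2d6.
        self._outcomes = [
            sum(faces) for faces in product(range(1, sides + 1), repeat=dice)
        ]
        self._pile = []

    def roll(self):
        """Return the next total, reshuffling a fresh deck when the pile is empty."""
        if not self._pile:
            self._pile = self._outcomes[:]
            self._rng.shuffle(self._pile)
        return self._pile.pop()


deck = DiceDeck(rng=random.Random(1))
rolls = [deck.roll() for _ in range(36)]
counts = Counter(rolls)
print(sorted(counts.items()))
```

Over each full pass of 36 draws, every total appears exactly as often as the true distribution predicts (for example, six 7s and one 12), while the order within a pass stays random. The trade-off is that attentive players can count cards near the end of a pass.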