An Introduction to Game User Analytics: Definition and Role (Part 2)

By Anders Drachen, Alessandro Canossa, Magy Seif El-Nasr

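3. Core business attributes: the essential attributes tied to the core of the company’s business model, for example logging every time a user buys a virtual item (and which item it is), makes a friend connection in-game, or recommends the game to a Facebook friend, along with any other attributes related to revenue, retention, virality, and churn. For a mobile game, geolocation data can be valuable for targeted marketing. In a traditional retail setting, none of this is of interest. (See Part 1 of this article.)

4. Stakeholder requirements: In addition, a range of stakeholder requirements has to be considered. For example, management or marketing may place a high value on the number of Daily Active Users (DAU). These requirements may or may not align with the categories above.

5. QA and user research: Finally, if you want to use telemetry data for user research/user testing and quality assurance (recording crashes and their causes, the hardware configuration of client systems, notable game settings, and so on), you will need to extend the attributes on the feature list accordingly.

When you build the initial attribute set and plan the metrics derived from it, make sure the selection process is as well informed as possible and includes all stakeholders. This minimizes the need to go back into the code later and add further hooks, a waste of time that careful planning can avoid.

That said, as the game changes during production and after launch, it will usually be necessary to embed new hooks in the code to track new attributes and support an evolving analytics practice. Sampling is another important consideration: it may not be necessary to track every shot a player fires, only a small share of them. Sampling is a large topic in its own right and is not covered further here, beyond noting that it can be an effective way to cut the resource requirements of game analytics.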

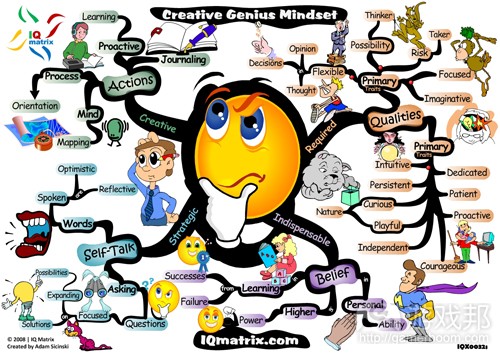

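(Figure 2: The drivers of attribute selection for user behavior attributes. Given the broad scope of application of game analytics, there are a number of sources of requirements.)

Preselecting Features

One important factor in feature selection is the extent to which your attribute set can be driven by advance planning, that is, by defining the game metrics and analysis results you want to obtain from user telemetry and then selecting attributes to match.

Reducing complexity is necessary, but when you restrict the scope of data collection you run the risk of missing important patterns in user behavior that the preselected attributes cannot detect. The problem gets worse when the game metrics and analyses are also predefined: relying on a set of Key Performance Indicators (such as DAU, MAU, ARPU, and LTV) can remove any chance of finding patterns in the behavioral data that those metrics do not capture. In general, a balance between the two situations is the best solution, depending on the analytics resources available. For example, focusing exclusively on KPIs will not tell you about in-game behavior, such as why 35 percent of players quit on level 8; to answer that, we need to look at metrics related to design and performance.

Note that user analytics deals with human behavior, which is notoriously unpredictable. This makes it hard to predict what user analytics will require, and it underlines the need for both explorative methods (we look at the user data to see what patterns it contains) and hypothesis-driven methods (we know what we want to measure and what the possible results are, just not which one is correct).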

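Strategies Driven by Designers’ Knowledge

During gameplay, a user creates a continual loop of actions and responses that keeps the game state changing. At any given moment, many features of user behavior may therefore change value. A first step toward isolating which features to use in analysis is a comprehensive, detailed list of all possible interactions between the game and its players. Designers know these interactions extremely well, so it helps to use that knowledge and ask them to compile such lists from the start.

Second, given the sheer number of variables in even the simplest game, complexity has to be reduced through knowledge-driven factor reduction: designers can easily identify isomorphic interactions. These are groups of interactions, behaviors, and state changes that are essentially the same even if they differ slightly in form. For example, “restoring 5 HP with a bandage” and “restoring 50 HP with a potion” differ in form but are essentially the same behavior. Isomorphic interactions are then grouped into larger domains. Finally, we need to identify measures that capture all the isomorphic interactions in each domain. For the domain “healing,” for example, there is no need to track the number of potions and bandages used; it is enough to record every state change to the player’s “health.”

These domains are not derived through objective factor reduction. There is a clear interpretive bias whenever people are asked to group elements into categories, even when designers have exhaustive expert knowledge. These larger domains can potentially contain every behavior players can express in the game, and they also help decide which game variables to monitor and how.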

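Strategies Driven by Machine Learning

Machine learning is the field of study that gives computers the ability to learn without being explicitly programmed. More than an alternative to designer-driven strategies, automated feature selection is a complementary way to reduce the complexity of the many state changes produced by player-game interaction. Traditionally, automated approaches are applied to existing datasets, relational databases, or data warehouses. This means that analyzing the game systems, defining variables, and establishing measures for those variables all fall outside the scope of automated strategies; people have already defined which variables to track and how. Automated approaches therefore only single out the most relevant and most discriminating features among the variables being monitored.

Automated feature selection relies on algorithms that search the attribute space and drop features that are highly correlated with others; the algorithms range from simple to complex. Methods include clustering, classification, prediction, and sequence mining. These can be used to find the most relevant features, because features that are irrelevant to the definition of types distort the similarity measure and degrade the quality of the clusters the algorithm finds.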

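Diminishing Returns

With infinite resources, you could track, store, and analyze every user-initiated action: all server-side system information, every fraction of an avatar’s movement, every purchase, every chat message, every button press, even every keystroke. Doing so would likely cause bandwidth problems and would require substantial resources to add the message hooks to the game code, but in theory this brute-force approach to game analytics is possible.

However, it produces very large datasets, which in turn demand huge resources to transform and analyze. For example, in an FPS, tracking weapon type, weapon modifications, range, damage, target, kills, player and target positions, bullet trajectory, and so on enables a very in-depth analysis of weapon use. Yet the key metrics for evaluating weapon balance may simply be range, damage done, and how often each weapon is used. Adding further variables/features may yield no new insight and may even add noise or confusion to the analysis. Similarly, it may not be necessary to log behavioral telemetry from every player, only from a percentage of them (this does not apply to sales records, because you need to track all revenue).

In general, if chosen well, the first variables/features that are tracked, collected, and analyzed provide a great deal of insight into user behavior. As more detailed aspects of user behavior are tracked, storage, processing, and analysis costs rise, while the rate of added value from the information in the telemetry data falls.

This means game telemetry has a cost-benefit relationship that amounts to a simplified theory of diminishing returns: increasing the amount of one source of data in an analysis process yields a lower per-unit return.

A classic example from the economics literature is adding fertilizer to a field. In an unbalanced (underfertilized) system, adding fertilizer increases the crop, but past a certain point the gain shrinks, stops, and may even turn into a loss. Adding fertilizer to an already balanced system does not increase the crop and may reduce it.

Fundamentally, game analytics follows the same principle. Given a particular set of input features/variables, an analysis can be optimized up to a certain point before new features are needed. Beyond that, adding more data to an analysis process may reduce the return, or in extreme cases produce a negative return because of the noise and confusion the extra data introduces. There are exceptions, of course: the cause of a problematic behavioral pattern that lowers retention in a social online game may lie in one small design flaw, which is hard to identify if the specific behavioral variables related to that flaw are not tracked.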

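Goals of User-Oriented Analytics

User-oriented game analytics serves a variety of purposes, which can be broadly divided as follows:

Strategic analytics, which targets the global view of how a game should evolve, based on analysis of user behavior and the business model.

Tactical analytics, which aims to inform game design in the short term, for example through an A/B test of a new game feature.

Operational analytics, which analyzes and evaluates the immediate, current situation in the game, for example indicating what changes to make to a persistent game to match user behavior in real time.

To an extent, operational and tactical analytics inform technical and infrastructure issues, whereas strategic analytics focuses on combining user telemetry data with other user data and/or market research.

When you plan a strategy for handling user telemetry, the first things to consider are these three types of user-oriented game analytics, the kinds of input data each requires, what you need to do to make sure all three are carried out, and how the resulting data is reported to the relevant stakeholders.

The second thing to consider is how to satisfy the needs of both the company and the users. The fundamental goal of game design is to create games that provide a good user experience. The fundamental goal of running a game development company, however, is to make money (GamerBoom note: at least from the investors’ point of view). The analytics process must produce output that supports decisions toward both goals. The underlying drivers of game analytics are twofold: 1) ensuring a quality user experience in order to acquire and retain users; 2) ensuring that the monetization cycle generates revenue, whatever the business model. User-oriented game analytics should inform design and monetization at the same time. Companies that have succeeded in the free-to-play market illustrate this approach: they use methods such as A/B testing to evaluate whether a specific design change improves both user experience and monetization.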

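Summing Up

So far, the discussion of feature selection has stayed at a fairly abstract level, aiming to create categories that guide selection and ensure comprehensive coverage, rather than producing lists of concrete metrics (shots fired per minute per weapon, kill/death ratio, jump success rate). This is because it is nearly impossible to write generic guidelines for metrics across all game types and usage situations. Games do not fall into neat design classes (GamerBoom note: games share a vast design space and do not cluster in particular areas of it), and the rate of innovation in design is high, which would quickly make any recommendations obsolete. Our best advice on user analytics is therefore to develop models from the top down, so that data collection has comprehensive coverage, and from the core out, starting with the main mechanics that drive the user experience (to help designers) and monetization (to help make sure designers get paid). Further detail can be added as resources permit. Finally, try to keep your decisions and process fluid and adaptable; that matters in an industry as competitive and exciting as this one.

(This article was compiled by GamerBoom/gamerboom.com. Reposts that do not retain the copyright notice are not permitted; to repost, please contact GamerBoom.)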

Intro to User Analytics

by Anders Drachen, Alessandro Canossa, Magy Seif El-Nasr

3) Core business attributes: The essential attributes related to the core of the business model of the company, for example, logging every time a user purchases a virtual item (and what that item is), establishes a friend connection in-game, or recommends the game to a Facebook friend — or any other attributes related to revenue, retention, virality, and churn. For a mobile game, geolocation data can be very interesting to assist target marketing. In a traditional retail situation, none of these are of interest, of course.

4) Stakeholder requirements: In addition, there can be an assortment of stakeholder requirements that need to be considered. For example, management or marketing may place a high value on knowing the number of Daily Active Users (DAU). Such requirements may or may not align with the categories mentioned above.

5) QA and user research: Finally, if there is any interest in using telemetry data for user research/user testing and quality assurance (recording crashes and crash causes, hardware configuration of client systems, and notable game settings), it may be necessary to augment the attributes on the list of features accordingly.

When building the initial attribute set and planning the metrics that can be derived from them, you need to make sure that the selection process is as well informed as possible, and includes all the involved stakeholders. This minimizes the need to go back to the code and embed additional hooks at a later time — which is a waste that can be eliminated with careful planning.

That being said, as the game evolves during production as well as following launch (whether a persistent game or through DLCs/patches), it will typically be necessary to some degree to embed new hooks in the code in order to track new attributes and thus sustain an evolving analytics practice. Sampling is another key consideration. It may not be necessary to track every time someone fires a gun; logging only 1 percent of these events may be enough. Sampling is a big issue in its own right, and we will therefore not delve further into this subject here, apart from noting that sampling can be an efficient way to cut resource requirements for game analytics.
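As a rough illustration of the sampling idea, the sketch below keeps about 1 percent of events by hashing an event identifier. The function name `should_log`, the `weapon_fired:<n>` identifier format, and the choice of hash-based (rather than random) sampling are assumptions made for this example, not something the article prescribes.

```python
import hashlib

def should_log(event_id: str, sample_rate: float = 0.01) -> bool:
    """Return True for roughly `sample_rate` of event ids, deterministically."""
    digest = hashlib.sha256(event_id.encode("utf-8")).digest()
    # Interpret the first 8 bytes of the hash as an integer in [0, 2**64).
    bucket = int.from_bytes(digest[:8], "big")
    # Keep the event if its bucket falls in the lowest `sample_rate` share.
    return bucket < sample_rate * 2**64

# Simulate 100,000 hypothetical "weapon_fired" events and count those kept.
logged = sum(should_log(f"weapon_fired:{i}") for i in range(100_000))
print(f"logged {logged} of 100000 events")
```

Hashing makes the decision repeatable: the same event identifier is always either kept or dropped, which can make sampled datasets easier to reproduce than ones built with a random number generator.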

Figure 2: The drivers of attribute selection for user behavior attributes. Given the broad scope of application of game analytics, a number of sources of requirements exist.

Preselecting Features

One important factor to consider during the feature selection process is the extent to which your attribute set selection can be driven by pre-planning, that is, by defining the game metrics and analysis results (and thereby the actionable insights) we wish to obtain from user telemetry and then selecting attributes accordingly.

Reducing complexity is necessary, but as you restrict the scope of the data-gathering process, you run the risk of missing important patterns in user behavior that cannot be detected using the preselected attributes. This problem is exacerbated when the game metrics and analyses are also predefined: for example, relying only on a set of Key Performance Indicators (such as DAU, MAU, ARPU, and LTV) can eliminate your chance of finding any patterns in the behavioral data that are not detectable via the predefined metrics and analyses. In general, striking a balance between the two situations is the best solution, depending on the available analytics resources. For example, focusing exclusively on KPIs will not tell you about in-game behavior, such as why 35 percent of the players drop out on level 8; for that we need to look at metrics related to design and performance.
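To make the contrast concrete, here is a minimal sketch that computes one KPI (DAU) and one design metric (drop-out on a level) from the same event log. The event tuple layout, the event names `level_start` and `level_complete`, and the sample rows are all hypothetical.

```python
from datetime import date

# Hypothetical telemetry rows: (day, user_id, event, level)
events = [
    (date(2013, 1, 1), "u1", "level_start", 8),
    (date(2013, 1, 1), "u1", "level_complete", 8),
    (date(2013, 1, 1), "u2", "level_start", 8),
    (date(2013, 1, 1), "u3", "level_start", 8),
    (date(2013, 1, 2), "u1", "level_start", 9),
]

def daily_active_users(rows):
    """KPI: number of distinct users seen on each day."""
    users_by_day = {}
    for day, user, _event, _level in rows:
        users_by_day.setdefault(day, set()).add(user)
    return {day: len(users) for day, users in users_by_day.items()}

def level_dropout_rate(rows, level):
    """Design metric: share of users who started `level` but never completed it."""
    started = {u for _d, u, e, lv in rows if e == "level_start" and lv == level}
    completed = {u for _d, u, e, lv in rows if e == "level_complete" and lv == level}
    if not started:
        return 0.0
    return len(started - completed) / len(started)

dau = daily_active_users(events)
dropout = level_dropout_rate(events, 8)
print(dau, round(dropout, 2))
```

DAU alone says nothing about where players leave; the second function only works because level-related events were tracked in the first place, which is the point of selecting attributes beyond the KPI set.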

It is worth noting that when it comes to user analytics, we are working with human behavior, which is notoriously unpredictable. This means that predicting user analytics requirements can be challenging. This emphasizes the need for the use of both explorative methods (we look at the user data to see what patterns they contain) and hypothesis-driven methods (we know what we want to measure and know the possible results, just not which one is correct).

Strategies Driven by Designers’ Knowledge

During gameplay, a user creates a continual loop of actions and responses that keep the game state changing. This means that at any given moment, there can be many features of user behavior that change value. A first step toward isolating which features to employ during the analytical process could be a comprehensive and detailed list of all possible interactions between the game and its players. Designers are extremely knowledgeable about all possible interactions between the game and players; it’s beneficial to harness that knowledge and involve designers from the beginning by asking them to compile such lists.

Secondly, considering the sheer number of variables involved even in the simplest game, it is necessary to reduce the complexity through a knowledge-driven factor reduction: Designers can easily identify isomorphic interactions. These are groups of similar interactions, behaviors, and state changes that are essentially similar even if formally slightly different. For example “restoring 5 HP with a bandage” or “healing 50 HP with a potion” are formally different but essentially similar behaviors. The isomorphic interactions are then grouped into larger domains. Lastly, it’s required to identify measures that capture all isomorphic interactions belonging to each domain. For example, for the domain “healing,” it’s not necessary to track the number of potions and bandages used, but just record every state change to the variable “health.”
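The “healing” example can be sketched as a single hook on the health variable instead of separate hooks per item. The `HealthTracker` class, its fields, and the optional `source` tag are illustrative assumptions, not part of any particular engine.

```python
from dataclasses import dataclass, field

@dataclass
class HealthTracker:
    hp: int
    max_hp: int
    log: list = field(default_factory=list)

    def change(self, delta: int, source: str) -> None:
        """Apply a health change and record one 'health' state-change event."""
        old = self.hp
        # Clamp the new value to the valid range [0, max_hp].
        self.hp = max(0, min(self.max_hp, self.hp + delta))
        if self.hp != old:
            self.log.append(
                {"variable": "health", "old": old, "new": self.hp, "source": source}
            )

player = HealthTracker(hp=40, max_hp=100)
player.change(+5, "bandage")     # isomorphic with the potion below
player.change(+50, "potion")
player.change(-30, "enemy_hit")
print(len(player.log), player.hp)
```

Bandages and potions go through the same code path, so the whole domain is covered by one measure. Keeping `source` as a tag is a design choice here; it can be dropped if only the state change itself matters.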

These domains have not been derived through objective factor reduction; there is a clear interpretive bias any time humans are asked to group elements in categories, even if designers have exhaustive expert knowledge. These larger domains can potentially contain all the possible behaviors that players can express in a game and at the same time help select which game variables should be monitored, and how.

Strategies Driven by Machine Learning

Machine learning is a field of study that gives computers the ability to learn without being explicitly programmed. More than an alternative to designer-driven strategies, automated feature selection is a complementary approach to reducing the complexity of the hundreds of state changes generated by player-game interactions. Traditionally, automated approaches are applied to existing datasets, relational databases, or data warehouses, meaning that the process of analyzing game systems, defining variables, and establishing measures for such variables falls outside the scope of automated strategies; humans have already defined which variables to track and how. Therefore, automated approaches identify only the most relevant and the most discriminating features out of all the variables monitored.

Automated feature selection relies on algorithms to search the attribute space and drop features that are highly correlated to others; algorithms can range from simple to complex. Methods include approaches such as clustering, classification, prediction, and sequence mining. These can be applied to find the most relevant features, since the presence of features that are not relevant for the definition of types affects the similarity measure, degrading the quality of the clusters found by the algorithm.
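One of the simplest forms of this idea is a greedy filter that drops any feature whose Pearson correlation with an already kept feature exceeds a threshold. The sketch below assumes that approach; the 0.95 threshold, the feature names, and the sample values are made up for illustration.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a constant feature has no defined correlation
    return cov / sqrt(vx * vy)

def drop_correlated(features, threshold=0.95):
    """Keep a feature only if it is not highly correlated with one already kept."""
    kept = []
    for name in features:
        if all(abs(pearson(features[name], features[k])) < threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical per-player feature columns.
features = {
    "shots_fired": [10, 20, 30, 40, 50],
    "ammo_used": [11, 19, 31, 41, 49],   # nearly duplicates shots_fired
    "deaths": [3, 1, 4, 1, 5],
}
kept = drop_correlated(features)
print(kept)
```

The result depends on the order in which features are visited, and a filter like this only removes redundancy; it does not judge relevance to a target, which is where the clustering and classification methods mentioned above come in.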

Diminishing Returns

In a situation with infinite resources, it is possible to track, store, and analyze every user-initiated action — all the server-side system information, every fraction of a move of an avatar, every purchase, every chat message, every button press, even every keystroke. Doing so will likely cause bandwidth issues, and will require substantial resources to add the message hooks into the game code, but in theory, this brute-force approach to game analytics is possible.

However, it leads to very large datasets, which in turn leads to huge resource requirements in order to transform and analyze them. For example, tracking weapon type, weapon modifications, range, damage, target, kills, player and target positions, bullet trajectory, and so on, will enable a very in-depth analysis of weapon use in an FPS. However, the key metrics to evaluate weapon balancing could just be range, damage done, and the frequency of use of each weapon. Adding a number of additional variables/features may not add any new relevant insights, or may even add noise or confusion to the analysis. Similarly, it may not be necessary to log behavioral telemetry from all players of a game, but only a percentage (this is of course not the case when it comes to sales records, because you will need to track all revenue).

In general, if selected correctly, the first variables/features that are tracked, collected, and analyzed will provide a lot of insight into user behavior. As more and more detailed aspects of user behavior are tracked, costs of storage, processing, and analysis increase, but the rate of added value from the information contained in the telemetry data diminishes.

What this means is that there is a cost-benefit relationship in game telemetry, which basically describes a simplified theory of diminishing returns: Increasing the amount of one source of data in an analysis process will yield a lower per-unit return.
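One informal way to write this down, assuming a value function $V(n)$ for the insight gained from $n$ tracked features and a cost function $C(n)$ for storing and analyzing them (both symbols are introduced here for illustration only):

```latex
% Diminishing returns: each additional feature adds no more value than the one before it.
\[
  V(n+1) - V(n) \;\le\; V(n) - V(n-1)
\]
% A simple stopping rule under these assumptions: keep adding features only while
% the marginal value exceeds the marginal cost.
\[
  V(n+1) - V(n) \;>\; C(n+1) - C(n)
\]
```

Under this reading, a negative marginal value corresponds to the case where extra data adds more noise than insight.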

A classic example in economic literature is adding fertilizer to a field. In an unbalanced system (underfertilized), adding fertilizer will increase the crop size, but after a certain point this increase diminishes, stops, and may even reduce the crop size. Adding fertilizer to an already-balanced system does not increase crop size, or may reduce it.

Fundamentally, game analytics follow a similar principle. An analysis can be optimized up to a specific point given a particular set of input features/variables, before additional (new) features are necessary. Additionally, increasing the amount of data into an analysis process may reduce the return, or in extreme cases lead to a situation of negative return due to noise and confusion added by the additional data. There can of course be exceptions — for example, the cause of a problematic behavioral pattern, which decreases retention in a social online game, can rest in a single small design flaw, which can be hard to identify if the specific behavioral variables related to the flaw are not tracked.

Goals of User-Oriented Analytics

User-oriented game analytics typically have a variety of purposes, but we can broadly divide them into the following:

Strategic analytics, which target the global view on how a game should evolve based on analysis of user behavior and the business model.

Tactical analytics, which aim to inform game design in the short term, for example through an A/B test of a new game feature.

Operational analytics, which target analysis and evaluation of the immediate, current situation in the game. For example, informing what changes you should make to a persistent game to match user behavior in real-time.

To an extent, operational and tactical analytics inform technical and infrastructure issues, whereas strategic analytics focuses on merging user telemetry data with other user data and/or market research.

When you’re plotting a strategy for approaching your user telemetry, the first factors you should concern yourself with are the existence of these three types of user-oriented game analytics, the kinds of input data they require, and what you need to do to ensure that all three are performed, and the resulting data reported to the relevant stakeholder.

The second factor to consider is to clarify how to satisfy both the needs of the company and the needs of the users. The fundamental goal of game design is to create games that provide a good user experience. However, the fundamental goal of running a game development company is to make money (at least from the perspective of the investors). Ensuring that the analytics process generates output supporting decision-making toward both of these goals is vital. Essentially, the underlying drivers for game analytics are twofold: 1) ensuring a quality user experience, in order to acquire and retain customers; 2) ensuring that the monetization cycle generates revenue — irrespective of the business model in question. User-oriented game analytics should inform both design and monetization at the same time. This approach is exemplified by companies that have been successful in the F2P marketplace who use analysis methods like A/B testing to evaluate whether a specific design change increases both user experience (retention is sometimes used as a proxy) and monetization.
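As a hedged sketch of how such an A/B comparison might be evaluated, the example below runs a two-proportion z-test on retention counts for a control group (A) and a variant (B). The choice of this particular test, the function name, and the numbers are assumptions for illustration; the article does not specify a statistical method.

```python
from math import erf, sqrt

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled proportion under the null hypothesis that both groups are equal.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; proportions are degenerate")
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)

# Hypothetical day-7 retention: 400 of 1000 players (A) vs 460 of 1000 (B).
z, p = two_proportion_z_test(400, 1000, 460, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

The same function could be applied to a monetization proxy such as the share of paying users, so that a design change is checked against both goals rather than retention alone.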

Summing Up

Up to this point, the discussion about feature selection has been at a somewhat abstract level, attempting to generate categories guiding selection, ensuring comprehensiveness in coverage rather than generating lists of concrete metrics (shots fired/minute per weapon, kill/death ratio, jump success ratio). This is because it is nigh-on impossible to develop generic guidelines for metrics across all types of games and usage situations. This is not just because games do not fall within neat design classes (games share a vast design space and do not cluster at specific areas of it), but also because the rate of innovation in design is high, which would rapidly render recommendations invalid. Therefore, the best advice we can give on user analytics is to develop models from the top down, so you can ensure comprehensive coverage in data collection, and from the core out, starting from the main mechanics driving the user experience (for helping designers) and monetization (for helping make sure designers get paid). Additional detail can be added as resources permit. Finally, try to keep your decisions and process fluid and adaptable; it’s necessary in an industry as competitive and exciting as ours. (source: gamasutra)
